It Started Simple. Then Got Complicated.

A mid-sized bank wanted to move faster, so they plugged a public LLM into their loan review process.

Paste data in, get summaries out: it worked instantly. Soon, it wasn’t just helping. It was shaping decisions.

A month later, audit asked: “How was this decision made?” The answer? Partial logs, unclear reasoning, no model trace.

Everything looked right. Nothing could be proven.

The Hidden Trade-Off: Speed vs Control

Public LLMs make it easy to move fast. But regulated industries don’t operate on speed alone.

They operate on accountability.

Here’s what quietly changes when a workflow depends on a public LLM:

| What Teams Expect | What Actually Happens |
| --- | --- |
| “We’ll save time” | Yes, but with reduced visibility |
| “It’s just summarization” | Sensitive data still leaves the system |
| “We’ll monitor it later” | Hard to reconstruct decisions after the fact |
| “The model is reliable” | Behavior changes over time |

The gap between expectation and reality isn’t obvious at the start. It shows up later, during audits, incidents, or failures.

The Data Nobody Meant to Share

In another case, a healthcare provider used a public LLM to summarize patient case notes.

The goal was simple: reduce documentation time for doctors.

But here’s what actually happened:

- Patient records were sent to an external API
- Data anonymization wasn’t consistent
- Logs didn’t clearly show what was transmitted

No breach occurred. No data leak was reported.

But from a compliance standpoint, that didn’t matter.

The data had already crossed the boundary.

Why This Is a Problem

Regulated industries are built around one core idea:

Sensitive data should remain controlled, traceable, and auditable at all times.

Public LLMs break this assumption by design.

| Action | Risk Introduced |
| --- | --- |
| Sending data to external models | Loss of ownership |
| Processing sensitive inputs | Regulatory exposure |
| Relying on third-party infra | Unknown storage & handling |

Even if nothing goes wrong, the possibility is enough to trigger compliance concerns.
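To make "controlled and traceable" concrete, here is a minimal sketch of an outbound gate that redacts obvious identifiers and records what would be transmitted before anything leaves the boundary. Everything in it is an assumption for illustration: the `PATTERNS` table, the `redact` and `prepare_outbound` helpers, and the sample note are hypothetical, and three regular expressions are nowhere near a vetted PHI/PII detector.

```python
import hashlib
import re
from datetime import datetime, timezone

# Hypothetical patterns for illustration only; real PHI/PII detection needs far more.
PATTERNS = {
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "MRN": re.compile(r"\bMRN[:\s]*\d{6,10}\b", re.IGNORECASE),
}


def redact(text):
    """Replace each pattern match with a typed placeholder; return text and counts."""
    counts = {}
    for label, pattern in PATTERNS.items():
        text, n = pattern.subn(f"[{label}]", text)
        counts[label] = n
    return text, counts


def prepare_outbound(note, transmission_log):
    """Redact a note and record exactly what would cross the boundary."""
    redacted, counts = redact(note)
    transmission_log.append({
        "timestamp": datetime.now(timezone.utc).isoformat(),
        # Hash of the redacted payload, so the log proves what was sent
        # without storing the sensitive text a second time.
        "sha256": hashlib.sha256(redacted.encode("utf-8")).hexdigest(),
        "redactions": counts,
        "chars_sent": len(redacted),
    })
    return redacted


transmission_log = []
note = "Patient MRN: 00123456, contact jane.doe@example.com, SSN 123-45-6789."
print(prepare_outbound(note, transmission_log))
# Patient [MRN], contact [EMAIL], SSN [SSN].
```

The point of the sketch is the ordering: redaction and logging happen in one place, before the external call, so the question "what was transmitted?" has a recorded answer.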

The Decision That Couldn’t Be Explained

An insurance company used an LLM to assist in claim assessments.

One claim was flagged as “high risk” and rejected.

The customer appealed.

Now the company had to answer:

- Why was the claim rejected?
- What factors influenced the decision?
- Can the process be audited?

The LLM response?

A well-written paragraph. No traceable logic.

The Real Issue: Black Box Decisions

Public LLMs are not built for explainability.

| Requirement | Reality with Public LLMs |
| --- | --- |
| Clear reasoning path | Not available |
| Reproducible outputs | Not guaranteed |
| Audit-ready logs | Limited |

In regulated environments, a correct answer is not enough.

It has to be provable.
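One way to picture "provable" is a tamper-evident audit log, where each entry carries a hash of the entry before it. The sketch below is an illustration under stated assumptions, not a description of any particular product: the `AuditLog` class, the event fields, and the model and reviewer identifiers are all hypothetical.

```python
import hashlib
import json


def _digest(record):
    # Canonical JSON so the same record always produces the same hash.
    payload = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class AuditLog:
    """Append-only log where each entry is chained to the previous one."""

    GENESIS = "0" * 64

    def __init__(self):
        self.entries = []

    def append(self, event):
        prev_hash = self.entries[-1]["hash"] if self.entries else self.GENESIS
        body = {"index": len(self.entries), "prev_hash": prev_hash, "event": event}
        self.entries.append({**body, "hash": _digest(body)})

    def verify(self):
        """Recompute every hash; any edited or reordered entry breaks the chain."""
        prev_hash = self.GENESIS
        for i, entry in enumerate(self.entries):
            body = {
                "index": entry["index"],
                "prev_hash": entry["prev_hash"],
                "event": entry["event"],
            }
            if (entry["index"] != i
                    or entry["prev_hash"] != prev_hash
                    or entry["hash"] != _digest(body)):
                return False
            prev_hash = entry["hash"]
        return True


# Hypothetical claim assessment, recorded step by step.
log = AuditLog()
log.append({"step": "model_call", "model_version": "claims-model-v1",
            "input_sha256": hashlib.sha256(b"claim text").hexdigest()})
log.append({"step": "decision", "outcome": "rejected",
            "factors": ["prior claims", "late filing"], "reviewer": "analyst-17"})
print(log.verify())  # True

log.entries[1]["event"]["outcome"] = "approved"  # simulate after-the-fact editing
print(log.verify())  # False
```

A log like this does not explain the model’s reasoning, but it does let an auditor confirm which inputs, model version, and recorded factors were attached to a decision, and that none of them were altered later.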

The Model That Changed Overnight

A legal firm built a workflow to analyze contracts using a public LLM.

It worked reliably for weeks.

Then one day, outputs started changing.

- Clause interpretations differed
- Risk flags shifted
- Tone of responses changed

Nothing in their system had changed.

The model had.

The Problem of Silent Updates

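Public LLM providers update their hosted models on their own schedule, and a workflow that calls an unpinned model can start receiving different behavior without any change on the caller’s side.

That’s good for general performance, but risky for regulated workflows.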

| Change | Impact |
| --- | --- |
| Model update | Different outputs for same input |
| No version control | Hard to validate consistency |
| No rollback | No way to revert behavior |

For industries that depend on consistency, this is a major issue.
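A common defense is a small regression suite: a fixed set of inputs with known-good structured outputs, replayed on a schedule against the model the workflow depends on. The sketch below assumes the model call returns a dictionary with `model_version` and `risk_flag` fields. That shape, the `check_drift` helper, the version string, and the two stub models are hypothetical stand-ins, not any provider’s API.

```python
PINNED_VERSION = "contract-model-2024-01"  # hypothetical version identifier

# Golden cases: inputs whose expected risk flag was reviewed and signed off.
GOLDEN_SET = [
    {"id": "c1", "clause": "Supplier accepts unlimited liability.", "expected_flag": "high"},
    {"id": "c2", "clause": "Either party may terminate with 30 days notice.", "expected_flag": "low"},
]


def check_drift(call_model, golden_set, pinned_version):
    """Replay the golden set and report version or output mismatches."""
    findings = []
    for case in golden_set:
        result = call_model(case["clause"])
        if result["model_version"] != pinned_version:
            findings.append((case["id"], "version", result["model_version"]))
        elif result["risk_flag"] != case["expected_flag"]:
            findings.append((case["id"], "output", result["risk_flag"]))
    return findings


# Stub standing in for the model as it behaved when the workflow was validated.
def stable_model(clause):
    flag = "high" if "unlimited liability" in clause.lower() else "low"
    return {"model_version": PINNED_VERSION, "risk_flag": flag}


# Stub standing in for a model that changed behind the same version label.
def silently_updated_model(clause):
    return {"model_version": PINNED_VERSION, "risk_flag": "medium"}


print(check_drift(stable_model, GOLDEN_SET, PINNED_VERSION))
# []
print(check_drift(silently_updated_model, GOLDEN_SET, PINNED_VERSION))
# [('c1', 'output', 'medium'), ('c2', 'output', 'medium')]
```

The check compares a structured field rather than free text, because free-text responses can vary from run to run even when the model itself has not changed.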

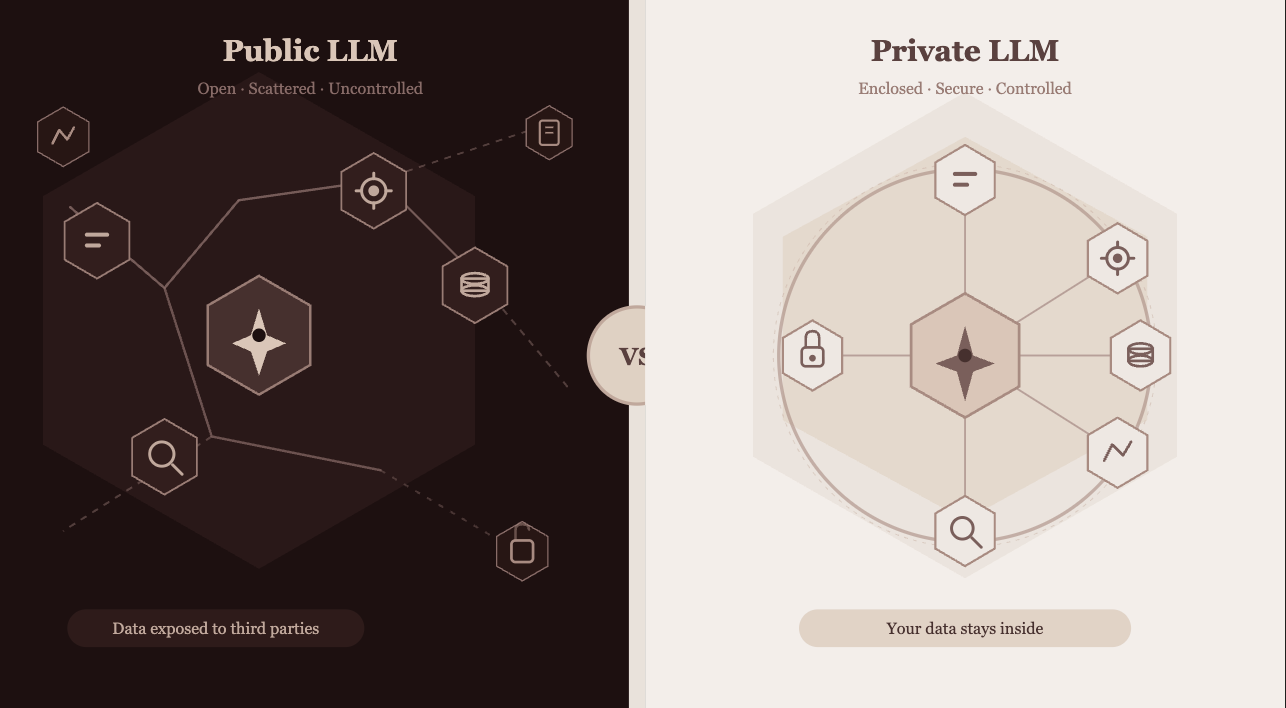

Public vs Controlled LLM Usage

| Factor | Public LLMs | Controlled Environments |
| --- | --- | --- |
| Data handling | External | Internal / restricted |
| Audit logs | Partial | Complete |
| Model stability | Variable | Versioned |
| Compliance | Generic | Configurable |
| Security | Shared risk | Defined controls |

A More Reliable Setup

Enterprises are moving toward controlled AI systems where:

- Models run in private or secure environments
- Data access is restricted and monitored
- Outputs are logged with full context
- Policies define what the system can and cannot do

This setup doesn’t slow things down. It makes them usable in production.
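As a rough illustration of policies that define what the system can and cannot do, the sketch below shows a deny-by-default check that runs before any action is carried out. The `Policy` class, the `authorize` function, and the action and field names are hypothetical. A real policy layer would be considerably richer.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Policy:
    allowed_actions: frozenset  # anything not listed here is denied
    blocked_fields: frozenset   # payload keys that must never be passed along


def authorize(policy, action, payload):
    """Return (allowed, reason) for a requested action and its payload."""
    if action not in policy.allowed_actions:
        return False, f"action '{action}' is not allowed"
    blocked = sorted(set(payload) & policy.blocked_fields)
    if blocked:
        return False, f"payload contains blocked fields: {blocked}"
    return True, "ok"


policy = Policy(
    allowed_actions=frozenset({"summarize", "classify"}),
    blocked_fields=frozenset({"ssn", "account_number"}),
)

print(authorize(policy, "summarize", {"notes": "Applicant has stable income."}))
# (True, 'ok')
print(authorize(policy, "approve_loan", {"notes": "..."}))
# (False, "action 'approve_loan' is not allowed")
print(authorize(policy, "summarize", {"notes": "...", "ssn": "123-45-6789"}))
# (False, "payload contains blocked fields: ['ssn']")
```

Because the check returns a reason along with the verdict, each refusal can be written to the same log as the decisions it guards.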

Where Lyzr Agent Studio Fits

This is exactly the gap Lyzr Agent Studio addresses.

Instead of plugging into public LLMs directly, it provides a structured way to run AI agents within controlled boundaries.

- Data stays within defined environments
- Every action is logged and traceable
- Guardrails enforce compliance rules
- Multiple models can be used without lock-in

Back to the Bank

Go back to the bank from the opening example. With a controlled setup, the same workflow would look different:

- Loan data stays within a secure environment
- Each step in the decision process is logged
- Outputs are tied to a specific model version
- Explanations are generated alongside decisions

Now, when audit asks:

“How was this decision made?”

There’s a clear answer.

Final Thought

Public LLMs make it easy to start.

Regulated industries don’t just need to start. They need to stand up to scrutiny.

The difference isn’t in the model.

It’s in how the system is designed around it.

Because in these environments, the real question isn’t:

“Does it work?”

It’s:

“Can it be trusted, explained, and audited?”
