Your ChatGPT Enterprise alternative: LyzrGPT

With ChatGPT Enterprise, you’re paying per seat, locked into one model, and your data lives on someone else’s servers. LyzrGPT gives you everything — privately deployed, model-agnostic, and at a fraction of the cost.

Nvidia Deployments and record-breaking Groq inference

Adoption is easy. Scaling impact isn’t.

You pay more as teams use more — without predictable ROI.

Every team builds their own way. No standardization.

Work still depends on people, not systems.

You use AI — but don’t control how it behaves across workflows.

Not on a roadmap. Not a pilot. These are live the day you switch over.

1,000+ agents. Not prototypes — production.

HR onboarding, KYC processing, sales outreach, support triage — pre-built, vetted, enterprise-grade. Your teams stop waiting for IT to build something and start using AI that actually does the work.

Your data never crosses the fence.

Deploy LyzrGPT inside your own VPC or on-prem. Every prompt, every output, every document stays inside your infrastructure. Full audit trails for every interaction. No negotiation required with your CISO.

Best model for each job. Every time.

Claude for long documents. Gemini for structured data. Groq for speed. GPT-4o for general tasks. Switch in one interface. No extra subscriptions. No wrangling APIs. Your team gets the right tool, automatically.
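One way to picture this routing is a simple lookup from task type to model. The sketch below is illustrative only: the task labels, the `ROUTES` table, and the `pick_model` function are hypothetical names chosen for this example, not LyzrGPT's actual interface, and the model labels are plain strings rather than real API identifiers.

```python
# Hypothetical routing table mirroring the pairings named above.
# Keys and values are illustrative labels, not real API model identifiers.
ROUTES = {
    "long_document": "claude",
    "structured_data": "gemini",
    "low_latency": "groq",
    "general": "gpt-4o",
}


def pick_model(task_type: str) -> str:
    """Return the model label for a task type, falling back to the general model."""
    return ROUTES.get(task_type, ROUTES["general"])


if __name__ == "__main__":
    for task in ("long_document", "structured_data", "low_latency", "something_else"):
        print(task, "->", pick_model(task))
```

A real router would likely weigh document length, latency targets, and cost rather than a single label, but the fallback to a general-purpose model is the part worth keeping.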

Your context comes with you.

Memory Pocket imports your conversation history, context, and workflows from ChatGPT, Claude, or Gemini directly into LyzrGPT. Your team doesn’t start from scratch. Productivity continues from hour one.

Audit-ready by design, not by request.

PII redaction at the model layer. Complete interaction logs. Guardrails that meet regulatory standards in banking, insurance, and healthcare. Not a compliance add-on — the actual foundation.

A model-agnostic workspace – securely in your VPC.

Chat with LLMs and agents, deployable in your VPC with easy context migration.

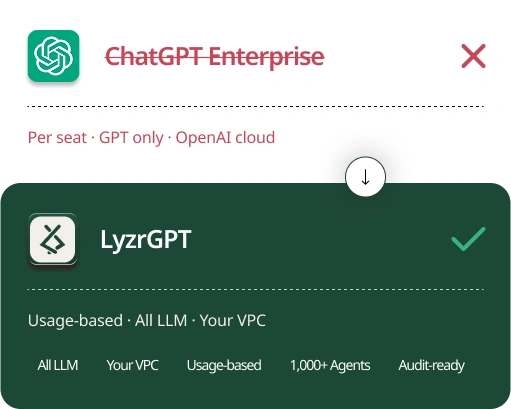

Why enterprises switch from ChatGPT to LyzrGPT

| Capability | LyzrGPT (Enterprise AI workspace) | ChatGPT Enterprise (OpenAI) |
|---|---|---|
| Model-agnostic architecture | Switch OpenAI / Anthropic / Google / Groq in one UI | GPT series only |
| Responsible AI guardrails | Full logs + audit for banks | Data isolation only |
| Context upload & migration | Import from any AI with Memory Pocket | No portability |
| Chat with AI agents | Multi-agent: Research / Create / Analyse | Custom GPTs / API only |
| Private deployment (VPC / on-prem) | VPC / on-prem deploy | SaaS only |
| Pricing | Consumption-based | Seat-based |
| Agent Studio access | Yes, included | No |

$155,000 a year is a lot to leave on the table.

Run the approximate numbers for a 500-person enterprise. The difference is not marginal.

These aren’t edge cases. These are the conversations that happen in every enterprise AI review.

“We’re paying for 800 seats. Honestly, maybe 150 people use it regularly. The rest of the spend is just dead weight. We needed billing that reflects reality, not our headcount.”

3,000+ employees

“Our regulators don’t care what OpenAI’s data policy says. They need proof that our data doesn’t leave our infrastructure. That’s the entire conversation. ChatGPT Enterprise can’t answer that.”

“I have tasks where Claude is objectively better. I have tasks where Gemini is faster. I’m not anti-GPT — I just don’t want to be forced to use it for everything. That’s not AI strategy. That’s a vendor limitation.”

Before you book the demo, honest answers.

Ready to transform your customer experience?

Schedule a personalized consultation with our AI architects to map out your enterprise automation strategy.