You wouldn’t send your company’s financial records to a stranger’s computer.

Yet that’s exactly what happens when you feed sensitive data into public AI platforms.

Every query, every document, every customer interaction – all flowing through servers you don’t control, processed by models you didn’t build, stored in places you can’t audit.

For enterprises handling regulated data, that’s not just uncomfortable.

It’s a compliance nightmare.

Private AI agents solve this by running entirely within your infrastructure, processing data without it ever leaving your security perimeter. The model is increasingly adopted in enterprise data science environments where sensitive datasets must remain protected. This is where a reliable AI agent development service becomes useful, helping businesses deploy secure, customized agents tailored to internal systems and compliance needs.

They automate workflows, make decisions, and handle complex tasks – all while keeping your most sensitive information exactly where it belongs.

Behind your firewall.

This isn’t about paranoia.

It’s about control, compliance, and competitive advantage in industries where data breaches cost millions and regulatory violations end careers.

What exactly are private AI agents?

Think of private AI agents as autonomous workers who live entirely on your property.

They’re AI systems designed to perform specific tasks – analyzing documents, qualifying leads, processing claims, answering customer questions – but they run exclusively within your organization’s computing environment.

Unlike public AI services, where your data travels to OpenAI’s servers or Google’s cloud, private agentic AI solutions operate on infrastructure you control.

They might run on your own servers, your private cloud instances, or dedicated hardware sitting in your data center.

The key difference? Data sovereignty.

When a private AI agent for enterprises processes a customer’s medical record, that information never touches the public internet.

When it analyzes proprietary research, nobody can subpoena that data from a third-party provider, because no third party ever holds it.

When it handles financial transactions, you maintain complete audit trails without relying on external service terms that could change overnight.

This matters more than most technology leaders initially realize.

According to IBM’s 2023 Cost of a Data Breach Report, the average cost of a data breach reached $4.45 million, with healthcare breaches averaging over $10 million.

Every piece of sensitive data sent to external AI services represents potential exposure.

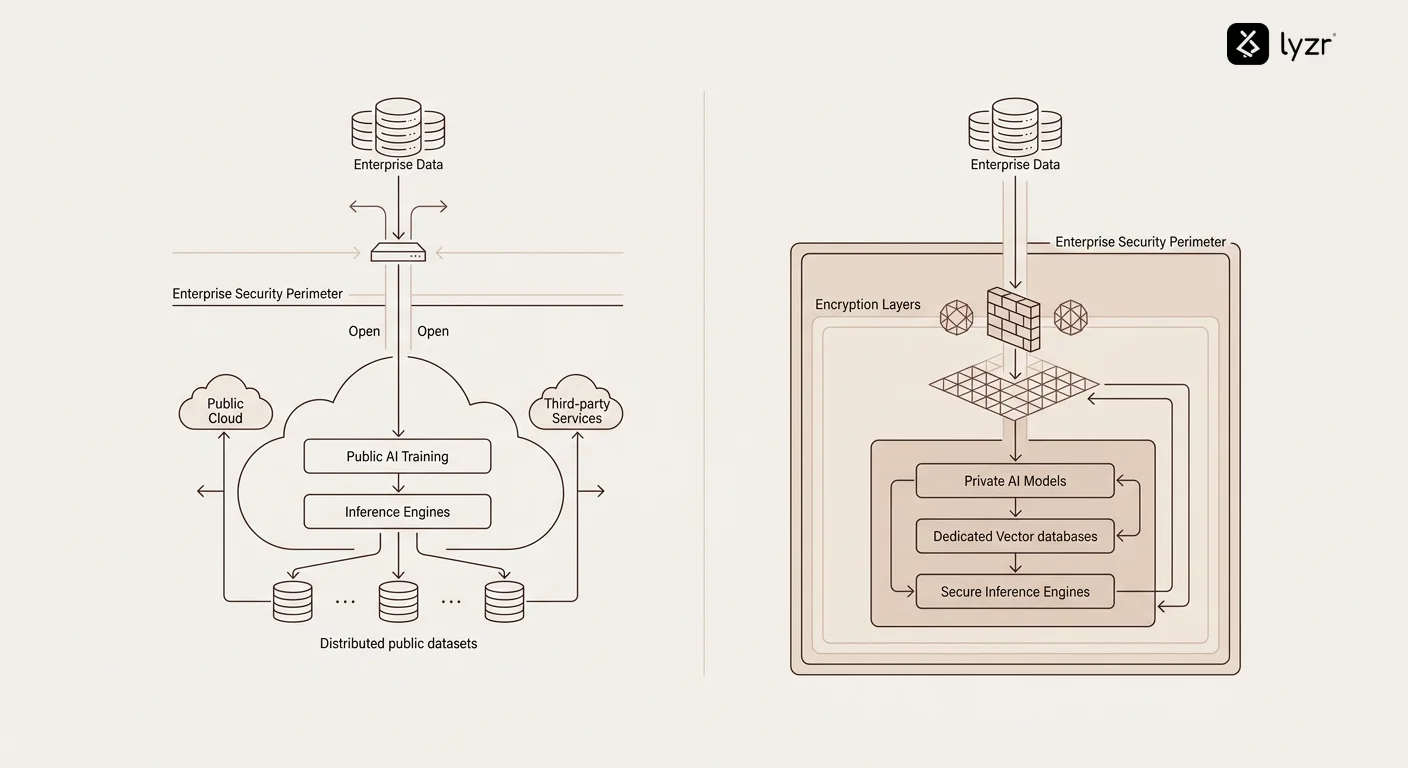

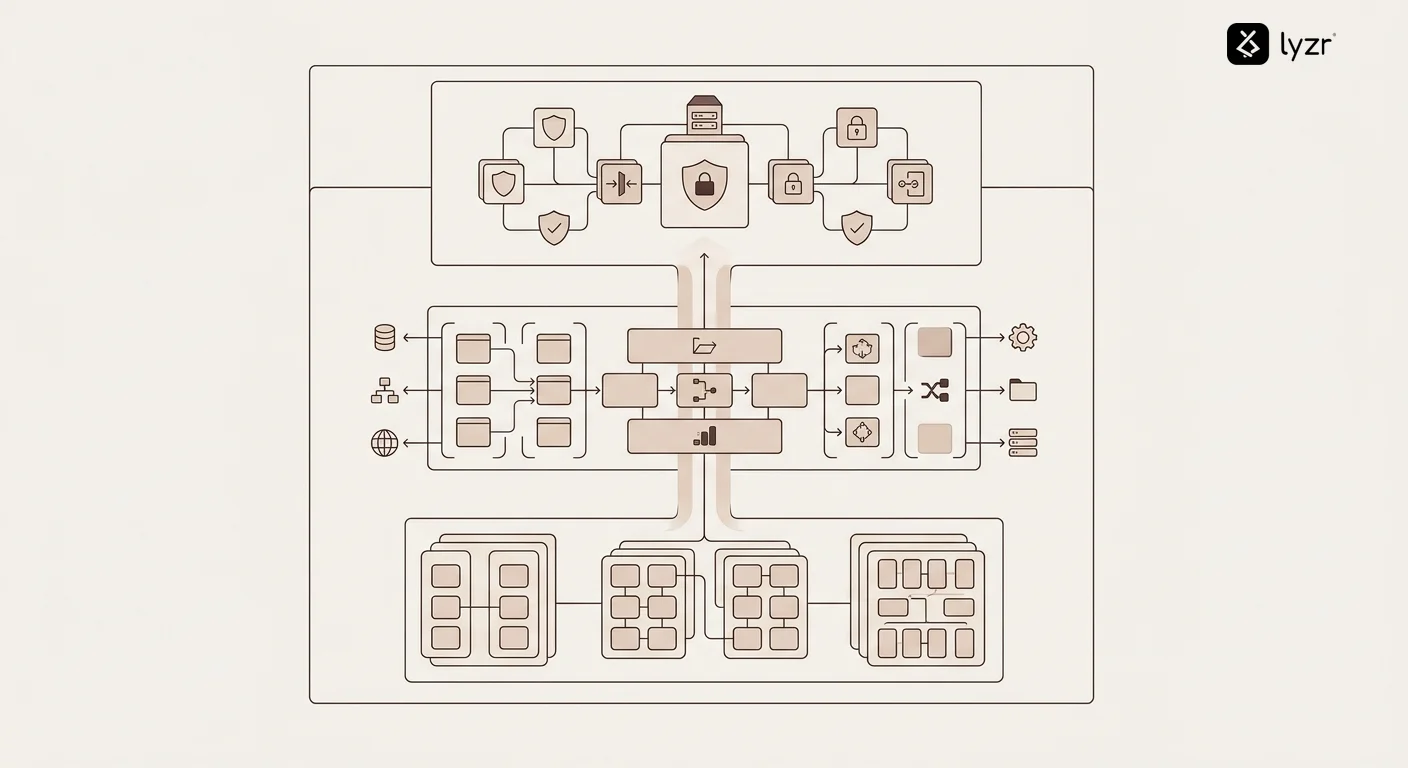

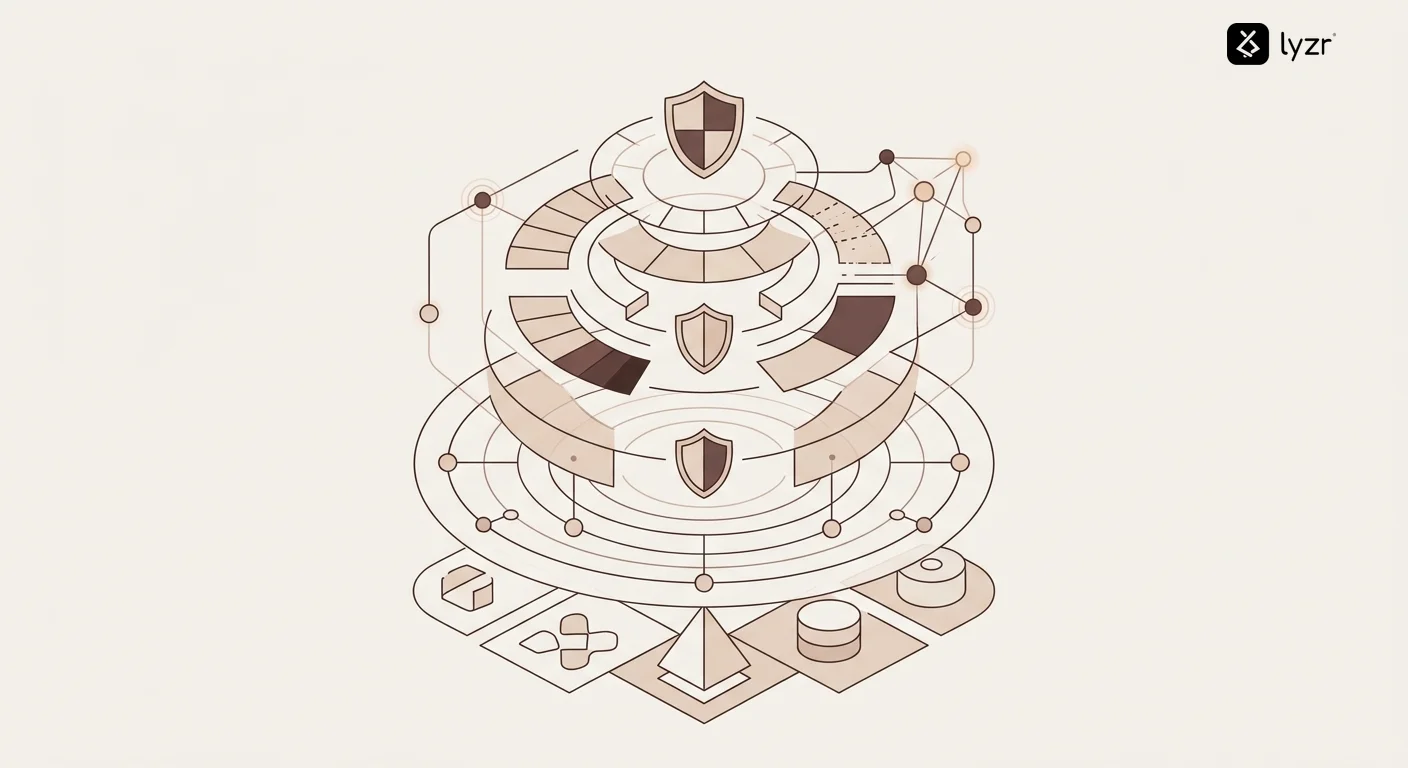

The architecture that makes them different

Private AI agents typically consist of three core components.

First, a language model or specialized AI that runs locally – this could be an open-source model like Llama, a commercially licensed model deployed on-premises, or a custom model trained on your own data.

Second, an execution environment that connects the AI to your internal systems – databases, APIs, business applications – without exposing those connections to the outside world.

Third, a security layer that enforces access controls, logs all activities, and ensures compliance with your governance policies.

Organizations deploying AI agents for private banking use this architecture to automate wealth management recommendations without ever sending client portfolios to external servers.
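To make the three components concrete, here is a minimal sketch of how they might fit together. Everything in it is hypothetical: the class names, the role and tool names, and the keyword router standing in for a locally hosted model. A real deployment would swap in an actual model server and real connectors.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class SecurityLayer:
    """Component 3: access control plus an activity log."""
    allowed_tools: dict[str, set[str]]          # role -> tools that role may use
    audit_log: list[dict] = field(default_factory=list)

    def authorize(self, role: str, tool: str) -> bool:
        allowed = tool in self.allowed_tools.get(role, set())
        # Every attempt is recorded, whether or not it is allowed.
        self.audit_log.append({"role": role, "tool": tool, "allowed": allowed})
        return allowed


@dataclass
class PrivateAgent:
    model: Callable[[str], str]                 # component 1: local model, request -> tool name
    tools: dict[str, Callable[[str], str]]      # component 2: connectors to internal systems
    security: SecurityLayer

    def run(self, role: str, request: str) -> str:
        tool_name = self.model(request)
        if not self.security.authorize(role, tool_name) or tool_name not in self.tools:
            return "denied"
        return self.tools[tool_name](request)


# Stand-ins for illustration only: a keyword router instead of a real model,
# and lambdas instead of real database connectors.
def stub_model(request: str) -> str:
    return "portfolio_lookup" if "portfolio" in request.lower() else "faq_search"


agent = PrivateAgent(
    model=stub_model,
    tools={
        "portfolio_lookup": lambda req: "portfolio summary (internal)",
        "faq_search": lambda req: "faq answer",
    },
    security=SecurityLayer(
        allowed_tools={
            "advisor": {"portfolio_lookup", "faq_search"},
            "support": {"faq_search"},
        }
    ),
)

print(agent.run("advisor", "Show the portfolio for client 7"))  # portfolio summary (internal)
print(agent.run("support", "Show the portfolio for client 7"))  # denied
```

The point of the shape is that the model never calls a connector directly. Every tool call passes through the security layer first, so the audit log covers denied attempts as well as successful ones.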

Why companies choose private over public AI

The decision to deploy private AI agents usually comes down to one of three triggers.

Regulatory requirements force the issue.

HIPAA, GDPR, SOC 2, PCI-DSS – these aren’t suggestions, they’re legal obligations with serious consequences for violations.

Healthcare organizations can’t casually send patient data to ChatGPT. Even routine tasks like documentation require careful consideration: whether AI can write doctor’s notes safely is a question clinics must answer before adopting any AI tool.

Banks can’t process loan applications through public APIs without risking violations of financial privacy laws.

Government contractors can’t analyze classified information on commercial cloud platforms.

For these organizations, private AI agents aren’t a preference – they’re the only legally viable option.

Competitive advantage creates urgency.

Your proprietary data represents years of accumulated business intelligence.

When you feed that data into public AI platforms, you’re potentially training models that your competitors could access.

Private AI agents let you build competitive moats by training models exclusively on your unique datasets, creating capabilities that rivals simply can’t replicate.

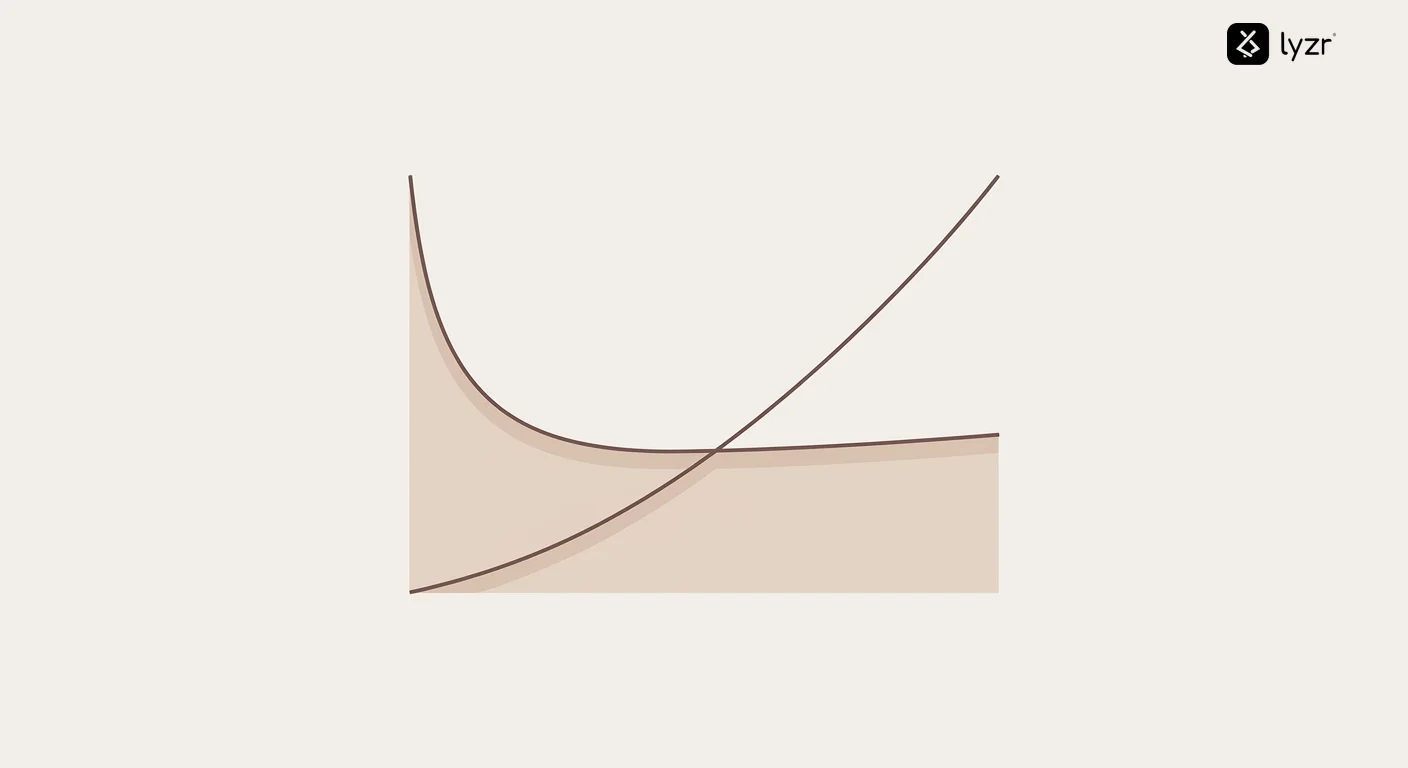

Cost and control drive long-term strategy.

Public AI services charge per API call, per token, per transaction.

Those costs scale linearly – more usage means proportionally higher bills, forever.

Private AI agents require upfront infrastructure investment but offer predictable ongoing costs.

More importantly, you control the roadmap.

When OpenAI changes pricing or deprecates a model, you adapt on their schedule.

With private agents, you decide when and how to upgrade.

| Factor | Public AI Services | Private AI Agents |
|---|---|---|
| Data Location | Third-party servers | Your infrastructure |
| Compliance Control | Limited, vendor-dependent | Full control, auditable |
| Cost Structure | Usage-based, variable | Infrastructure-based, predictable |
| Customization | Limited to API parameters | Complete model and workflow control |
| Vendor Lock-in | High dependency | Portable, controllable |
| Latency | Network-dependent | Local, optimizable |

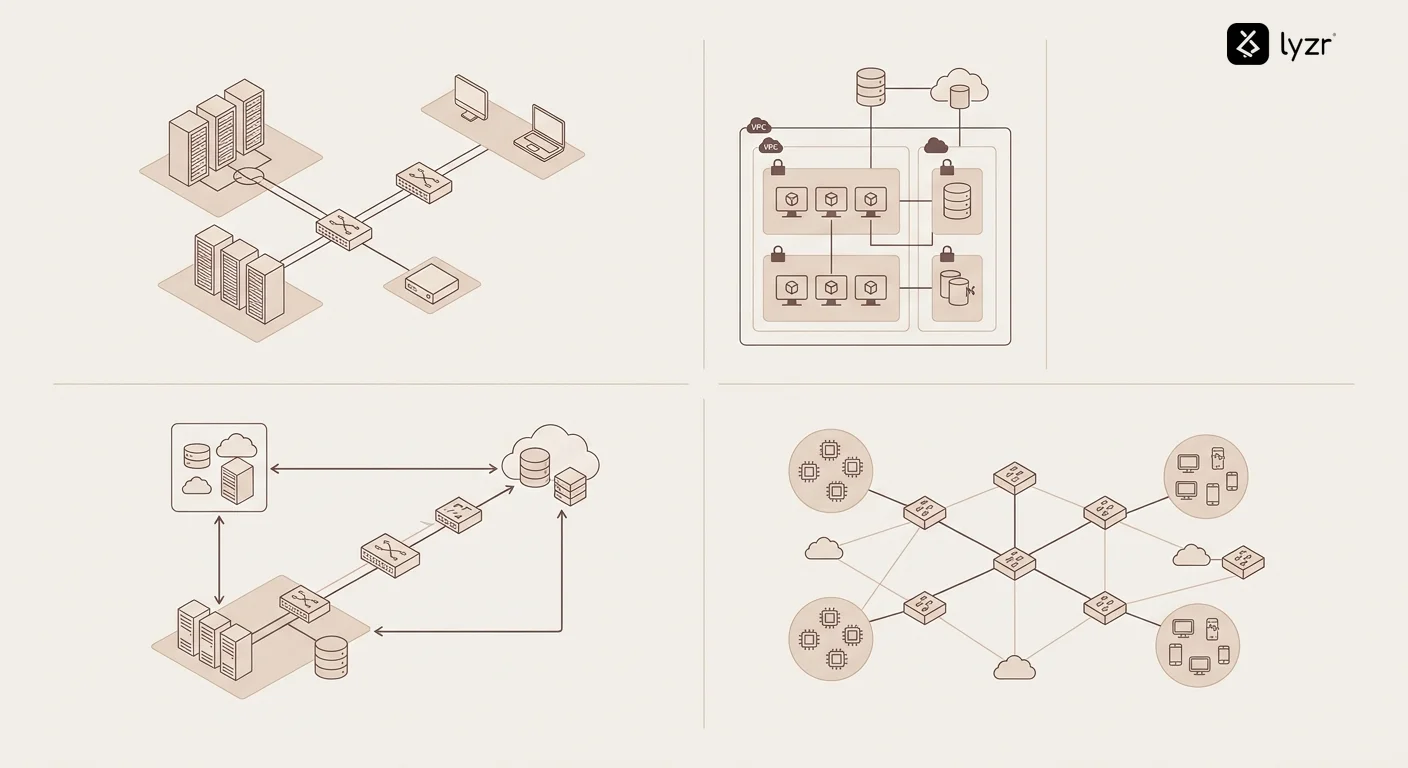

Where do private AI agents run?

The deployment environment determines both capabilities and constraints.

On-premises servers offer maximum control.

You own the hardware, manage the network, and maintain complete physical security.

This approach works well for organizations with existing data center infrastructure and strict air-gap requirements.

Defense contractors, financial institutions with legacy systems, and healthcare networks often choose this path.

The tradeoff? You handle maintenance, scaling, redundancy, and disaster recovery yourself.

Private cloud environments split the difference.

Virtual Private Clouds on AWS, Azure, or Google Cloud give you dedicated computing resources that don’t share infrastructure with other customers.

You get cloud flexibility – easy scaling, managed services, geographic redundancy – while maintaining network isolation.

Teams deploying autonomous agents on AWS typically use VPCs with private subnets, ensuring AI workloads never touch the public internet.

Hybrid architectures combine both approaches.

Extremely sensitive data stays on-premises while less critical workloads run in private cloud instances.

A bank might process loan approvals with AI agents on local servers but handle customer service inquiries in a private cloud environment.

This provides flexibility without compromising on the most critical security requirements.

What about edge deployment?

Some organizations deploy private AI agents directly on edge devices.

Medical imaging equipment might run diagnostic agents locally rather than sending scans to central servers.

Manufacturing facilities deploy quality control agents on factory floor hardware to avoid network latency.

Retail chains run inventory optimization agents in individual stores.

Edge deployment maximizes privacy – data never leaves the device – and eliminates network dependencies, but requires more powerful local hardware and distributed management capabilities.

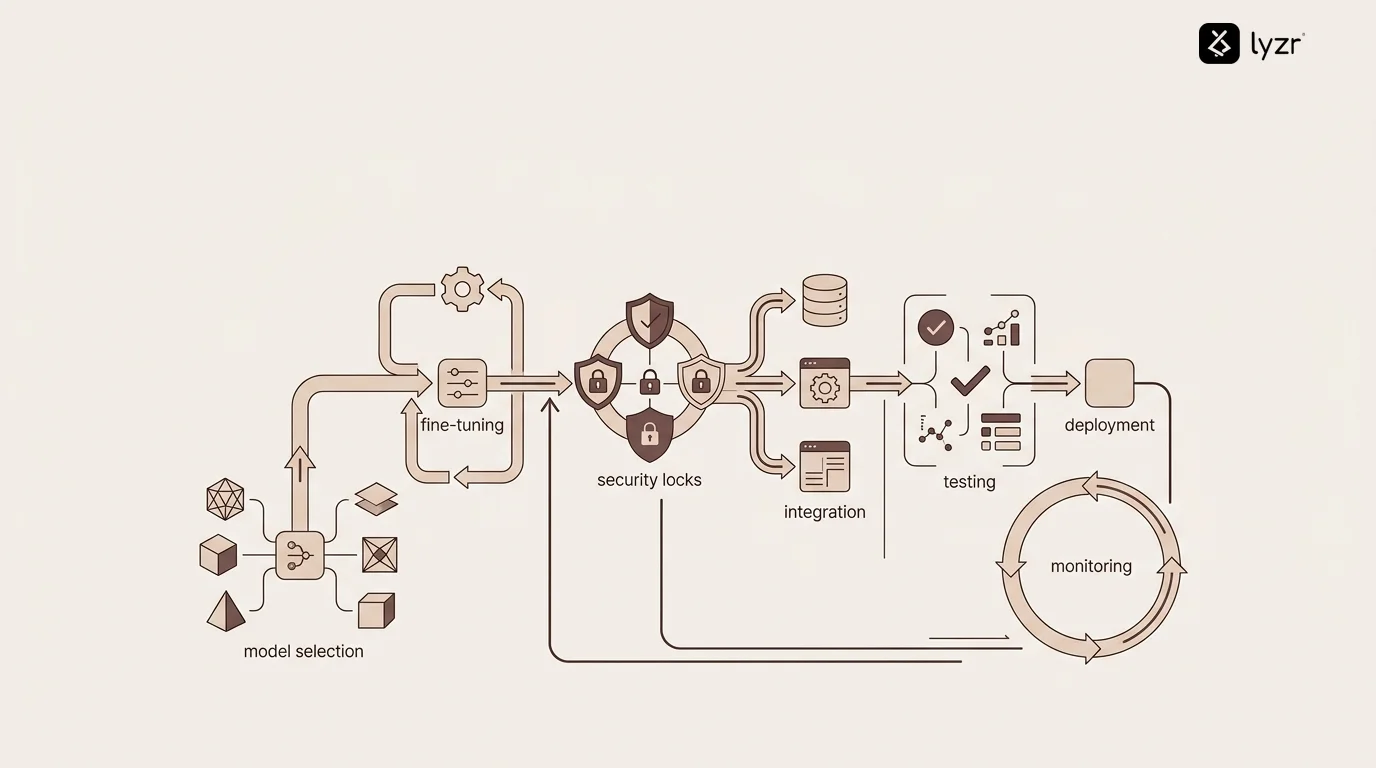

How do you actually build one?

Building private AI agents requires thinking through several layers of technical and operational decisions.

Start with the model selection.

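Open-source models like Llama 2, Mistral, or Falcon give you complete control over deployment and few licensing restrictions for internal use. Terms do vary by model, though (Llama 2, for example, ships under Meta’s own community license rather than a standard open-source license), so review each license before you commit.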

You can fine-tune them on your specific data, modify their behavior, and deploy them however you want.

Commercially licensed models from Anthropic, Cohere, or others offer potentially better performance but come with usage restrictions and costs.

Custom-trained models built from scratch give you maximum optimization but require significant ML expertise and computational resources.

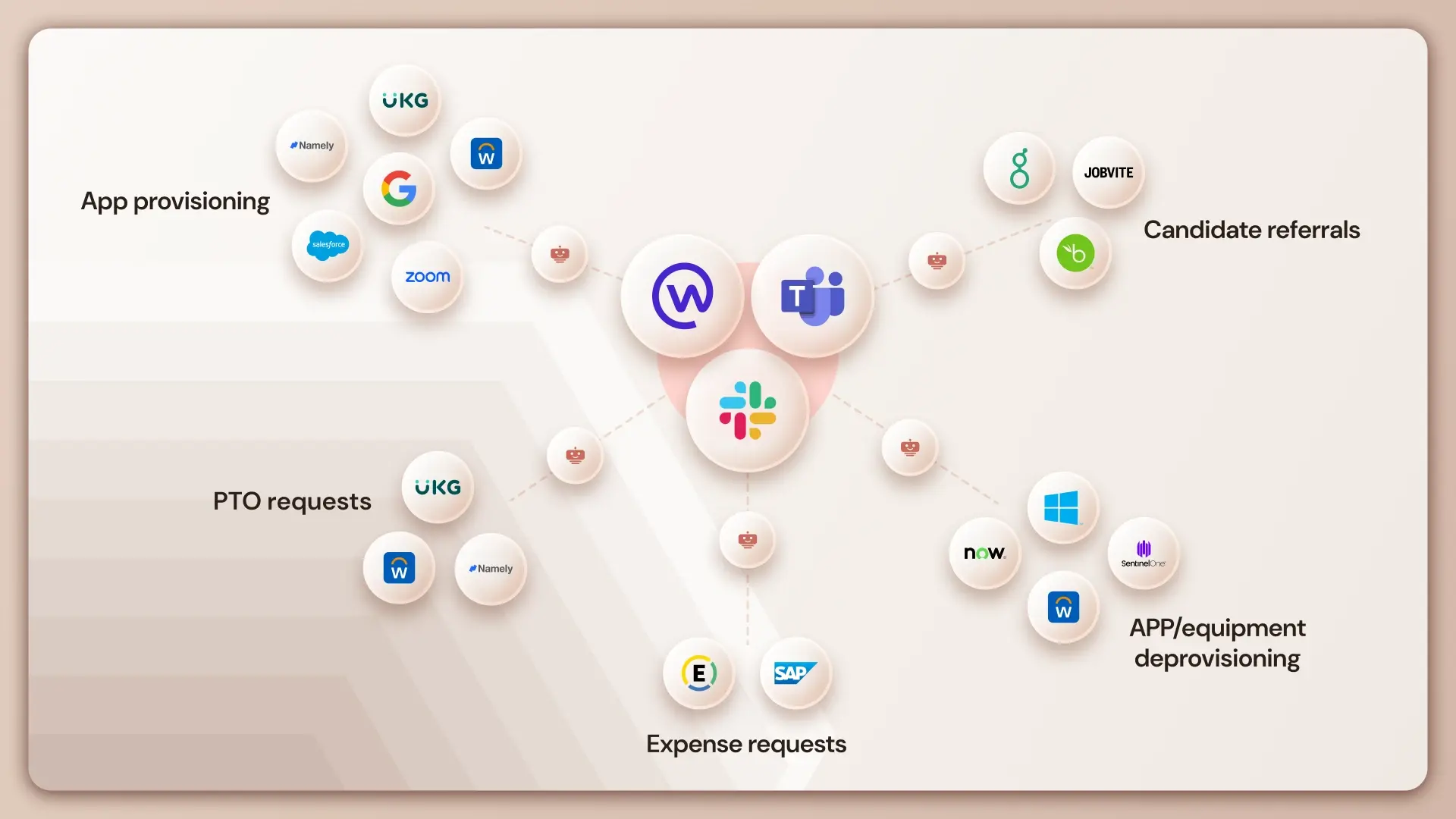

The integration layer connects your AI to actual business processes.

This means building secure APIs to your databases, creating connectors to enterprise applications like Salesforce or ServiceNow, implementing authentication systems that respect your existing identity management, and developing workflow orchestration that handles multi-step processes.

An AI agent for lead qualification needs to access your CRM, scoring rules, communication history, and sales playbooks – all while maintaining proper access controls.

The security and compliance framework determines whether your deployment actually meets regulatory requirements.

This includes encryption at rest and in transit, role-based access controls, comprehensive audit logging, data lineage tracking, model versioning and governance, and automated compliance reporting.

Organizations handling KYC verification with AI agents need particularly robust audit trails to demonstrate regulatory compliance during examinations.
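One way to make an audit trail harder to alter quietly is to chain entries together by hash, so that editing any earlier record invalidates everything after it. The sketch below uses only Python’s standard library. The function names and the event fields are illustrative assumptions, and hash chaining is just one possible technique, not a requirement of any particular regulation.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, event: dict) -> str:
    # sort_keys makes the serialized form stable for the same event content
    payload = json.dumps(event, sort_keys=True)
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_entry(log: list, event: dict) -> None:
    """Append an event, linking it to the hash of the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"event": event, "prev": prev_hash, "hash": _digest(prev_hash, event)})


def verify(log: list) -> bool:
    """Recompute the chain and report whether every link still matches."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash or entry["hash"] != _digest(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True


audit_log = []
append_entry(audit_log, {"agent": "kyc-agent", "action": "read", "record": "customer-101"})
append_entry(audit_log, {"agent": "kyc-agent", "action": "decision", "result": "escalate"})
print(verify(audit_log))  # True
```

A chain like this only shows that records were not edited after the fact. You would still need access controls on the log itself and a copy stored somewhere the agent cannot write to.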

Most enterprises don’t build all this from scratch.

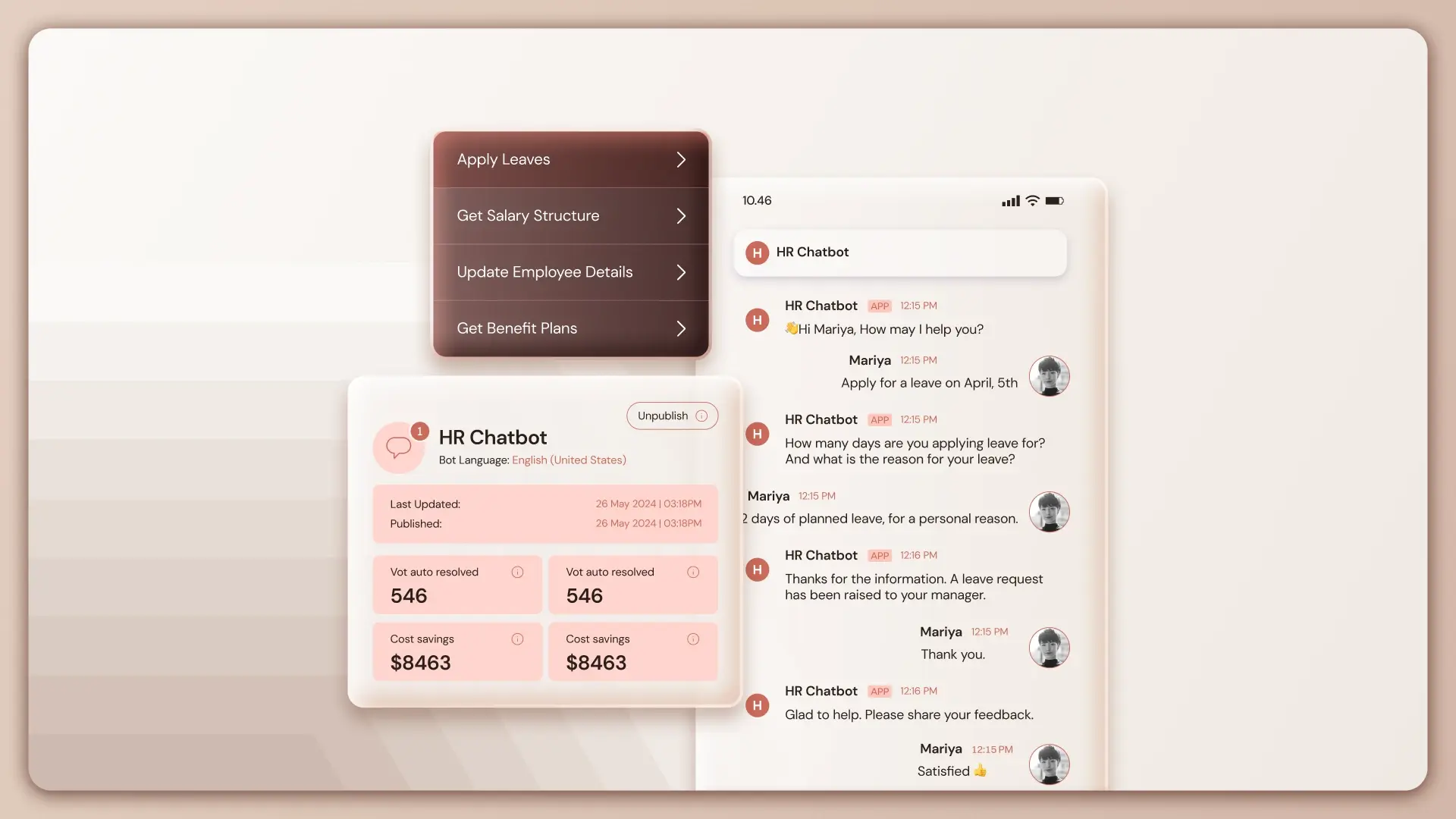

Platforms like Lyzr.ai provide the infrastructure layer – agent frameworks, integration tooling, security controls, and deployment automation – so teams can focus on building agents that solve specific business problems rather than reinventing enterprise AI architecture.

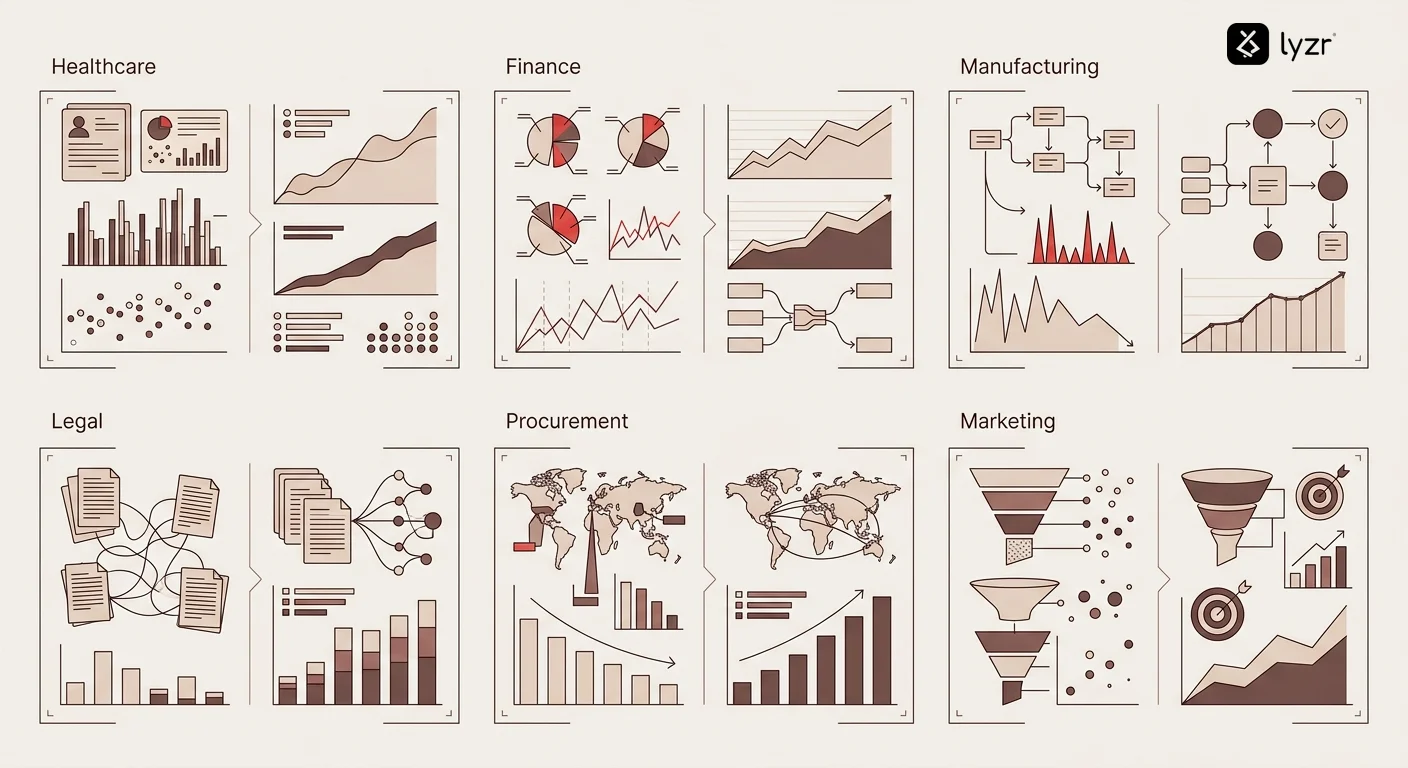

What can private AI agents actually do?

The use cases span every function where sensitive data meets automation needs.

In finance and banking, private agents handle everything from fraud detection, which analyzes transaction patterns without exposing customer data, to regulatory reporting, which compiles compliance documentation from confidential sources.

AI agents for commercial banking assess credit risk by analyzing financial statements, market data, and relationship history – all information that can’t leave the institution’s secure environment.

Healthcare organizations deploy private agents for clinical decision support, suggesting treatments based on patient histories and medical literature without sending protected health information to external services.

They automate prior authorization by reviewing insurance policies and medical necessity, reducing administrative burden while maintaining HIPAA compliance.

They analyze clinical trial data, identifying patterns and adverse events in proprietary research that represents billions in development investment.

Manufacturing and supply chain teams use private agents for predictive maintenance, analyzing sensor data and failure patterns to prevent downtime without sharing operational details with vendors.

They optimize inventory by processing proprietary sales forecasts, supplier relationships, and strategic plans.

They manage procurement processes with AI agents that negotiate contracts and analyze vendor performance using confidential pricing and relationship data.

Legal and professional services firms deploy private agents to analyze case files, precedents, and strategy documents that are protected by attorney-client privilege.

They draft contracts using firm-specific templates and clause libraries that embody years of negotiation experience.

They conduct due diligence, reviewing confidential business information during mergers and acquisitions.

Even marketing teams benefit when handling competitive intelligence, customer research, and strategic plans that shouldn’t leak to competitors.

AI agents for digital marketing can analyze campaign performance and customer behavior while keeping proprietary attribution models and targeting strategies internal.

Are private AI agents actually more secure?

Security isn’t automatic just because something runs privately.

A poorly configured private AI agent can be more dangerous than a well-managed public service.

The security advantage comes from control, not magic.

With private agents, you control the entire attack surface.

You choose which models to use, eliminating concerns about vendor data handling practices.

You define network boundaries, preventing unauthorized external access.

You manage encryption keys, avoiding third-party key management risks.

You implement access controls that align exactly with your organizational structure.

But control creates responsibility.

You need to patch vulnerabilities, monitor for intrusions, maintain redundancy, test disaster recovery, train administrators, and update security policies.

Public AI services handle much of this infrastructure security automatically, though you sacrifice control in the process.

The real security question isn’t public versus private – it’s whether your organization has the expertise and resources to manage private infrastructure securely. This is where agentic AI security becomes critical. As AI systems evolve into autonomous agents, protection must extend to governing agent behavior: controlling access and ensuring every decision remains observable, auditable, and compliant in real time.

For enterprises with established security operations centers, compliance teams, and infrastructure management capabilities, private agents offer superior security posture.

For smaller organizations without those resources, managed private deployment options – where specialized platforms handle infrastructure security while keeping your data isolated – often provide the best balance.

The insider threat angle

Private AI agents actually create new insider threat considerations.

Since the agents can access multiple internal systems and process sensitive data, they become high-value targets for compromised credentials.

Strong authentication, principle of least privilege, and comprehensive activity logging become critical.

You need monitoring systems that detect unusual agent behavior – accessing data outside normal patterns, making unexpected API calls, or operating at unusual times.

This level of scrutiny is actually easier with private agents than public services, where you have limited visibility into what happens after your data leaves your network.
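The three signals mentioned above (unusual data access, unexpected API calls, unusual hours) can be checked with a simple rule-based baseline before you reach for anything statistical. This is a minimal sketch: the baseline fields, event keys, and example values are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentBaseline:
    tables: frozenset       # data sources the agent normally reads
    endpoints: frozenset    # internal APIs the agent normally calls
    active_hours: range     # hours of the day (0-23) when the agent normally runs


def flag_anomalies(event: dict, baseline: AgentBaseline) -> list:
    """Return a list of reasons this event deviates from the baseline."""
    flags = []
    if event["table"] not in baseline.tables:
        flags.append("unusual data access")
    if event["endpoint"] not in baseline.endpoints:
        flags.append("unexpected API call")
    if event["hour"] not in baseline.active_hours:
        flags.append("unusual time")
    return flags


claims_agent = AgentBaseline(
    tables=frozenset({"claims", "policies"}),
    endpoints=frozenset({"/claims/lookup", "/claims/update"}),
    active_hours=range(7, 20),  # 07:00 through 19:59
)

normal = {"table": "claims", "endpoint": "/claims/lookup", "hour": 10}
odd = {"table": "payroll", "endpoint": "/claims/lookup", "hour": 3}

print(flag_anomalies(normal, claims_agent))  # []
print(flag_anomalies(odd, claims_agent))     # ['unusual data access', 'unusual time']
```

Static rules like these are easy to audit and explain, which is why they make a reasonable first layer. Learned baselines can come later once you have enough activity history.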

What about performance and scaling?

Private AI agents face different performance dynamics than public services.

Latency often improves dramatically.

When your agents run in the same data center as your databases and applications, you eliminate network round trips to external APIs.

A query that takes 3-5 seconds through a public API might complete in 200 milliseconds locally.

For real-time applications – fraud detection, trading systems, customer interactions – this matters enormously.

Throughput becomes a function of your infrastructure investment.

Public AI services rate-limit based on pricing tiers, creating artificial capacity constraints.

Private agents scale with your hardware – add more GPUs, handle more requests.

Organizations processing high volumes of transactions benefit significantly.

A customer service operation with AI agents handling 10,000 inquiries daily might pay $15,000+ monthly for public API access but could run the same workload on $30,000 of hardware, with much lower per-request costs once the hardware is paid for (power, maintenance, and staffing still apply).

The challenge comes during scaling events.

Public services scale automatically – traffic spikes just cost more.

Private infrastructure requires capacity planning.

If you suddenly need 10x capacity, you can’t just add it instantly.

This makes hybrid approaches attractive – handle baseline load privately, overflow to public services during peaks.
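A hybrid overflow policy can be as small as one routing function. The sketch below adds one assumption the text doesn’t spell out: requests marked sensitive never overflow to a public service and wait in a queue instead, since sending them out would defeat the purpose of private deployment. The function name and return labels are illustrative.

```python
def route(sensitive: bool, in_flight: int, private_capacity: int) -> str:
    """Decide where a request runs under a private-first overflow policy."""
    if in_flight < private_capacity:
        return "private"          # baseline load stays on your own hardware
    if sensitive:
        return "queue"            # assumption: sensitive data never leaves, so it waits
    return "public"               # only non-sensitive overflow goes to a public service


print(route(sensitive=False, in_flight=40, private_capacity=100))   # private
print(route(sensitive=False, in_flight=100, private_capacity=100))  # public
print(route(sensitive=True, in_flight=100, private_capacity=100))   # queue
```

Classifying requests as sensitive or not is the hard part in practice. When in doubt, defaulting to sensitive keeps the failure mode on the safe side.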

Model optimization becomes more critical with private deployment.

Techniques like quantization, pruning, and distillation can reduce model size and inference time dramatically, letting you run more efficiently on the same hardware.

A 70-billion parameter model might require expensive GPU infrastructure, but a quantized version or a distilled smaller model could run on standard servers while maintaining acceptable accuracy.
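A rough way to see why quantization matters is to estimate the memory needed just to hold the model weights: parameter count times bits per weight, divided by eight to get bytes. The helper below is a back-of-the-envelope sketch. It ignores activation memory, the KV cache, and runtime overhead, so real requirements will be higher.

```python
def weight_memory_gb(params: float, bits_per_weight: int) -> float:
    """Approximate memory for model weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


for bits in (16, 8, 4):
    print(f"70B parameters at {bits}-bit: {weight_memory_gb(70e9, bits):.0f} GB")
```

For a 70-billion parameter model that works out to about 140 GB at 16-bit precision, 70 GB at 8-bit, and 35 GB at 4-bit. That is the difference between needing several high-memory GPUs and fitting the weights on far more modest hardware, at some cost in accuracy that you should measure on your own tasks.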

How do you handle model updates and improvements?

Model governance becomes your responsibility with private AI agents.

Public services update their models continuously – sometimes improving performance, occasionally breaking existing integrations, always on their schedule.

Private agents give you control over if, when, and how to update.

This control cuts both ways.

You can thoroughly test new model versions before deploying to production, avoiding the disruptions that sometimes occur when public providers push updates.

You can maintain multiple model versions simultaneously, gradually migrating workloads rather than switching everything at once.

You can choose not to update if a new version doesn’t benefit your use cases.

But you also need processes for tracking model releases, evaluating improvements, testing against your specific workflows, and managing rollout schedules.

Organizations running AI agents for SEO or content creation often maintain multiple model versions – keeping older versions that produce a specific writing style while testing newer versions for improved capabilities.
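Gradual migration between model versions is often done by routing a fixed share of traffic to the new version and raising that share as confidence grows. Here is one possible sketch, assuming each request carries a stable ID; the version labels and the function name are hypothetical. Hashing the ID keeps the assignment deterministic, so the same request always lands on the same version.

```python
import hashlib


def pick_version(request_id: str, rollout_percent: int) -> str:
    """Send roughly rollout_percent% of requests to the candidate model."""
    digest = hashlib.sha256(request_id.encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100      # stable bucket in 0..99 for this ID
    return "candidate" if bucket < rollout_percent else "stable"


ids = [f"req-{i}" for i in range(1000)]
share = sum(pick_version(r, 10) == "candidate" for r in ids) / len(ids)
print(f"candidate share at 10% rollout: {share:.1%}")
```

Because the bucket depends only on the ID, raising the percentage from 10 to 50 keeps every request that was already on the candidate there and only moves additional ones over. Setting it back to 0 is an immediate rollback.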

Fine-tuning adds another dimension.

Private deployment lets you continuously fine-tune models on your own data, improving performance for your specific use cases in ways generic public models can’t match.

A financial institution might fine-tune models on historical market analysis, regulatory documents, and internal research to create agents that understand their specific analytical frameworks.

This creates compounding advantages over time – your models get better at your specific tasks while competitors using public services get the same generic improvements as everyone else.

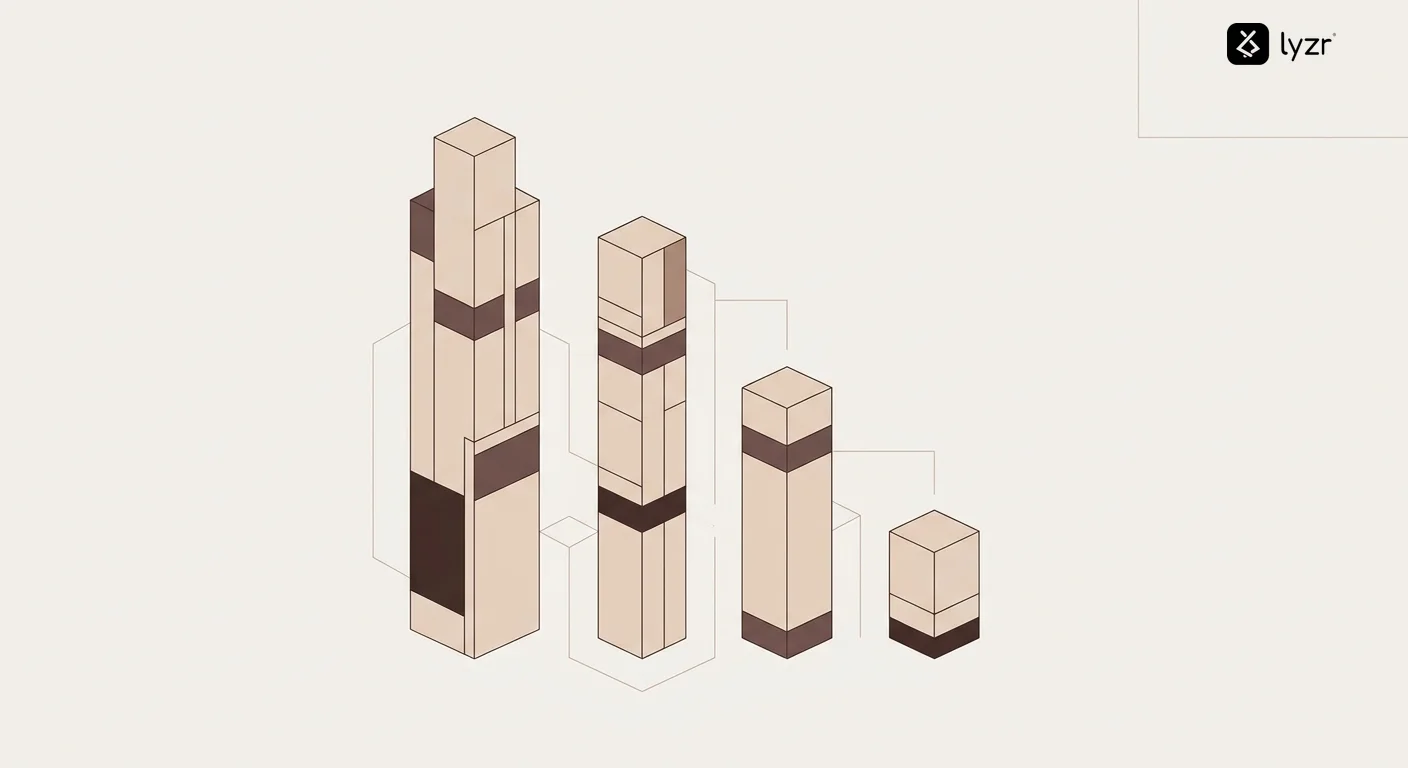

What does it cost to run private AI agents?

The cost equation for private AI agents differs fundamentally from public services.

AI agent development cost begins with the initial infrastructure investment, which is the first major expense.

GPU servers for running large language models range from $15,000 for entry-level setups to $500,000+ for high-performance clusters.

Cloud-based private deployment in a VPC costs $3,000-$50,000 monthly depending on instance types and usage patterns.

On-premises deployment adds facilities costs – power, cooling, physical security, network infrastructure.

Ongoing operational costs include infrastructure maintenance, security updates, monitoring systems, and specialized personnel.

A team running private AI agents typically needs ML engineers for model management, DevOps specialists for infrastructure, security professionals for compliance, and domain experts for fine-tuning and optimization.

Depending on scale, this might represent 2-10 full-time roles.

Model licensing adds variable costs.

Open-source models are free for internal use, but commercially licensed models might cost $100,000-$1,000,000+ annually depending on usage volume and deployment scope.

The break-even calculation depends on usage volume.

At low volumes – under 100,000 API calls monthly – public services usually cost less.

At moderate volumes – 500,000 to 5 million calls monthly – costs roughly equalize, with private deployment offering control benefits that justify similar expense.

At high volumes – over 10 million calls monthly – private agents typically cost 40-70% less than public services while providing better performance.

Organizations deploying AI agents for payroll processing or other high-frequency operations often reach break-even within 12-18 months.

| Cost Component | Low Scale | Medium Scale | High Scale |
|---|---|---|---|
| Infrastructure (Annual) | $36,000 | $120,000 | $400,000 |
| Personnel (Annual) | $300,000 | $600,000 | $1,200,000 |
| Licensing (Annual) | $50,000 | $200,000 | $500,000 |
| Total Private Cost | $386,000 | $920,000 | $2,100,000 |
| Comparable Public API Cost | $180,000 | $900,000 | $3,500,000 |
| Break-even Volume | Not reached | ~500K calls/mo | ~8M calls/mo |
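The comparison in the table is simple enough to reproduce and adapt to your own numbers. The sketch below copies the annual figures from the table and computes how much cheaper (or more expensive) private deployment is relative to the public API cost. The dictionary layout and function names are just illustrative choices.

```python
# Annual figures in USD, copied from the table above.
scenarios = {
    "low":    {"infrastructure": 36_000,  "personnel": 300_000,   "licensing": 50_000,  "public_api": 180_000},
    "medium": {"infrastructure": 120_000, "personnel": 600_000,   "licensing": 200_000, "public_api": 900_000},
    "high":   {"infrastructure": 400_000, "personnel": 1_200_000, "licensing": 500_000, "public_api": 3_500_000},
}


def private_total(s: dict) -> int:
    """Total annual cost of private deployment."""
    return s["infrastructure"] + s["personnel"] + s["licensing"]


def savings_percent(s: dict) -> float:
    """Positive when private deployment is cheaper than the public API."""
    return round(100 * (s["public_api"] - private_total(s)) / s["public_api"], 1)


for name, s in scenarios.items():
    print(f"{name}: private ${private_total(s):,} vs public ${s['public_api']:,} "
          f"({savings_percent(s):+.1f}% savings)")
```

On these figures, only the high-scale scenario comes out ahead, at 40% cheaper. The medium scenario is roughly at parity, with private deployment about 2% more expensive, and the low scenario costs more than twice the public API bill. Personnel dominates the private total at every scale, so that is the line to pressure-test first when you plug in your own estimates.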

Can you really trust AI that runs privately?

Trust in private AI agents requires addressing several dimensions beyond just data security.

Output reliability becomes your responsibility to validate.

Public AI services invest heavily in safety testing, bias mitigation, and factual accuracy improvements that benefit all users.

When you deploy private models, you need equivalent testing frameworks tailored to your specific use cases.

A private agent handling stock market analysis needs rigorous backtesting against historical data, validation of recommendations against expert judgment, and ongoing monitoring for model drift as market conditions change.

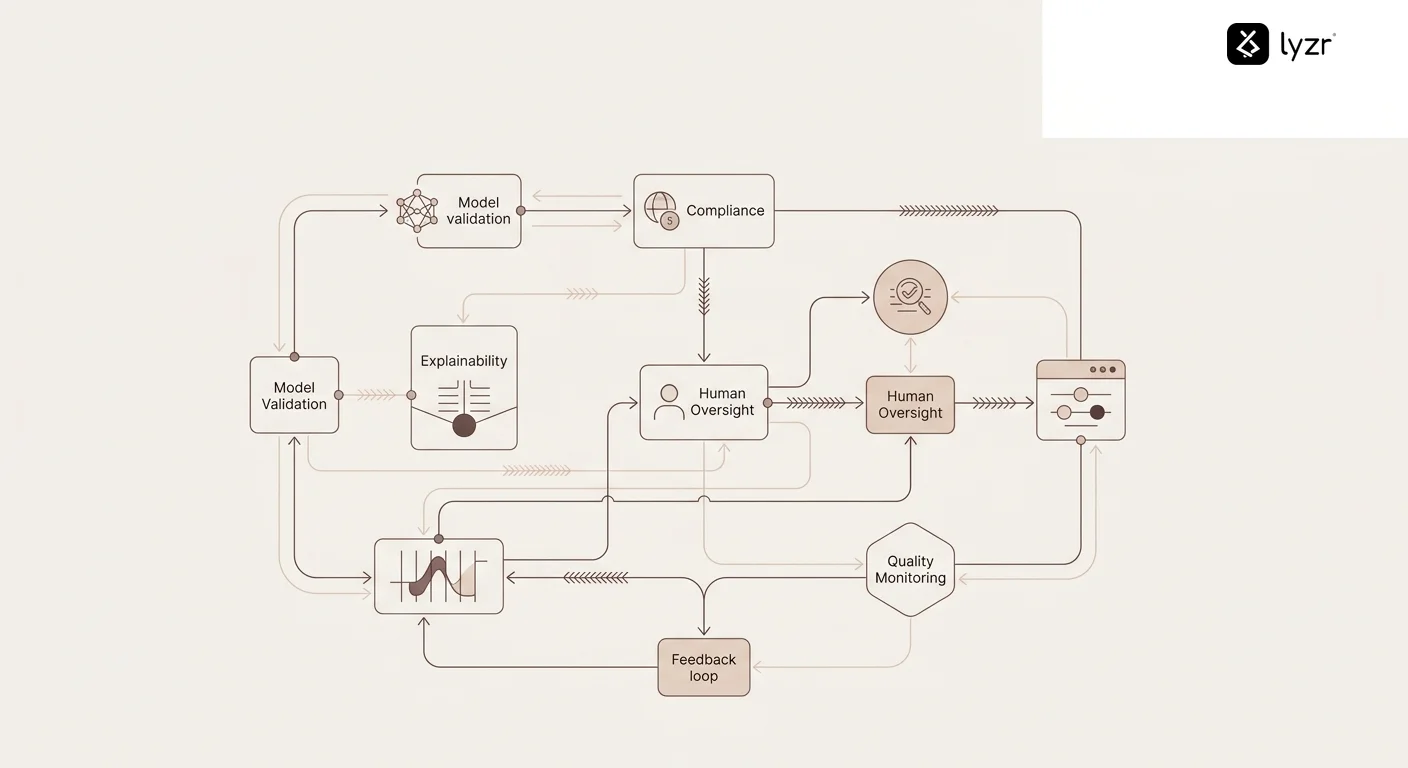

Explainability matters more when decisions have consequences.

If your private agent denies a loan, recommends a medical treatment, or flags a transaction as fraudulent, you need to explain why.

This requires building interpretability into your agent architecture – logging decision factors, maintaining audit trails, and potentially using techniques like attention visualization or counterfactual analysis.

Compliance verification shifts from trusting vendor attestations to conducting your own assessments.

You need to demonstrate to auditors that your private agents meet regulatory requirements.

This means maintaining documentation of model training data sources, tracking which data the agents access, logging all decisions for review, implementing human oversight workflows where required, and conducting regular bias and fairness testing.

Organizations in regulated industries often find this documentation burden easier with private agents because they control the entire system rather than relying on vendor SOC 2 reports that may not address industry-specific requirements.

Human oversight integration becomes critical.

Most private AI agent deployments include human-in-the-loop workflows for high-stakes decisions, confidence thresholds that escalate uncertain cases to human review, regular sampling and quality auditing of agent outputs, and feedback mechanisms that let domain experts correct agent mistakes.

This combination of automation and human judgment builds trust gradually as the system proves reliable.
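The escalation logic described above, where high-stakes decisions and low-confidence cases go to a person, can be sketched in a few lines. The 0.9 threshold and the label names are placeholder assumptions. The right threshold depends on your own error tolerance and should be tuned against reviewed samples.

```python
def triage(confidence: float, high_stakes: bool, threshold: float = 0.9) -> str:
    """Decide whether an agent output is applied automatically or reviewed."""
    if high_stakes:
        return "human_review"     # high-stakes decisions always get a human
    if confidence < threshold:
        return "human_review"     # uncertain cases are escalated
    return "auto_apply"


print(triage(confidence=0.97, high_stakes=False))  # auto_apply
print(triage(confidence=0.62, high_stakes=False))  # human_review
print(triage(confidence=0.99, high_stakes=True))   # human_review
```

This assumes the agent reports a confidence score that is reasonably calibrated. If it isn’t, the regular sampling and quality audits mentioned above are what catch the confident mistakes.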

How do private agents work with existing systems?

Integration challenges often determine whether private AI agent projects succeed or stall.

Legacy system connectivity creates the first hurdle.

Many enterprises run critical processes on decades-old systems – mainframes, custom databases, proprietary applications – that weren’t designed for modern API integration.

Private AI agents need middleware layers that translate between modern REST APIs and legacy protocols, handle data format conversions, and manage transaction consistency across systems.

A bank deploying private agents might need to connect to COBOL-based core banking systems, modern CRM platforms, real-time fraud detection systems, and external data feeds – all with different protocols, authentication methods, and data formats.

Data synchronization becomes complex when agents need current information from multiple sources.

Real-time integration requires event-driven architectures where systems push updates to agents as changes occur.

Batch integration processes data periodically, which works for less time-sensitive applications but may provide stale information.

Hybrid approaches use real-time integration for critical data and batch processing for historical context or reference information.

Authentication and authorization across systems requires careful orchestration.

Private agents might need to act on behalf of users, requiring delegation mechanisms that respect existing access controls.

Service accounts with carefully scoped privileges, OAuth flows for user delegation, and comprehensive logging of all access attempts become essential components.
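When an agent acts on behalf of a user, one conservative rule is to allow an action only if both the agent’s service account and the user hold the required permission. The sketch below shows that intersection check along with logging of every attempt. The scope strings and function name are hypothetical, and a real system would read scopes from your identity provider rather than from literals.

```python
access_log = []  # every attempt is recorded, allowed or not


def authorize(agent_scopes: set, user_scopes: set, required: str) -> bool:
    """Allow an action only if both the agent and the delegating user hold the scope."""
    effective = agent_scopes & user_scopes   # the agent never exceeds the user's rights
    allowed = required in effective
    access_log.append({"required": required, "allowed": allowed})
    return allowed


agent_scopes = {"crm:read", "crm:write", "billing:read"}
analyst_scopes = {"crm:read", "billing:read", "hr:read"}

print(authorize(agent_scopes, analyst_scopes, "crm:read"))   # True
print(authorize(agent_scopes, analyst_scopes, "crm:write"))  # False: the user lacks it
print(authorize(agent_scopes, analyst_scopes, "hr:read"))    # False: the agent lacks it
```

The intersection means the agent can never be used to escalate a user’s privileges, and a user can never push the agent past the limits of its service account.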

Workflow integration determines how agents fit into business processes.

Some agents operate autonomously – monitoring systems and taking action when conditions are met.

Others participate in human-driven workflows – analyzing data when requested, providing recommendations for human decisions, or handling specific steps in multi-stage processes.

Organizations building AI agents for brand building typically integrate with content management systems, social media platforms, analytics tools, and creative review workflows – each integration point requiring careful design to maintain efficiency without bypassing necessary controls.

What’s the future of private AI agents?

Several trends will reshape how organizations deploy and use private AI agents over the next few years.

Specialized models optimized for private deployment are emerging rapidly.

While early private agents relied on general-purpose models designed for cloud deployment, newer models specifically target edge and on-premises use cases with better efficiency and smaller footprints.

Techniques like mixture-of-experts architectures let organizations deploy powerful models that only activate relevant portions for specific tasks, dramatically reducing compute requirements.

Federated learning approaches will let organizations train models across multiple private deployments without centralizing data.

Hospital networks could collaboratively improve diagnostic agents while keeping patient data at individual facilities.

Bank consortiums could train fraud detection models on collective transaction patterns without sharing customer information.

Regulatory frameworks specifically addressing private AI deployment are developing.

Current regulations like GDPR, HIPAA, and financial privacy laws weren’t written with AI agents in mind.

New frameworks will likely create clearer standards for private AI governance, potentially making private deployment the default requirement for sensitive data rather than an optional security enhancement.

Platform consolidation will make private agent deployment more accessible.

Rather than building entire agent infrastructures from scratch, organizations increasingly use platforms that provide the core capabilities – model serving, integration frameworks, security controls, monitoring systems – while letting teams focus on domain-specific agent logic.

This democratizes private AI, making it viable for mid-sized organizations that couldn’t previously justify the infrastructure investment.

Lyzr.ai exemplifies this trend, providing enterprise teams with production-ready infrastructure for deploying AI agents privately while maintaining complete data sovereignty.

The platform handles the complexity of model deployment, integration, security, and scaling – letting organizations focus on building agents that solve specific business problems rather than managing infrastructure.

How do you get started with private AI agents?

Starting with private AI agents requires strategic thinking rather than just technical implementation.

Begin with use case selection that balances value and complexity.

Ideal first projects handle high-volume, repetitive tasks with clear success metrics, work with data that can’t leave your infrastructure for compliance reasons, and provide measurable business value quickly enough to justify continued investment.

A customer service team might start with an agent handling common inquiries before tackling complex technical support.

A finance team might begin with expense report processing before moving to complex financial analysis.

Infrastructure decisions should match your organization’s existing capabilities.

If you already run significant on-premises computing infrastructure, extending it for AI agents makes sense.

If you’re primarily cloud-based, private cloud deployment in a VPC offers the right balance.

If you lack infrastructure expertise, managed platform solutions let you deploy private agents without building everything yourself.

Team composition matters as much as technology.

Successful private agent projects need domain experts who understand the business problem, ML engineers who can work with models and training pipelines, integration specialists who know your existing systems, security professionals who ensure compliance, and project managers who coordinate across these disciplines.

Most organizations underestimate the domain expertise component – technical capability alone doesn’t create useful agents.

Pilot projects should run for 60-90 days with clear success criteria.

Define metrics before starting – accuracy targets, processing time improvements, cost reductions, user satisfaction scores – and measure ruthlessly.

Successful pilots demonstrate value quickly and create momentum for broader deployment.

Failed pilots provide learning without catastrophic investment.

Governance frameworks should be established early, even for pilot projects.

Define who approves agent deployments, how model updates get tested and released, what monitoring and alerting looks like, and how incidents get handled.

These processes scale more easily when built into initial projects rather than retrofitted later.

As organizations begin exploring private AI deployments, a clear understanding of how autonomous systems operate becomes increasingly important. An agentic AI course can help professionals learn how to design, deploy, and manage intelligent agents within secure environments. With that knowledge, teams can move from experimentation to building scalable, compliant AI solutions that align with business goals. Many forward-thinking enterprises also use platforms like Lyzr.ai to accelerate this journey.

Rather than spending months building agent infrastructure, teams can deploy production-ready private agents in weeks, focusing energy on solving business problems rather than reinventing technical foundations.

The platform provides pre-built integration frameworks, security controls, and monitoring capabilities that would otherwise require extensive custom development.

Your data deserves better than public cloud AI

The uncomfortable truth about public AI services is that convenience comes with hidden costs.

Every customer record sent to external APIs represents potential exposure.

Every proprietary analysis processed on vendor infrastructure creates dependency.

Every strategic document analyzed by public models might inform training data that benefits competitors.

Private AI agents aren’t perfect, and they’re not right for every situation.

They require investment, expertise, and ongoing management that public services handle automatically.

But for organizations handling sensitive data, operating in regulated industries, or building competitive advantages through proprietary AI capabilities, private deployment offers something public services fundamentally cannot – complete control over your most valuable asset: your data.

The question isn’t whether private AI agents will become standard in enterprises.

They already are, driven by regulatory requirements, competitive pressures, and the simple reality that critical business functions demand infrastructure you control.

The real question is whether your organization will lead this transition or scramble to catch up when compliance requirements or competitive dynamics force your hand.

Lyzr.ai makes this transition practical for enterprises ready to deploy AI agents without sacrificing data sovereignty.

The platform handles the infrastructure complexity – model deployment, integration, security, scaling – while keeping your data exactly where it belongs: behind your firewall, under your control, serving your competitive advantage.

Ready to explore what private AI agents can do for your organization? Visit lyzr.ai to see how enterprises are deploying secure, compliant AI automation that actually works.

TL;DR – Private AI Agents Essentials

- Private AI agents run entirely within your infrastructure, processing sensitive data without external exposure – critical for compliance, competitive advantage, and data sovereignty

- They work across industries handling everything from fraud detection and clinical decision support to procurement optimization and customer service, anywhere automation meets sensitive data

- Deployment options span on-premises servers, private cloud VPCs, hybrid architectures, and edge devices, each offering different tradeoffs between control, flexibility, and management complexity

- Security advantages come from control, not magic – you manage the entire stack, define access controls, and maintain complete audit trails, but you’re also responsible for patches, monitoring, and incident response

- Cost economics favor private deployment at scale – high initial investment but predictable ongoing costs make sense for high-volume operations, typically breaking even at 500,000+ monthly transactions

- Platforms like Lyzr.ai accelerate deployment by providing production-ready infrastructure, letting teams focus on solving business problems rather than building agent frameworks from scratch
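The break-even point mentioned above can be estimated with simple arithmetic. The sketch below is a minimal illustration, assuming a fixed monthly infrastructure and staffing cost for private deployment plus a small per-transaction cost, compared against a flat per-transaction public API price. The function name and every number in the usage line are hypothetical placeholders, not benchmarks or vendor pricing.

```python
import math


def break_even_volume(
    fixed_monthly_cost: float,
    private_cost_per_txn: float,
    public_cost_per_txn: float,
) -> float:
    """Monthly transaction volume at which private and public costs are equal.

    Private cost  = fixed_monthly_cost + volume * private_cost_per_txn
    Public cost   = volume * public_cost_per_txn
    """
    saving_per_txn = public_cost_per_txn - private_cost_per_txn
    if saving_per_txn <= 0:
        # Private deployment never catches up if it saves nothing per transaction.
        return math.inf
    return fixed_monthly_cost / saving_per_txn


# Hypothetical inputs: replace with your own infrastructure and API quotes.
volume = break_even_volume(
    fixed_monthly_cost=20_000.0,
    private_cost_per_txn=0.01,
    public_cost_per_txn=0.05,
)
print(f"Break-even at roughly {volume:,.0f} transactions per month")
```

Below the returned volume, the public API is cheaper under these assumptions; above it, private deployment is. Real comparisons should also account for personnel, hardware refresh cycles, and tiered API pricing, which this sketch ignores.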

Action Checklist – Implementing Private AI Agents

- Identify use cases where compliance requirements or competitive advantage justify private deployment over public AI services

- Assess your current infrastructure capabilities – on-premises compute resources, cloud environments, security operations, and ML expertise

- Calculate total cost of ownership comparing private deployment infrastructure and personnel costs against public API pricing at your expected usage volumes

- Establish governance frameworks defining approval processes, testing requirements, monitoring standards, and incident response procedures before deploying first agents

- Run a focused 60-90 day pilot project on a high-value, well-defined use case with clear success metrics and stakeholder buy-in

- Build cross-functional teams combining domain expertise, ML engineering, integration specialists, and security professionals rather than treating this as purely a technical project

- Implement comprehensive logging and monitoring from day one – audit trails, performance metrics, security alerts, and quality assessments

- Create human oversight workflows for high-stakes decisions, including confidence thresholds, escalation processes, and feedback mechanisms

- Evaluate platforms like Lyzr.ai that provide pre-built infrastructure, reducing time-to-value and ongoing management overhead
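The human oversight item in the checklist can be made concrete with a small routing rule. This is a minimal sketch, assuming each agent decision carries a confidence score between 0 and 1 and a flag marking it as high-stakes. The `Decision` class, the `route` function, the outcome labels, and the 0.9 default threshold are all illustrative assumptions, not part of any specific platform.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    action: str          # what the agent proposes to do
    confidence: float    # agent-reported score in [0, 1] (assumed to exist)
    high_stakes: bool    # set by your own business rules


def route(decision: Decision, threshold: float = 0.9) -> str:
    """Return where a decision should go next.

    High-stakes decisions below the confidence threshold are escalated
    to a human reviewer; everything else proceeds automatically.
    """
    if not 0.0 <= decision.confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if decision.high_stakes and decision.confidence < threshold:
        return "escalate_to_human"
    return "auto_approve"


# Hypothetical example: a large claim payout with middling confidence.
claim = Decision(action="approve_payout", confidence=0.72, high_stakes=True)
print(route(claim))
```

Reviewer outcomes can be logged alongside the original decision to provide the feedback mechanism the checklist calls for, and the threshold can be tuned per use case as that data accumulates.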

Frequently Asked Questions

What’s the difference between private AI agents and regular AI APIs?

Private AI agents run entirely within your infrastructure – on your servers, in your VPC, behind your firewall. Regular AI APIs process data on vendor servers, meaning your information leaves your security perimeter. Private agents give you complete control over where data goes, how models are updated, and who can access what, while public APIs offer convenience and automatic scaling at the cost of control and potential compliance issues.

Can small companies deploy private AI agents or is it only for enterprises?

Small companies can deploy private AI agents, especially using managed platform approaches that handle infrastructure complexity. The economics work best for organizations with high transaction volumes that make public API pricing costly, strict compliance requirements that mandate private deployment regardless of cost, or proprietary data that creates competitive advantage worth protecting. Companies under 100 employees typically start with public services and migrate to private deployment as specific needs emerge.

How long does it take to deploy a private AI agent?

The timeline depends on complexity and existing infrastructure. Using platforms like Lyzr.ai, teams can deploy simple private agents in 2-4 weeks. Building custom infrastructure from scratch typically takes 3-6 months for initial deployment. The timeline includes infrastructure setup, model selection and optimization, integration with existing systems, security configuration, and testing. Most organizations run 60-90 day pilot projects before broader rollout to validate the approach and refine processes.

Do private AI agents work offline or without internet connectivity?

Yes, private AI agents can operate completely offline once deployed. Since they run locally on your infrastructure and use locally hosted models, they don’t require internet connectivity for core operations. This works particularly well for edge deployments in manufacturing facilities, medical equipment, retail locations, or secure government facilities. The agents might need periodic internet access for model updates or accessing external data sources, but those connections can be carefully controlled and scheduled.

What happens to my private AI agents if the platform provider goes out of business?

This depends on your deployment architecture. If you’re using open-source models on infrastructure you control, the agents continue operating independently – you own the stack. Proprietary platform components might stop receiving updates, but existing functionality persists. Smart organizations architect for portability from the start, using standard interfaces and avoiding deep dependencies on vendor-specific features. Managed private deployment services should provide data export capabilities and transition support as part of enterprise contracts.

Can private AI agents integrate with public AI services for specific tasks?

Yes, hybrid architectures let private agents handle sensitive data processing while selectively using public services for specific tasks. You might run core business logic privately but use public image generation APIs for marketing assets, process customer data privately but use public sentiment analysis on anonymized feedback, or handle financial analysis privately while accessing public market data feeds. The key is architecting data flows so sensitive information stays private while leveraging public services where appropriate.
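One way to picture this data-flow rule is a small router that checks sensitivity tags before anything leaves the private environment. The sketch below is illustrative only: the tag names, the `choose_backend` and `redact` helpers, and the email-only redaction are assumptions made for the example. Real anonymization needs far more than a single regular expression.

```python
import re
from typing import Iterable

# Hypothetical sensitivity labels assigned upstream by your own classifiers.
SENSITIVE_TAGS = frozenset({"pii", "financial", "medical"})

# Deliberately simple pattern for illustration; not a complete email matcher.
EMAIL_PATTERN = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def choose_backend(tags: Iterable[str]) -> str:
    """Keep anything carrying a sensitive tag on the private agent."""
    return "private" if set(tags) & SENSITIVE_TAGS else "public"


def redact(text: str) -> str:
    """Mask email addresses before text is sent to a public service."""
    return EMAIL_PATTERN.sub("[REDACTED_EMAIL]", text)


def prepare_request(text: str, tags: Iterable[str]) -> tuple:
    """Return (backend, payload); public payloads are redacted first."""
    backend = choose_backend(tags)
    payload = text if backend == "private" else redact(text)
    return backend, payload


print(prepare_request("Feedback from jane@example.com: great service", ["feedback"]))
print(prepare_request("Patient record 123", ["medical"]))
```

The design choice here is to default to redaction on every public-bound payload, even when the tags say the content is not sensitive, so a mislabeled record is less likely to leak identifiers.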

How do you update private AI agent models without downtime?

Blue-green deployment strategies let you run a new model version alongside the existing one and shift traffic only after validation. You maintain two production environments, deploy updates to the inactive environment, test thoroughly, then switch traffic over. For critical applications, canary deployments send a small percentage of requests to new models while most traffic uses proven versions, expanding gradually based on performance metrics. Many organizations maintain multiple model versions simultaneously, routing different request types to optimized models for each use case.
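A canary split can be sketched in a few lines. The example below assumes each request has a stable identifier and hashes it into one of 100 buckets, so the same request ID always lands on the same model version. The function name and the version labels "stable" and "candidate" are hypothetical; in practice this logic usually lives in a load balancer or service mesh rather than application code.

```python
import hashlib


def pick_version(request_id: str, canary_percent: int) -> str:
    """Route a request to the candidate model for canary_percent of buckets.

    Hashing the request ID keeps routing deterministic, so retries of the
    same request hit the same model version.
    """
    if not 0 <= canary_percent <= 100:
        raise ValueError("canary_percent must be between 0 and 100")
    digest = hashlib.sha256(request_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:4], "big") % 100  # value in 0..99
    return "candidate" if bucket < canary_percent else "stable"


# Hypothetical rollout: start at 5%, then raise the percentage as metrics hold.
sample_ids = [f"req-{i}" for i in range(1000)]
candidate_share = sum(pick_version(r, 5) == "candidate" for r in sample_ids)
print(f"{candidate_share} of {len(sample_ids)} sample requests hit the candidate")
```

Setting `canary_percent` to 0 or 100 reproduces the two states of a blue-green switch, while intermediate values give the gradual expansion described above.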
