Table of Contents

ToggleA Quick Fix That Spread Fast

Someone pastes a client email into ChatGPT to clean up a reply.

Another drops in code to debug faster.

Sales uses it to summarize a call.

No approvals. No process. Just speed.

Now imagine this happening across teams, every single day.

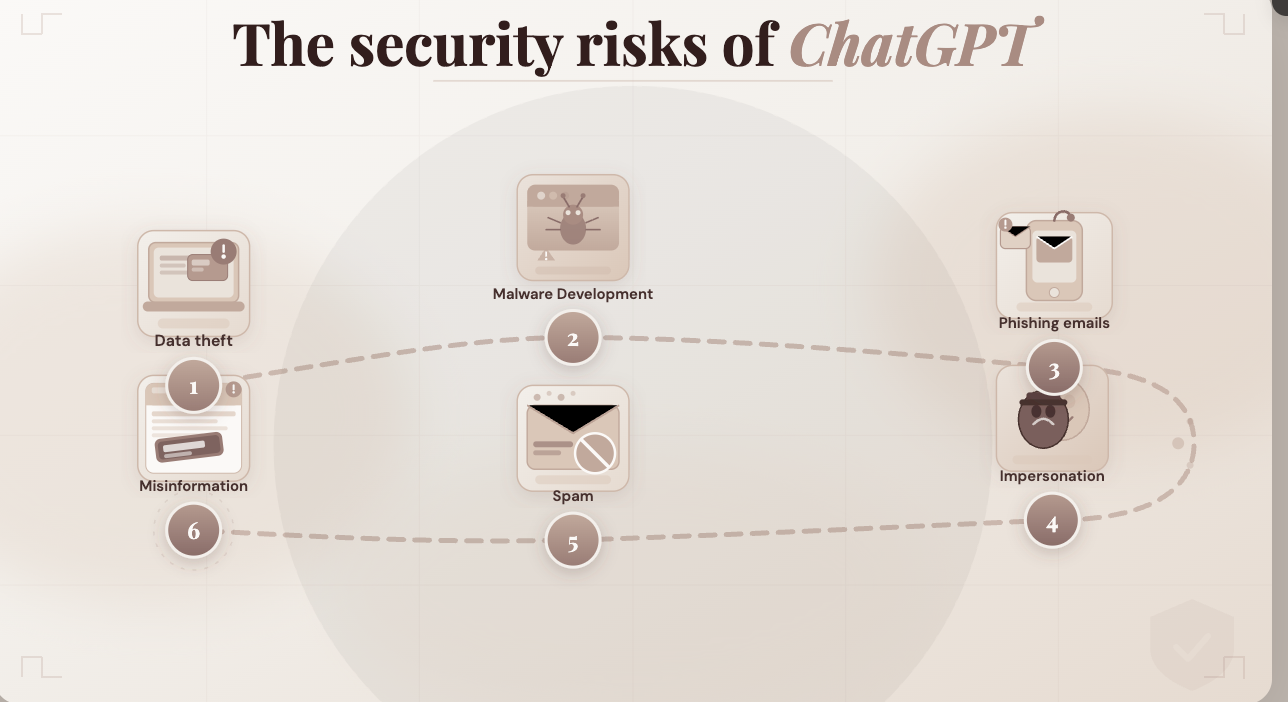

Why This Is Becoming a Real Security Concern

Search trends around “ChatGPT security risks,” “AI data privacy,” and “enterprise AI compliance” have surged—and for good reason.

What looks like harmless usage is quietly turning into:

Shadow AI: AI usage happening outside company control, visibility, and policy.

And that’s where things start to break.

Risk #1: Sensitive Data Gets Shared (More Often Than Expected)

Let’s be honest—most prompts aren’t generic.

They include real work:

- Customer conversations

- Financial numbers

- Internal reports

- Bits of source code

| Everyday Action | What It Means |

| “Fix this client email” | Sharing PII |

| “Summarize this report” | Exposing internal data |

| “Debug this code” | Leaking IP |

Here’s the catch:

Even if nothing is stored long-term, the data is still processed externally.

And for many companies, that alone is a compliance issue.

Risk #2: Zero Visibility for Security Teams

Most companies have no idea how employees are using ChatGPT at work.

No logs. No tracking. No audit trail.

| Question | Typical Answer |

| What data was shared? | Unknown |

| Who shared it? | Unknown |

| Why was it used? | No record |

This becomes a serious problem during:

- Security audits

- Compliance checks

- Internal investigations

If it’s not logged, it didn’t happen, at least from an audit perspective.

Risk #3: Prompt Injection Is Not Just Theory

This one sounds technical, but it’s already happening.

AI models follow instructions, sometimes too well.

| Scenario | What Goes Wrong |

| Malicious text in a customer query | AI reveals internal info |

| Hidden instructions in documents | Model overrides intended behavior |

| External content pasted into prompts | Sensitive context leaks out |

Simple way to think about it:

If the input is compromised, the output can be too.

Risk #4: Intellectual Property Slips Out Quietly

This is one of the most common (and least noticed) risks.

People paste:

- Internal code

- Product ideas

- Strategy docs

Not because they want to leak anything—just to get better output.

| Asset | Why It Matters |

| Source code | Core IP |

| Product plans | Competitive edge |

| Internal workflows | Operational advantage |

No breach. No alert.

Just gradual exposure.

Risk #5: Inconsistent Usage = Unpredictable Risk

Some teams are careful. Others aren’t.

- One team avoids sensitive data

- Another pastes everything

- Some validate outputs

- Some trust them blindly

| Without Clear Rules | What Happens |

| No guidelines | Everyone decides individually |

| No enforcement | Risk varies by team |

| No monitoring | Problems go unnoticed |

Security isn’t just about tools—it’s about consistency.

So… Should Companies Stop Using ChatGPT?

Not really.

That’s not realistic, and honestly, not necessary.

The real issue isn’t using AI at work.

It’s using it without control.

What Safer AI Usage Actually Looks Like

Teams that are getting this right are doing a few things differently:

Clear Boundaries: Define what data can and cannot be shared

Controlled Access: Avoid direct use of public AI for sensitive workflows

Built-in Guardrails: Block risky prompts before they go through

Full Visibility: Log every interaction for audit and review

Consistent Usage: Same rules across teams, not guesswork

Where LyzrGPT Comes In

This is exactly where LyzrGPT fits.

Instead of employees individually using public tools like ChatGPT:

- AI runs within controlled environments

- Sensitive data stays within defined boundaries

- Every interaction is logged and traceable

- Guardrails prevent risky inputs automatically

So teams still get the speed of AI—

without opening up hidden security gaps.

Final Thought

The risk with ChatGPT at work doesn’t look dramatic.

No alarms. No obvious failure.

Just small, everyday actions like:

- Pasting a message

- Summarizing a document

- Debugging code

Individually harmless.

But at scale?

That’s where AI security risks, data privacy concerns, and enterprise compliance gaps start to show up.

The shift isn’t about stopping AI.

It’s about making sure it’s used in a way

that doesn’t quietly put the business at risk.

Book A Demo: Click Here

Join our Slack: Click Here

Link to our GitHub: Click Here