Table of Contents

ToggleEnterprise AI adoption is no longer an experiment. It is becoming core to how decisions are made, how workflows run, and how products evolve.

But as adoption grows, a critical architectural decision often gets overlooked early on, whether to rely on a single model vendor or design for flexibility across models.

At first, sticking to one provider feels simple. One API, one billing system, one integration path. But over time, that simplicity turns into a constraint.

This blog breaks down why model flexibility matters, where vendor lock-in creates risk, and how platforms like LyzrGPT address this gap.

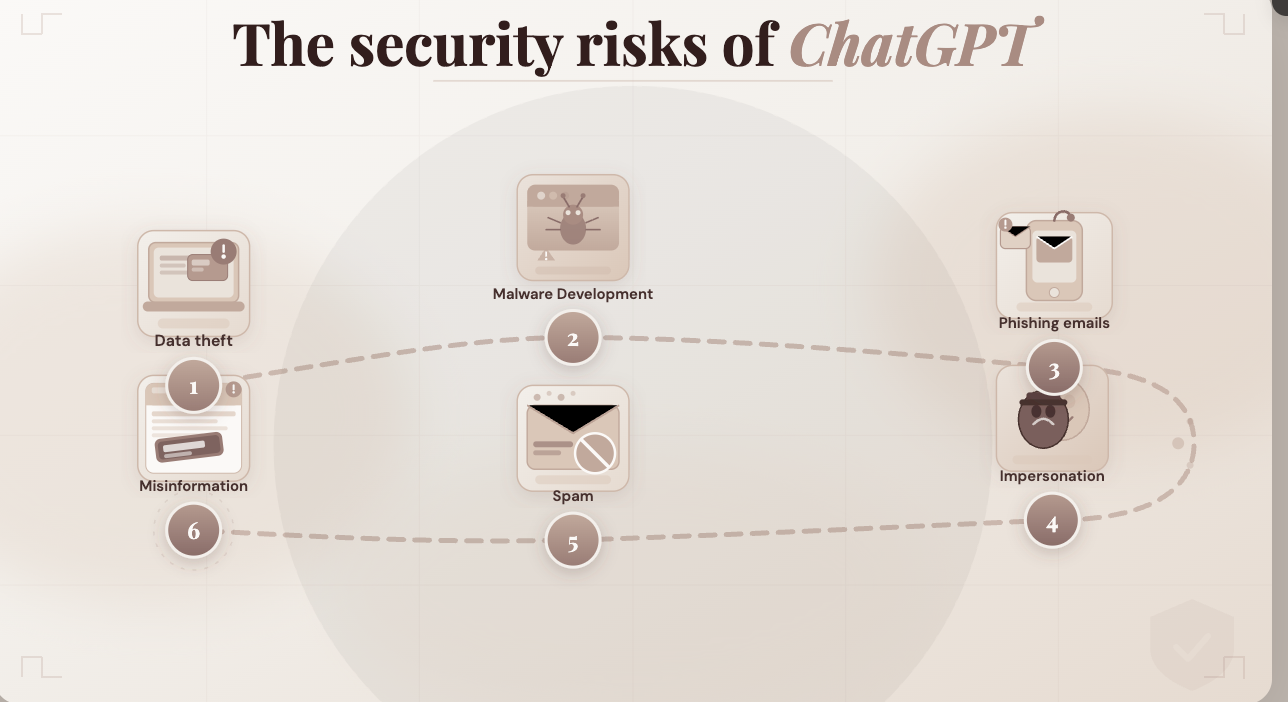

The Hidden Cost of Vendor Lock-In

Vendor lock-in in AI is not just about pricing. It impacts performance, adaptability, and long-term control.

What vendor lock-in looks like in practice

- All applications depend on a single LLM provider

- Switching models requires rework across codebases

- Teams optimize prompts for one model’s behavior

- Pricing changes directly impact margins

- New capabilities from other providers remain unused

The real impact

| Area | With Vendor Lock-In | With Model Flexibility |

| Cost control | Limited negotiation leverage | Ability to route to cost-efficient models |

| Performance | Fixed capability ceiling | Best model per use case |

| Innovation | Slower adoption of new models | Immediate experimentation |

| Risk | High dependency on one provider | Distributed risk |

| Customization | Constrained by one model’s behavior | Fine-tuned per workflow |

Why One Model Doesn’t Fit Every Use Case

Not all AI tasks are the same. Treating them as such leads to inefficiencies.

Example breakdown of enterprise use cases

| Use Case | Ideal Model Characteristics |

| Customer support automation | Fast, low-cost, high concurrency |

| Financial report generation | High accuracy, strong reasoning |

| Code generation | Structured output, context awareness |

| Document summarization | Balanced speed and coherence |

| Fraud detection analysis | Deep reasoning, pattern recognition |

Using a single model across all of these creates trade-offs.

Example scenario

A fintech company uses one premium model for everything:

- Customer support queries cost more than necessary

- Internal analytics tasks become expensive at scale

- Response latency increases during peak hours

With model flexibility:

- Support queries route to a lighter, faster model

- Financial analysis uses a high-reasoning model

- Internal tasks run on cost-efficient alternatives

Same system. Better allocation.

The Pace of Model Innovation Is Too Fast to Ignore

The AI ecosystem is evolving quickly. New models bring improvements in:

- Context length

- Reasoning ability

- Cost efficiency

- Latency

- Multimodal capabilities

Locking into one vendor means missing out on these improvements unless that vendor catches up.

What happens without flexibility

- Teams wait for their provider to release features

- Competitors adopt better models faster

- Migration becomes expensive and delayed

What happens with flexibility

- Teams test new models immediately

- Workloads shift dynamically based on performance

- Competitive advantage is maintained

Operational Challenges Without Model Flexibility

As systems scale, rigid model choices create operational friction.

Common challenges

1. Cost spikes

If pricing changes or usage increases, there is no fallback option.

2. Downtime risks

If a provider faces outages, systems fail without redundancy.

3. Performance limitations

Different tasks demand different strengths, which one model cannot cover consistently.

4. Engineering overhead

Switching models later requires:

- Rewriting prompts

- Adjusting outputs

- Retesting workflows

What Model Flexibility Actually Means

Model flexibility is not just about having multiple APIs. It is about intelligently orchestrating models based on context.

Core capabilities

| Capability | Description |

| Model routing | Select the best model per request |

| Fallback handling | Switch models during failures |

| Cost optimization | Balance performance and spend |

| Prompt abstraction | Write once, run across models |

| Evaluation layer | Compare outputs across models |

This approach shifts AI from static integration to dynamic infrastructure.

Real-World Example

Enterprise knowledge assistant

Without flexibility

- Uses one high-end model for all queries

- Cost per query remains high

- Simple queries consume unnecessary resources

With flexibility

| Query Type | Model Used |

| Basic FAQ | Lightweight model |

| Policy explanation | Mid-tier model |

| Complex compliance query | Advanced reasoning model |

Result:

- Reduced cost per interaction

- Faster responses for simple queries

- Higher accuracy for complex ones

The Strategic Shift Enterprises Need

AI is becoming infrastructure, not just a feature.

That means decisions made today will shape:

- Cost structure

- Product performance

- Ability to adapt

Relying on a single vendor creates a bottleneck at the infrastructure level.

Model flexibility removes that bottleneck.

Where LyzrGPT Fits In

This is where LyzrGPT comes into play.

Instead of forcing teams to choose one model, LyzrGPT is built around flexibility from the ground up.

What LyzrGPT enables

Unified model access

Access multiple leading models through a single interface without rewriting applications.

Intelligent routing

Automatically direct requests based on:

- Task complexity

- Cost constraints

- Latency requirements

Built-in fallback systems

If one model fails, another takes over without breaking workflows.

Prompt consistency

Abstract prompts so they work across models without constant adjustments.

How LyzrGPT Solves the Problem

| Challenge | Traditional Setup | With LyzrGPT |

| Switching models | Requires engineering effort | Instant configuration |

| Cost optimization | Manual tracking | Automated routing |

| Vendor dependency | High | Reduced |

| Performance tuning | Static | Dynamic |

| Scaling workloads | Expensive | Optimized per task |

Example Workflow with LyzrGPT

Scenario: Insurance claim processing

- User submits claim documents

- System extracts and summarizes data

- Risk analysis is performed

- Final report is generated

Without LyzrGPT

- One model handles all steps

- High cost and slower processing

- Limited optimization

With LyzrGPT

| Step | Model Strategy |

| Data extraction | Fast, cost-efficient model |

| Summarization | Balanced model |

| Risk analysis | High reasoning model |

| Report generation | Structured output model |

Outcome:

- Faster processing time

- Lower operational cost

- Improved accuracy where it matters

Closing Thoughts

Choosing a single model might work in early stages. But as AI becomes central to operations, that choice limits growth.

Model flexibility offers:

- Better cost control

- Higher performance across use cases

- Faster adoption of innovation

- Reduced dependency risk

LyzrGPT addresses this need by turning model selection into a dynamic layer rather than a fixed decision.

Instead of adapting workflows to fit a model, enterprises can adapt models to fit their workflows.

That shift changes how AI systems scale, evolve, and deliver value.

Book A Demo: Click Here

Join our Slack: Click Here

Link to our GitHub: Click Here