Here’s the scenario no IT leader wants to face: an employee runs sensitive HR data through an AI tool they found online. The model trains on it. You find out six months later, from your legal team, not from your monitoring system, because you didn’t have one.

This isn’t a hypothetical. It’s happening across enterprises right now, at different stages of chaos.

The good news? Most AI compliance problems are completely preventable, if you have the right checklist and you actually run through it before things escalate.

This guide is that checklist. We’ve broken it into 6 areas, with tables you can actually use in your next team meeting.

1. Why Most IT Teams Are Already Behind on AI Compliance

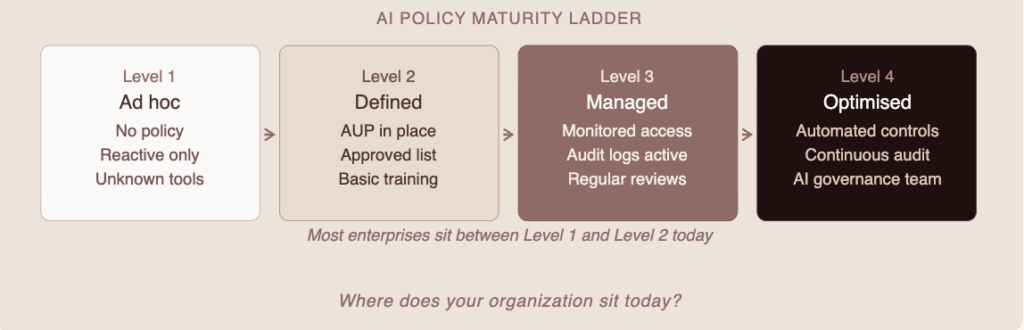

Let’s be direct. If your organization is using any AI tool (ChatGPT, Copilot, Claude, a custom LLM, anything) and you haven’t formalized a compliance framework yet, you’re operating on borrowed time.

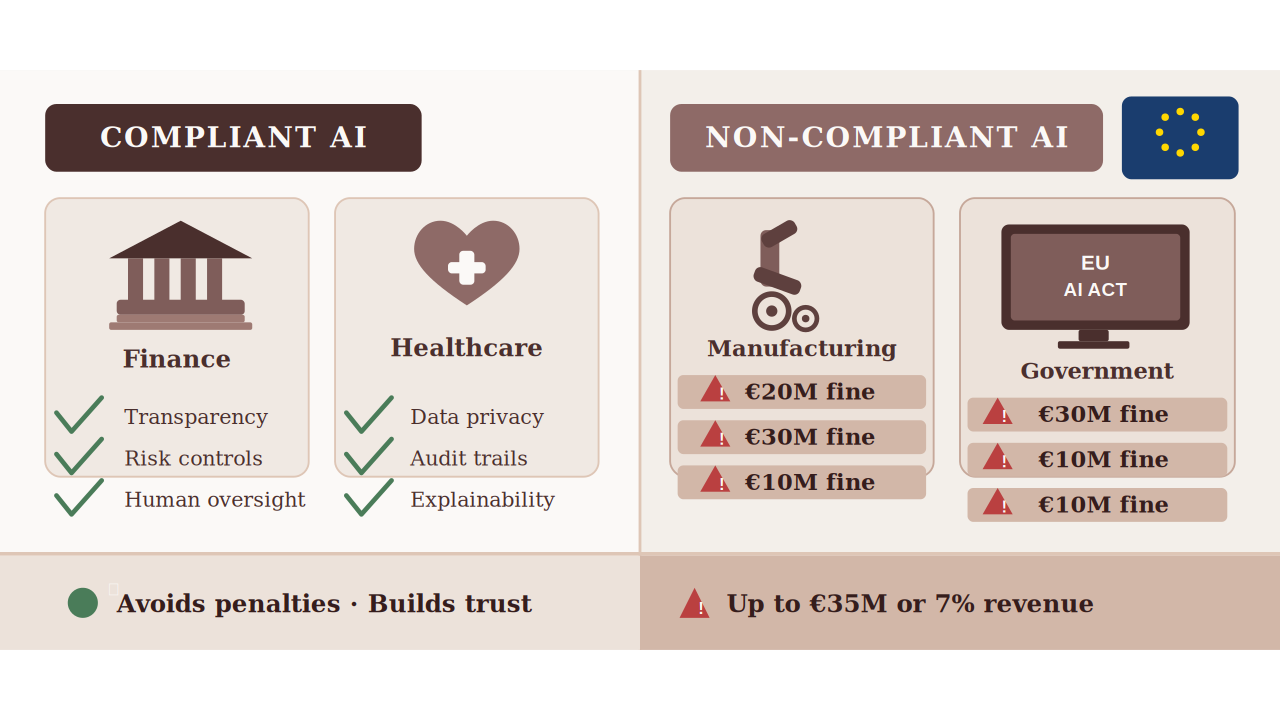

Regulations and audit frameworks like the EU AI Act, GDPR, HIPAA, and SOC 2 increasingly reach AI systems, whether by addressing them explicitly (the EU AI Act) or by covering the data they process. Auditors are asking about them. Boards are asking about them.

The question is no longer “do we need AI compliance?” It’s “how far behind are we?”

The core tension: AI adoption inside enterprises moves fast. Compliance frameworks move slowly. Your job is to close that gap before a regulator, auditor, or breach does it for you.

The good news is that building a solid AI compliance posture doesn’t require starting from scratch; it requires being systematic. Let’s walk through each layer.

2. Start Here: The AI Inventory You Probably Haven’t Done Yet

Before you can govern AI, you have to know where it’s running. Most enterprises significantly undercount this. Shadow AI, tools adopted by teams without IT’s knowledge, is rampant.

What a proper AI inventory looks like:

| Inventory Item | What to Capture | Priority |
| --- | --- | --- |
| All AI tools in active use | Tool name, vendor, department, use case | Critical |
| Data types each tool touches | PII, PHI, financial, IP, internal docs | Critical |
| Who approved each tool | IT-approved vs. shadow AI vs. unknown | Critical |
| Vendor data retention policies | Does the vendor train on your data? For how long? | High |
| User access levels | Who has access: role-based or open? | High |
| Integration touchpoints | What internal systems does each AI connect to? | High |
| Output storage / logging | Are AI outputs saved? Where? | Medium |

Quick sanity check: Ask 10 employees across different teams what AI tools they use regularly. If more than 3 names come up that aren’t in your IT inventory, you have a shadow AI problem that needs immediate attention.
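The sanity check above boils down to a set difference between the tools people say they use and the tools IT has on record. Here is a minimal sketch, assuming survey answers arrive as lists of tool names and the inventory is a list of names. The function name, the normalization rule, and the sample tool names are illustrative, not taken from any real product; the threshold of 3 comes from the rule of thumb above.

```python
def find_shadow_ai(survey_answers, inventory, threshold=3):
    """Return (unlisted_tools, needs_attention) for a quick shadow AI check.

    survey_answers: one list of tool names per employee surveyed.
    inventory: tool names already recorded in the IT inventory.
    threshold: more than this many unlisted names signals a problem.
    """
    def normalize(name):
        # Assumed normalization: ignore case and surrounding whitespace.
        return name.strip().lower()

    known = {normalize(name) for name in inventory}
    mentioned = {normalize(name) for answers in survey_answers for name in answers}
    unlisted = sorted(mentioned - known)
    return unlisted, len(unlisted) > threshold


# Illustrative data only; the unlisted tool names are made up.
inventory = ["ChatGPT", "Copilot"]
survey_answers = [
    ["ChatGPT", "NotesBot"],
    ["copilot", "SlideGenie", "NotesBot"],
    ["Claude", "TranscribeIt"],
]

unlisted, needs_attention = find_shadow_ai(survey_answers, inventory)
print(unlisted)         # names staff mentioned that the inventory lacks
print(needs_attention)  # True when more than `threshold` unlisted names appear
```

Real survey data is messier than this (nicknames, misspellings, product tiers), so treat the output as a prompt for follow-up conversations rather than a verdict.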

3. Data Governance: The Area Where Things Go Wrong Most Often

Data governance is where most AI compliance failures actually live. Not in the model and not in the output, but in what data gets fed into the system in the first place. Organizations can minimize these risks by adopting a scalable compliance management solution that enforces strict data governance and ensures only compliant data enters AI workflows.

Employees share more than you think. Compensation files, unreleased product roadmaps, patient records, legal documents: all of it has found its way into public AI tools at enterprise after enterprise. Not maliciously. Just… conveniently.

Your data governance checklist for AI:

| Control | Why It Matters | Priority |

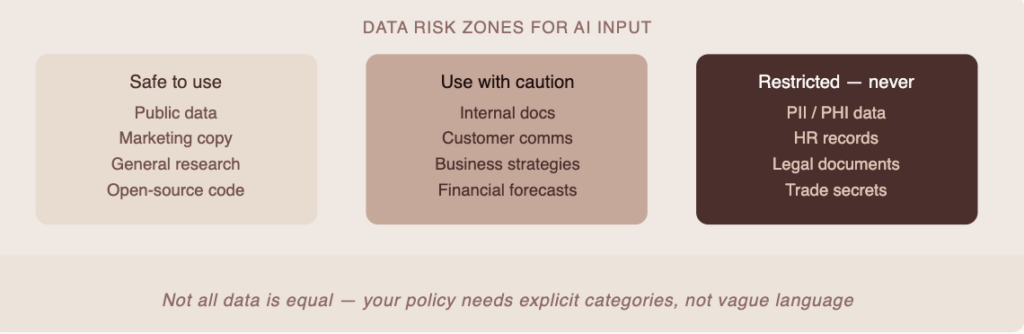

| Data classification policy updated to include AI use | Defines what can/can’t be inputted into AI tools | Must-have |

| DLP rules for AI endpoints | Prevents sensitive data from leaving via AI prompts | Must-have |

| Vendor DPA (Data Processing Agreement) in place | Legal protection, required for GDPR | Must-have |

| Data residency confirmed for each AI vendor | Where is your data being processed and stored? | High |

| Opt-out of model training confirmed (where available) | Many vendors allow this — most teams forget to ask | High |

| Retention schedules defined for AI-generated outputs | Do outputs count as business records? Often yes. | Medium |

| Right-to-deletion coverage for AI systems | Can you fulfil a GDPR deletion request across AI tools? | High |
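To make the “DLP rules for AI endpoints” row concrete, here is a rough sketch of a prompt check that blocks text matching sensitive patterns before it reaches an AI tool. Everything in it is an assumption for illustration: the two regular expressions, the pattern names, and the function names. Commercial DLP products use far richer detection than this, and your own rules would follow from your data classification policy.

```python
import re

# Illustrative patterns only; a real policy would cover many more data types.
SENSITIVE_PATTERNS = {
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "email": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
}


def scan_prompt(prompt):
    """Return the sorted names of sensitive patterns found in a prompt."""
    return sorted(
        name for name, pattern in SENSITIVE_PATTERNS.items()
        if pattern.search(prompt)
    )


def allow_prompt(prompt):
    """Allow the prompt only if no sensitive pattern matches."""
    return not scan_prompt(prompt)


risky = "Summarize the review for jane.doe@example.com, SSN 123-45-6789"
print(scan_prompt(risky))    # ['email', 'us_ssn']
print(allow_prompt(risky))   # False
print(allow_prompt("Draft a polite meeting reminder"))  # True
```

Pattern matching like this catches obvious identifiers but misses context-dependent data such as an unreleased roadmap, which is why the classification policy and training rows still matter.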

4. Risk and Access Controls: Because “Everyone Can Use It” Is Not a Policy

One of the most common mistakes in enterprise AI rollouts: treating AI tools like email. “Everyone gets access, figure it out.” That approach creates outsized risk, especially when AI tools are integrated with sensitive internal systems.

Access controls for AI need to follow the same logic as access controls for anything else: least privilege, role-based, and auditable.

Access and risk control checklist:

| Control | Notes | Risk Level |
| --- | --- | --- |
| Role-based access for AI tools defined | Not everyone needs full capability access | High |
| MFA enforced on all AI platform logins | Basic hygiene, non-negotiable | High |
| SSO integration for AI tools (where available) | Easier to revoke access when employees leave | High |
| AI tool usage logged and auditable | Audit logs required for SOC 2, ISO 27001 | High |
| Privileged access review for AI integrations | AI tools with API access to internal systems need scrutiny | Medium |
| Offboarding includes AI tool deprovisioning | Often missed in standard offboarding checklists | Medium |
| Contractor/vendor AI access scoped and time-limited | Third-party AI access is a growing audit point | Medium |
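The three properties named earlier (least privilege, role-based, auditable) can be sketched in a few lines. This is a toy model under stated assumptions: the role names, capability names, and the in-memory `audit_log` list are all hypothetical. In practice roles would come from your identity provider and log entries would go to an append-only store.

```python
from datetime import datetime, timezone

# Hypothetical role-to-capability mapping; unknown roles get nothing.
ROLE_CAPABILITIES = {
    "analyst": {"chat"},
    "engineer": {"chat", "code_assist"},
    "ai_admin": {"chat", "code_assist", "api_integration"},
}

audit_log = []  # stand-in for a real, tamper-resistant log store


def authorize(user, role, capability):
    """Allow a capability only if the role grants it, and log every decision."""
    allowed = capability in ROLE_CAPABILITIES.get(role, set())
    audit_log.append({
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "role": role,
        "capability": capability,
        "allowed": allowed,
    })
    return allowed


print(authorize("dana", "analyst", "chat"))             # True
print(authorize("dana", "analyst", "api_integration"))  # False
print(authorize("sam", "contractor", "chat"))           # False: role not defined
```

Logging denials as well as approvals is deliberate: a denied request for `api_integration` is exactly the kind of event an access review wants to see.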

5. Regulatory Alignment: Mapping Your AI Stack to the Frameworks That Matter

The regulatory landscape for AI is evolving fast, but certain frameworks are already directly affecting enterprise IT teams. Here’s a practical map of what applies to whom, along with the key AI-specific controls each one requires.

| Framework | Who It Affects | Key AI Requirement | Where IT Leads |
| --- | --- | --- | --- |
| EU AI Act | Any org using “high-risk” AI in EU | Risk classification, transparency, logging | Risk assessment, documentation |
| GDPR | Any org handling EU resident data | Automated decision-making disclosure, data minimization | Data governance, vendor DPAs |
| HIPAA | Healthcare organizations (US) | PHI must not be inputted into non-BAA AI tools | Tool approval, BAA procurement |
| SOC 2 | SaaS and tech companies (US) | AI systems in scope for availability, confidentiality | Audit logging, access controls |
| ISO 27001 | Enterprises seeking certification | AI as part of information security risk management | Risk register, controls mapping |
| CCPA | Orgs with CA consumer data (US) | AI-driven profiling disclosure requirements | Privacy notices, data mapping |

Practical tip: Start with the 1–2 frameworks most relevant to your industry and geography. Build controls around those first, then expand. Trying to be compliant with everything simultaneously usually results in being compliant with nothing.
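One way to pick those first 1–2 frameworks is to encode the “Who It Affects” column as a simple lookup. The sketch below does only that. The `OrgProfile` fields are simplified, hypothetical yes/no flags, and real applicability depends on details this model ignores, so use it to shortlist candidates for a conversation with legal rather than to settle the question.

```python
from dataclasses import dataclass


@dataclass
class OrgProfile:
    # Simplified, hypothetical flags based on the table's "Who It Affects" column.
    uses_high_risk_ai_in_eu: bool = False
    handles_eu_resident_data: bool = False
    us_healthcare: bool = False
    saas_or_tech: bool = False
    seeking_iso_certification: bool = False
    handles_ca_consumer_data: bool = False


# Each entry mirrors one row of the table: (framework, profile flag).
FRAMEWORK_TRIGGERS = [
    ("EU AI Act", "uses_high_risk_ai_in_eu"),
    ("GDPR", "handles_eu_resident_data"),
    ("HIPAA", "us_healthcare"),
    ("SOC 2", "saas_or_tech"),
    ("ISO 27001", "seeking_iso_certification"),
    ("CCPA", "handles_ca_consumer_data"),
]


def candidate_frameworks(profile):
    """Return frameworks whose trigger flag is set, in table order."""
    return [name for name, flag in FRAMEWORK_TRIGGERS if getattr(profile, flag)]


clinic_software_vendor = OrgProfile(us_healthcare=True, saas_or_tech=True)
print(candidate_frameworks(clinic_software_vendor))  # ['HIPAA', 'SOC 2']
```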

6. Policy, Training, and the Human Layer: Because Your People Are the Biggest Variable

You can have perfect technical controls and still have a compliance failure, because someone, a well-meaning employee who just wanted to move faster, did something your policy didn’t anticipate.

The human layer of AI compliance is often treated as an afterthought. It shouldn’t be. It’s where most real-world incidents originate.

The policy and training checklist:

| Item | What “Done” Looks Like | Priority |
| --- | --- | --- |
| AI Acceptable Use Policy published | Written, approved, accessible to all staff | Critical |
| Approved AI tools list maintained | Living document, updated when tools are added/removed | Critical |
| Policy covers what data cannot be shared with AI | Explicit categories, not vague language | Critical |
| AI compliance training for all employees | Role-specific scenarios, not a generic 45-minute e-learning module | High |
| Incident reporting process for AI misuse defined | Employees know how to flag issues without fear | High |
| AI output review requirements defined by role | Especially for customer-facing or regulated outputs | High |
| AI ethics/bias policy documented | Especially for AI used in hiring, lending, or scoring | Medium |
| AI policy review cadence set (e.g. quarterly) | The landscape changes fast; policies need to keep up | Medium |

7. How to Run Your First AI Compliance Review: A Practical Starting Point

If you’re reading this and thinking “we need to do all of this,” start here. You don’t need to fix everything at once. You need a structured starting point.

Here’s a 4-week sprint that gets you from zero to a working baseline:

Week 1 (Discover): Run the AI inventory exercise. Survey department heads. Use your SSO logs to find OAuth-connected apps. The goal is a complete picture of what’s running.

Week 2 (Classify): Map each tool to its data types and risk level. Flag anything touching PII, PHI, or confidential IP. These become your highest-priority action items.

Week 3 (Control): Implement quick wins: enforce MFA where missing, confirm vendor training opt-outs, and draft your Acceptable Use Policy if you don’t have one.

Week 4 (Document): Formalize your risk register, assign owners to each compliance area, and set a review cadence. This becomes your audit evidence if you ever need it.
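The Week 2 classification step is simple enough to sketch in code. The example below assumes your Week 1 inventory is a mapping from tool name to the data types it touches. The tool names, the data type labels, and the two-level risk scale are illustrative choices; only the rule of flagging PII, PHI, and confidential IP comes from the sprint plan above.

```python
# Data types the Week 2 step says to flag; the label spellings are assumed.
FLAGGED_DATA_TYPES = {"pii", "phi", "confidential_ip"}


def classify_tool(data_types):
    """Return 'high' if the tool touches any flagged data type, else 'standard'."""
    touched = {data_type.lower() for data_type in data_types}
    return "high" if touched & FLAGGED_DATA_TYPES else "standard"


def prioritize(inventory):
    """Return tool names with high-risk tools first, keeping input order otherwise."""
    return sorted(inventory, key=lambda name: classify_tool(inventory[name]) != "high")


# Illustrative inventory; the tool names are made up.
inventory = {
    "MeetingNotesBot": ["internal_docs"],
    "HRAssistant": ["PII", "internal_docs"],
    "CodeHelper": ["confidential_ip"],
}

for tool in prioritize(inventory):
    print(tool, classify_tool(inventory[tool]))
```

The ordered list this produces is a natural seed for the Week 4 risk register: each high-risk tool gets an owner and a review date first.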

The most common mistake: Treating this as a one-time project. AI compliance is an ongoing function. The tools change, regulations evolve, and your employee base does things you don’t expect. Build the habit, not just the checklist.

The Bottom Line

AI compliance for enterprise IT isn’t about slowing down AI adoption. It’s about making sure the adoption that’s already happening doesn’t create legal, regulatory, or reputational risk that blindsides you later.

The teams that get this right aren’t the most restrictive ones. They’re the ones who built clear policies, maintained visibility into their tools, and treated compliance as an enabler, not a blocker.

Start with the inventory. Build from there. The checklist above gives you everything you need to have a defensible AI governance posture, one that can withstand an audit, a board question, or an incident.
