Your AI agents just made 10,000 decisions while you were reading this sentence.

Some approved loans. Others responded to customers. A few flagged compliance risks. And you have no idea if any of them screwed up.

That’s the scary reality of autonomous AI. These systems are brilliant, fast, and occasionally catastrophically wrong. Without proper AI agent governance, you’re not building the future – you’re building liability.

Here’s what most companies get wrong: they treat AI governance like IT security from 2005. Box-checking exercises, compliance theater, policies nobody reads. Meanwhile, their agents are out there making real decisions that affect real people.

The companies winning right now? They’ve figured out how to balance autonomy with accountability. They’ve built governance frameworks that protect the business without strangling innovation.

Let’s talk about how they’re doing it.

What exactly is AI agent governance, anyway?

Think of AI agent governance as the rulebook, referee, and replay system all rolled into one. It’s how you make sure your autonomous agents play nice, stay compliant, and don’t accidentally create PR nightmares.

But here’s where it gets interesting: governance isn’t about control. Not really.

It’s about enabling scale. You can’t have humans reviewing every single agent decision when you’re processing thousands of transactions per hour. According to Gartner, 75% of enterprise AI projects fail because organizations can’t operationalize them safely. Governance is the missing link.

Good governance means your agents know their boundaries, log their reasoning, and escalate when they’re uncertain. It means you can audit any decision, explain any outcome, and prove to regulators that your AI isn’t a black box making arbitrary calls.

The framework includes policies, technical guardrails, monitoring systems, and human oversight protocols. Together, they create what forward-thinking teams call “responsible autonomy” – agents that can act independently within well-defined constraints.

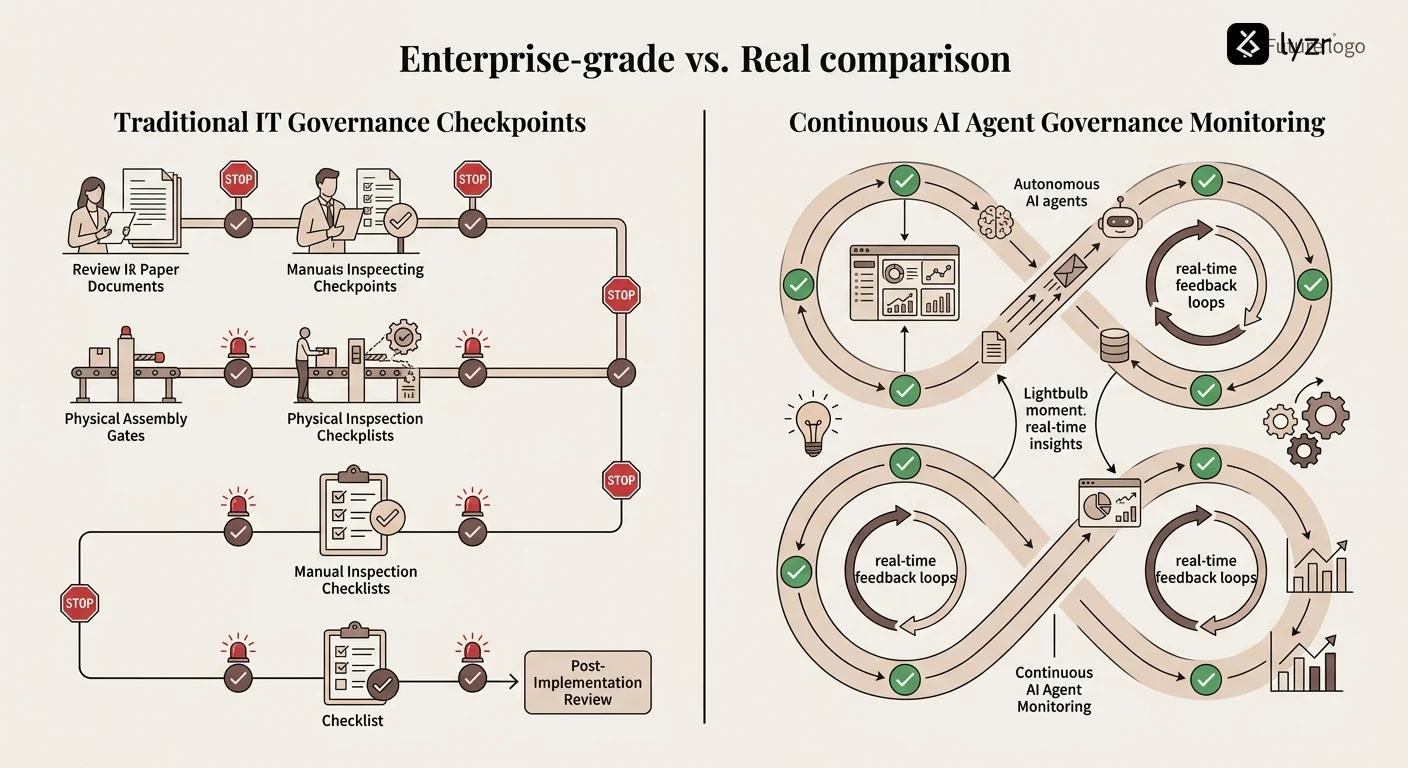

Why traditional compliance frameworks fail with AI agents

Your compliance team thinks they’ve got this covered. They took the existing IT governance playbook, slapped “AI” on the cover, and called it a day.

Spoiler: it won’t work.

Traditional frameworks were built for static systems – software that does what you programmed it to do, every single time. AI agents learn, adapt, and make contextual decisions. That changes everything.

When a traditional system fails, you can trace it back to a code bug or configuration error. When an AI agent produces an unexpected outcome, the root cause might be training data bias, model drift, or emergent behavior nobody anticipated.

Here’s what breaks: annual compliance reviews don’t cut it when your agent’s behavior shifts weekly based on new data. Static approval workflows collapse when agents need to make split-second decisions. One-size-fits-all policies fail when you’re deploying multiple specialized agents across different departments.

The financial services industry learned this the hard way. Early AI agents for loan approval made technically correct decisions that turned out to be ethically problematic or legally questionable. They followed the letter of the policy but missed the spirit.

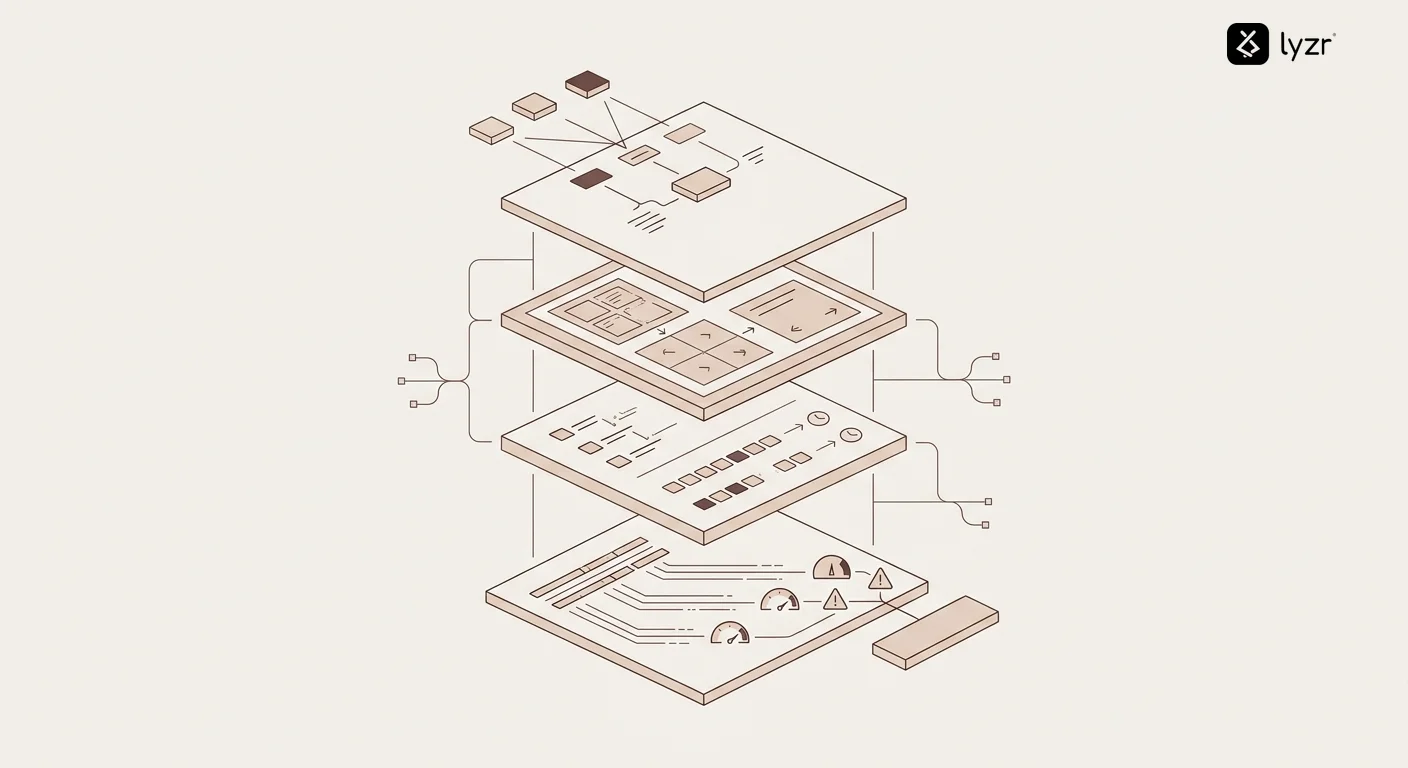

The four pillars of effective AI agent governance

Let’s get practical. After working with dozens of enterprises deploying autonomous agents, we see a clear pattern. Successful AI governance for enterprises rests on four foundational pillars.

Transparency: making the invisible visible

You can’t govern what you can’t see. Every agent decision needs a paper trail – not for bureaucracy’s sake, but for accountability.

This means logging inputs, reasoning processes, confidence scores, and outputs. When an AI agent in commercial banking denies a loan, there should be a clear chain of logic explaining why. Not just “the model said no” but “here are the specific factors, their weights, and how they combined to produce this outcome.”

The best systems capture decision context too. What was happening in the broader environment? Were there any edge cases or unusual patterns? Did the agent consider alternatives before reaching its conclusion?
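To make that paper trail concrete, here is a minimal sketch of what a single logged decision could look like. The `DecisionRecord` class and every field name in it are illustrative assumptions, not a standard schema; the point is only that inputs, weighted factors, confidence, output, and context travel together in one record.

```python
import json
from dataclasses import dataclass, field, asdict
from datetime import datetime, timezone


@dataclass
class DecisionRecord:
    """One logged agent decision. All field names are illustrative assumptions."""
    agent_id: str
    inputs: dict
    factors: dict       # factor name -> weight it contributed to the outcome
    confidence: float   # 0.0 to 1.0, as reported by the agent
    output: str
    alternatives: list = field(default_factory=list)  # options considered but rejected
    context: dict = field(default_factory=dict)       # e.g. edge-case flags
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json(self) -> str:
        # sort_keys keeps the serialized form stable for diffing and audits
        return json.dumps(asdict(self), sort_keys=True)

    def top_factors(self, n: int = 3) -> list:
        # Rank by absolute weight so strong negative factors also surface
        return sorted(self.factors, key=lambda k: abs(self.factors[k]), reverse=True)[:n]


# Hypothetical loan denial, recorded with its reasoning
record = DecisionRecord(
    agent_id="loan-agent-01",
    inputs={"requested_amount": 250000, "annual_revenue": 400000},
    factors={"debt_to_income": -0.6, "credit_history": 0.2, "collateral": 0.1},
    confidence=0.82,
    output="deny",
    alternatives=["approve_with_reduced_amount"],
)
```

Calling `record.top_factors(2)` surfaces the two heaviest factors behind the decision, which is the kind of answer "the model said no" never gives you.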

Accountability: who’s responsible when agents fail?

Here’s an uncomfortable question: if your AI agent makes a mistake that costs someone their job, their loan, or their trust, who takes the heat?

Good governance establishes clear ownership chains. Every agent has a human sponsor responsible for its behavior. That person doesn’t micromanage every decision, but they own the outcomes.

According to research from Stanford University, organizations with explicit AI accountability frameworks experience 40% fewer governance incidents. The difference? Someone’s name is on the line, which focuses attention wonderfully. This matters even more when you work with an external AI development company, because accountability must be clearly defined across both internal teams and outside partners.

Accountability also means escalation protocols. Agents should recognize when they’re operating outside their competency zone and bump decisions to human judgment. Think of it as knowing when to phone a friend.

Control: guardrails that enable instead of restrict

The temptation is to lock everything down. Create so many rules that agents can barely breathe. That’s not governance – that’s paralysis.

Smart control mechanisms work like bumpers in a bowling alley. They keep the ball in play while preventing gutter balls. Your AI agents for lead qualification should have freedom to experiment with messaging and timing, but hard limits on what data they access and what commitments they make.

This includes technical controls like API rate limits, data access restrictions, and action boundaries. It also includes policy controls – guidelines that help agents navigate gray areas without constant human intervention.

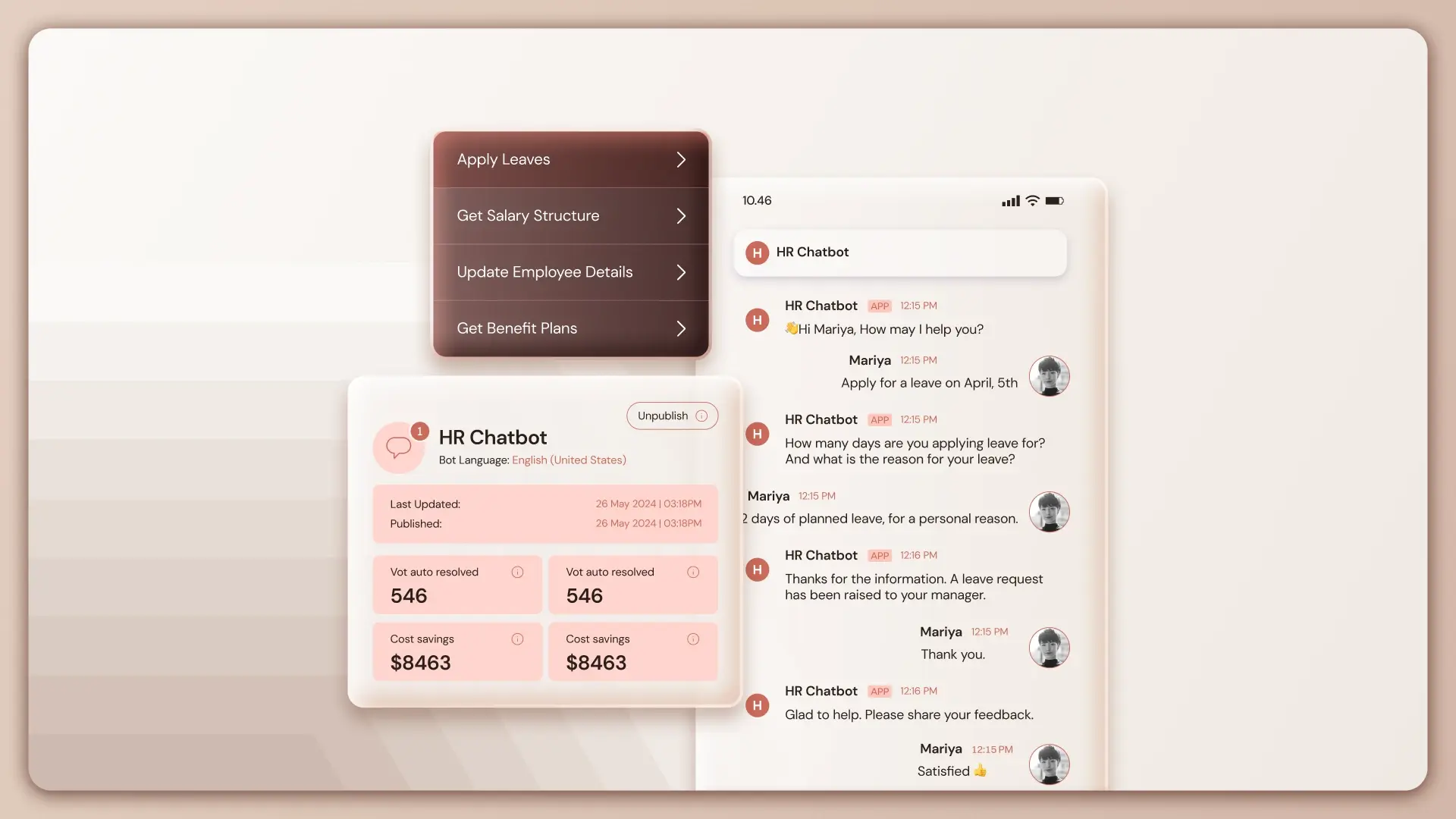

The Lyzr Agent Control Plane approach treats control as a dynamic system, not a static rulebook. As agents demonstrate reliability in certain contexts, their autonomy can expand. When they struggle, guardrails tighten automatically.
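The idea of guardrails that loosen with reliability and tighten after failures can be sketched in a few lines. This is a hypothetical illustration, not the Lyzr Agent Control Plane API: the `Guardrail` class, the 1.05 and 0.5 adjustment factors, and the floor and ceiling values are all assumptions chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class Guardrail:
    """Hypothetical guardrail: a hard action allowlist plus an adjustable limit."""
    allowed_actions: frozenset
    max_commitment: float        # largest commitment the agent may make unaided
    floor: float = 100.0         # the limit never tightens below this
    ceiling: float = 10_000.0    # the limit never expands above this

    def permits(self, action: str, amount: float = 0.0) -> bool:
        # Hard boundary: actions outside the allowlist are always rejected
        if action not in self.allowed_actions:
            return False
        return amount <= self.max_commitment

    def record_outcome(self, success: bool) -> None:
        # Expand slowly on success, tighten sharply on failure (assumed factors)
        factor = 1.05 if success else 0.5
        adjusted = self.max_commitment * factor
        self.max_commitment = min(self.ceiling, max(self.floor, adjusted))


rail = Guardrail(
    allowed_actions=frozenset({"send_email", "offer_discount"}),
    max_commitment=500.0,
)
```

The asymmetry is deliberate in this sketch: trust is earned in small steps and lost in large ones, while the allowlist stays fixed no matter how well the agent performs.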

Continuous monitoring: because set-it-and-forget-it is dead

Remember when you deployed software and it just… worked? Same behavior, same outputs, predictable and boring?

AI agents aren’t like that. They drift. Their performance changes as data distributions shift. A customer service AI agent that’s brilliant in January might start giving weird answers in March because customer language patterns evolved.

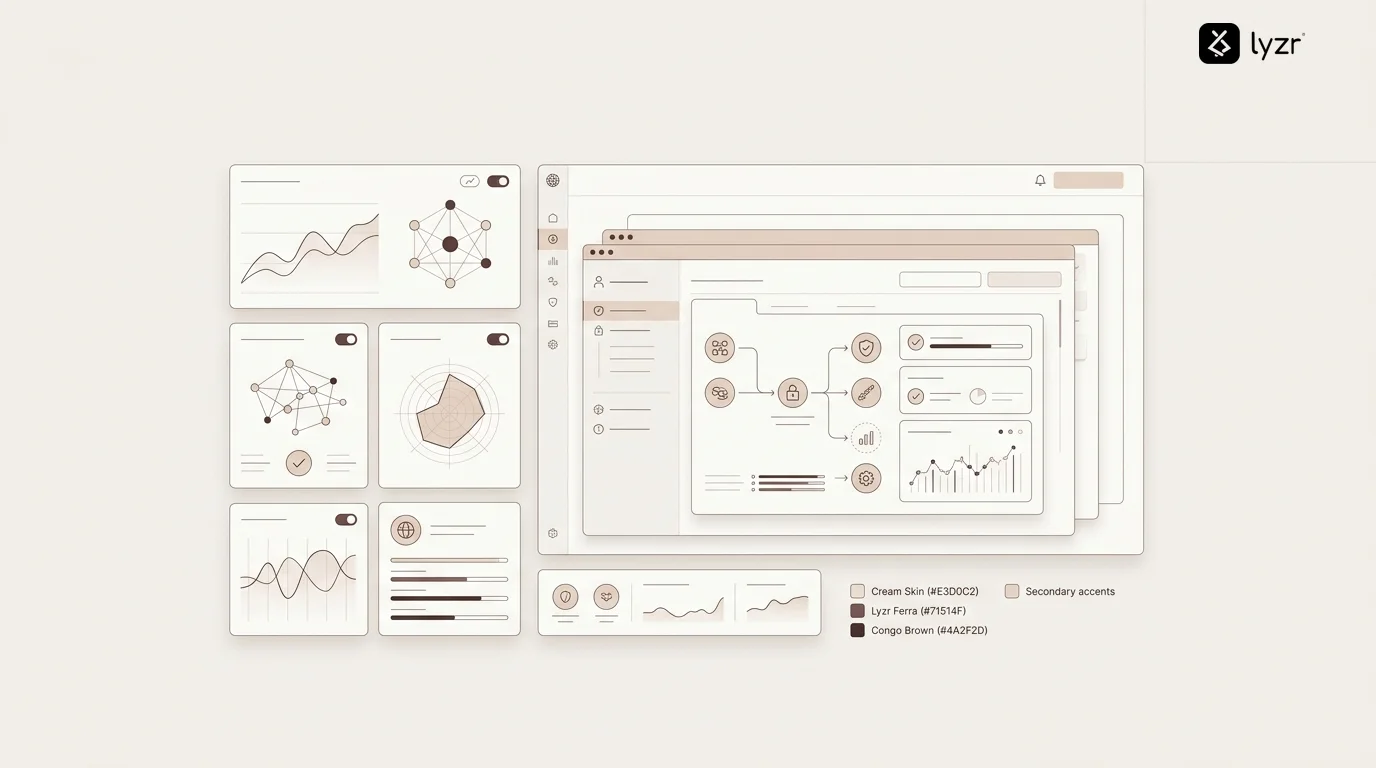

Continuous monitoring means real-time dashboards tracking key metrics: decision accuracy, confidence levels, escalation rates, user satisfaction, compliance violations, and bias indicators. When metrics drift outside acceptable ranges, alerts fire and humans investigate.
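A rough sketch of the "alerts fire when metrics drift" step might look like the following. The metric names and the acceptable ranges are made-up assumptions; a real deployment would pull both from its own monitoring stack.

```python
def check_metrics(metrics: dict, ranges: dict) -> list:
    """Return an alert message for every metric outside its (low, high) range."""
    alerts = []
    for name, (low, high) in ranges.items():
        value = metrics.get(name)
        if value is None:
            # A silent metric is treated as a problem, not as a pass
            alerts.append(f"{name}: no data reported")
        elif not low <= value <= high:
            alerts.append(f"{name}: {value} outside [{low}, {high}]")
    return alerts


# Assumed acceptable ranges, for illustration only
ACCEPTABLE = {
    "decision_accuracy": (0.95, 1.0),
    "escalation_rate": (0.01, 0.10),
    "compliance_violations": (0, 0),
}

alerts = check_metrics(
    {"decision_accuracy": 0.91, "escalation_rate": 0.04, "compliance_violations": 0},
    ACCEPTABLE,
)
```

Note that the escalation rate has a lower bound too. An agent that never escalates is as suspicious as one that escalates everything.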

But monitoring isn’t just about catching failures. It’s about spotting opportunities. Maybe your agent discovered a more efficient workflow. Maybe it’s handling a use case you didn’t anticipate. Good monitoring captures the positive surprises too.

How do you ensure AI agents follow company policies?

This is where most companies face their first real crisis. You’ve written beautiful policies about ethical AI use, data privacy, and responsible automation. Your agents ignore them spectacularly.

Not out of malice – they just don’t understand abstract policies the way humans do. “Be fair” means nothing to an algorithm. “Respect customer privacy” is ambiguous. “Use good judgment” is completely useless.

The solution? Translate policies into executable constraints. Instead of “don’t discriminate,” implement technical checks that flag decisions correlated with protected characteristics. Instead of “maintain transparency,” require agents to generate explanations meeting specific criteria.
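As one possible translation of "don’t discriminate" into something executable, the sketch below compares approval rates across groups and flags any group whose rate falls below 80% of the best-performing group’s rate. That 0.8 default follows the "four-fifths" rule of thumb from US employment guidance; treating it as the right threshold for your domain is an assumption, and the function names are hypothetical.

```python
from collections import defaultdict


def approval_rates(decisions):
    """decisions: iterable of (group, approved) pairs -> {group: approval rate}."""
    totals, approved = defaultdict(int), defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        approved[group] += int(ok)
    return {g: approved[g] / totals[g] for g in totals}


def disparate_impact_flags(decisions, threshold=0.8):
    """Flag groups whose approval rate is below `threshold` times the highest rate."""
    rates = approval_rates(decisions)
    if not rates:
        return []
    best = max(rates.values())
    if best == 0:
        return []  # nobody was approved, so the ratio is undefined
    return sorted(g for g, r in rates.items() if r / best < threshold)


# Synthetic data: group A approved 8 of 10 times, group B 5 of 10
sample = (
    [("A", True)] * 8 + [("A", False)] * 2
    + [("B", True)] * 5 + [("B", False)] * 5
)
flags = disparate_impact_flags(sample)
```

A flag here is a prompt for human investigation, not proof of discrimination. The check only sees outcome rates, not the reasons behind them.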

Forward-thinking teams are building AI agents specifically for AI governance. These meta-agents monitor other agents, checking for policy violations, detecting drift, and ensuring consistency. It’s like having a compliance officer who never sleeps and reviews every decision.

You also need regular policy audits. As your business evolves, policies should too. A quarterly review ensures your governance framework keeps pace with operational reality.

What compliance risks do autonomous AI agents create?

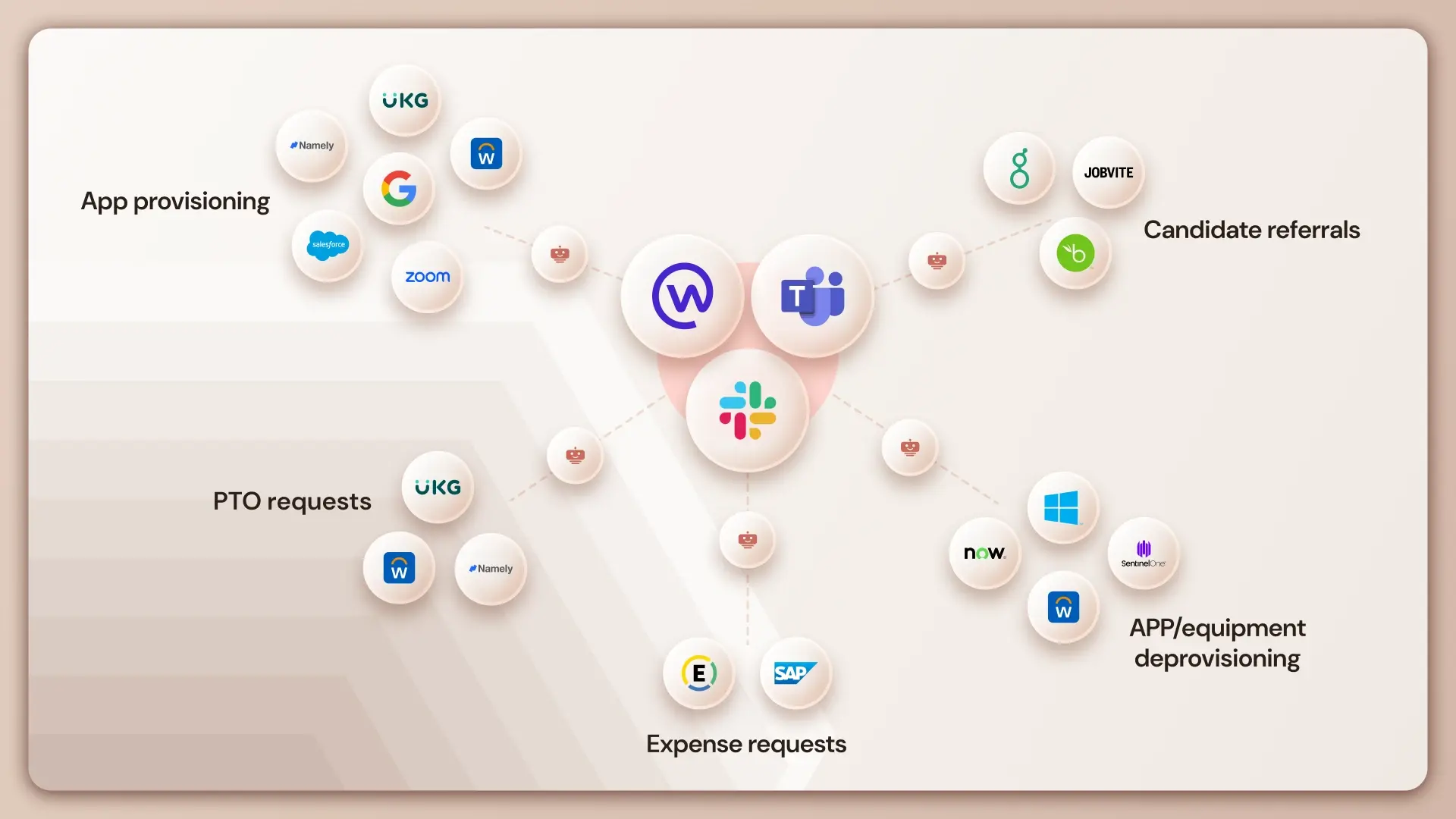

Let’s talk about what keeps legal teams up at night. Autonomous agents touch virtually every regulatory framework: data privacy (GDPR, CCPA), financial regulations (SOX, Dodd-Frank), industry-specific rules (HIPAA for healthcare, FCRA for credit), and emerging AI-specific legislation.

The biggest risk? Automated discrimination. An agent optimizing for efficiency might inadvertently create patterns that violate fair lending laws or employment regulations. It’s not intentional bias – it’s mathematical optimization producing legally problematic outcomes.

Data handling is another minefield. Agents accessing customer information need ironclad controls. According to Federal Trade Commission guidelines, companies remain liable for their agents’ data practices even when decisions are fully automated.

Then there’s explainability. The EU’s AI Act and similar regulations require organizations to explain automated decisions affecting individuals. If your AI agent for email marketing can’t articulate why it excluded someone from a campaign, you’ve got a problem.

Cross-border complications multiply everything. An agent deployed globally might comply with US regulations while violating European or Asian rules. Geographic policy enforcement becomes critical.

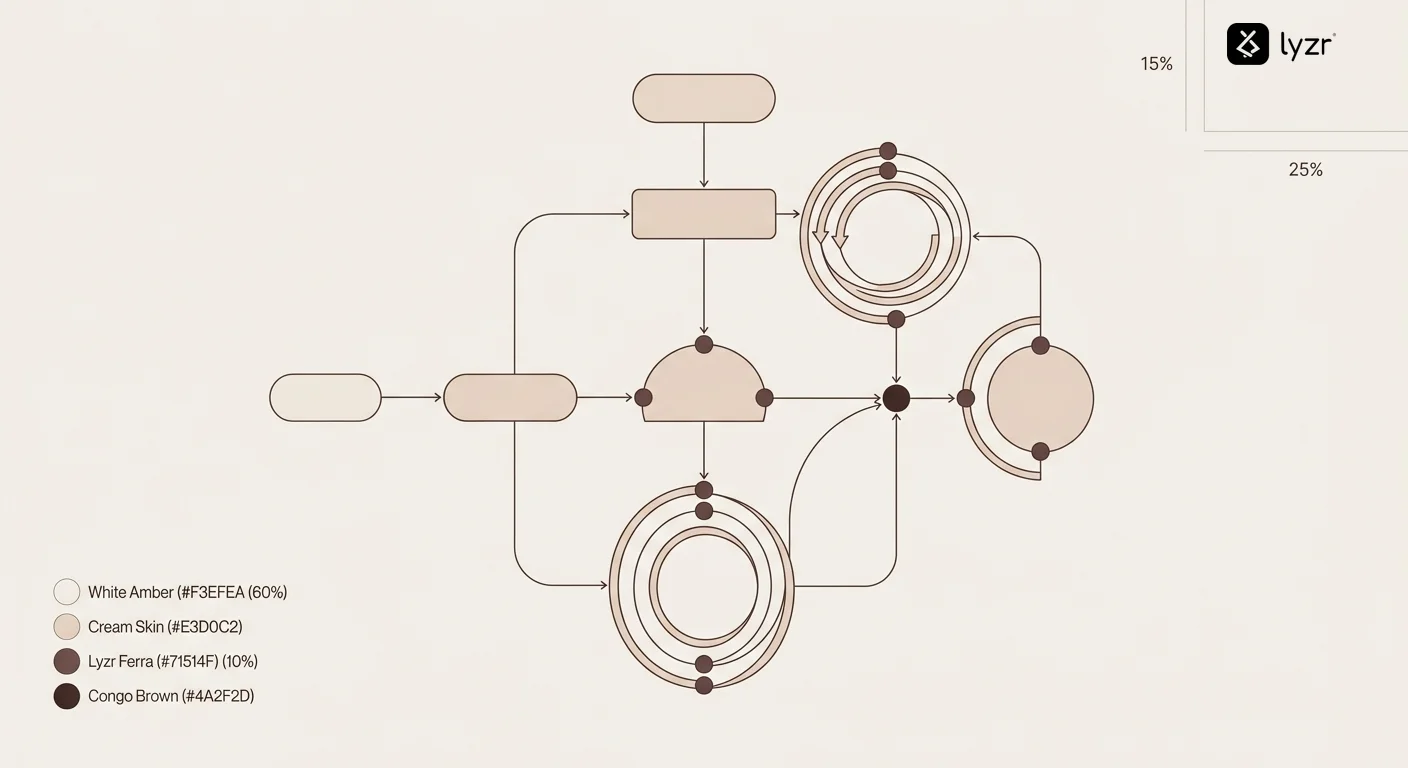

Building governance into your AI agent architecture

Here’s the dirty secret: you can’t bolt governance onto agents after deployment. It has to be architectural from day one.

Start with modular design. Each agent should have clear boundaries – specific tasks it handles, specific data it accesses, specific actions it can take. When problems emerge, modular architecture lets you isolate and fix without disrupting the entire system.

Build in logging at the infrastructure level. Every API call, every data access, every decision gets recorded automatically. Not as an afterthought feature, but as fundamental system behavior. Using platforms that deploy autonomous agents on AWS with governance built in saves countless headaches.

Implement permission systems that work like least-privilege access in security. Agents start with minimal capabilities and earn expanded permissions through demonstrated reliability. A new AI agent for content creation might initially require human approval for publication. After 1,000 successful pieces, it graduates to autonomous publishing with spot-check audits.
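The graduation path described above can be sketched as a small state holder. Everything here is hypothetical: the class name, the spot-check interval of one in twenty, and especially the choice to reset the count after a rejected piece, which is a stricter rule than the text requires.

```python
from dataclasses import dataclass


@dataclass
class PublishingPermission:
    """Hypothetical earned-autonomy tracker for a content agent."""
    graduation_threshold: int = 1000  # approved pieces needed before autonomy
    spot_check_every: int = 20        # assumed audit sampling interval
    approved_count: int = 0
    published_autonomously: int = 0

    @property
    def autonomous(self) -> bool:
        return self.approved_count >= self.graduation_threshold

    def record_review(self, approved: bool) -> None:
        # Assumption: a rejection resets progress, so autonomy must be re-earned
        self.approved_count = self.approved_count + 1 if approved else 0

    def next_step(self) -> str:
        """Decide how the next piece should be handled."""
        if not self.autonomous:
            return "human_approval"
        self.published_autonomously += 1
        if self.published_autonomously % self.spot_check_every == 0:
            return "publish_and_spot_check"
        return "publish"
```

With the defaults, an agent stays on `"human_approval"` until 1,000 consecutive reviews pass, then every twentieth autonomous piece is routed to a spot-check audit.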

Version control for agent behavior is non-negotiable. When you update an agent’s training data, decision logic, or parameters, that’s a new version. You should be able to roll back to previous versions if the update causes problems.
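Here is a minimal sketch of what versioning agent behavior could look like, assuming the agent’s behavior is fully described by a configuration dictionary (model name, thresholds, prompt, and so on). The `AgentVersionRegistry` class is hypothetical, and a real system would persist history rather than keep it in memory.

```python
import hashlib
import json


class AgentVersionRegistry:
    """Hypothetical in-memory registry of agent configurations."""

    def __init__(self):
        self._versions = []   # append-only history of (version_id, config)
        self._active = None   # index of the currently deployed version

    def publish(self, config: dict) -> str:
        # Derive the version id from the content, so identical configs match
        blob = json.dumps(config, sort_keys=True).encode()
        version_id = hashlib.sha256(blob).hexdigest()[:12]
        self._versions.append((version_id, config))
        self._active = len(self._versions) - 1
        return version_id

    def rollback(self) -> str:
        """Reactivate the version published just before the active one."""
        if self._active is None or self._active == 0:
            raise RuntimeError("no earlier version to roll back to")
        self._active -= 1
        return self._versions[self._active][0]

    @property
    def active(self) -> dict:
        if self._active is None:
            raise RuntimeError("nothing has been published yet")
        return self._versions[self._active][1]
```

Because the history is append-only, a rollback never destroys the problematic version. It stays available for the post-mortem.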

Who owns AI agent governance in your organization?

This question starts more fights than you’d expect. IT wants ownership because agents are technical infrastructure. Legal demands control because compliance is their domain. Business units insist they should govern their own agents because they understand the context.

Everyone’s right, which means everyone’s wrong.

Effective governance requires a cross-functional AI governance board. Representatives from IT, legal, compliance, business operations, and risk management meet regularly to review agent performance, approve new deployments, and update policies.

Day-to-day oversight typically lives with a dedicated AI operations team. They monitor dashboards, respond to alerts, conduct routine audits, and coordinate with stakeholders. Think of them as mission control for your agent fleet.

Individual agent ownership stays with business units. The marketing team owns their AI agents for digital marketing. Finance owns their forecasting agents. They’re responsible for ensuring agents serve business objectives while operating within governance frameworks.

This distributed ownership model scales better than centralized control. It keeps governance connected to operational reality while maintaining consistent standards across the organization.

What tools enable effective AI agent governance?

Governance without tooling is wishful thinking. You need technical infrastructure that makes oversight possible without drowning teams in busywork.

Observability platforms designed for AI systems track agent behavior in real-time. Unlike generic monitoring tools, these understand AI-specific metrics like prediction confidence, model drift, and decision consistency.

Policy enforcement engines translate human-readable rules into technical constraints. When you update a compliance policy, these tools automatically propagate changes to all affected agents. No manual reconfiguration needed.

Audit trail systems create immutable records of agent decisions. When regulators come asking questions three years from now, you can reconstruct exactly what happened, why, and who was responsible.
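One common way to approximate immutability in software is a hash chain, where each entry’s hash covers the previous entry’s hash. The sketch below is tamper-evident rather than truly immutable (real immutability also needs write-once storage), and the `AuditTrail` class is a hypothetical illustration rather than any particular product’s design.

```python
import hashlib
import json


class AuditTrail:
    """Hypothetical hash-chained log: editing any entry breaks verification."""

    GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

    def __init__(self):
        self.entries = []

    @staticmethod
    def _digest(prev_hash: str, record: dict) -> str:
        payload = json.dumps(record, sort_keys=True)
        return hashlib.sha256((prev_hash + payload).encode()).hexdigest()

    def append(self, record: dict) -> str:
        prev = self.entries[-1]["hash"] if self.entries else self.GENESIS
        digest = self._digest(prev, record)
        self.entries.append({"record": record, "prev": prev, "hash": digest})
        return digest

    def verify(self) -> bool:
        """Recompute the chain and confirm every stored hash still matches."""
        prev = self.GENESIS
        for entry in self.entries:
            if entry["prev"] != prev:
                return False
            if entry["hash"] != self._digest(prev, entry["record"]):
                return False
            prev = entry["hash"]
        return True
```

If anyone edits an old record after the fact, `verify()` returns `False`, which is exactly the property you want when a regulator asks whether the log can be trusted.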

Bias detection tools continuously test agent outputs for discriminatory patterns. They run synthetic scenarios checking whether decisions vary inappropriately across demographic groups.
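A simple version of this synthetic testing is a counterfactual check: hold every input constant, vary only the demographic attribute, and see whether the decision changes. In the sketch below, `biased_agent` is a deliberately flawed stand-in for a real agent, and the attribute names and scoring rule are invented for the example.

```python
def counterfactual_failures(decide, applicants, attribute, values):
    """Return applicants whose decision changes when only `attribute` varies."""
    failures = []
    for applicant in applicants:
        # Run the same applicant once per attribute value and collect outcomes
        outcomes = {decide({**applicant, attribute: v}) for v in values}
        if len(outcomes) > 1:
            failures.append(applicant)
    return failures


def biased_agent(applicant: dict) -> str:
    """Stand-in agent with an intentional penalty for group B (for demonstration)."""
    score = applicant["income"] / 1000 - (5 if applicant["group"] == "B" else 0)
    return "approve" if score >= 50 else "deny"


synthetic = [
    {"income": 52000, "group": "A"},  # near the decision boundary
    {"income": 90000, "group": "A"},  # comfortably above it
]
failures = counterfactual_failures(biased_agent, synthetic, "group", ["A", "B"])
```

Only the borderline applicant is caught, which is typical: this kind of bias tends to show up near decision boundaries, so synthetic scenarios should be dense there.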

The most sophisticated setups use agent builder platforms with governance baked into the development workflow. Compliance checks happen automatically during agent creation, not as a separate approval step.

How do you balance agent autonomy with human oversight?

This is the central tension in AI agent governance. Too much oversight kills the efficiency gains that made you deploy agents in the first place. Too little creates unacceptable risk.

The answer lies in risk-based tiering. Low-stakes, high-frequency decisions run fully autonomous. Your AI agents for SEO don’t need human approval to optimize meta descriptions. High-stakes, low-frequency decisions require human review. Major policy changes or large financial commitments shouldn’t be fully automated.

The middle ground – moderate stakes, moderate frequency – uses confidence thresholds. When an agent is highly confident in its decision, it proceeds autonomously. When confidence drops below a threshold, it escalates to human review.

Smart systems adjust these thresholds dynamically. During high-risk periods (regulatory audits, system changes, market volatility), thresholds tighten and more decisions get human review. During stable periods, agents get more autonomy.
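Put together, the tiering and threshold logic is a small routing function. The tier names, the 0.85 default threshold, and the 0.10 tightening during high-risk periods are assumed values for illustration; the right numbers depend on your own risk appetite.

```python
from enum import Enum


class Tier(Enum):
    LOW = "low"            # low stakes, high frequency
    MODERATE = "moderate"  # the middle ground
    HIGH = "high"          # high stakes, low frequency


def route(tier: Tier, confidence: float, threshold: float = 0.85,
          high_risk_period: bool = False) -> str:
    """Decide whether a decision runs autonomously or goes to a human."""
    if tier is Tier.HIGH:
        return "human_review"   # always reviewed, regardless of confidence
    if tier is Tier.LOW:
        return "autonomous"     # never blocked on a human
    if high_risk_period:
        # Tighten the bar during audits, system changes, or market volatility
        threshold = min(0.99, threshold + 0.10)
    return "autonomous" if confidence >= threshold else "human_review"
```

For example, `route(Tier.MODERATE, 0.9)` proceeds autonomously under normal conditions, while the same call with `high_risk_period=True` escalates to a human.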

Human oversight should focus on edge cases, not routine operations. Your team reviews the 5% of decisions agents flag as uncertain, not the 95% they’re confident about. This concentrates human expertise where it matters most.

The role of agent testing in governance frameworks

You wouldn’t deploy software without testing it. Why would you deploy AI agents differently?

Pre-deployment testing runs agents through simulated scenarios covering normal operations, edge cases, and adversarial conditions. How does your AI agent for campaign automation behave when data quality degrades? When user behavior shifts unexpectedly? When someone tries to game the system?

Shadow mode deployment is your friend. Run the new agent alongside existing processes without giving it actual decision authority. Compare its recommendations to human decisions. This reveals divergence patterns before they cause real-world problems.
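The comparison step in shadow mode can be as simple as counting where the agent and the human disagreed. This sketch assumes you have already collected pairs of (human decision, agent recommendation) for the same cases; the function name and report shape are hypothetical.

```python
from collections import Counter


def shadow_report(pairs):
    """pairs: list of (human_decision, agent_recommendation) for the same cases."""
    total = len(pairs)
    if total == 0:
        return {"agreement_rate": None, "divergences": {}}
    # Count each distinct kind of disagreement, e.g. human denied, agent approved
    divergences = Counter((human, agent) for human, agent in pairs if human != agent)
    agreed = total - sum(divergences.values())
    return {"agreement_rate": agreed / total, "divergences": dict(divergences)}


report = shadow_report([
    ("approve", "approve"),
    ("deny", "approve"),
    ("deny", "deny"),
    ("deny", "approve"),
])
```

The breakdown matters more than the headline rate. In this toy data every disagreement runs the same direction (humans deny, the agent approves), which points to a systematic difference rather than random noise.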

Ongoing testing matters just as much. Regular synthetic testing injects controlled scenarios into production systems, verifying agents still behave as expected. It’s like fire drills – you practice emergency responses before emergencies happen.

A/B testing for agents helps you optimize governance itself. Try different guardrail configurations, monitoring approaches, or escalation thresholds. Measure impact on both performance and compliance. Governance should evolve based on evidence, not assumptions.

Common AI agent governance mistakes (and how to avoid them)

Let’s learn from others’ pain. These mistakes show up repeatedly across organizations deploying autonomous agents.

Mistake one: treating governance as a launch gate instead of an ongoing practice. Teams nail the initial compliance review, then forget about governance until something breaks. Governance needs continuous attention, not one-time approval.

Mistake two: optimizing for coverage instead of impact. Companies create elaborate governance procedures for low-risk agents while giving minimal oversight to high-risk ones. Focus your governance energy where it matters most.

Mistake three: governance by committee paralysis. When every decision requires approval from six departments, nothing happens. Good governance enables speed through clear frameworks, not endless meetings.

Mistake four: ignoring the humans in the loop. Your agents don’t operate in isolation – they interact with employees, customers, and partners. Governance frameworks that forget the human element create friction and resistance.

Mistake five: one-size-fits-all governance. The same controls appropriate for a LinkedIn marketing agent make no sense for a financial forecasting agent. Different agents need different governance approaches based on their risk profiles and use cases.

Future-proofing your AI agent governance strategy

AI moves fast. Regulatory frameworks are shifting quickly too. Your governance strategy needs flexibility baked in from the start.

Build for regulatory change. New AI regulations are coming – that’s not speculation, it’s reality. The EU AI Act is here. US states are crafting their own rules. Your governance framework should accommodate new requirements without complete overhauls.

Invest in explainability infrastructure. As regulations increasingly require transparency, the ability to explain any agent decision becomes non-negotiable. Systems that can’t generate comprehensible explanations will face compliance walls.

According to research from Brookings Institution, 67% of organizations underestimate the governance complexity of scaled AI deployments. They plan for today’s three agents, not next year’s thirty. Design governance systems that scale horizontally without proportional increases in overhead.

Stay vendor-neutral in your architecture. Building governance around a specific platform or tool creates lock-in. When better solutions emerge – and they will – you want the flexibility to adopt them without rebuilding everything.

Making AI agent governance actually work

Here’s what nobody tells you: perfect governance is impossible. You’re balancing competing priorities – speed versus safety, innovation versus compliance, autonomy versus control. Something will always feel suboptimal.

The goal isn’t perfection. It’s building systems that fail gracefully, catch problems early, and improve continuously.

Start small. Pick one high-value use case and build comprehensive governance around it. Learn what works in your organizational culture. Then expand to additional agents, refining your approach based on real experience.

Measure what matters. Track metrics that indicate governance health: decision accuracy, compliance violations, escalation rates, audit findings, stakeholder satisfaction. Use data to drive improvements, not gut feelings.

Create feedback loops. When agents make mistakes, feed those lessons back into training, policies, and guardrails. When governance blocks legitimate use cases, adjust your frameworks. Rigid systems break – adaptive ones evolve.

Smart teams are adopting platforms that make governance easier by design. Lyzr.ai builds AI agent infrastructure with governance controls integrated from the ground up – not as add-ons, but as core functionality. Policy enforcement, audit logging, decision transparency, and compliance monitoring work out of the box.

When you’re building agents through Lyzr Agent Studio, governance guardrails are configured alongside agent capabilities. You’re not choosing between speed and control – you get both.

The companies thriving with autonomous AI aren’t necessarily the ones with the most sophisticated algorithms. They’re the ones who figured out governance first, then scaled confidently on that foundation.

Your agents are going to make mistakes. That’s inevitable. The question is whether you’ll catch those mistakes before they compound, whether you can explain what happened when asked, and whether you can prevent similar issues going forward.

AI agent governance isn’t a project with a finish line. It’s an ongoing practice that evolves with your business, your agents, and the regulatory landscape. Get the fundamentals right now, and you’ll be ready for whatever comes next.

TL;DR: AI Agent Governance Essentials

- AI agent governance balances autonomy with accountability through transparent decision logging, clear ownership chains, smart guardrails, and continuous monitoring.

- Traditional IT compliance frameworks fail with AI agents because they can’t handle learning systems that adapt and drift over time.

- Effective governance requires translating abstract policies into executable technical constraints that agents can actually follow.

- Risk-based tiering allows low-stakes decisions to run autonomously while requiring human review for high-impact choices.

- Building governance into agent architecture from day one prevents the nightmare of retrofitting controls onto deployed systems.

- Cross-functional governance boards with representatives from IT, legal, compliance, and business units make better decisions than siloed approaches.

Action Checklist: Implementing AI Agent Governance

- Audit your current AI agents and map their decision authorities, data access, and risk levels

- Establish a cross-functional AI governance board with clear decision-making authority

- Implement comprehensive logging infrastructure that captures every agent decision with full context

- Translate your company policies into executable technical constraints for agent behavior

- Set up real-time monitoring dashboards tracking decision accuracy, compliance metrics, and escalation rates

- Create risk-based escalation thresholds that balance autonomy with oversight

- Deploy shadow mode testing before giving new agents full decision authority

- Schedule quarterly governance reviews to assess effectiveness and adjust frameworks

- Build feedback loops that turn governance failures into systematic improvements

Frequently Asked Questions

What’s the difference between AI governance and AI agent governance?

AI governance covers all AI systems broadly – models, data, infrastructure, and development practices. AI agent governance specifically addresses autonomous systems that make decisions and take actions independently. Agent governance requires additional focus on decision transparency, action boundaries, escalation protocols, and real-time monitoring because agents operate with less human supervision than traditional AI systems.

Do small companies need formal AI agent governance?

Yes, though the sophistication level scales with your deployment. Even small companies face compliance risks and reputational exposure from poorly governed agents. Start with basics: decision logging, clear ownership, and defined escalation paths. You don’t need enterprise-grade infrastructure, but you do need systematic oversight. The cost of governance is far less than the cost of a compliance violation or customer trust breach.

How often should we audit our AI agents?

Continuous automated monitoring plus quarterly deep-dive reviews is the standard approach. Real-time monitoring catches immediate issues – anomalies, policy violations, performance degradation. Quarterly audits examine broader patterns, test governance effectiveness, review policy adequacy, and assess compliance with evolving regulations. High-risk agents may require monthly reviews, while low-risk agents might stretch to semi-annual audits.

Can AI agents govern other AI agents?

Absolutely, and this is becoming common practice. Meta-agents specialized in governance can monitor other agents continuously, checking for policy compliance, detecting anomalies, and flagging decisions for human review. These governance agents handle the volume and speed that human oversight can’t match. However, they don’t replace humans entirely – humans still own ultimate accountability and handle complex judgment calls that governance agents escalate.

What happens if an AI agent violates regulations?

The deploying organization bears liability, not the AI system itself. Depending on the violation’s severity and jurisdiction, consequences range from warning letters to substantial fines, operational restrictions, or legal action. Good governance doesn’t eliminate violations entirely but demonstrates due diligence, which significantly influences regulatory response. Having audit trails proving you attempted reasonable oversight makes a massive difference in penalty severity and reputational damage.

How do we measure AI agent governance effectiveness?

Track both leading and lagging indicators. Leading indicators include monitoring coverage, policy update frequency, escalation response times, and testing completeness. Lagging indicators include compliance violations detected, audit findings, decision accuracy rates, and stakeholder confidence scores. The best metric? Time between when an issue occurs and when you detect it – effective governance catches problems quickly before they compound.
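That detection-lag metric is easy to compute once incidents are logged with two timestamps. A minimal sketch, assuming each incident is stored as an (occurred at, detected at) pair of datetimes; the function name is hypothetical.

```python
from datetime import datetime
from statistics import mean


def mean_time_to_detect(incidents):
    """incidents: list of (occurred_at, detected_at) datetimes -> mean lag in hours."""
    gaps = [
        (detected - occurred).total_seconds() / 3600
        for occurred, detected in incidents
    ]
    return mean(gaps) if gaps else None


# Two made-up incidents, detected 2 and 4 hours after they occurred
incidents = [
    (datetime(2025, 1, 6, 9, 0), datetime(2025, 1, 6, 11, 0)),
    (datetime(2025, 1, 9, 14, 0), datetime(2025, 1, 9, 18, 0)),
]
lag_hours = mean_time_to_detect(incidents)
```

Tracking this number per agent over time shows whether your monitoring is actually getting faster at catching problems.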

Should we build or buy AI agent governance tools?

Unless governance tooling is your core competency, buy or partner. Building comprehensive governance infrastructure requires significant ongoing investment in platform development, security updates, compliance adaptation, and feature expansion. Established platforms already solve common challenges and update for regulatory changes. Focus your engineering resources on what differentiates your business, not rebuilding governance infrastructure that specialized providers maintain better and cheaper.
