A healthcare company rolled out an AI assistant to help doctors summarize patient notes.

It worked instantly.

- Notes got shorter

- Documentation got faster

- Doctors started relying on it

Within weeks, it moved from “pilot” to “default workflow.”

Then compliance stepped in.

“What data is the model using?”

“Where is it processed?”

“Can we audit these summaries?”

Silence.

The system worked.

But no one could explain how.

That’s when the rollout stopped.

This Is Where Most AI Projects Break

Not at the model level. Not at the use case level. But at governance.

Searches around “AI governance framework,” “enterprise AI compliance,” and “responsible AI deployment” are rising because companies are hitting the same wall: AI is easy to start. Hard to control.

So What Does AI Governance Actually Mean?

Forget long policy documents for a second. In real workflows, AI governance comes down to a few uncomfortable questions:

- What exactly is being sent to the model?

- Can every output be traced back?

- Are we enforcing rules—or just hoping for the best?

If these don’t have clear answers, governance doesn’t exist—no matter what the policy says.

The 5 Things Enterprises Realize (Usually Too Late)

1. “We Should’ve Defined Data Boundaries Earlier”

In the healthcare example, patient notes were being sent for summarization.

No one flagged it initially because: “It’s just summarization.”

But here’s how that plays out:

| What Teams Think | What’s Actually Happening |
| --- | --- |
| “We’re just cleaning text” | Sensitive data is being processed |
| “No storage, so no issue” | External processing still counts |
| “It’s internal usage” | Still subject to compliance rules |

Reality: If data boundaries aren’t defined upfront, they get crossed by default.
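What a data boundary can look like in code: a check that runs before anything reaches the model. This is a minimal sketch, not a production PHI filter. The three patterns, the `apply_data_boundary` name, and the sample note are all assumptions made up for illustration; a real deployment would rely on a vetted detection policy.

```python
import re
from dataclasses import dataclass

# Hypothetical patterns for illustration only. Three regexes are not a
# complete PHI/PII policy.
BLOCKED_PATTERNS = {
    "ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "mrn": re.compile(r"\bMRN[:\s]*\d{6,10}\b", re.IGNORECASE),
    "dob": re.compile(r"\b\d{2}/\d{2}/\d{4}\b"),
}


@dataclass
class BoundaryResult:
    text: str         # text that is allowed to cross the boundary
    redactions: dict  # category -> number of matches redacted


def apply_data_boundary(text: str) -> BoundaryResult:
    """Redact blocked categories before the text is sent to a model."""
    redactions = {}
    for name, pattern in BLOCKED_PATTERNS.items():
        text, count = pattern.subn(f"[{name.upper()} REDACTED]", text)
        if count:
            redactions[name] = count
    return BoundaryResult(text=text, redactions=redactions)


# Made-up sample note.
note = "Patient MRN: 00123456, DOB 04/12/1961, reports chest pain."
result = apply_data_boundary(note)
print(result.text)
print(result.redactions)
```

The point is less the regexes than the placement: the boundary is enforced in the request path, so "it's just summarization" never decides what leaves the environment.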

2. “We Can’t Explain the Output”

Another team, this time in banking, used AI to assist in credit decisions.

Everything looked fine—until a decision was challenged.

They were asked:

- Why was this flagged as high risk?

- What factors influenced the output?

The response?

A clean paragraph. No traceable reasoning.

| Requirement | What They Had |
| --- | --- |
| Input logs | Partial |
| Output logs | Available |
| Decision path | Missing |
| Model version | Unknown |

Reality: If decisions can’t be explained, they can’t be defended.
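The four rows in that table map naturally onto a single audit record written for every call. The sketch below is one possible shape, under assumptions: the field names, the `build_audit_record` helper, and the sample values are hypothetical, and whether you store the raw input or only its hash depends on your retention rules.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditRecord:
    request_id: str
    timestamp: str      # UTC, ISO 8601
    model_version: str  # closes the "Model version: Unknown" gap
    input_sha256: str   # ties the record to the exact input
    output: str
    factors: tuple      # decision path: the factors reported for this output


def build_audit_record(request_id, model_version, prompt, output, factors):
    """Assemble one immutable record per model call."""
    return AuditRecord(
        request_id=request_id,
        timestamp=datetime.now(timezone.utc).isoformat(),
        model_version=model_version,
        input_sha256=hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
        output=output,
        factors=tuple(factors),
    )


# Made-up example values.
record = build_audit_record(
    request_id="req-001",
    model_version="risk-model-2024-05",
    prompt="applicant income=52000 debt=31000",
    output="high risk",
    factors=["debt_to_income > 0.5"],
)
line = json.dumps(asdict(record), sort_keys=True)  # one JSON line per call
print(line)
```

Appending one such line per request to write-once storage is usually enough to answer "why was this flagged?" with something other than a clean paragraph.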

3. “We Assumed the Model Would Follow Policy”

A legal team used AI to review contracts.

Sometimes it flagged risks correctly.

Sometimes it missed obvious clauses.

Why?

Because the model wasn’t aligned with internal policy—it was just generating probable responses.

| Without Guardrails | What Happens |
| --- | --- |
| No defined rules | Inconsistent outputs |
| No restrictions | Policy violations |
| No enforcement | Risky responses slip through |

Reality: AI doesn’t follow company rules unless those rules are enforced in the system.
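"Enforced in the system" can be as simple as a list of checks that every output must pass before it is released. Here is a rough sketch of that idea. The rule names, the required clause list, and the `enforce` function are hypothetical stand-ins for whatever an internal policy actually requires.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Rule:
    name: str
    check: Callable[[str], bool]  # returns True when the output passes


# Hypothetical policy: every contract review must mention these clauses.
REQUIRED_CLAUSES = ["indemnification", "termination", "governing law"]


def mentions_all_required_clauses(review: str) -> bool:
    lowered = review.lower()
    return all(clause in lowered for clause in REQUIRED_CLAUSES)


def within_length_limit(review: str) -> bool:
    return len(review) <= 2000


RULES = [
    Rule("covers_required_clauses", mentions_all_required_clauses),
    Rule("length_limit", within_length_limit),
]


def enforce(review: str, rules: List[Rule] = RULES) -> List[str]:
    """Return the names of violated rules; an empty list means release."""
    return [rule.name for rule in rules if not rule.check(review)]


# Made-up model output that skips the indemnification clause.
draft = "Termination terms look standard. Governing law is Delaware."
violations = enforce(draft)
if violations:
    print("blocked:", violations)
else:
    print("released")
```

Keyword checks like these are deliberately crude. What matters is that a missed clause becomes a blocked response with a named rule attached, rather than an output someone has to catch by reading.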

4. “We Didn’t Plan for Model Changes”

One team built a workflow that worked perfectly—for a while.

Then outputs started changing.

- Same input

- Different result

Nothing in their system had changed.

The model had.

| Change | Impact |
| --- | --- |
| Model update | Output shifts |
| No version control | No consistency |
| No rollback | No recovery |

Reality: Without versioning, AI systems are not stable systems.
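One way to catch "same input, different result" early is to pin the model configuration and keep baseline outputs for a fixed set of inputs. The sketch below assumes deterministic outputs, so an exact fingerprint match is meaningful; with sampling enabled you would need a looser comparison. `ModelConfig`, `RegressionSuite`, and the sample strings are hypothetical.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelConfig:
    """Everything that must stay fixed for outputs to be comparable."""
    model_id: str
    version: str
    temperature: float


def fingerprint(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()[:12]


class RegressionSuite:
    """Stores output fingerprints per pinned config for fixed inputs."""

    def __init__(self):
        self._baselines = {}  # (config, prompt) -> fingerprint

    def record(self, config: ModelConfig, prompt: str, output: str) -> None:
        self._baselines[(config, prompt)] = fingerprint(output)

    def has_drifted(self, config: ModelConfig, prompt: str, output: str) -> bool:
        baseline = self._baselines.get((config, prompt))
        if baseline is None:
            raise KeyError("no baseline recorded for this config and prompt")
        return baseline != fingerprint(output)


# Made-up config and outputs.
pinned = ModelConfig(model_id="summarizer", version="2024-05-01", temperature=0.0)
suite = RegressionSuite()
suite.record(pinned, "note A", "Summary v1")

same = suite.has_drifted(pinned, "note A", "Summary v1")
changed = suite.has_drifted(pinned, "note A", "Summary v2")
print(same, changed)
```

Running a suite like this on a schedule turns a silent model update into a failed check, and the pinned config gives you something concrete to roll back to.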

5. “We Had No Way to Monitor Usage”

Once AI usage spreads, it becomes invisible.

Different teams use it differently:

- Some cautiously

- Some aggressively

- Some without understanding the risks

And leadership sees… nothing.

| Without Monitoring | Outcome |
| --- | --- |
| No usage visibility | Blind spots |
| No policy tracking | Compliance gaps |
| No alerts | Issues found too late |
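Each row in that table has a small, concrete counterpart: count calls per team, count policy violations per team, and alert when the rate crosses a threshold. A minimal sketch, assuming usage events are already being collected somewhere; the `UsageEvent` shape, team names, and the 10% threshold are illustrative only.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class UsageEvent:
    team: str
    policy_violation: bool


def summarize_usage(events):
    """Per-team call and violation counts (usage visibility + policy tracking)."""
    calls = Counter(e.team for e in events)
    violations = Counter(e.team for e in events if e.policy_violation)
    return {
        team: {"calls": n, "violations": violations[team]}
        for team, n in calls.items()
    }


def alerts(summary, max_violation_rate=0.1):
    """Teams whose violation rate exceeds the threshold, sorted by name."""
    return sorted(
        team
        for team, s in summary.items()
        if s["violations"] / s["calls"] > max_violation_rate
    )


# Made-up events: legal has 1 violation in 4 calls, support has 0 in 10.
events = (
    [UsageEvent("legal", False)] * 3
    + [UsageEvent("legal", True)]
    + [UsageEvent("support", False)] * 10
)
summary = summarize_usage(events)
flagged = alerts(summary)
print(summary)
print(flagged)
```

Even a report this basic gives leadership something to look at before an audit does.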

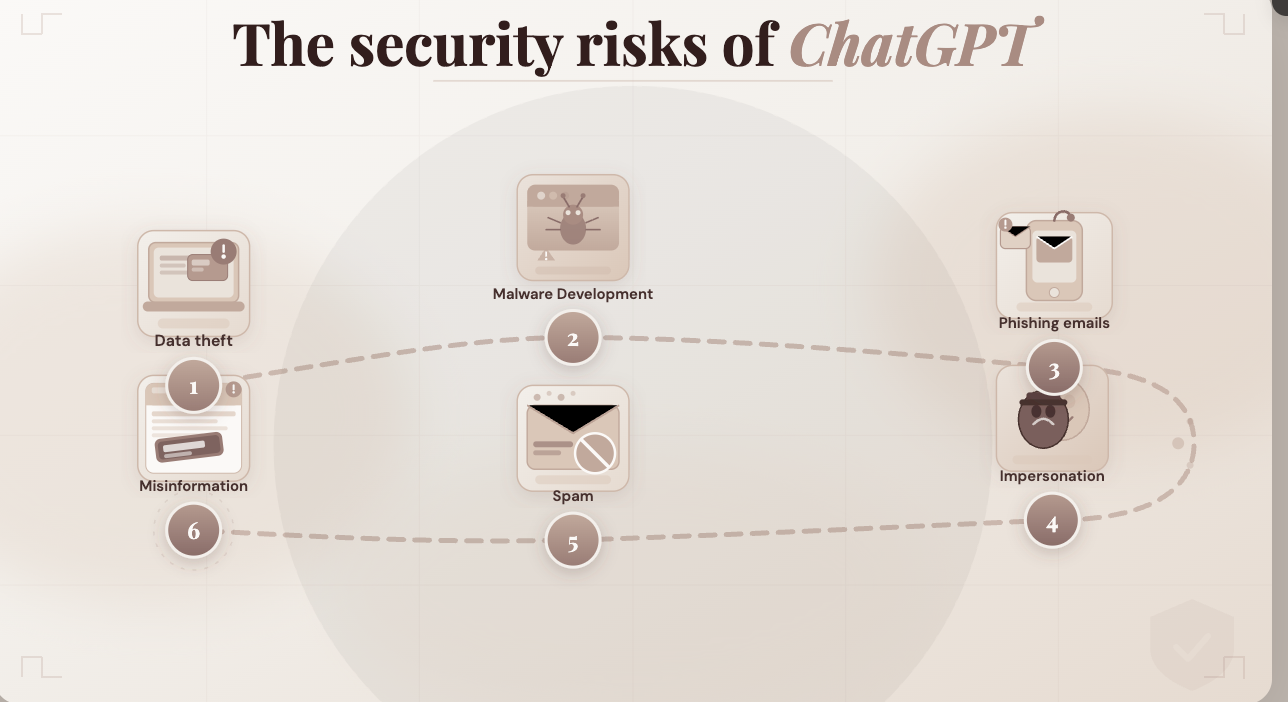

What All These Failures Have in Common

Different industries. Different use cases.

Same pattern:

- AI gets adopted quickly

- Governance is assumed, not implemented

- Problems show up only when questioned

Governance vs “We’ll Figure It Out Later”

| Factor | No Governance | With Governance |
| --- | --- | --- |
| Data usage | Ad-hoc | Defined |
| Decisions | Hard to explain | Fully traceable |
| Outputs | Inconsistent | Controlled |
| Compliance | Reactive | Built-in |
| Scaling | Risky | Predictable |

Where LyzrGPT Fits

Going back to the healthcare example:

With LyzrGPT, that rollout would look very different.

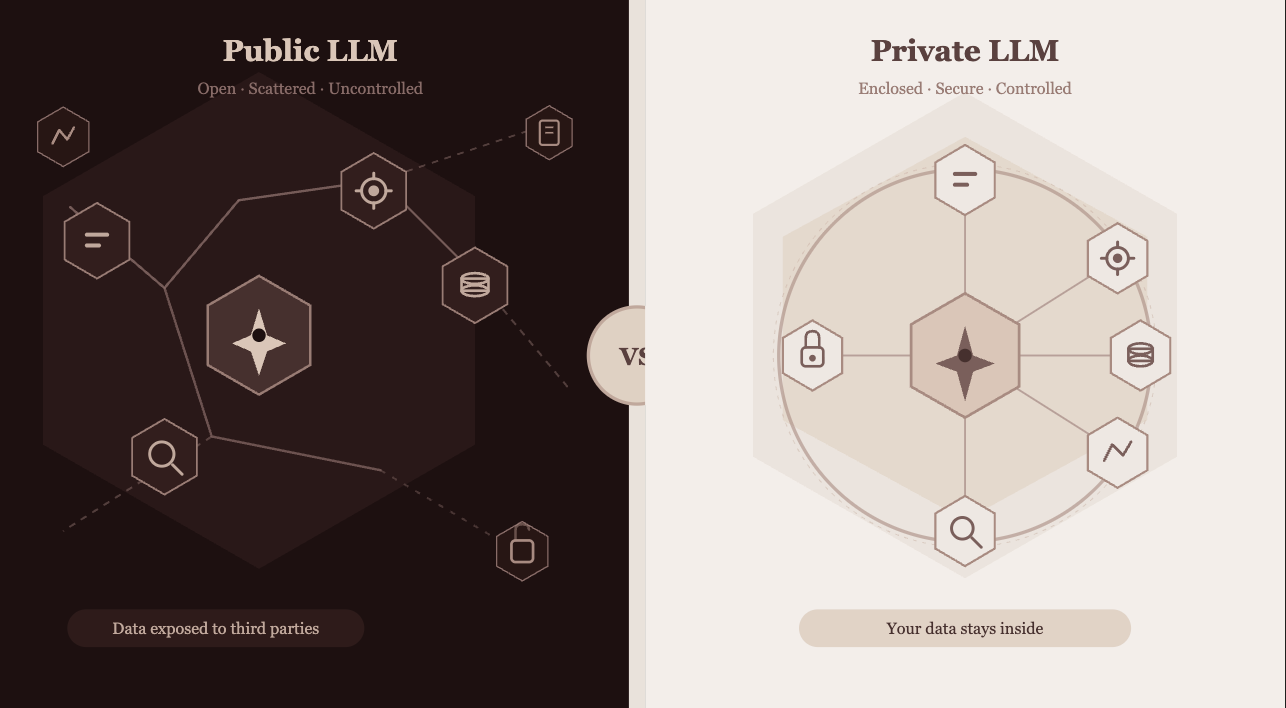

- Patient data stays within controlled environments

- Every summary is logged with full traceability

- Guardrails ensure compliance rules are followed

- Model behavior is versioned and stable

So when compliance asks:

“How does this system work?”

There’s a clear, defensible answer.

Closing Thoughts

Most AI systems don’t fail because the model is wrong.

They fail because no one defined how the system should behave.

Governance isn’t a blocker.

It’s what makes deployment possible.

Because in enterprise environments, the real test isn’t:

“Does it work?”

It’s:

“Can it pass audit, scale safely, and hold up under scrutiny?”

If that’s the bar, it’s worth rethinking how AI is deployed.

Try LyzrGPT—and see what governed AI actually looks like in practice.
