There’s a quiet reckoning happening inside boardrooms and government ministries.

It doesn’t always make headlines — but it’s shaping decisions about vendors, infrastructure, and the technology organisations have built their operations around.

The trigger varies.

- A new data localisation law

- A security audit revealing where AI queries are actually being processed

- A regulator asking a question no one has a clean answer to

“Where does your data go when your AI model thinks?”

That question — once rare — is now showing up everywhere.

And most organisations aren’t fully prepared for what it means.

The Dependency Nobody Planned For

When enterprises moved fast on AI adoption, the priority was capability:

- Can this model do what’s needed?

- How quickly can it be integrated?

- What does the API cost?

What didn’t get enough attention?

Control.

AI workloads were handed off to external APIs. Sensitive queries left organisational boundaries and travelled to infrastructure owned by third parties — often in different jurisdictions.

Decisions made by these models were opaque, with limited visibility into:

- How outputs were generated

- What data influenced them

This was an acceptable trade-off when AI felt experimental.

It becomes harder to justify when AI is embedded in critical workflows.

A Few Scenarios That Illustrate This

| Scenario | The Hidden Risk |
| --- | --- |
| Financial institution running loan assessments through an external AI | No full visibility into decision logic or regulatory alignment |
| Government ministry using a globally hosted AI for internal documents | Citizen data may move outside legal jurisdiction |
| Enterprise using a single-vendor AI for customer workflows | One vendor policy change can disrupt operations |

These aren’t edge cases.

They reflect how AI adoption has actually happened — fast, useful, and quietly dependent on systems the organisation doesn’t own or fully understand.

So What Is Sovereign AI, Really?

Sovereign AI didn’t emerge as a trend. It emerged as a response.

Organisations realised they had:

- Built on infrastructure they don’t control

- Used models they can’t inspect

- Processed data in places they didn’t explicitly choose

At its core:

Sovereign AI is the capacity to run AI workloads within defined boundaries.

Those boundaries can take different forms:

- Geographic — data stays within a country

- Organisational — sensitive queries stay within internal systems

- Regulatory — outputs are auditable under local law

- Operational — model behaviour is consistent and explainable

This isn’t about stepping away from AI.

It’s about owning the conditions under which AI operates.

A Useful Analogy

Think about the shift from all-in public cloud to hybrid infrastructure.

At one point, everything moved to the cloud. Over time, organisations realised:

- Some workloads require tighter control

- Some data cannot leave specific environments

- Some risks cannot be outsourced

Hybrid became the default — not because cloud failed, but because control mattered.

The same shift is now happening with AI.

What Sovereign AI Actually Requires

Instead of features, think in terms of control.

| Control Area | What It Looks Like in Practice |
| --- | --- |
| Data Residency | Data is processed where it is supposed to be, not wherever the model happens to run |
| Model Governance | Clear control over which model handles which task |
| Auditability | Ability to trace how and why an output was generated |
| Vendor Independence | Freedom to switch providers without breaking workflows |
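
To make the Data Residency row concrete, a residency rule can be enforced in code before a request leaves the organisation, rather than left to a contract. The following is a minimal sketch, not a description of any product's behaviour. The data classes, region codes, and the `Endpoint` and `check_residency` names are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass

# Hypothetical policy: the regions in which each class of data may be processed.
ALLOWED_REGIONS = {
    "citizen_record": {"in"},
    "internal_document": {"in", "eu"},
    "public_content": {"in", "eu", "us"},
}


@dataclass(frozen=True)
class Endpoint:
    """A model endpoint and the region where it actually runs (illustrative)."""
    name: str
    region: str


class ResidencyError(Exception):
    """Raised when a request would be processed outside its allowed regions."""


def check_residency(data_class: str, endpoint: Endpoint) -> None:
    """Raise ResidencyError unless the endpoint's region is allowed for this data."""
    allowed = ALLOWED_REGIONS.get(data_class)
    if allowed is None:
        # Unknown data classes are rejected rather than silently allowed.
        raise ResidencyError(f"unknown data class: {data_class!r}")
    if endpoint.region not in allowed:
        raise ResidencyError(
            f"{data_class!r} may not be processed in region {endpoint.region!r} "
            f"(endpoint {endpoint.name!r})"
        )


# Usage: the first call passes silently, the second raises ResidencyError.
check_residency("public_content", Endpoint("external-model", "us"))
try:
    check_residency("citizen_record", Endpoint("external-model", "us"))
except ResidencyError as err:
    print(f"blocked: {err}")
```

The point of the sketch is that the rule lives in configuration the organisation owns, so changing it is an edit to `ALLOWED_REGIONS` and not a renegotiation.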

These are no longer optional.

They are becoming baseline expectations for enterprise AI.

Why This Is a Now Problem

There’s a tendency to delay governance conversations.

That window is closing.

Regulatory Pressure Is Increasing

- Data localisation requirements are tightening

- Finance and healthcare regulators are treating AI like critical infrastructure

- Data protection laws now influence where AI inference can run

The Business Impact Is Clear

- Costs scale unpredictably when everything runs through external APIs

- Routing lower-stakes queries to cheaper models can reduce unnecessary spending

- Vendor lock-in grows over time, making change harder and more expensive

Sovereign AI is no longer a future consideration. It’s a present constraint.

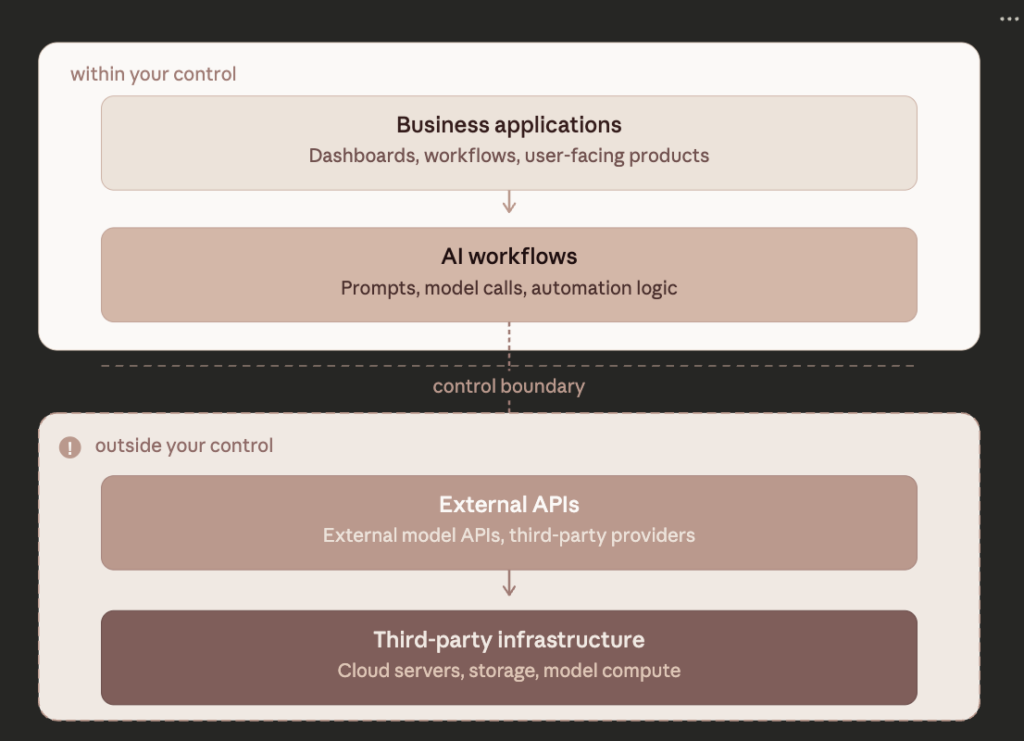

The Gap in the Current Stack

Most enterprise AI setups today weren’t designed for control.

What They Typically Look Like

- Built around a single model or provider

- Data flows outward by default

- Governance depends on contracts, not systems

- Switching models requires rebuilding integrations

- Data control requires renegotiation, not configuration

This reflects how AI tools were originally designed:

Fast access. High capability. Centralised infrastructure.

But enterprise needs have evolved.

And now there’s a gap between:

- What organisations need → control, compliance, flexibility

- What their systems allow → limited visibility, limited control

LyzrGPT: Built Around the Control Problem

LyzrGPT starts from a different premise.

Instead of anchoring everything to one model or provider, it focuses on how AI operates inside enterprise environments.

What It Enables

1. Intelligent Query Routing

Not all queries are equal.

Different tasks carry different levels of sensitivity, cost, and risk.

| Query Type | Routing Decision |
| --- | --- |
| Sensitive financial or personal data | Routed to private or on-premise models |
| Internal document summarisation | Handled within controlled infrastructure |
| Lower-stakes content generation | Routed to cost-efficient external models |
| Compliance-heavy workflows | Processed with full audit tracking |

These decisions are configurable — not fixed.
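
The routing table above can be expressed as a small, overridable policy. This is a minimal sketch under stated assumptions and does not show LyzrGPT's actual API. The `Sensitivity` categories, the `Route` type, and the target names such as `on_prem_model` are hypothetical, and the choice to send compliance-heavy workflows to an on-premise target is an assumption, since the table only says they are audited.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Mapping


class Sensitivity(Enum):
    """Illustrative query categories mirroring the four table rows."""
    PERSONAL_OR_FINANCIAL = "personal_or_financial"
    INTERNAL = "internal"
    LOW_STAKES = "low_stakes"
    COMPLIANCE = "compliance"


@dataclass(frozen=True)
class Route:
    target: str          # hypothetical name of the model deployment
    audit: bool = False  # whether full audit tracking is required


# Default policy: a plain mapping, so it can be changed without touching code paths.
DEFAULT_POLICY: Mapping[Sensitivity, Route] = {
    Sensitivity.PERSONAL_OR_FINANCIAL: Route("on_prem_model"),
    Sensitivity.INTERNAL: Route("private_cloud_model"),
    Sensitivity.LOW_STAKES: Route("external_low_cost_model"),
    Sensitivity.COMPLIANCE: Route("on_prem_model", audit=True),  # target is assumed
}

# Fail closed: anything the policy does not cover goes to the most restrictive route.
FALLBACK = Route("on_prem_model", audit=True)


def route(sensitivity: Sensitivity,
          policy: Mapping[Sensitivity, Route] = DEFAULT_POLICY) -> Route:
    """Return the route for a query category under the given policy."""
    return policy.get(sensitivity, FALLBACK)


# Usage: override one row of the policy without rebuilding anything else.
stricter = {**DEFAULT_POLICY, Sensitivity.LOW_STAKES: Route("private_cloud_model")}
print(route(Sensitivity.LOW_STAKES).target)
print(route(Sensitivity.LOW_STAKES, stricter).target)
```

Keeping the policy as data is what makes the decisions configurable. How a query gets classified into a category in the first place is out of scope for this sketch.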

2. Model-Agnostic Architecture

No dependency on a single provider.

Models can be:

- Switched

- Upgraded

- Replaced

Without rebuilding the system around them.
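
One common way to get this property is to have workflows depend on a narrow interface and not on any provider's SDK. The sketch below illustrates that general pattern, not LyzrGPT's internals. `ChatModel`, `Workflow`, and the two stub models are hypothetical names, and the stubs return canned strings where a real adapter would call a model.

```python
from typing import Protocol


class ChatModel(Protocol):
    """The only thing a workflow is allowed to know about a model."""

    def complete(self, prompt: str) -> str: ...


class StubOnPremModel:
    """Stand-in for an adapter around an on-premise model (no real calls made)."""

    def complete(self, prompt: str) -> str:
        return f"[on-prem] {prompt}"


class StubExternalModel:
    """Stand-in for an adapter around an external provider (no real calls made)."""

    def complete(self, prompt: str) -> str:
        return f"[external] {prompt}"


class Workflow:
    """Business logic written against the interface, not a specific provider."""

    def __init__(self, model: ChatModel) -> None:
        self.model = model

    def summarise(self, text: str) -> str:
        return self.model.complete(f"Summarise: {text}")


# Usage: swapping the model is a one-line change; the workflow code is untouched.
workflow = Workflow(StubOnPremModel())
print(workflow.summarise("quarterly report"))
workflow.model = StubExternalModel()
print(workflow.summarise("quarterly report"))
```

Each real provider would need its own adapter class implementing `complete`, which is where provider-specific details would be contained.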

3. Built-in Governance and Auditability

Every interaction can be tracked:

- Which query was processed

- Which model handled it

- Under what conditions

Without relying on external vendors to provide that visibility.
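
As a rough illustration of what tracking those three items could look like, the sketch below builds one audit record per interaction. The field names and the `audit_record` function are hypothetical. Storing a hash of the query in place of its text is a design assumption made here so the log itself does not hold sensitive content, and a real deployment might need the full text under its own retention rules.

```python
import hashlib
import json
from datetime import datetime, timezone


def audit_record(query: str, model: str, conditions: dict) -> str:
    """Return one JSON audit line: which query, which model, under what conditions."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        # A SHA-256 digest identifies the query without storing its content.
        "query_sha256": hashlib.sha256(query.encode("utf-8")).hexdigest(),
        "model": model,
        "conditions": conditions,
    }
    # sort_keys keeps the output stable, which helps when diffing or searching logs.
    return json.dumps(record, sort_keys=True)


# Usage: append one line per interaction to a log the organisation controls.
line = audit_record(
    query="Assess loan application for the attached applicant",
    model="on_prem_model",  # hypothetical deployment name
    conditions={"route": "personal_or_financial", "region": "in"},
)
print(line)
```

Because the record is written by the organisation's own system at the point of routing, it does not depend on what an external vendor chooses to expose.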

LyzrGPT doesn’t replace AI capability.

It introduces control over how that capability is used — which is where most enterprise gaps exist today.

The Shift in Thinking

Most AI conversations still focus on adoption:

- Are we using AI?

- How widely?

- How fast can we expand?

Those questions matter.

But they miss something more fundamental.

Whose AI is actually being used — and who controls how it operates?

The organisations that will hold an advantage won’t just be early adopters.

They’ll be the ones that built AI into their systems in a way they actually control.

Closing Thought

Sovereign AI isn’t an end state.

It’s a foundation.

And it’s built:

- Before the audit

- Before the incident

- Before the regulator asks a question with no clear answer

Interested in how LyzrGPT enables controlled, multi-model AI deployment for enterprise environments? Explore more at lyzr.ai
