Think back to early 2023.

Every leadership off-site had a ChatGPT slide.

Every CIO was being asked “what’s our AI strategy?” in board meetings. LinkedIn was flooded with posts about how someone used ChatGPT to write a 10-page report in 20 minutes. A VP of Marketing drafted a full campaign brief on a flight and sent it before landing.

The energy was real. And honestly — it was warranted.

For years, enterprise AI had been a story of expensive disappointments. Custom models, data science teams that took quarters to deliver, tools that required PhD-level setup, and results that never quite matched the pitch deck.

Then ChatGPT arrived and did something none of those efforts managed: it worked, immediately, for almost anyone.

No training data. No technical expertise. Just type a question and get something useful back.

That accessibility was, and still is, genuinely remarkable.

The First Wave: Where ChatGPT Delivered

The early wins weren’t flukes. Enterprises that ran pilots in 2023 saw real, measurable improvements in specific areas:

| Use Case | Who Benefited | What Changed |
| --- | --- | --- |
| Document drafting | Marketing, Legal, HR | First drafts in minutes vs. hours |
| Meeting summarization | Operations, Consulting | Faster action item extraction |
| RFP and proposal writing | Sales, Business Dev | Research and structure compressed |
| Customer response drafting | Support teams | Faster resolution, consistent tone |
| Internal knowledge retrieval | All teams | Less time hunting through wikis |

McKinsey’s 2023 global AI survey found that 40% of respondents planned to increase AI investment across business functions, a jump driven largely by the accessibility that generative AI had demonstrated. Gartner placed generative AI at the peak of the hype cycle faster than any technology category they had tracked before.

None of that was exaggerated. The productivity gains for people who actually used ChatGPT were real. A marketing manager shaving two hours off a campaign brief is a real two hours. A consultant summarizing a 40-page RFP before a kickoff call is genuinely useful.

So what went wrong?

The Number That Changes Everything

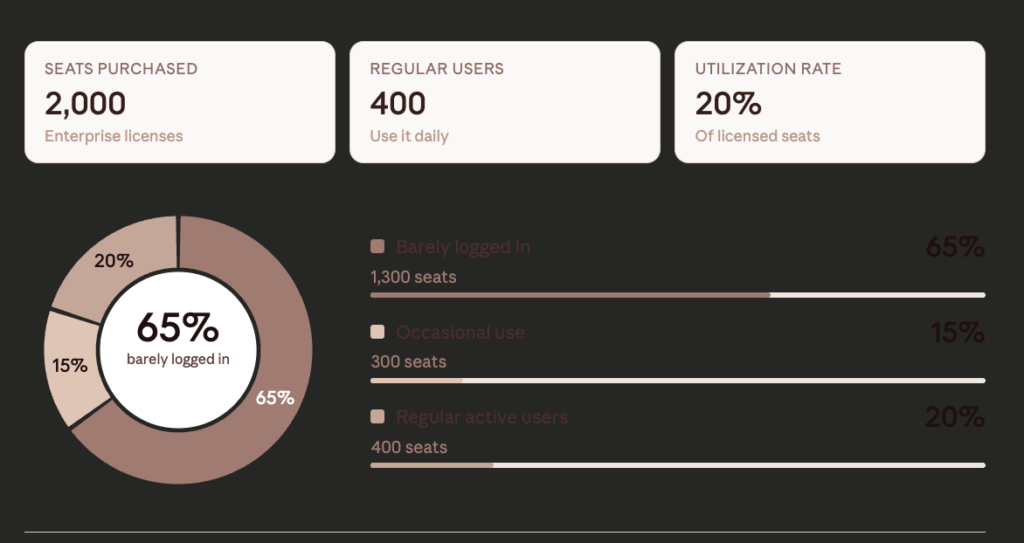

Here’s a stat that doesn’t get talked about enough: despite significant investment in enterprise AI licenses, most of those licenses sit largely unused.

A Boston Consulting Group study found that while roughly 90% of employees were curious about generative AI, only around 20–25% were using it regularly for work. Analysis of Microsoft 365 Copilot deployments, one of the largest enterprise AI rollouts in history, found that in many organizations, fewer than 30% of provisioned seats saw consistent active use.

Think about what that means in practice. A company buys 2,000 ChatGPT Enterprise seats. Three months later:

- 400 people use it regularly (mostly Marketing, Strategy, HR)

- 300 more open it occasionally when they remember it exists

- 1,300 people have barely logged in

- The licenses are live, the budget is spent, the value is not scaling

This is not a one-company story. This pattern repeated across industries throughout 2023 and 2024. The enterprise AI adoption graph looked exciting in press releases and disappointing in analytics dashboards.

Why the Gap Exists: Five Structural Problems

When you dig into why AI adoption stalls at the team level rather than scaling across the organization, you see the same problems surfacing again and again. They’re not unique to ChatGPT — they show up in virtually every enterprise generative AI rollout.

1. It Lives Next to Work, Not Inside It

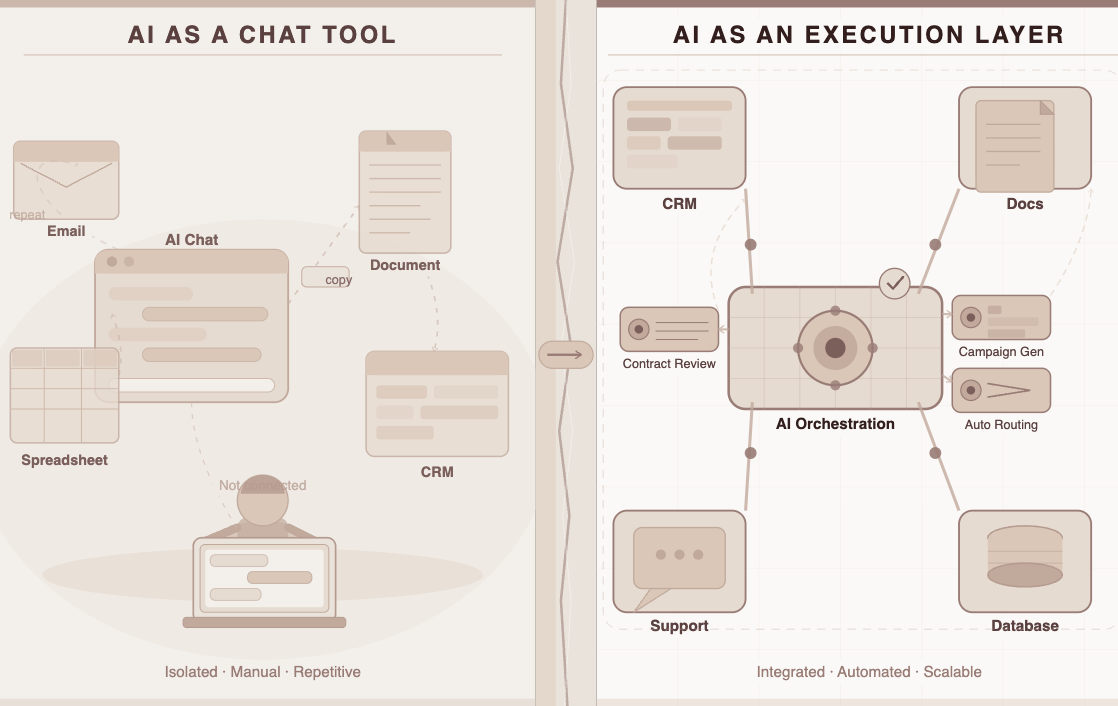

This is the big one. Most employees use ChatGPT the way they use a search engine: they ask something, get a response, and then manually carry that response into whatever tool they’re actually working in.

There’s no connection to the CRM. No link to the project management tool. No path into the knowledge base, the ticketing system, or the document repository. The AI sits beside enterprise workflows, not inside them.

Ask a consultant how they use it. They’ll describe a pattern like: open ChatGPT in one tab, paste in a document, get a summary, copy the summary into their slide deck, then go back to ChatGPT to draft the key takeaways section. It’s helpful, but it’s also a string of manual steps. That’s not workflow automation; it’s assisted copy-pasting.

2. Adoption Concentrates and Then Stops

Every organization has a cohort of early adopters — typically 10–15% of employees — who integrate tools into their daily routines quickly. They find the best prompts, develop repeatable uses, and see genuine ROI.

Then there’s everyone else.

For the remaining 85–90%, there’s no compelling reason to change behavior. Their job isn’t to explore AI tools. Their job is to close tickets, deliver projects, and meet targets. If AI doesn’t show up in the flow of how they already work, they won’t go looking for it.

3. The Data Privacy Question Never Gets Fully Resolved

Enterprise data is sensitive by definition. Customer records, financial projections, strategic plans, HR information — teams are rightly cautious about what gets pasted into a third-party chat interface.

Even when enterprise agreements are in place, employees default to caution. And the tasks that would benefit most from AI help — the ones involving real, specific company data — are exactly the ones that people hesitate to bring into a chat window.

| Data Type | Risk Perception | Actual Usage Rate |
| --- | --- | --- |
| Generic industry information | Low | High |
| Internal strategy docs | High | Very low |
| Customer data | Very high | Near zero |
| Financial projections | High | Low |
| HR/people data | Very high | Near zero |

The result: ChatGPT gets used for the lower-stakes tasks where it delivers the least differentiated value.

4. The Cost Math Gets Uncomfortable

Enterprise AI licenses aren’t cheap. At scale, they represent a meaningful budget line — and finance teams notice when that line doesn’t produce proportional returns.

When you pay for 2,000 seats and 400 people use them, you’ve effectively paid 5x the per-user cost you budgeted for. That math becomes harder to defend in a budget review, which leads to consolidation, reduced seat counts, or the dreaded “we need to reassess our AI spend” conversation.
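
The arithmetic behind that 5x figure is easy to check. The sketch below uses the seat counts from the example above; the $30 monthly seat price is a placeholder assumption for illustration, not a quoted price for any product.

```python
def effective_cost_per_active_user(seats: int, active_users: int, price_per_seat: float) -> float:
    """Total license spend divided by the people who actually use the tool."""
    if active_users <= 0:
        raise ValueError("active_users must be positive")
    return seats * price_per_seat / active_users


# Seat counts come from the example above; the price is a hypothetical placeholder.
SEATS = 2_000
ACTIVE_USERS = 400
PRICE_PER_SEAT = 30.0  # assumed monthly price, for illustration only

effective = effective_cost_per_active_user(SEATS, ACTIVE_USERS, PRICE_PER_SEAT)
multiplier = effective / PRICE_PER_SEAT

print(f"Budgeted cost per user:  ${PRICE_PER_SEAT:.2f}")
print(f"Effective cost per user: ${effective:.2f}")
print(f"Multiplier: {multiplier:.1f}x")
```

The multiplier depends only on the ratio of seats to active users, so it holds whatever the actual seat price is.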

5. Nothing Gets Remembered or Shared

This one is underappreciated. Every ChatGPT session starts from scratch. There is no memory of last week’s conversation. There’s no institutional learning.

A marketing manager spent two hours developing the perfect prompt for competitive analysis. It lives in her browser history, or maybe a notes doc she keeps herself. It’s not shared with the team. It’s not reusable. It’s not improving over time.

Meanwhile, across the company, five other people are each spending two hours reinventing the same approach. The knowledge management problem doesn’t get better; it just gets more expensive. That cost is one reason companies start looking at knowledge management alternatives to Shelf.

Let’s Be Honest About What’s Actually Happening

Here’s the uncomfortable framing that most enterprise AI conversations avoid: the model isn’t the problem.

ChatGPT is genuinely capable. GPT-4 and its successors can reason, write, analyze, and synthesize at a level that was science fiction ten years ago. The problem isn’t capability.

The problem is that enterprises are trying to deploy a system-level solution as a desktop app.

Giving everyone a chat interface and saying “use AI” is like handing a team a database and saying “use data.” The capability is real, but without the integration layer — the structure, the routing, the connections to actual systems — the value stays theoretical for most people.

The companies getting genuine scale from AI in 2024 didn’t just buy more seats. They built enterprise AI into how work flows.

What “System-Level” Actually Looks Like in Practice

Here are two real scenarios that illustrate the gap between AI-as-chat-tool and AI-as-execution-layer:

Scenario: A Marketing Team

| Chat Tool Approach | System-Level Approach |
| --- | --- |
| Open ChatGPT, paste campaign brief manually | Agent pulls current brief from project management tool automatically |
| Copy-paste brand guidelines into prompt each time | Brand guidelines are part of the agent’s permanent context |
| Generate copy variants, manually review them | Variants are routed to a review workflow and logged against the campaign |
| Results stay in chat history | Outputs are stored, tagged, and reusable for future campaigns |

Scenario: An Operations Team Managing Vendor Contracts

| Chat Tool Approach | System-Level Approach |
| --- | --- |
| Export contract to PDF, paste into chat | Agent reads contract directly from document management system |
| Ask it to find non-standard clauses | Agent compares clauses against approved playbook in knowledge base |
| Read the output, manually flag exceptions | Exceptions are automatically routed to compliance reviewer |
| No record of analysis | Flagged issues are logged in the contract database |

The capability gap between these two columns isn’t a model problem. It’s an architecture problem. And it explains why enterprise AI agents — systems that can take action across tools, not just generate text in a chat box — have become the next phase of this conversation.

The Shift from Tools to Systems

There’s a concept getting serious attention from enterprise technology teams right now: agentic workflows.

Instead of an employee going to an AI tool, asking a question, and manually acting on the answer, an agentic system monitors a workflow, detects what’s needed, takes action across the relevant systems, and produces a structured output that feeds into the next step. The human reviews decisions that require judgment. The routine parts run automatically.
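
To make that loop concrete, here is a minimal sketch in Python. Everything in it is a hypothetical illustration: the `WorkItem` and `StepResult` shapes, the `risk_score` field, and the 0.7 review threshold are assumptions chosen for the example, not any vendor's API. It shows only the core idea: each item produces a structured record, and only the items that need judgment are routed to a person.

```python
from dataclasses import dataclass, field


@dataclass
class WorkItem:
    """A unit of work pulled from an upstream system (hypothetical shape)."""
    item_id: str
    kind: str          # e.g. "contract" or "ticket"
    risk_score: float  # 0.0 (routine) to 1.0 (needs human judgment)


@dataclass
class StepResult:
    """Structured output that the next step in the workflow can consume."""
    item_id: str
    action: str
    needs_human_review: bool
    notes: list[str] = field(default_factory=list)


REVIEW_THRESHOLD = 0.7  # assumed cutoff; a real deployment would tune this


def process(item: WorkItem) -> StepResult:
    """Decide what to do with one item and return a structured record."""
    if item.risk_score >= REVIEW_THRESHOLD:
        note = f"{item.kind} flagged: risk {item.risk_score:.2f}"
        return StepResult(item.item_id, "route_to_reviewer", True, [note])
    return StepResult(item.item_id, "auto_complete", False)


def run_workflow(queue: list[WorkItem]) -> list[StepResult]:
    """Process every queued item and collect the structured results."""
    return [process(item) for item in queue]


queue = [
    WorkItem("C-101", "contract", 0.2),
    WorkItem("C-102", "contract", 0.9),
]
for result in run_workflow(queue):
    print(result)
```

In a real system, the queue would come from a connector to the ticketing or document system and `process` would call a model. The point of the sketch is the structured `StepResult`, which can be logged, audited, and handed to the next step without copy-pasting.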

This isn’t AI replacing workers. It’s AI actually integrated into how work gets done — which is what enterprise buyers thought they were buying in the first place.

What defines a genuinely enterprise-ready AI system, as opposed to an AI tool:

| Capability | Chat Tool | AI System / Agent |
| --- | --- | --- |
| Workflow integration | Manual copy-paste | Native connection to existing tools |
| Output format | Free-form text | Structured, system-compatible outputs |
| Knowledge retention | Zero: every session resets | Persistent, improving over time |
| Governance | None by default | Audit trails, access controls, data boundaries |
| Cost efficiency | One model for everything | Right-sized model per task type |
| Adoption | Individual opt-in | Embedded in team’s daily workflow |

The AI agent framework debate has matured significantly over the past 18 months. The question is no longer “can AI do this task?” — it’s “how do we get AI working inside our actual systems without creating compliance nightmares or rebuilding our entire tech stack?”

Where LyzrGPT Comes In

This is the context in which LyzrGPT was built.

Not as another chat interface layered on top of GPT-4. Not as a prompt library or a ChatGPT skin with your company logo. LyzrGPT is positioned as the enterprise infrastructure layer that makes AI actually work at scale — the piece between “the model is capable” and “the model is delivering value across our organization.”

A few things distinguish it from what most enterprise teams have tried before:

Multi-model routing. Not every task needs the most powerful (and expensive) model. LyzrGPT routes queries to the right model based on what the task actually requires — which means dramatically lower inference costs at scale without sacrificing output quality where it matters.
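
As a rough illustration of the routing idea in general, and not of how LyzrGPT implements it, the sketch below picks a cheaper tier for simple, short tasks and falls back to a larger tier otherwise. The tier names, prices, task categories, and length cutoff are all made-up assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelTier:
    name: str                  # placeholder label, not a real model ID
    cost_per_1k_tokens: float  # assumed price, for illustration only


SMALL = ModelTier("small-model", 0.0005)
LARGE = ModelTier("large-model", 0.0100)

# Hypothetical task categories assumed simple enough for the small tier.
SIMPLE_TASKS = {"summarize", "classify", "extract"}


def route(task_type: str, prompt: str, max_small_chars: int = 4_000) -> ModelTier:
    """Pick the cheaper tier for simple, short tasks; otherwise use the large tier."""
    if task_type in SIMPLE_TASKS and len(prompt) <= max_small_chars:
        return SMALL
    return LARGE


def estimated_cost(tier: ModelTier, tokens: int) -> float:
    """Estimated spend for a request of the given size on the given tier."""
    return tier.cost_per_1k_tokens * tokens / 1_000


print(route("summarize", "Summarize this meeting transcript.").name)
print(route("contract_review", "Compare these clauses to the playbook.").name)
```

A production router would likely weigh more signals than task type and prompt length, such as required accuracy or data sensitivity, but the cost logic is the same: reserve the expensive model for the requests that need it.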

Workflow execution, not just generation. Through Lyzr Agent Studio, enterprises can build agents that connect to their existing systems — Salesforce, SAP, ServiceNow, SharePoint, and others — and take action, not just produce text. The output of an AI interaction can feed directly into whatever system needs it next.

Knowledge retention that accumulates. Rather than starting every interaction from zero, LyzrGPT builds and maintains a knowledge graph that makes agents smarter over time. The prompt a team develops today doesn’t disappear into someone’s browser history — it becomes a shared asset.

Governance built in. For regulated industries especially, this is the thing that tends to unlock adoption. LyzrGPT includes audit trails, data handling rules, and output review mechanisms. That means legal, compliance, and finance teams — the groups most likely to block AI rollouts — have the controls they need to actually approve broader deployment.

Private deployment. For enterprises where data residency is non-negotiable, LyzrGPT runs inside your own VPC. Using the model doesn’t have to mean giving a third party access to your data.

There’s a useful framing from Lyzr’s own production playbook: success is not about picking the best model. It’s about embedding whichever model works inside flexible, resilient workflows — and building systems that retain memory, absorb feedback, and improve over time rather than stagnating. That’s a fundamentally different design philosophy than “give everyone a chat tool and see what happens.”

Where This All Lands

ChatGPT changed something important. It made AI accessible to people who had never interacted with it before. It demonstrated, at scale, that language models can do useful work across a remarkable range of tasks. It created organizational appetite and executive willingness to invest in AI that simply didn’t exist before.

That contribution shouldn’t be minimized.

But the gap between “this is impressive in a demo” and “this is delivering value at scale across our organization” turns out to be a gap of infrastructure, not intelligence. The model can think. The question is whether the enterprise has built the plumbing to let it work.

The pattern from MIT’s research across 300+ enterprise AI implementations is consistent: the projects that scale are the ones designed as systems, not tools. They’re the ones where AI is integrated into workflows rather than sitting beside them, where knowledge is retained rather than reset, and where governance is built in rather than bolted on.

The next phase of enterprise AI isn’t about more seats or better prompts. It’s about building AI into the way work actually flows.

That’s not a difficult idea. But it does require a different kind of infrastructure than a chat window.

Want to see how enterprise AI systems actually get deployed in production? Read Lyzr’s guide to taking agents to production.
