Someone on your team is pasting a client proposal into ChatGPT to clean it up. Someone else just ran last quarter’s financials through a free AI summarizer. A developer is debugging proprietary code with a consumer chatbot because it’s faster than asking the internal team.

Nobody flagged it. Nobody stopped it. Nobody even noticed.

Here’s the thing: in their own minds, none of these people are doing anything wrong. They’re just trying to get work done faster.

But 80% of enterprises have already experienced an AI-related data incident, and in most of those cases, the employees involved genuinely had no idea they were creating a problem.

That’s what makes this so hard to fix. It’s not a bad actor problem. It’s a no-guardrails problem.

And leadership isn’t exactly on top of it either: 67% of enterprise leaders admit they have no visibility into which AI tools their employees are using. You can’t govern what you can’t see.

Wait, Aren’t Employees Just Being Productive?

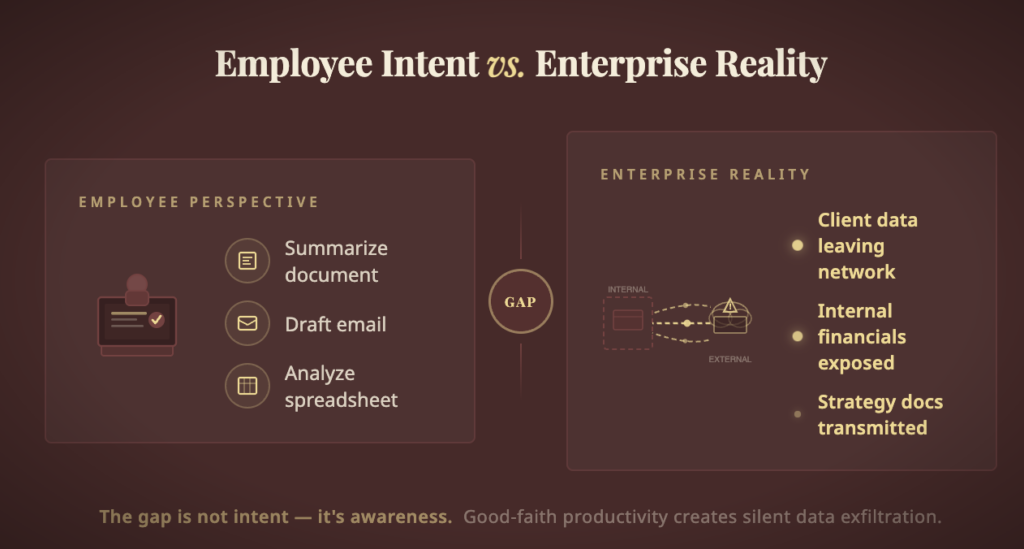

Yes. That’s precisely what makes this so complicated.

Workers using public AI tools aren’t trying to cause harm. They’re trying to do their jobs better. But there’s a massive gap between individual productivity and organizational safety, and public AI tools were designed to close the first gap without any regard for the second.

Here’s what’s actually leaving the building every time someone pastes data into a public AI tool:

💡 What employees think they’re doing: Summarizing a doc, drafting an email, running a quick analysis.

🚨 What’s actually happening: Proprietary data, client names, internal financials, and strategy memos are being sent to external servers, potentially cached, logged, and used for model retraining.

And once it’s out? There’s no “undo.”

The Numbers That Woke Up the C-Suite

For years, enterprise leaders looked the other way. The productivity gains felt real. The risks felt abstract. Then the data started landing on desks, and it was impossible to ignore.

| The Shadow AI Reality | Stat |
| --- | --- |
| 🔍 Enterprises with zero AI tool visibility | 67% |
| 😰 Enterprises that have already had an AI-related data incident | 80% |
| 💸 Additional breach cost from shadow AI | ~$670,000 per incident |
| 📅 Average days to detect a shadow AI breach | 247 days |
| 🔒 Organizations with NO basic AI data controls | 83% |
| ⚠️ Security leaders expecting a shadow AI breach within 12 months | 49% |

That 247-day number deserves its own moment. Eight months. That’s how long, on average, a company is hemorrhaging data before anyone notices. In that window, customer records get exposed, competitive intelligence leaks, and compliance violations accumulate silently.

The abstract became very, very concrete.

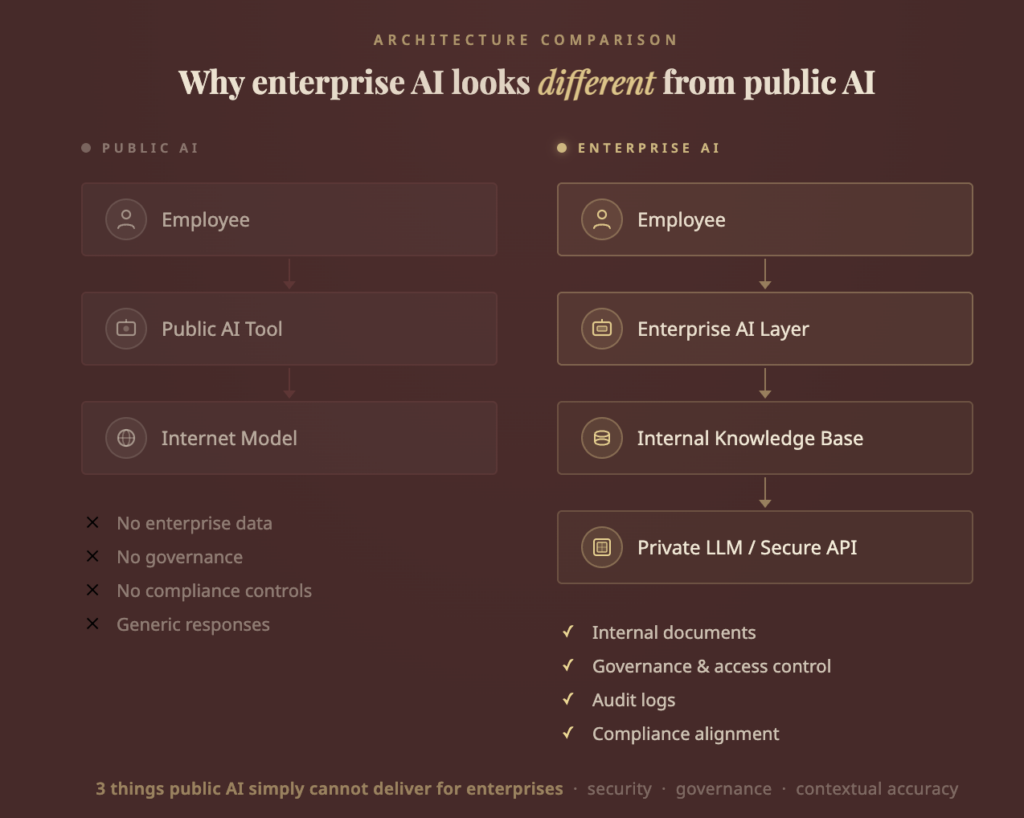

3 Things Public AI Simply Cannot Deliver for Enterprises

1. “Where Did Our Data Go?”: The Control Problem

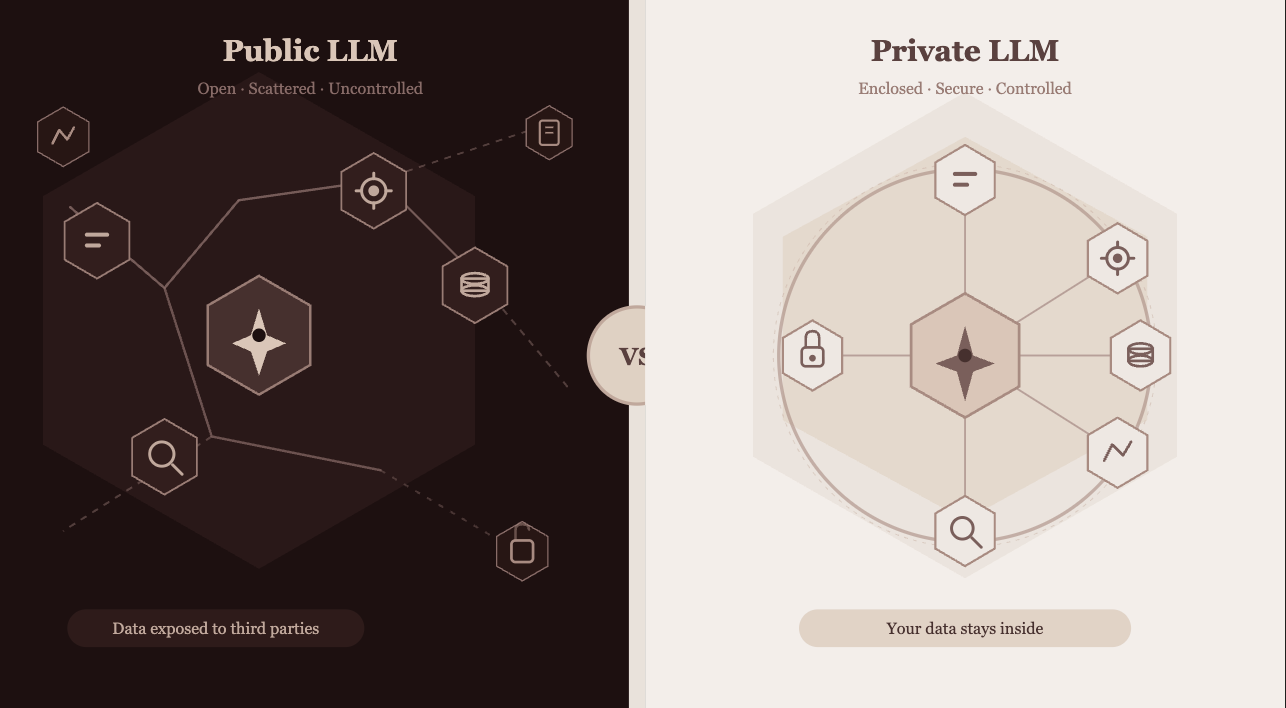

A public AI tool is a black box with an internet connection.

When your employee uses it, your data travels to servers you don’t own, in jurisdictions you may not have approved, processed by infrastructure you have zero insight into. Traditional security tools, built to monitor files and emails, weren’t designed to catch a natural language conversation slipping out the back door.

The exposure points are everywhere:

- Data leakage → Confidential reports, financial models, and client data shared externally with no audit trail

- IP theft risk → Strategy docs or product plans fed into public tools can create murky ownership questions and competitive exposure

- Vendor opacity → No contractual guarantee about what happens to your data after it’s submitted

- Cross-border processing → Data processed outside your approved jurisdictions means automatic regulatory violations in many frameworks
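One way to picture closing that gap is a screening step that sits between the employee and the AI tool and redacts obvious sensitive strings before a prompt leaves the building. The sketch below is a minimal illustration, not a real DLP product: the pattern names, the regexes, and the `screen_prompt` helper are all assumptions made for this example.

```python
import re

# Illustrative patterns only (assumed for this sketch); a real deployment
# would rely on a vetted DLP ruleset rather than three regexes.
PATTERNS = {
    "email": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "card_number": re.compile(r"\b(?:\d[ -]?){13,16}\b"),
    "confidential_marker": re.compile(r"\b(?:confidential|internal only)\b", re.IGNORECASE),
}


def screen_prompt(text: str) -> tuple[str, list[str]]:
    """Redact matches and return (redacted_text, names of the patterns that fired)."""
    findings = []
    for name, pattern in PATTERNS.items():
        text, count = pattern.subn(f"[REDACTED:{name}]", text)
        if count:
            findings.append(name)
    return text, findings


redacted, findings = screen_prompt(
    "CONFIDENTIAL: send the renewal quote to jane.doe@example.com by Friday."
)
print(findings)
print(redacted)
```

The useful part is less the redaction than the `findings` list: logging it gives you the audit trail that a raw paste into a public tool never leaves behind.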

2. Regulators Are Coming and They’re Not Interested in “We Didn’t Know”

Here’s the uncomfortable truth: ignorance is not a compliance defense.

The regulatory landscape around AI is tightening globally, and it’s doing so faster than most enterprise legal teams anticipated. The laws don’t make exceptions for employees who were “just being productive.”

| Regulation | What It Means for Public AI Use | Potential Penalty |
| --- | --- | --- |
| GDPR (EU) | Data processed outside approved systems triggers automatic violation | Up to 4% of global annual revenue |
| HIPAA (US) | Any patient data in a public AI tool = breach; only 35% of orgs track AI usage | Up to $1.9M per violation category |
| EU AI Act | Transparency and risk-classification obligations for all AI deployed at scale | Up to €35M or 7% of global turnover |
| PCI DSS | Financial data in unsanctioned tools risks losing payment processing rights | Loss of merchant status + fines |
| SOC 2 | Undocumented AI usage undermines audit compliance across all trust criteria | Loss of certification |

3. ChatGPT Has Never Heard of Your Business, and That’s a Real Problem

Beyond security and compliance, there’s a performance ceiling that enterprises keep bumping into.

Public LLMs know everything about the internet. They know nothing about your company.

They don’t know:

- Your internal processes or institutional knowledge

- Your client relationships or account history

- Your proprietary methodologies or frameworks

- Your industry’s specific regulatory language or risk criteria

For a quick draft, a brainstorm, or even experimenting with an AI ad maker for low-risk creative tasks? Good enough. For a clinical decision support tool, a legal document review system, or a customer-facing support agent? Not even close.

“The companies winning with AI aren’t using the most AI. They’re using AI that’s actually trained on their world.”

Enterprise-grade AI (fine-tuned models, RAG systems built on internal knowledge bases, private deployments) doesn’t just outperform generic tools. It compounds. Every document it learns from makes it more accurate for your specific context. Public tools reset to zero every conversation.
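To make the RAG idea concrete, here is a toy sketch of the retrieval half: find the internal snippet most relevant to a question, then hand it to the model as context. Everything in it is an assumption for illustration. The `DOCS` snippets are invented, and plain word-overlap cosine similarity stands in for the embeddings and vector store a production system would normally use.

```python
import math
import re
from collections import Counter

# Hypothetical internal snippets; a real system would index actual documents.
DOCS = {
    "refund-policy": "Enterprise refunds require approval from the account director within 30 days.",
    "onboarding": "New client onboarding starts with a security review and a kickoff call.",
    "escalation": "Escalate priority one incidents to the on-call engineering manager.",
}


def vectorize(text: str) -> Counter:
    """Turn text into a bag of lowercase word counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(question: str, k: int = 1) -> list[str]:
    """Return the ids of the k snippets most similar to the question."""
    q = vectorize(question)
    ranked = sorted(DOCS, key=lambda doc_id: cosine(q, vectorize(DOCS[doc_id])), reverse=True)
    return ranked[:k]


def build_prompt(question: str) -> str:
    """Assemble the prompt a privately hosted model would receive."""
    context = "\n".join(DOCS[doc_id] for doc_id in retrieve(question))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


print(retrieve("Who approves enterprise refunds?"))
```

Adding a document to `DOCS` immediately changes what the model can answer, which is the compounding effect in miniature.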

So What Are Enterprises Actually Moving Towards?

Q: If not public AI, then what?

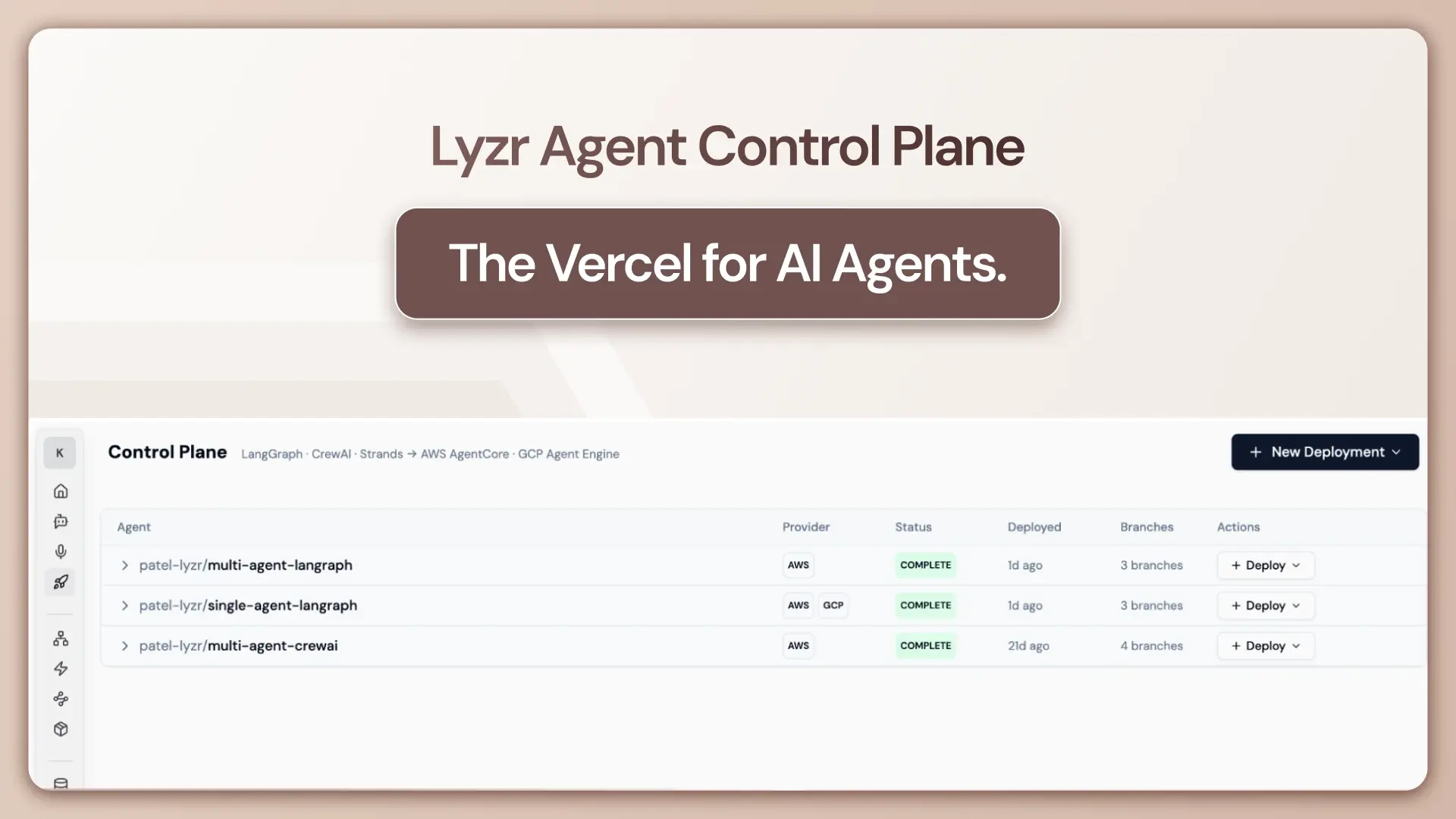

A: Three paths have emerged, and most enterprises use a mix of all three:

| Path | What It Is | Best For |
| --- | --- | --- |
| Private cloud deployment | Running foundation models on enterprise-controlled infrastructure | Max data sovereignty, regulated industries |
| Enterprise-licensed AI | ChatGPT Enterprise, Claude for Enterprise, Gemini for Workspace, with contractual data protections | Mid-market, faster rollout, lower infra cost |
| Custom fine-tuned / RAG models | Models trained on internal docs, processes, and knowledge bases | Deep specialization, competitive differentiation |

Q: Is this just large enterprises, or is the whole market shifting?

A: The whole market. Enterprise generative AI spending hit $13.8 billion in 2024, a 6x increase from $2.3 billion the year before. By 2025, that number reached $37 billion, a jump Menlo Ventures puts at 3.2x year-over-year (measured against the $13.8 billion figure above, it is closer to 2.7x). Private AI infrastructure investment grew 44.5% year-over-year. This is a structural realignment, not a trend.

Q: Which industries are furthest along?

A: Healthcare, financial services, and legal are leading, unsurprisingly, given regulatory pressure. Healthcare AI vertical spend hit $1.5 billion in 2025, a 3x increase in a single year. Technology companies follow closely, but even there, the shift is toward sanctioned, governed tooling rather than the open-access wild west of 2023.

Here’s What the Migration Actually Looks Like (It’s Not a Light Switch)

Enterprises don’t flip off public AI overnight. The migration happens in four stages — and organizations that skip stages tend to regret it.

Stage 1 → Audit

Discover what’s actually running. Most companies are shocked. Shadow AI inventories regularly turn up 5–10x more tools than IT thought existed.
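A first-pass audit can be as simple as counting traffic to known AI domains in your proxy logs. The sketch below assumes a made-up log format (`<user> <domain> <bytes_sent>`) and a deliberately tiny watchlist; both are placeholders to adapt to whatever your gateway actually records.

```python
from collections import Counter

# Illustrative watchlist; a real inventory would track far more domains.
AI_DOMAINS = {"chatgpt.com", "chat.openai.com", "claude.ai", "gemini.google.com"}

# Hypothetical proxy log lines in the form "<user> <domain> <bytes_sent>".
LOG_LINES = [
    "alice chatgpt.com 48210",
    "bob intranet.example.com 1200",
    "carol claude.ai 9130",
    "alice chatgpt.com 15400",
]


def inventory(lines):
    """Count requests and distinct users per watched AI domain."""
    hits, users = Counter(), {}
    for line in lines:
        user, domain, _bytes_sent = line.split()
        if domain in AI_DOMAINS:
            hits[domain] += 1
            users.setdefault(domain, set()).add(user)
    return {d: {"requests": hits[d], "users": sorted(users[d])} for d in hits}


for domain, stats in inventory(LOG_LINES).items():
    print(domain, stats)
```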

Stage 2 → Govern

Stand up an AI governance framework or center of excellence. Define what’s approved, what’s prohibited, and who owns the decision.
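Those three decisions (approved, prohibited, owner) are easier to enforce when they live as data rather than in a PDF. Here is one possible shape, offered as a sketch: the tool names, the owner label, and the data classes are hypothetical, and the deny-by-default rule is a design choice of this example rather than a standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolPolicy:
    status: str                            # "approved" or "prohibited"
    owner: str                             # who owns the decision
    allowed_data: frozenset = frozenset()  # data classes the tool may receive


# Hypothetical register; tool names, owners, and data classes are placeholders.
REGISTER = {
    "enterprise-assistant": ToolPolicy("approved", "ciso-office", frozenset({"public", "internal"})),
    "free-summarizer": ToolPolicy("prohibited", "ciso-office"),
}


def is_allowed(tool: str, data_class: str) -> bool:
    """Deny by default: unknown tools and unlisted data classes are refused."""
    policy = REGISTER.get(tool)
    return bool(policy and policy.status == "approved" and data_class in policy.allowed_data)


print(is_allowed("enterprise-assistant", "internal"))
print(is_allowed("some-new-chatbot", "public"))
```

Denying unknown tools by default matters here, because the tools nobody has reviewed yet are exactly the ones shadow AI is made of.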

Stage 3 → Replace

Identify the highest-risk use cases (anything touching customer data, financials, or regulated content) and migrate them to approved enterprise alternatives first.
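Deciding what goes first can start as a rough score. The sketch below ranks use cases by the kind of data they touch and how many people use them. The three data categories come from the stage description above; the use cases, the weights, and the choice to multiply by weekly users are assumptions invented for this example.

```python
# Hypothetical use cases discovered during the audit.
USE_CASES = [
    {"name": "marketing copy drafts", "data": set(), "weekly_users": 40},
    {"name": "support ticket summaries", "data": {"customer"}, "weekly_users": 25},
    {"name": "quarterly forecast analysis", "data": {"financial"}, "weekly_users": 5},
    {"name": "claims review", "data": {"customer", "regulated"}, "weekly_users": 8},
]

# Assumed weights: the categories come from the text, the numbers do not.
WEIGHTS = {"customer": 3, "financial": 3, "regulated": 5}


def risk_score(use_case: dict) -> int:
    """Sum the sensitivity weights, then scale by how many people are exposed."""
    sensitivity = sum(WEIGHTS.get(tag, 0) for tag in use_case["data"])
    return sensitivity * use_case["weekly_users"]


migration_order = sorted(USE_CASES, key=risk_score, reverse=True)
for uc in migration_order:
    print(risk_score(uc), uc["name"])
```

Note that the most popular use case lands last because it touches no sensitive data, which is the point of prioritizing by risk rather than by usage.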

Stage 4 → Embed

Roll out training that’s not just “here’s how to use the new tool” but “here’s why this matters.” Enterprises that invest here see 2.7x higher AI proficiency and 4.1x higher user satisfaction compared to orgs that skip it.

The Honest Takeaway: Public AI Wasn’t the Problem, Ungoverned AI Was

There’s a nuance worth landing on before we close.

Public AI tools like ChatGPT and Claude aren’t bad. They’re extraordinary. They proved that AI could be accessible, useful, and genuinely transformative at scale. Without them, enterprise AI adoption would be years behind where it is today.

But tools designed for individuals were never meant to carry the weight of enterprise accountability. They don’t have data residency guarantees. They don’t integrate with your identity management systems. They don’t generate audit logs that satisfy your compliance team. They don’t know your business.

The enterprises moving away from public AI aren’t rejecting the technology. They’re graduating from it, to something built for the context, the scale, and the stakes of a real organization.

🏁 The bottom line: The organizations winning with AI in 2026 aren’t the ones using the most tools. They’re the ones who figured out which tools belong inside the walls — and built the governance to back it up.

Public AI was the gateway. Enterprise AI is the destination.
