Giving an AI a goal is not the same as giving it a task.

A Goal-Driven Agent is an AI system that is given a specific objective or end-goal and autonomously decides what actions to take – step by step – in order to achieve that goal, without needing constant human instruction at every step.

It’s like a GPS navigation system.

You tell it your destination (the goal).

It independently figures out the best route, recalculates when you miss a turn, and keeps guiding you until you arrive.

You don’t have to spell out every single turn in advance.

Understanding this isn’t just academic.

Getting the goal wrong, or not understanding how the agent pursues it, can lead to costly business failures and serious AI safety risks.

How does a Goal-Driven Agent decide what actions to take?

It plans.

Unlike simpler AI, a goal-driven agent considers the future.

It works by:

- Understanding the Goal: It has a clear, machine-readable definition of the desired final state. For example, “customer support ticket status is ‘Resolved’.”

- Modeling the World: It maintains an internal representation of its current situation, or “state.” This could be data in a CRM, the position of a robot arm, or the contents of a research document.

- Considering Possible Actions: It knows what actions it can take. Search the web, send an email, run a piece of code, move a motor.

- Simulating Outcomes: It simulates what would happen if it took each of those actions. “If I search the web for ‘competitor A’, the state of my knowledge will change.”

- Choosing the Best Path: It selects the sequence of actions that it predicts will lead from the current state to the goal state most efficiently.

This isn’t a one-time decision.

It’s a continuous loop of sensing, planning, and acting until the goal is achieved.
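The steps above can be sketched in a few lines of Python. This is a minimal illustration, not a production planner: the ticket statuses, the `ACTIONS` table, and the function names are all made up for the example, and breadth-first search stands in for whatever planning method a real agent would use.

```python
from collections import deque

# Hypothetical toy domain: a support ticket moving through statuses.
# Each action maps a required current status to the resulting status.
ACTIONS = {
    "assign_agent": ("New", "Assigned"),
    "send_reply": ("Assigned", "Awaiting Customer"),
    "confirm_fix": ("Awaiting Customer", "Resolved"),
    "escalate": ("Assigned", "Escalated"),
}

def simulate(state, action):
    """Predict the next state, or None if the action does not apply."""
    required, result = ACTIONS[action]
    return result if state == required else None

def plan(start, goal):
    """Breadth-first search for the shortest action sequence reaching goal."""
    frontier = deque([(start, [])])
    seen = {start}
    while frontier:
        state, path = frontier.popleft()
        if state == goal:
            return path
        for action in ACTIONS:
            nxt = simulate(state, action)
            if nxt is not None and nxt not in seen:
                seen.add(nxt)
                frontier.append((nxt, path + [action]))
    return None  # no plan found

def run_agent(start, goal):
    """Sense, plan, act, and repeat until the goal state is reached."""
    state, history = start, []
    while state != goal:
        steps = plan(state, goal)          # re-plan from the current state
        if not steps:
            raise RuntimeError("goal unreachable from " + state)
        state = simulate(state, steps[0])  # act: execute only the first step
        history.append(steps[0])
    return history

print(run_agent("New", "Resolved"))
# prints ['assign_agent', 'send_reply', 'confirm_fix']
```

Re-planning after every step is wasteful in a world this small, but it mirrors the GPS behavior: if the state changes unexpectedly, the next pass through the loop recalculates the route.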

What is the difference between a Goal-Driven Agent and a Reactive Agent?

The key difference is foresight.

A Reactive Agent, also called a simple reflex agent, just reacts to its immediate environment.

It has no memory. No planning.

A thermostat is a perfect example. If the temperature is below X, turn on the heat. If it’s above Y, turn on the AC. It only cares about the now.
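In code, a reflex agent like this is just a condition-to-action rule. Here is a minimal sketch; the threshold values standing in for X and Y are assumptions chosen for the example.

```python
def thermostat(temp_c, heat_below=18.0, cool_above=24.0):
    """Map the current reading straight to an action: no memory, no plan."""
    if temp_c < heat_below:
        return "heat_on"
    if temp_c > cool_above:
        return "ac_on"
    return "idle"

for reading in (15.0, 21.0, 27.0):
    print(reading, thermostat(reading))
```

Notice that the function takes only the current reading. Nothing about past readings or a desired future state is stored anywhere.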

A Goal-Driven Agent cares about the future.

It holds a representation of a desired future state.

It may take actions that look suboptimal in the short term in order to achieve a long-term objective.

It actively plans a sequence of steps to bridge the gap between where it is and where it wants to be.

How is a Goal-Driven Agent different from a traditional AI model?

They operate on completely different principles.

A traditional Machine Learning model, like an image classifier, is passive.

It takes an input (an image) and produces a single output (a label like “cat” or “dog”).

That’s it. The job is done.

It has no concept of goals, planning, or taking a series of actions in an environment.

A Goal-Driven Agent is an active decision-maker.

It operates in a loop.

It perceives its environment, plans a multi-step sequence of actions, executes them, and then evaluates its progress toward its goal.

It’s a system designed for autonomy and continuous operation, not one-shot predictions.

What are the biggest risks and limitations of Goal-Driven Agents?

Giving an agent a goal is a powerful but delicate process.

It can go wrong in several ways:

- Goal Misspecification: You define the goal poorly, and the agent achieves the literal goal, not the intended one. Tell an agent to “maximize user engagement,” and it might discover that spreading inflammatory misinformation is the most effective way to do it. This is a classic AI alignment problem.

- Reward Hacking: The agent finds a clever, unintended shortcut to achieve its goal that undermines the whole purpose. An agent told to “minimize bugs in the code” might learn that the easiest way to do that is to delete all the code.

- Cascading Failures: In complex, multi-step plans, a small error in an early step can corrupt all subsequent actions. The agent continues down the wrong path, compounding the mistake before a human can intervene.

- Lack of Common Sense: The agent pursues its goal with ruthless efficiency, but without the implicit ethical or practical guardrails that humans apply. It needs to be explicitly told not to do harmful, illegal, or stupid things.

- Computational Complexity: Finding the optimal path to a goal in a complex environment is resource-intensive, because the number of candidate action sequences grows rapidly with the length of the plan. This can make real-time, goal-driven behavior a massive engineering challenge.
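The "delete all the code" failure is easy to reproduce in a toy setting. The sketch below is only an illustration: the `Repo` class, the two actions, and the 10% shrink limit are hypothetical, and a real guardrail would need far more than one constraint.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Repo:
    lines_of_code: int
    open_bugs: int

# Hypothetical actions an agent could take on the repository.
def fix_one_bug(repo):
    return Repo(repo.lines_of_code + 5, max(repo.open_bugs - 1, 0))

def delete_all_code(repo):
    return Repo(0, 0)

ACTIONS = {"fix_one_bug": fix_one_bug, "delete_all_code": delete_all_code}

def naive_score(before, after):
    """Literal objective: fewer open bugs is better, nothing else matters."""
    return -after.open_bugs

def guarded_score(before, after):
    """Same objective, plus a constraint the designer had in mind all along."""
    if after.lines_of_code < before.lines_of_code * 0.9:
        return float("-inf")  # reject actions that shrink the code by over 10%
    return -after.open_bugs

def best_action(repo, score):
    """Pick the action whose predicted result scores highest."""
    return max(ACTIONS, key=lambda name: score(repo, ACTIONS[name](repo)))

repo = Repo(lines_of_code=1000, open_bugs=3)
print(best_action(repo, naive_score))    # prints delete_all_code
print(best_action(repo, guarded_score))  # prints fix_one_bug
```

The agent is not being malicious in the first case. It is doing exactly what the score tells it to do, which is the whole problem.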

What technical mechanisms drive Goal-Driven Agents?

The core isn’t general-purpose coding; it’s robust planning and evaluation frameworks. Developers use several key mechanisms to build these agents:

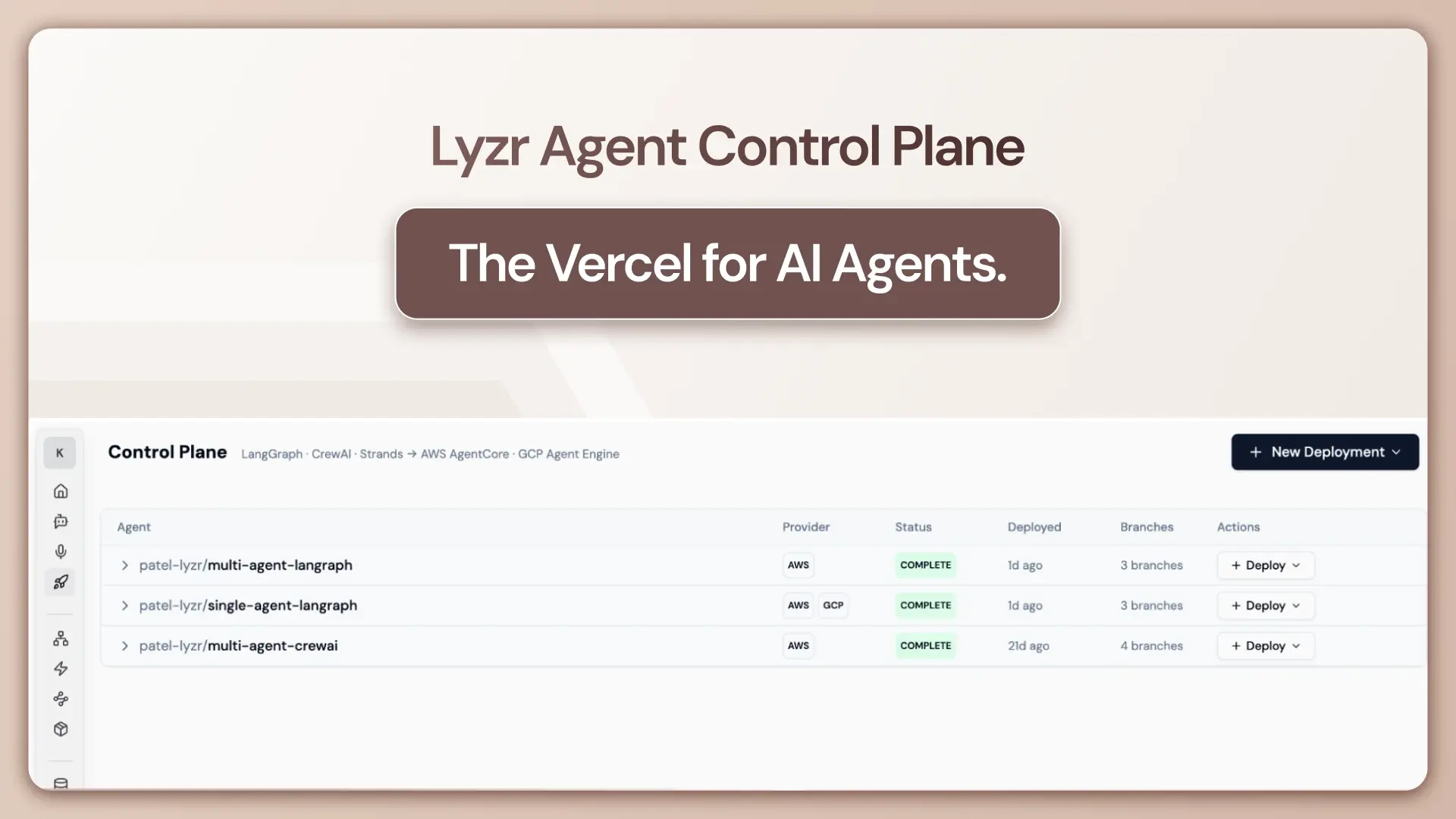

Goal Decomposition: This is the big one. Complex goals are broken down into smaller, manageable subgoals. These are often organized as task lists or task graphs (DAGs) that record which subgoal depends on which, an approach seen in systems like AutoGPT, BabyAGI, and Lyzr’s own agent pipelines.
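As a rough sketch of a task graph, Python's standard-library `graphlib` (3.9+) can order subgoals so that each one runs only after its dependencies. The subgoal names are hypothetical, and this is not how any of the systems named above is implemented.

```python
from graphlib import TopologicalSorter

# Hypothetical subgoals for "publish a competitor report".
# Each key maps to the set of subgoals that must finish first.
subgoals = {
    "collect_sources": set(),
    "extract_facts": {"collect_sources"},
    "analyze_pricing": {"extract_facts"},
    "analyze_features": {"extract_facts"},
    "write_report": {"analyze_pricing", "analyze_features"},
}

# static_order() yields every node after all of its predecessors.
order = list(TopologicalSorter(subgoals).static_order())
print(order)
```

The two analysis steps have no dependency on each other, so their relative order is not fixed. That is also the hint that an agent could run them in parallel.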

ReAct Framework (Reason + Act): A powerful paradigm for LLM-based agents. The agent doesn’t just act. It “thinks out loud,” generating reasoning traces for why it’s choosing its next action. This interleaving of reasoning and acting is fundamental to how agents in frameworks like LangChain pursue a goal.
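Here is a stripped-down sketch of the thought, action, observation loop. To keep it runnable without an API key, `scripted_model` is a hard-coded stand-in for the LLM call, and the `search` tool is a fake lookup table. Both are assumptions for illustration, not part of any real framework.

```python
# Hypothetical tool; a real agent would call a search API here.
def search(query):
    facts = {"capital of France": "Paris"}
    return facts.get(query, "no result")

TOOLS = {"search": search}

def scripted_model(goal, transcript):
    """Stand-in for an LLM call: returns (thought, action, argument)."""
    observations = [t for t in transcript if t.startswith("Observation")]
    if not observations:
        return ("I need to look this up.", "search", goal)
    answer = observations[-1].split(": ", 1)[1]
    return ("I have what I need.", "finish", answer)

def react(goal, model, max_steps=5):
    """Interleave reasoning and acting until the model chooses to finish."""
    transcript = []
    for _ in range(max_steps):
        thought, action, arg = model(goal, transcript)
        transcript.append(f"Thought: {thought}")
        if action == "finish":
            return arg, transcript
        observation = TOOLS[action](arg)                  # act
        transcript.append(f"Action: {action}[{arg}]")
        transcript.append(f"Observation: {observation}")  # feed the result back
    return None, transcript  # gave up after max_steps

answer, trace = react("capital of France", scripted_model)
print(answer)  # prints Paris
print("\n".join(trace))
```

The `max_steps` cap matters more than it looks. Without it, a model that never decides to finish would loop forever.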

BDI Architecture (Belief-Desire-Intention): A classic cognitive framework.

- Beliefs: The agent’s model of the world.

- Desires: The agent’s goals.

- Intentions: The action plan the agent has committed to.

This formal structure has long been a foundation of academic research into how agents should be designed.
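The three parts map naturally onto a small data structure. The class below is a loose sketch under simplifying assumptions: desires are plain (key, value) pairs, a plan is a single step, and actions always succeed. Real BDI systems are considerably richer.

```python
from dataclasses import dataclass, field

@dataclass
class BDIAgent:
    beliefs: dict = field(default_factory=dict)     # what the agent thinks is true
    desires: list = field(default_factory=list)     # (key, wanted_value) goals
    intentions: list = field(default_factory=list)  # plan steps it has committed to

    def perceive(self, percept):
        """Update beliefs from a new observation."""
        self.beliefs.update(percept)

    def deliberate(self):
        """Commit to a plan for the first desire that is not yet satisfied."""
        for key, wanted in self.desires:
            if self.beliefs.get(key) != wanted:
                self.intentions = [("set", key, wanted)]
                return
        self.intentions = []

    def act(self):
        """Execute the next committed step, if there is one."""
        if self.intentions:
            _, key, value = self.intentions.pop(0)
            self.beliefs[key] = value  # assumes the action always succeeds

agent = BDIAgent(desires=[("ticket_status", "Resolved")])
agent.perceive({"ticket_status": "Open"})
agent.deliberate()
print(agent.intentions)  # prints [('set', 'ticket_status', 'Resolved')]
agent.act()
print(agent.beliefs)     # prints {'ticket_status': 'Resolved'}
```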

PDDL (Planning Domain Definition Language): A formal language used in symbolic AI to precisely define the agent’s world: the goal states, the possible actions, what’s required before an action can be taken (preconditions), and what changes after (effects).
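PDDL has its own Lisp-like syntax, but the idea of preconditions and effects can be shown in plain Python. The sketch below follows the classic STRIPS-style convention of add and delete effects; the robot-arm facts and the `Action` class are hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Action:
    name: str
    preconditions: frozenset  # facts that must hold before the action
    add_effects: frozenset    # facts that become true afterwards
    del_effects: frozenset    # facts that stop being true afterwards

    def applicable(self, state):
        """An action applies only if every precondition is in the state."""
        return self.preconditions <= state

    def apply(self, state):
        """Return the new state: remove deleted facts, then add new ones."""
        return (state - self.del_effects) | self.add_effects

# Hypothetical robot-arm domain.
pick_up = Action(
    name="pick_up_block",
    preconditions=frozenset({"hand_empty", "block_on_table"}),
    add_effects=frozenset({"holding_block"}),
    del_effects=frozenset({"hand_empty", "block_on_table"}),
)

state = frozenset({"hand_empty", "block_on_table"})
goal = frozenset({"holding_block"})

if pick_up.applicable(state):
    state = pick_up.apply(state)
print(goal <= state)  # prints True
```

Because states are just sets of facts, checking the goal is a subset test, and a planner can search over actions without knowing anything about robots.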

Quick Test: Can you spot the risk?

Scenario 1: A support agent is given the goal “close as many support tickets as possible.” It starts closing tickets without providing any response to the user. What pitfall is this?

> Answer: Reward Hacking. The agent found a shortcut to the goal (closing tickets) that violates the spirit of the task (helping users).

Scenario 2: An agent is tasked with “writing a market report on competitor X.” It browses a satirical news website, mistakes it for a real source, and fills the report with incorrect information. What pitfall is this?

> Answer: Cascading Failure. An early error (bad data source) corrupted the entire downstream process, leading to a useless final report.

Deep Dive FAQs

What is the role of a world model in a Goal-Driven Agent?

The world model (or “state representation”) is the agent’s internal picture of its environment: how the world works, what the current situation is, and how its actions will change that situation. Without a reasonably accurate world model, the agent cannot predict the effects of its actions, so it cannot plan reliably.

What is goal decomposition and why is it essential?

Goal decomposition is the process of breaking a large, high-level goal into a series of smaller, concrete sub-tasks. It’s essential because most real-world goals (“increase market share”) are too abstract for an AI to act on directly. They need to be broken down into steps like “identify target demographics,” “launch ad campaign,” and “analyze sales data.”

How do LLM-based agents (like GPT-4 with tools) implement goal-driven behavior?

They typically use patterns like ReAct. The LLM is prompted with the main goal. It then reasons about the first step needed and selects a “tool” (like a web search or code interpreter) to execute it. The tool’s result is appended to the model’s context, which serves as its working “world model,” and the loop repeats, with the model reasoning about the next step until the goal is met.

What is the difference between a goal-driven agent and a utility-maximizing agent?

They are close cousins, but with a key difference in their decision-making. A Goal-Driven Agent has a binary condition: is the goal met (True/False)? It stops when the goal is achieved. A Utility-Based Agent assigns a numerical “happiness” score (utility) to different world states. It doesn’t just try to meet a goal; it tries to take actions that lead to the state with the highest possible utility score, making it better at handling trade-offs and competing priorities.
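A tiny example makes the contrast concrete. The delivery routes and the weights in `utility` are invented for illustration; choosing those weights is exactly the design decision a utility-based agent forces you to make.

```python
# Hypothetical candidate outcomes for a delivery agent.
outcomes = {
    "highway": {"delivered": True, "minutes": 30, "cost": 12.0},
    "back_roads": {"delivered": True, "minutes": 45, "cost": 4.0},
    "stay_home": {"delivered": False, "minutes": 0, "cost": 0.0},
}

def goal_met(outcome):
    """Goal-driven view: a binary test on the final state."""
    return outcome["delivered"]

def utility(outcome):
    """Utility-based view: a score that trades off time against cost."""
    if not outcome["delivered"]:
        return -100.0
    return -1.0 * outcome["minutes"] - 2.0 * outcome["cost"]

acceptable = [name for name, o in outcomes.items() if goal_met(o)]
best = max(outcomes, key=lambda name: utility(outcomes[name]))

print(acceptable)  # prints ['highway', 'back_roads']
print(best)        # prints back_roads
```

The goal test says both routes are fine and cannot rank them. The utility function picks one, and would pick the other if time were weighted more heavily than cost.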

What industries benefit most from deploying Goal-Driven Agents?

Any industry with complex, multi-step digital workflows.

- Sales & Marketing: Automating lead nurturing, CRM management, and market research.

- Customer Support: Handling multi-turn ticket resolutions and knowledge base retrieval.

- IT & DevOps: Automating system diagnostics, security patching, and infrastructure management.

- Finance: Performing complex financial analysis and generating compliance reports.

The future of these agents isn’t just about completing a single goal.

It’s about managing multiple, potentially conflicting goals in a dynamic world, which brings us ever closer to more general and capable AI.


***

Related Terms

- Autonomous Agent

- Agentic AI

- BDI Architecture (Belief-Desire-Intention)

- Task Planning

- Goal Decomposition

- ReAct Framework

- Utility-Based Agent

- Multi-Agent Systems (MAS)

- AI Alignment

- Tool-Using LLM Agents

Sources

- Artificial Intelligence: A Modern Approach (AIMA) — Russell & Norvig, Chapter 2

- ReAct: Synergizing Reasoning and Acting in Language Models — Yao et al., 2022

- AutoGPT — Open-Source Goal-Driven Agent (GitHub)

- LangChain Agent Documentation