TL;DR – Key Takeaways

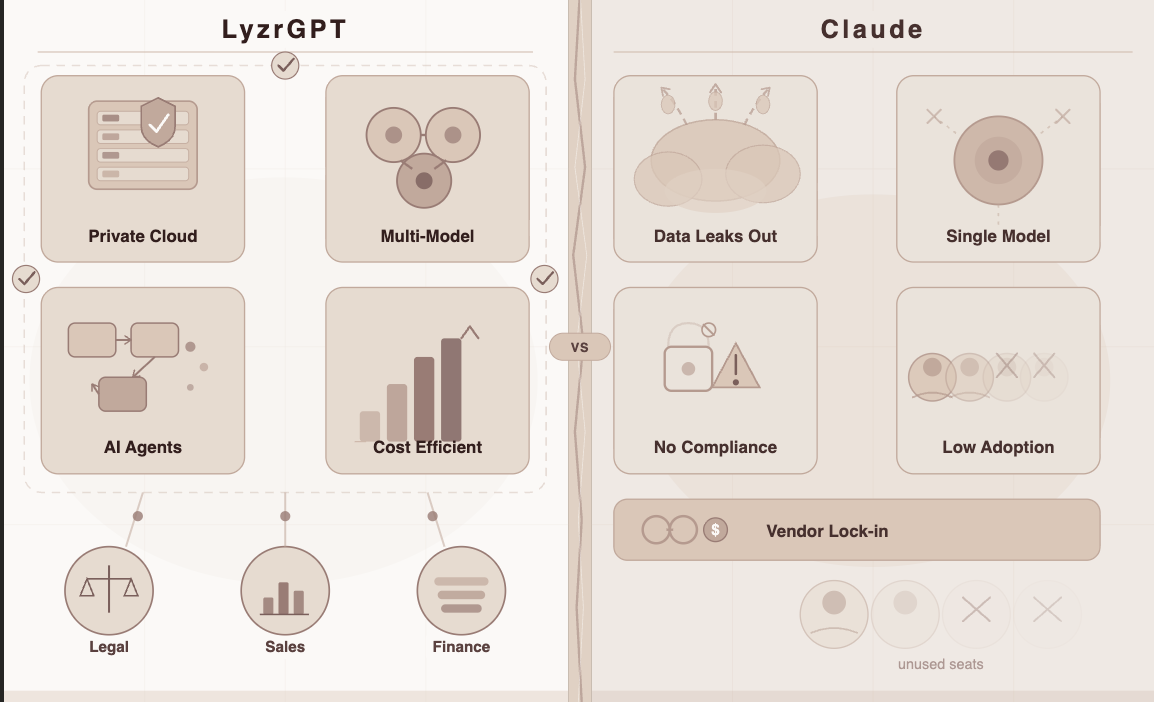

- Claude is excellent for individuals but creates data, cost, and vendor lock-in problems at enterprise scale

- Enterprise AI platforms need five things: private deployment, multi-model support, consumption pricing, pre-built agents, and robust governance

- Per-seat pricing becomes expensive fast – at 5,000 users, you’re paying for people who barely use the tool

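- Data residency isn’t optional – HIPAA, GDPR, and industry regulations restrict where regulated data can be stored and processed, and many enterprises can only meet those rules by keeping data in their own environment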

- LyzrGPT offers a complete alternative with VPC deployment, model-agnostic architecture, and purpose-built agentic workflows for every department

It starts the same way for almost everyone.

You discover Claude. Maybe a colleague shares a link, maybe you stumble across it on your own. You ask it something, and the response genuinely surprises you – thoughtful, well-reasoned, a little better than you expected.

So you use it more. You draft emails with it, summarize long documents, think through problems out loud. It becomes a quiet productivity habit.

Then at some point, someone in your organization says, “Hey, should we roll this out more broadly?”

And that’s when things get complicated.

The Enterprise AI Gap Nobody Talks About

Here’s the thing: Claude is a genuinely impressive product. For an individual, or even a team of ten doing everyday knowledge work? Brilliant.

But enterprise AI isn’t about ten people. It’s about three hundred. Or three thousand. And at that scale, three very uncomfortable questions surface:

- “Where exactly does our data go?”

- “How much is this going to cost us at 5,000 users?”

- “What happens if a better model comes out tomorrow?”

Let’s take each one honestly.

The Data Residency Problem

When you use Claude, your prompts – along with every piece of context you include in them – travel to Anthropic’s servers. For a consumer user, that’s perfectly fine. But for enterprise teams, it’s a different story:

- A hospital processing patient records can’t send that data to a vendor without a business associate agreement and the right safeguards in place – anything less is a HIPAA violation waiting to happen

- A bank analyzing loan applications is working with data that regulators explicitly govern

- An insurer reviewing claims has confidentiality obligations that SaaS deployments simply can’t satisfy

- A law firm drafting strategy documents can’t risk privileged information sitting in a shared cloud

HIPAA doesn’t care that the model gave a great answer. GDPR doesn’t care that the vendor has good intentions. If regulated data moved outside your controlled environment without the agreements and safeguards those rules require, you have a compliance problem.

This is where AI governance for enterprises becomes non-negotiable, not just a checkbox exercise.

The Cost Problem at Scale

Claude’s enterprise tier – like almost every enterprise AI tool on the market – charges per seat. Here’s what that looks like in practice:

| Team Size | Monthly Cost (Estimated) | Reality Check |
| --- | --- | --- |
| 50 users | Manageable | Looks fine in a pilot |
| 500 users | Expensive | Budget conversations start |
| 5,000 users | Painful | You’re paying for people who log in twice a month |

Most enterprise AI spend quietly goes unused because adoption is uneven – some teams live in the tool, others barely touch it. Per-seat pricing punishes you for that natural variation.

The math breaks down fast. If 40% of your licensed users only use AI tools occasionally, you’re still paying full price for every one of those seats. That’s not just inefficient – it’s unsustainable.
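To make the 40% scenario concrete, here is a minimal Python sketch that compares the two billing models. Every number in it (seat price, per-request price, request volumes) is a made-up assumption for illustration, not a quote from Anthropic, Lyzr, or any other vendor, and the function names are hypothetical.

```python
def per_seat_cost(seats: int, price_per_seat: float) -> float:
    """Monthly cost when every licensed seat is billed, used or not."""
    return seats * price_per_seat


def consumption_cost(requests_per_user: list[int], price_per_request: float) -> float:
    """Monthly cost when only actual requests are billed."""
    return sum(requests_per_user) * price_per_request


# Hypothetical inputs: none of these figures come from a real price list.
SEATS = 5_000
PRICE_PER_SEAT = 30.0      # assumed monthly price per seat
PRICE_PER_REQUEST = 0.05   # assumed blended price per request

# Assume 60% of users are active (400 requests/month)
# and 40% are occasional (10 requests/month).
active = [400] * (SEATS * 60 // 100)
occasional = [10] * (SEATS * 40 // 100)

seat_total = per_seat_cost(SEATS, PRICE_PER_SEAT)
usage_total = consumption_cost(active + occasional, PRICE_PER_REQUEST)
idle_seat_spend = per_seat_cost(len(occasional), PRICE_PER_SEAT)

print(f"Per-seat bill:            {seat_total:,.0f}")
print(f"Consumption bill:         {usage_total:,.0f}")
print(f"Spent on occasional seats: {idle_seat_spend:,.0f}")
```

Under these made-up inputs, the occasional users account for 40% of the per-seat bill but under 2% of the consumption bill. Swap in your own seat price and usage data before drawing any conclusions; with heavy, uniform usage the comparison can tilt the other way.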

The Vendor Lock-In Problem

Claude is one model, from one vendor, on one roadmap. That means:

- If Anthropic raises prices → you’re stuck with them

- If a better model launches from another lab → you can’t easily switch

- If your use cases evolve → you’re limited to whatever Claude does next

- If regulatory requirements change → you’re dependent on one vendor’s roadmap

For enterprises that plan 12-24 months ahead, that’s a strategic liability. The AI landscape changes quarterly. Being locked into a single model provider is like signing a long-term contract with a smartphone maker in 2007 – the market will move whether you can or not.

So the Search for a Claude Alternative Begins

If you’ve ever typed “Claude alternative” into a search bar, you know the results get confusing fast. Dozens of tools claim to be enterprise-ready, secure, or “ChatGPT but private.”

Most are wrappers – a frontier model, a UI on top, some branding, and a sales deck.

The real question isn’t which AI model is smartest – that race changes every few months anyway. The real question is:

Which platform is actually built for how enterprises work?

That means filtering for the things that actually matter:

- ✅ Private deployment inside your own environment

- ✅ Multi-model flexibility – no vendor lock-in

- ✅ Pricing that scales with usage, not headcount

- ✅ Pre-built workflows for real business functions

- ✅ Governance, audit trails, and compliance architecture

- ✅ Integration with existing enterprise systems

When you apply that filter, one platform keeps rising to the top: LyzrGPT.

What LyzrGPT Actually Is

“We built LyzrGPT because enterprises kept telling us the same thing: ‘We want ChatGPT-level intelligence, but we can’t risk our data leaving our environment.’” – Lyzr AI Team

Launched in March 2026, LyzrGPT is a private, multi-model AI platform built to sit inside your environment – not alongside it. It’s not a chatbot. It’s not a wrapper. It’s what enterprise AI looks like when it’s designed for the way businesses actually operate.

Here’s what that means in practice.

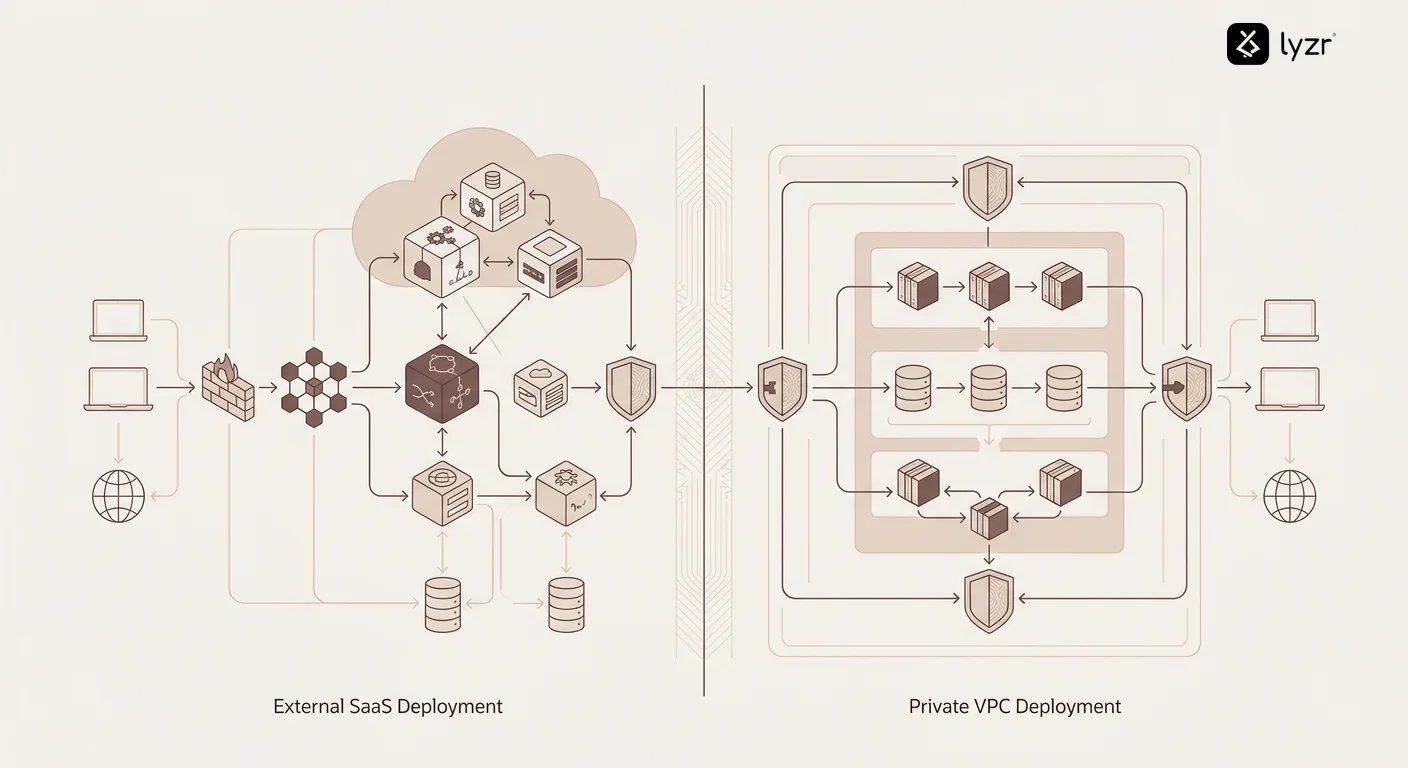

1. Your Data Never Leaves Your Environment

LyzrGPT deploys entirely within your own VPC or on-premise infrastructure. Nothing leaves your perimeter.

| Scenario | With Claude | With LyzrGPT |
| --- | --- | --- |
| Employee queries a policy doc | Data sent to Anthropic’s servers | Stays within your VPC |
| HR processes a job application | Leaves your environment | Private and contained |
| Finance runs a forecast | External cloud processing | On-prem, fully controlled |
| Legal drafts a strategy doc | Shared SaaS infrastructure | Zero external exposure |

For regulated industries, this isn’t a feature – it’s the foundation everything else is built on. This approach to enterprise automation ensures compliance from day one.

2. One Interface, Every Model – No Lock-In

LyzrGPT is completely model-agnostic. Instead of being tied to Claude’s roadmap, you get:

- GPT-4, Claude, Gemini, and others – all accessible from one interface

- Intelligent auto-routing – LyzrGPT picks the right model for each task automatically

- Mid-conversation switching – change models without losing context

- Cost optimization built in – simple queries go to faster, cheaper models; complex ones go to frontier models

Think about what this means strategically. When a better model comes out – and they always do – you adopt it without migrating your entire stack. You stay current without starting over.

This is fundamentally different from traditional single-model approaches. You’re not just using one AI model – you’re using the best available model for each specific task.
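The cost-aware routing idea (simple queries to a cheaper model, complex ones to a frontier model) can be sketched in a few lines of Python. This is not how LyzrGPT routes requests; the tier names, prices, keyword list, and word-count threshold below are all hypothetical, and a production router would likely use a classifier rather than keywords.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelTier:
    name: str                  # placeholder label, not a real model identifier
    cost_per_1k_tokens: float  # assumed price, for illustration only


# Hypothetical tiers; a real deployment would list the models it can reach.
CHEAP = ModelTier("small-fast-model", 0.0005)
FRONTIER = ModelTier("frontier-model", 0.0150)

# Assumed signals that a prompt needs deeper reasoning.
COMPLEX_HINTS = ("analyze", "compare", "draft a contract", "step by step", "forecast")


def route(prompt: str, max_simple_words: int = 40) -> ModelTier:
    """Pick a tier using two crude signals: prompt length and keyword hints."""
    text = prompt.lower()
    is_long = len(text.split()) > max_simple_words
    looks_complex = any(hint in text for hint in COMPLEX_HINTS)
    return FRONTIER if (is_long or looks_complex) else CHEAP


print(route("What is our PTO policy?").name)
print(route("Compare these two vendor contracts and analyze the indemnity clauses.").name)
```

Keeping the routing decision in one small function means the policy can be tuned, or a tier swapped for a newer model, without touching the code that calls it.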

3. Consumption-Based Pricing That Actually Scales

LyzrGPT doesn’t charge per seat. You pay for what’s actually used.

Here’s why that matters at scale:

- Uneven adoption? No problem. Heavy users and occasional users don’t cost the same

- Scaling up? Costs grow with actual usage – not with headcount

- Piloting new teams? No seat minimums dragging up your bill

- Real ROI visibility – you see exactly what’s being used and what it costs

For large organizations, this isn’t a small distinction. It’s the difference between AI being a strategic investment and AI being a quarterly budget argument.

One financial services company saw their AI costs drop 47% in the first quarter after switching from per-seat to consumption pricing – not because they used less AI, but because they stopped paying for seats that barely logged in.

4. Pre-Built Agents for Every Team

This is where LyzrGPT goes furthest beyond a simple Claude alternative. It ships with a full library of purpose-built AI agents for enterprises – not generic writing assistants, but workflows that plug directly into how your teams operate.

| Team | Agents Available | What It Replaces |
| --- | --- | --- |
| Sales & Marketing | AI SDR, Deal Nurturer, Lead Enrichment, ABM Agent | Manual outreach, pipeline follow-ups, prospect research |
| Banking & Fintech | Loan Origination, Loan Servicing, KYC Processing, Regulatory Monitoring | Weeks of custom dev work for each workflow |
| Insurance | Claims Processing, Policy Underwriting, Litigation Clause Extraction, Compliance Checks | Manual review queues and compliance bottlenecks |
| HR & Internal Ops | AI Hiring Assistant, Document Intelligence, Approval Workflow Automation | Repetitive screening, doc hunting, slow approvals |

These aren’t prompts you tweak and hope for the best. They’re agentic workflows that integrate with your CRM, ERP, databases, and internal knowledge bases – and take action.

There’s a big difference between AI that tells you what to do and AI that does it.

5. Memory That Persists Across Sessions

One of the most frustrating limitations of Claude and similar tools is that a new conversation often starts fresh. You lose context. You repeat yourself. You re-upload documents.

LyzrGPT maintains conversational memory and context across sessions, teams, and workflows:

- Reference decisions made three weeks ago without re-explaining

- Build knowledge incrementally rather than starting over

- Share context across team members working on the same project

- Connect insights from different departments into a unified view

This is critical for complex enterprise workflows where context builds over weeks or months, not minutes.

6. Governance That Holds Up Under Scrutiny

Enterprise AI isn’t just about capabilities – it’s about control. LyzrGPT includes governance features that most alternatives skip:

- Complete audit trails – every query, every response, every action logged

- Role-based access control – different permissions for different teams and functions

- Data lineage tracking – understand exactly what data influenced which decisions

- Compliance reporting – automated documentation for regulatory requirements

- Model behavior monitoring – detect and flag anomalies or drift

When your auditors ask “how do you know your AI is compliant?” you need better answers than “the vendor says it’s fine.”
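As a rough illustration of how two of these controls fit together, the Python sketch below pairs a role-based access check with an audit record for every attempt, allowed or denied. It is a toy model under stated assumptions, not LyzrGPT's implementation: the roles, resources, field names, and in-memory list are all hypothetical, and a real system would pull roles from an identity provider and write to an append-only store.

```python
import json
from datetime import datetime, timezone

# Hypothetical role-to-permission mapping.
ROLE_PERMISSIONS = {
    "hr_analyst": {"hr_documents"},
    "finance_analyst": {"finance_reports"},
    "admin": {"hr_documents", "finance_reports"},
}

audit_log: list[str] = []  # stand-in for an append-only audit store


def is_allowed(role: str, resource: str) -> bool:
    """Role-based access check: unknown roles get no permissions."""
    return resource in ROLE_PERMISSIONS.get(role, set())


def handle_query(user: str, role: str, resource: str, query: str) -> bool:
    """Check access, then record the attempt whether or not it was allowed."""
    allowed = is_allowed(role, resource)
    audit_log.append(json.dumps({
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "role": role,
        "resource": resource,
        "query": query,
        "allowed": allowed,
    }))
    return allowed


handle_query("dana", "hr_analyst", "hr_documents", "Summarize the leave policy")
handle_query("dana", "hr_analyst", "finance_reports", "Show the Q3 forecast")
for entry in audit_log:
    print(entry)
```

Logging denied attempts alongside allowed ones is the design choice that matters here: an auditor can see not only what the AI was used for, but also what it was prevented from doing.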

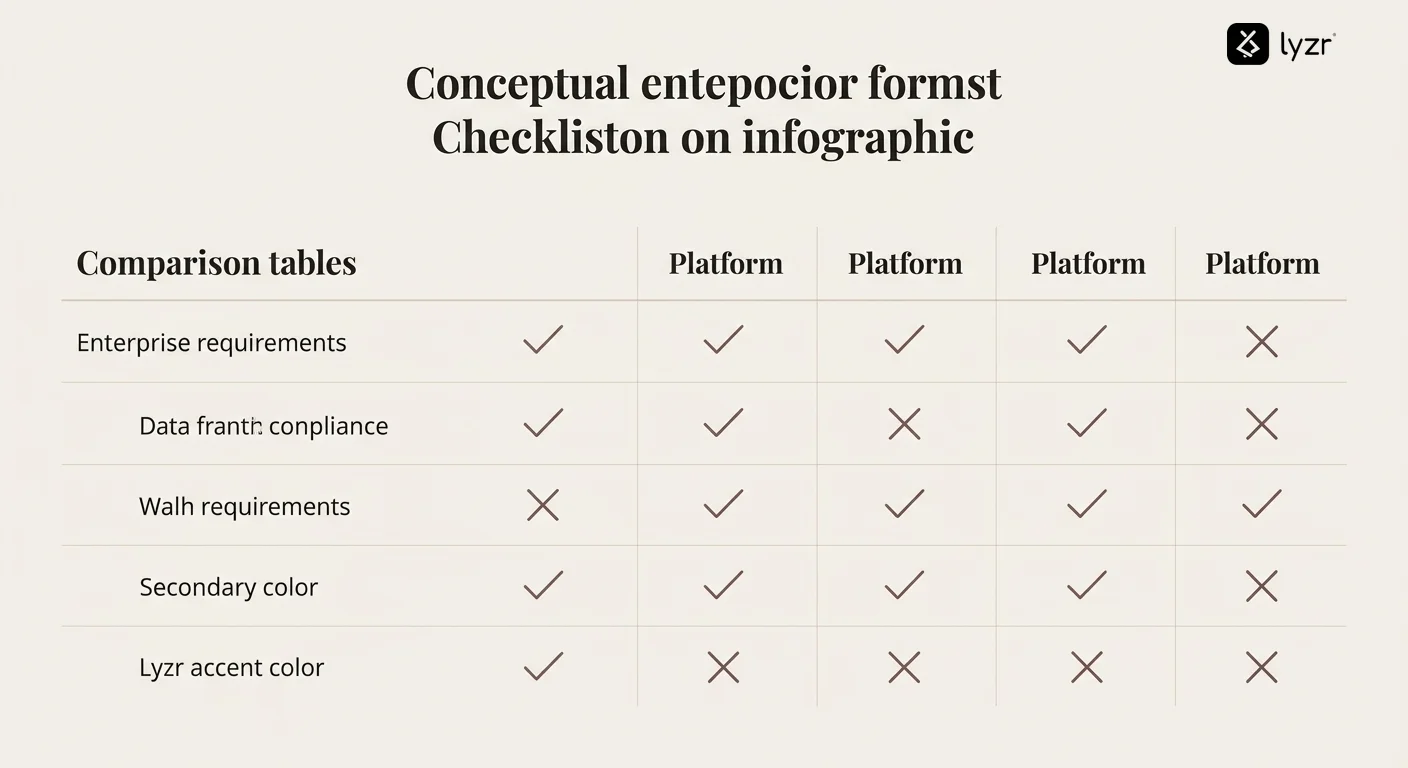

LyzrGPT vs. Claude: The Complete Picture

Here’s how the platforms compare on the dimensions that matter most to enterprise buyers:

| Capability | Claude | LyzrGPT |
| --- | --- | --- |
| Deployment | Cloud SaaS only | VPC, on-premise, or hybrid |
| Data residency | Anthropic’s infrastructure | Your environment, never leaves |
| Model options | Claude only | GPT-4, Claude, Gemini, custom models |
| Pricing model | Per seat | Consumption-based |
| Pre-built workflows | Generic chat interface | 50+ department-specific agents |
| Memory persistence | Session-based only | Cross-session, team-wide memory |
| Audit & compliance | Basic logging | Full governance framework |
| Integration flexibility | API-based | Native CRM, ERP, database connectors |
| Customization | Prompt engineering only | Custom agents, workflows, and models |

Real-World Use Cases: Where LyzrGPT Replaces Claude

Financial Services: Loan Processing Without Compliance Risk

A regional bank needed to accelerate loan origination without exposing customer financial data to external platforms. With Claude, every loan application analysis would mean sending sensitive PII and financial documents to Anthropic’s servers.

LyzrGPT deployed in their VPC means:

- All customer data stays within their controlled environment

- Loan origination agent automates document review, credit analysis, and risk assessment

- Processing time dropped from 5 days to 6 hours

- Complete audit trail for regulatory compliance

Healthcare: Patient Communication at Scale

A hospital network wanted to improve patient communication and appointment scheduling but couldn’t risk HIPAA violations. Claude’s cloud deployment was a non-starter.

With LyzrGPT’s on-premise deployment:

- Patient data never leaves their infrastructure

- Customer service agents handle appointment scheduling, prescription refills, and basic triage

- Integration with existing EMR systems for seamless workflows

- Staff can focus on complex cases instead of routine inquiries

HR Operations: From Recruitment to Retention

A 10,000-person company was spending six figures on per-seat AI licenses, but only 30% of HR staff used the tools regularly. They were also concerned about candidate data privacy.

Switching to LyzrGPT delivered:

- 67% cost reduction through consumption pricing

- Pre-built agents for resume screening, interview scheduling, and employee satisfaction tracking

- All candidate data stays in their HR system

- Time-to-hire decreased from 45 days to 23 days

Making the Switch: What to Expect

If you’re currently using Claude and considering a move to LyzrGPT, here’s what the transition typically looks like:

Weeks 1-2: Assessment and Planning

- Audit current AI usage patterns and costs

- Identify compliance requirements and data sensitivity concerns

- Map existing workflows to LyzrGPT’s pre-built agents

- Define deployment architecture (VPC vs on-premise)

Weeks 3-4: Deployment and Integration

- LyzrGPT deploys in your environment

- Connect to existing data sources, CRM, and business systems

- Configure governance policies and access controls

- Pilot with a small team before broader rollout

Weeks 5-6: Rollout and Optimization

- Expand access across departments

- Monitor usage patterns and adjust model routing

- Fine-tune agents based on real workflow feedback

- Decommission Claude licenses as adoption grows

Most enterprises are fully transitioned within 6-8 weeks, with measurable ROI visible by week 4.

Frequently Asked Questions

Can I use LyzrGPT alongside Claude during a transition period?

Yes. Many organizations run both platforms in parallel while migrating workflows. LyzrGPT’s multi-model support means you can even access Claude through LyzrGPT’s interface during the transition.

How does LyzrGPT handle model updates when new versions release?

Because LyzrGPT is model-agnostic, new model versions are added to the platform without requiring changes to your workflows. You can test new models, compare performance, and switch when ready – all from the same interface.

What happens to our data if we stop using LyzrGPT?

Since LyzrGPT runs in your environment, you retain complete control of all data. There’s no vendor lock-in on your information. You can export everything or simply shut down the platform – the data stays with you.

Does LyzrGPT require dedicated IT resources to maintain?

While LyzrGPT deploys in your infrastructure, it’s designed for minimal maintenance. Most organizations assign 0.5-1 FTE for ongoing management, significantly less than building custom AI infrastructure would require.

Can we build custom agents beyond the pre-built library?

Absolutely. LyzrGPT provides both pre-built agents and a framework for creating custom workflows specific to your business processes. Most enterprises use a combination of both.

How does consumption pricing compare to per-seat at different scales?

At 100 users, the difference is often marginal. At 1,000+ users, consumption pricing typically delivers 40-60% savings. The gap widens further at 5,000+ users, where uneven adoption makes per-seat pricing increasingly inefficient.

Action Checklist: Evaluating Your Claude Alternative

If you’re seriously evaluating whether to switch from Claude to a more enterprise-appropriate platform, use this checklist:

Data & Compliance Assessment

- ☐ Document what types of data your teams currently put into Claude

- ☐ Identify which data types have regulatory restrictions (HIPAA, GDPR, etc.)

- ☐ Review your organization’s data residency requirements

- ☐ Audit current compliance gaps with external SaaS AI tools

- ☐ Calculate potential compliance risk exposure

Cost Analysis

- ☐ Calculate current spend on Claude licenses

- ☐ Measure actual usage rates across licensed users

- ☐ Identify users who are licensed but rarely log in

- ☐ Project costs at 2x and 5x current user count

- ☐ Model consumption-based pricing for your usage patterns
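For the usage-rate and rarely-used-seat items in this checklist, a small script over exported login data is usually enough to get a first answer. The sketch below assumes a hypothetical export of `(user_id, month)` login events and a hypothetical threshold of two logins per month; adapt both to whatever your admin console or SSO logs actually provide.

```python
from collections import Counter

# Hypothetical export: one (user_id, month) row per login event.
login_events = [
    ("u1", "2025-01"), ("u1", "2025-01"), ("u1", "2025-01"),
    ("u2", "2025-01"),
    ("u3", "2025-01"), ("u3", "2025-01"),
]
licensed_users = ["u1", "u2", "u3", "u4", "u5"]


def low_usage_seats(events, licensed, month, min_logins=2):
    """Return licensed users with fewer than `min_logins` logins in `month`."""
    counts = Counter(user for user, m in events if m == month)
    return [user for user in licensed if counts[user] < min_logins]


def utilization_rate(events, licensed, month, min_logins=2):
    """Share of licensed seats that met the login threshold in `month`."""
    idle = low_usage_seats(events, licensed, month, min_logins)
    return 1 - len(idle) / len(licensed)


idle = low_usage_seats(login_events, licensed_users, "2025-01")
rate = utilization_rate(login_events, licensed_users, "2025-01")
print("Low-usage seats:", idle)
print(f"Utilization: {rate:.0%}")
```

Users who never logged in appear in the low-usage list as well, because `Counter` returns zero for missing keys. Those are often the seats that matter most when projecting costs.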

Strategic Flexibility

- ☐ Assess vendor lock-in risk with single-model dependency

- ☐ Evaluate need for model flexibility as AI landscape evolves

- ☐ Identify use cases where different models might perform better

- ☐ Review frequency of model performance improvements across providers

Workflow Requirements

- ☐ Map current AI use cases by department

- ☐ Identify repetitive workflows that could use pre-built agents

- ☐ Document integration needs with existing business systems

- ☐ Assess need for persistent memory across sessions

- ☐ Evaluate governance and audit requirements

Platform Evaluation

- ☐ Request LyzrGPT demo focused on your specific use cases

- ☐ Run pilot with 10-20 users across different departments

- ☐ Test pre-built agents relevant to your workflows

- ☐ Validate deployment in your VPC or on-premise environment

- ☐ Measure performance difference vs Claude for your use cases

- ☐ Compare total cost of ownership over 12 and 24 months

Where This Leaves You

Claude is an excellent product. For individual productivity, it’s hard to beat. But enterprise AI isn’t about individual productivity – it’s about organizational capability at scale.

And at scale, three things become non-negotiable:

- Data must stay in your environment – not because it’s ideal, but because regulations require it

- Costs must scale with value – paying for unused seats is unsustainable

- You need strategic flexibility – the AI landscape changes too fast to lock into one vendor’s roadmap

LyzrGPT was built to solve all three problems simultaneously. Private deployment. Multi-model flexibility. Consumption pricing. Pre-built agents. Enterprise governance.

It’s not about whether Claude is good. It’s about whether it’s built for how enterprises actually work.

For most organizations evaluating their options, the answer is increasingly clear: probably not.

Ready to See It for Yourself?

The difference between reading about enterprise AI and actually using it is substantial. If you’re serious about finding a Claude alternative that works at enterprise scale, the next step is simple.

Book a demo focused on your specific use cases. See LyzrGPT deployed in an environment similar to yours. Test the pre-built agents relevant to your workflows. Ask the hard questions about compliance, cost, and control.

Most importantly: see what enterprise AI looks like when it’s actually designed for enterprises.

Visit lyzr.ai to schedule your demo and discover how enterprises are getting started with generative AI the right way – with data privacy, cost control, and strategic flexibility built in from day one.
