TL;DR

We’re aligning price with actual work done by your agents. Credits meter the actions your agents take (pages checked, knowledge lookups, API calls, safety checks, etc.). LLM/model usage is billed transparently at pass-through rates. Run on your own VPC? You pay your cloud and model providers directly and use Lyzr credits only for agent actions. Run on Lyzr SaaS? Your invoice reflects model usage at pass-through plus agent credits: clean and predictable.

Why we moved to Effort-Based Pricing

Earlier models (seats, feature tiers, flat bundles) didn’t match how agents work. The same “one click” can mean very different amounts of behind-the-scenes work: a quick KB lookup vs. a multi-page web read + AML checks + internal system updates. Effort-based pricing ensures you pay in proportion to the actual work performed, and nothing more.

- Fair: Light tasks cost a little; heavy tasks cost more.

- Transparent: You can see which actions consumed credits in the calculator.

- Durable: Because your cost tracks real operational work, we can keep improving the platform without surprise markups.

What changed (at a glance)

Previously (Credits)

- Plans tried to predict usage with broad buckets.

- Perception risk: large “included credits” bundles that some customers never used, while others blew through them.

- $1 = 100 C

Now (Agent Credits)

- You pay for agent actions (effort): pages checked, knowledge lookups, app/API calls, memory steps, safety checks, evaluation runs, plus optional KB ingestion/storage.

- LLM/model costs are pass-through (plus a handling and hosting fee).

- Billing granularity: 0.001 C (so small tasks are genuinely cheap).

- $1 = 1 C (we just changed the exchange rate).
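Because both exchange rates are pegged to the dollar, 100 legacy credits and 1 new agent credit represent the same $1. The sketch below shows that arithmetic together with rounding to the 0.001 C billing granularity. It is a minimal illustration under stated assumptions: `legacy_to_agent_credits` and `to_billable` are hypothetical helpers, and round-half-up is an assumed rounding rule, since the actual rule is not stated here.

```python
from decimal import Decimal, ROUND_HALF_UP

# Exchange rates from the text: previously $1 = 100 C, now $1 = 1 C.
LEGACY_CREDITS_PER_DOLLAR = Decimal("100")
AGENT_CREDITS_PER_DOLLAR = Decimal("1")

# Billing granularity from the text: 0.001 C.
GRANULARITY = Decimal("0.001")


def to_billable(credits: Decimal) -> Decimal:
    """Round a raw credit amount to 0.001 C (round-half-up is an ASSUMPTION)."""
    return credits.quantize(GRANULARITY, rounding=ROUND_HALF_UP)


def legacy_to_agent_credits(legacy: Decimal) -> Decimal:
    """Hypothetical helper: express legacy credits as agent credits of equal dollar value."""
    dollars = legacy / LEGACY_CREDITS_PER_DOLLAR
    return to_billable(dollars * AGENT_CREDITS_PER_DOLLAR)


print(legacy_to_agent_credits(Decimal("2500")))  # 25.000
print(to_billable(Decimal("0.0125")))             # 0.013
```

`Decimal` is used instead of `float` so that amounts at 0.001 C precision add up exactly.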

How agent credits are calculated (in plain English)

Lyzr meters the specific steps your agents take:

- Pages checked (web/PDF)

- Extra pages (same site crawl)

- Knowledge lookups (from your KB)

- Memory steps (save/reuse context)

- Safety checks (Responsible AI/Hallucination Management)

- App/API actions (email, CRM, Slack, search APIs, etc.)

- Agent chatter (inter-agent coordination tokens)

- Evaluation runs (tests)

- KB ingestion & storage (when you add content)
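One way to read the list above as arithmetic: if each metered action type $a$ has a per-action rate $r_a$ (in credits) and occurs $n_a$ times in a task, agent credits are a weighted count of actions. The symbols are illustrative, not Lyzr's published notation. $\mathcal{A}$ is the set of metered actions listed above, and $M$ is LLM/model usage at pass-through (including the handling and hosting fee), which appears on the Lyzr invoice only on SaaS.

$$
C_{\text{agent}} = \sum_{a \in \mathcal{A}} r_a \, n_a,
\qquad
\text{Invoice}_{\text{USD}} = 1 \cdot C_{\text{agent}} + M
$$

The factor of 1 reflects the new rate of $1 = 1 C.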

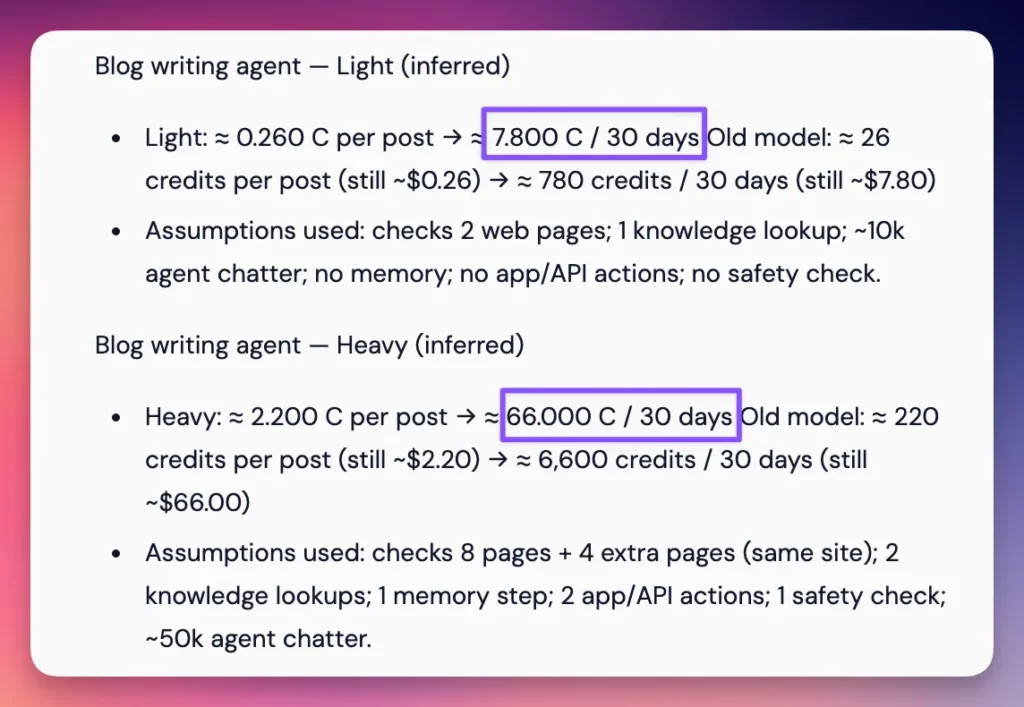

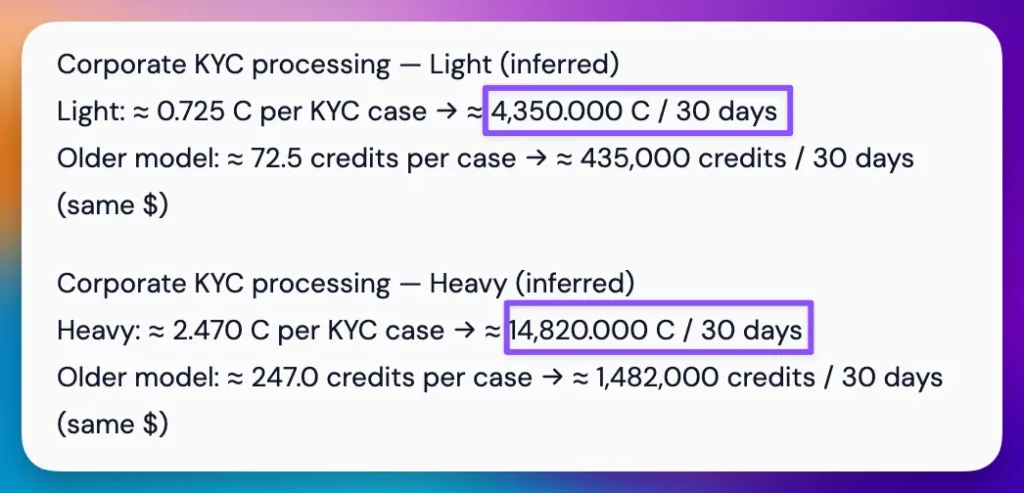

Two quick examples (illustrative)

Simple research → blog draft: a handful of web page checks + a KB lookup + one safety check = a small fraction of a credit (agent work) + whatever your model tokens cost at pass-through.

Complex onboarding (bank KYC/AML): multi-document parsing, multiple data-source checks, safety passes, and system updates = more credits because the agent does more work. It is still typically far below the cost of manual effort.

We benchmark agent actions to target ~90% cost-efficiency vs. equivalent human effort.

What we’ve observed so far

- Most customers’ monthly spend stayed in line with prior expectations: light tasks got cheaper, while heavy automations cost more but also delivered more value.

- Power users (very long workflows, many external systems) can see higher per-task totals because their agents are doing more real work.

- You can tune cost by adjusting depth (e.g., fewer pages checked, caching lookups, or batching evaluation runs).
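Caching is one of the depth levers listed above. The sketch below assumes your own orchestration code issues repeated identical knowledge lookups. `metered_kb_lookup` is a hypothetical stand-in for any call that consumes credits, and the counter only tracks how many metered calls actually happen.

```python
from functools import lru_cache

metered_calls = 0  # how many lookups would actually be billed


def metered_kb_lookup(query: str) -> str:
    """Hypothetical stand-in for a knowledge lookup that consumes credits."""
    global metered_calls
    metered_calls += 1
    return f"answer for {query!r}"


@lru_cache(maxsize=1024)
def cached_kb_lookup(query: str) -> str:
    """Serve repeated identical queries from memory instead of re-metering them."""
    return metered_kb_lookup(query)


queries = ["refund policy", "refund policy", "shipping times", "refund policy"]
answers = [cached_kb_lookup(q) for q in queries]
print(metered_calls)  # 2
```

Four queries trigger only two metered lookups. The trade-off is staleness: call `cached_kb_lookup.cache_clear()` whenever the underlying KB changes.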

Safeguards and clarity

- Predictability: 30-day projections, clear per-action rates, and 0.001-credit precision.

- Rate limits: you can set rate limits at the subaccount level.

- No surprises: LLM/model usage is pass-through; external vendor APIs (e.g., paid data sources) are pass-through too.
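You can approximate the projection and limit safeguards on your own side as well. The sketch below uses a simple linear projection (average daily credits × 30) and a hypothetical per-subaccount monthly credit cap. Lyzr's own projection method and limit semantics may differ.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Subaccount:
    name: str
    monthly_cap: float  # hypothetical credit cap for this subaccount


def project_30_day(daily_credits: list[float]) -> float:
    """Linear projection: average observed daily credits times 30 (an assumed method)."""
    return mean(daily_credits) * 30 if daily_credits else 0.0


def check(sub: Subaccount, daily_credits: list[float]) -> str:
    """Compare the 30-day projection against the subaccount's cap."""
    projected = project_30_day(daily_credits)
    if projected > sub.monthly_cap:
        return f"{sub.name}: projected {projected:.3f} C exceeds cap {sub.monthly_cap:.3f} C"
    return f"{sub.name}: projected {projected:.3f} C within cap"


print(check(Subaccount("support-bot", 50.0), [1.2, 1.5, 2.1]))
```

A linear projection is deliberately crude. It reacts slowly to sudden spikes, which is why a hard per-subaccount limit is still useful alongside it.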

VPC vs. Lyzr SaaS

- Your VPC / on-prem: You pay your own compute and LLM providers directly. You pay Lyzr only for agent credits.

- Lyzr SaaS: Your bill reflects LLM usage at pass-through + agent credits. Compute/orchestration is handled and reflected transparently.
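The difference between the two deployment options comes down to what reaches the Lyzr invoice. The sketch below uses a hypothetical helper, `lyzr_invoice_usd`. It applies the $1 = 1 C rate and treats model usage as a single pass-through dollar amount that already includes the handling and hosting fee. Any separate SaaS compute/orchestration line items are not modeled.

```python
from enum import Enum


class Deployment(Enum):
    VPC = "vpc"    # your VPC / on-prem
    SAAS = "saas"  # Lyzr SaaS


def lyzr_invoice_usd(deployment: Deployment, agent_credits: float, model_passthrough_usd: float) -> float:
    """Hypothetical: what Lyzr bills, given agent credits and pass-through model cost."""
    credits_usd = agent_credits * 1.0  # $1 = 1 C
    if deployment is Deployment.SAAS:
        # On SaaS, model usage appears on the Lyzr invoice at pass-through.
        return credits_usd + model_passthrough_usd
    # On VPC / on-prem, model and compute costs go to your own providers, not to Lyzr.
    return credits_usd


print(lyzr_invoice_usd(Deployment.VPC, 12.5, 40.0))   # 12.5
print(lyzr_invoice_usd(Deployment.SAAS, 12.5, 40.0))  # 52.5
```

The agent-credit portion is the same in both modes. Only the destination of the model spend changes.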

Frequently asked (fast answers)

- “What is an agent credit?” A unit that measures agent effort (the actions your agents take to complete a task).

- “Will I get bill shock?” Unlikely – rates are tiny and visible, the calculator shows 30-day projections, and we support soft-limit prompts for unusually big tasks.

- “Why do two ‘similar’ clicks cost differently?” Because the underlying work can be very different (1 KB lookup vs. 8 page checks + 4 extra pages + 6 API calls).
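The two “similar” clicks from the last answer can be compared directly once each action has a rate. The rates below are hypothetical placeholders chosen only to make the arithmetic visible. They are not Lyzr's published rates, so use the calculator for real numbers.

```python
from decimal import Decimal

# Hypothetical per-action rates in agent credits (NOT Lyzr's published rates).
RATES = {
    "kb_lookup": Decimal("0.002"),
    "page_check": Decimal("0.005"),
    "extra_page": Decimal("0.003"),
    "api_call": Decimal("0.004"),
}


def agent_credits(actions: dict[str, int]) -> Decimal:
    """Sum rate x count over every metered action in a task."""
    return sum((RATES[name] * count for name, count in actions.items()), Decimal("0"))


click_a = {"kb_lookup": 1}                                  # 1 KB lookup
click_b = {"page_check": 8, "extra_page": 4, "api_call": 6}  # 8 pages + 4 extra + 6 API calls

print(agent_credits(click_a))  # 0.002
print(agent_credits(click_b))  # 0.076
```

Even with placeholder rates, the second click costs 38× the first. The difference comes from the work behind the click, not the click itself.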

Calculator (try your own workflow)

We’re committed to a model that is fair, transparent, and sustainable.