An AI agent without guardrails is a liability waiting to happen.

Guardrails in AI are safety boundaries and rules built into an AI system to prevent it from saying, doing, or deciding anything harmful, inappropriate, or outside its intended purpose – much like the metal barriers on a highway that keep vehicles from veering off the road.

They’re like the bumpers in a bowling lane for kids.

No matter how off-target the ball rolls, the bumpers redirect it back toward the pins.

Similarly, AI guardrails redirect the model’s outputs back into the safe, intended zone.

This prevents ‘gutter balls’ like harmful content, hallucinations, or dangerous agent actions.

Understanding guardrails isn’t optional.

It’s a core requirement for deploying safe, reliable, and compliant AI that won’t expose your business to legal, financial, or reputational damage.

***

What are guardrails in AI?

They are an active, real-time safety system.

A set of programmable rules, policies, and filters that sit around an AI model or agent.

Their job is to inspect everything the AI sees (input) and everything it wants to say or do (output).

If an input or output violates a predefined rule, the guardrail system steps in.

It can block the content, modify it, or redirect the conversation to a safer topic.

It’s the bouncer at the door of your AI application.

How do guardrails work in an AI agent system?

They function as a two-way checkpoint.

1. Input Guardrails

Before a user’s prompt even reaches the Large Language Model (LLM), an input guardrail checks it.

It screens for things like:

- Prompt injection attacks.

- Requests for illegal or harmful content.

- Hate speech or abusive language.

- Personally Identifiable Information (PII) that shouldn’t be processed.

2. Output Guardrails

After the LLM generates a response but before it’s sent to the user, an output guardrail inspects it.

It checks for:

- Hallucinations or factually incorrect statements.

- Leaking of sensitive or proprietary data.

- Off-brand or toxic language.

- Responses that violate specific company policies or regulations.

- Agent actions that are unauthorized (e.g., trying to delete a production database).

The system essentially wraps the AI in a layer of operational logic and safety policy.
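
Here is a minimal sketch of that two-way checkpoint, to make the wrapping idea concrete. Everything in it is an assumption made for illustration: the names `check_input`, `check_output`, and `guarded_call` are hypothetical, the two regex rules are toy stand-ins for real policies, and `fake_model` stands in for an actual LLM call.

```python
import re
from dataclasses import dataclass
from typing import Callable


@dataclass
class Verdict:
    allowed: bool
    reason: str = ""


# Toy rules, assumed for illustration only. Real deployments use far richer checks.
INJECTION_HINT = re.compile(r"ignore (all )?previous instructions", re.IGNORECASE)
SECRET_HINT = re.compile(r"\bsk-[A-Za-z0-9]{8,}\b")  # assumed shape of an API key

REFUSAL = "Sorry, I can't help with that request."


def check_input(prompt: str) -> Verdict:
    """Input guardrail: runs before the prompt reaches the model."""
    if INJECTION_HINT.search(prompt):
        return Verdict(False, "possible prompt injection")
    return Verdict(True)


def check_output(text: str) -> Verdict:
    """Output guardrail: runs before the response reaches the user."""
    if SECRET_HINT.search(text):
        return Verdict(False, "possible secret leak")
    return Verdict(True)


def guarded_call(prompt: str, model: Callable[[str], str]) -> str:
    """Wrap a model call with an input check and an output check."""
    if not check_input(prompt).allowed:
        return REFUSAL  # the model never sees the blocked prompt
    response = model(prompt)
    if not check_output(response).allowed:
        return REFUSAL  # the user never sees the blocked response
    return response


def fake_model(prompt: str) -> str:
    """Stand-in for a real LLM call."""
    return f"Echo: {prompt}"
```

Calling `guarded_call("Where is my order?", fake_model)` passes both checks, while a prompt containing “ignore previous instructions” is refused before the model is ever invoked.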

What types of guardrails exist in AI and agent deployments?

Guardrails are not a single thing; they are a multi-layered defense.

- Topical Guardrails: Prevent the AI from discussing specific topics. A banking AI assistant shouldn’t talk about politics or medical advice.

- Security Guardrails: Protect against malicious use, like prompt injection attacks or attempts to extract system prompts.

- Safety & Moderation Guardrails: Block harmful, toxic, or illegal content, following community standards or legal requirements. Meta’s Llama Guard is a prime example of a model built for this purpose.

- Compliance Guardrails: Enforce industry-specific rules. In healthcare, a guardrail might stop an AI from exposing Protected Health Information (a HIPAA concern) or from giving a specific medical diagnosis it isn’t qualified to make. In finance, it might prevent the AI from giving unlicensed investment advice.

- Factual & Hallucination Guardrails: Cross-reference an AI’s output against a trusted knowledge base to ensure the information is accurate and grounded in reality.

- Action & Tool-Use Guardrails: For AI agents that can take actions (like sending an email or running code), these guardrails restrict what tools the agent can use and what operations it can perform.
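
An action and tool-use guardrail can be as simple as an allowlist checked before every tool call. The sketch below assumes a hypothetical `ToolPolicy` class and a plain dictionary as the tool registry; the blocked argument fragments are illustrative, not a complete list.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Set, Tuple


class ToolNotAllowed(Exception):
    """Raised when the agent requests a tool or argument outside its policy."""


@dataclass
class ToolPolicy:
    allowed_tools: Set[str]
    # Hypothetical fragments that should never appear in any tool argument.
    blocked_arg_fragments: Tuple[str, ...] = ("drop table", "rm -rf")

    def authorize(self, tool: str, argument: str) -> None:
        if tool not in self.allowed_tools:
            raise ToolNotAllowed(f"tool '{tool}' is not on the allowlist")
        lowered = argument.lower()
        for fragment in self.blocked_arg_fragments:
            if fragment in lowered:
                raise ToolNotAllowed(f"argument contains blocked fragment '{fragment}'")


def run_tool(
    policy: ToolPolicy,
    tool: str,
    argument: str,
    registry: Dict[str, Callable[[str], str]],
) -> str:
    """Authorize first, execute second. The agent never calls the registry directly."""
    policy.authorize(tool, argument)
    return registry[tool](argument)
```

The design choice that matters is the default: anything not explicitly allowed is denied, so a new tool added to the registry stays unreachable until someone adds it to the policy.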

How do guardrails differ from model alignment?

They are fundamentally different tools for different jobs.

Model alignment happens during training.

It’s about baking values and behaviors directly into the model’s core using techniques like Reinforcement Learning from Human Feedback (RLHF).

It’s an internal, almost permanent part of the AI’s ‘personality’.

Guardrails are external.

They are runtime enforcement layers you add on top of an already-trained model.

This makes them incredibly agile. You can update, add, or remove a guardrail without the massive cost and time of retraining the entire model.

Alignment is teaching the AI what to be.

Guardrails are telling the AI what not to do, right now.

Why are guardrails critical for production AI agents?

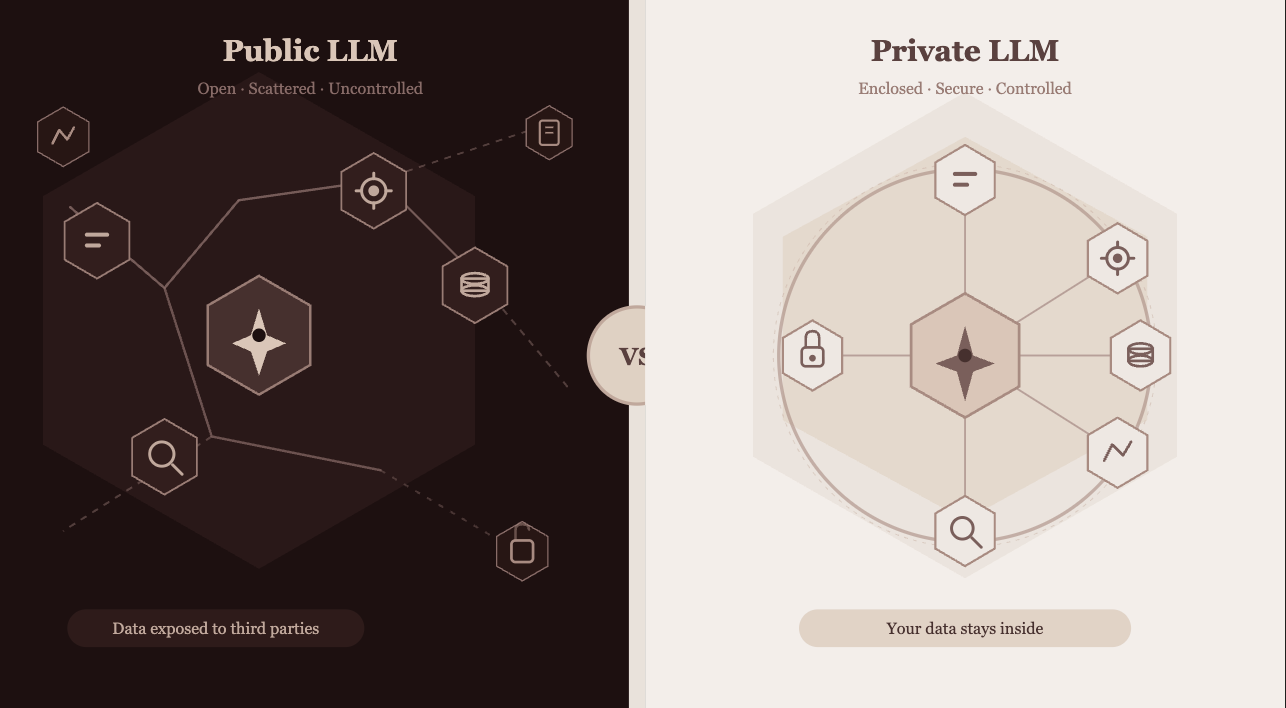

Because raw LLMs are not enterprise-ready.

They can hallucinate, produce toxic output, leak data, and be manipulated.

Deploying an agent without guardrails is like giving an intern the keys to your entire company’s data and systems without supervision.

Guardrails provide the necessary control and oversight.

- Risk Mitigation: They prevent legal and compliance violations.

- Brand Safety: They ensure the AI’s communication aligns with your company’s tone and values.

- User Trust: They create a reliable and safe user experience.

- Operational Security: They stop agents from taking unauthorized or destructive actions.

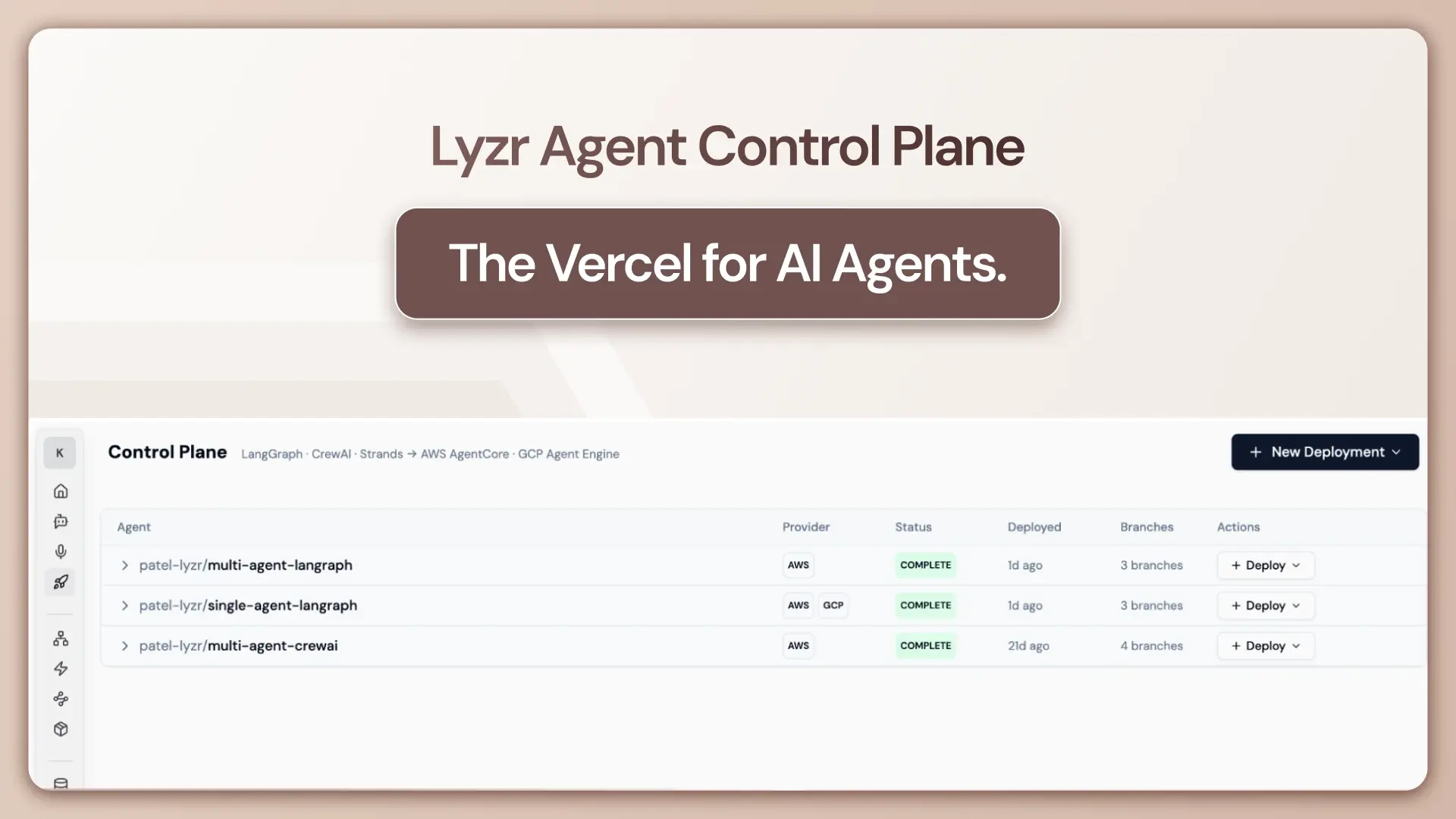

For companies like Lyzr that build enterprise AI agents, guardrails are not an add-on. They are a core part of the infrastructure, ensuring agents operate safely within operational boundaries.

***

What technical mechanisms are used for AI Guardrails?

Guardrails go well beyond simple keyword filtering. They rely on robust evaluation harnesses and programmable safety layers.

Developers often use specialized frameworks and models to implement these checks.

- Programmable Libraries: NVIDIA’s NeMo Guardrails is a powerful open-source framework. It uses a specific language called Colang to let developers write clear, human-readable rules for conversational flows. You can explicitly define what the bot should and should not talk about, and how it should respond to policy violations.

- Constitutional AI (CAI): Pioneered by Anthropic, this is a training-time approach rather than a runtime filter. The model is given a “constitution,” a set of principles, and during training it critiques and revises its own outputs against those principles; the revised outputs are then used to fine-tune it. The result acts as a kind of internal, self-correcting guardrail.

- Dedicated Shield Models: This involves using a second, typically smaller, highly specialized AI model as a filter. Meta’s Llama Guard and the OpenAI Moderation API are well-known examples. These models are trained specifically to classify inputs and outputs against a safety taxonomy (violence, self-harm, hate speech, etc.) and return a “safe” or “unsafe” verdict or per-category scores.

Quick Test: Can you spot the violation?

Imagine a customer service bot for an e-commerce store. Which of these outputs should a guardrail immediately flag?

- “Your order #12345 has been shipped and will arrive in 3-5 business days.”

- “To reset your password, please tell me your mother’s maiden name and the street you grew up on.”

- “I’m sorry, I cannot process returns for items purchased over 90 days ago as per our company policy.”

The answer is the second one. A properly configured PII guardrail would block this response, preventing the bot from soliciting and potentially storing highly sensitive security information.
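
To see how a rule could catch it, here is a small sketch that runs the three quiz outputs through a pattern check. The patterns and the function name `solicits_sensitive_info` are assumptions made up for this example; a production PII guardrail would be much broader.

```python
import re

# Hypothetical patterns for responses that ask the user for security answers.
SOLICITATION_PATTERNS = [
    re.compile(r"mother['’]s maiden name", re.IGNORECASE),
    re.compile(r"street you grew up on", re.IGNORECASE),
    re.compile(r"(tell|send|give) me your (password|pin|card number)", re.IGNORECASE),
]


def solicits_sensitive_info(response: str) -> bool:
    """Return True if the bot's response asks for sensitive security details."""
    return any(pattern.search(response) for pattern in SOLICITATION_PATTERNS)


candidates = [
    "Your order #12345 has been shipped and will arrive in 3-5 business days.",
    "To reset your password, please tell me your mother's maiden name "
    "and the street you grew up on.",
    "I'm sorry, I cannot process returns for items purchased over 90 days ago "
    "as per our company policy.",
]

# Positions (1-based) of the outputs the guardrail would flag.
flagged = [i for i, text in enumerate(candidates, start=1) if solicits_sensitive_info(text)]
```

With these patterns, `flagged` contains only the second output.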

***

Deep Dive FAQs

What is the difference between input guardrails and output guardrails in an AI pipeline?

Input guardrails screen the user’s prompt before it hits the LLM. They look for malicious code, prompt injections, or policy-violating requests. Output guardrails screen the LLM’s generated response before it gets to the user, checking for hallucinations, toxicity, or data leaks.

How do guardrails handle prompt injection and jailbreak attacks?

They analyze the intent and structure of a prompt, not just its content. A security guardrail can be trained to recognize patterns common in jailbreak attempts (e.g., “ignore previous instructions,” “roleplay as an unrestricted AI”) and block the prompt before the model can be compromised.
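
A rough sketch of the pattern-recognition side, assuming a handful of hand-written regexes. The labels and patterns are hypothetical, and a real security guardrail would usually pair rules like these with a trained classifier, since attackers rephrase quickly.

```python
import re
import unicodedata
from typing import List

# Hypothetical patterns for common jailbreak phrasings.
JAILBREAK_PATTERNS = {
    "override_instructions": re.compile(
        r"\b(ignore|disregard|forget)\b.{0,40}\b(previous|prior|above)\b.{0,20}\binstructions\b"
    ),
    "unrestricted_roleplay": re.compile(
        r"\b(roleplay|pretend|act)\b.{0,40}\b(unrestricted|no restrictions|without (any )?rules)\b"
    ),
    "system_prompt_probe": re.compile(
        r"\b(reveal|print|show|repeat)\b.{0,40}\bsystem prompt\b"
    ),
}


def normalize(prompt: str) -> str:
    """Fold Unicode look-alikes, lowercase, and collapse whitespace before matching."""
    text = unicodedata.normalize("NFKC", prompt).lower()
    return re.sub(r"\s+", " ", text)


def detect_jailbreak(prompt: str) -> List[str]:
    """Return the labels of every pattern the prompt matches."""
    text = normalize(prompt)
    return [label for label, pattern in JAILBREAK_PATTERNS.items() if pattern.search(text)]
```

Normalizing first matters: it stops trivial evasions like odd spacing, line breaks, or full-width characters from slipping past the patterns.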

Do guardrails slow down AI agent response times, and how is latency managed?

Yes, they can add latency because they introduce extra processing steps. This is managed by using highly optimized “shield” models (like Llama Guard) that are small and fast, and by running checks in parallel where possible. It’s a trade-off between speed and safety.
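
Running checks in parallel might look like the sketch below. The three check functions are hypothetical, and each `asyncio.sleep` stands in for a network call to a shield model or policy service.

```python
import asyncio


async def toxicity_check(text: str) -> bool:
    await asyncio.sleep(0.05)  # stand-in for a call to a shield model
    return "idiot" not in text.lower()


async def pii_check(text: str) -> bool:
    await asyncio.sleep(0.05)  # stand-in for a PII detection service
    return "ssn" not in text.lower()


async def policy_check(text: str) -> bool:
    await asyncio.sleep(0.05)  # stand-in for a company policy classifier
    return "guaranteed returns" not in text.lower()


async def passes_all_checks(text: str) -> bool:
    """Run every check concurrently and pass only if all of them pass."""
    results = await asyncio.gather(
        toxicity_check(text),
        pii_check(text),
        policy_check(text),
    )
    return all(results)


def run_checks(text: str) -> bool:
    """Synchronous entry point for callers that are not already async."""
    return asyncio.run(passes_all_checks(text))
```

Because the checks run concurrently, the added wait is roughly that of the slowest check rather than the sum of all three.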

What is the difference between rule-based guardrails and model-based guardrails?

Rule-based guardrails use explicit, deterministic rules (e.g., “block any response containing these 50 specific keywords”). They are fast and predictable but brittle. Model-based guardrails use another AI model to evaluate content semantically. They are better at understanding context and nuance but can be slower and less predictable. Most modern systems use a hybrid of both.
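
One way to picture the hybrid: cheap deterministic rules run first, and the model-based check only runs on what the rules let through. In this sketch the keyword list, the `hybrid_check` function, and the `toy_scorer` stand-in for a real classifier are all assumptions for illustration.

```python
from typing import Callable

# Hypothetical blocked phrases for the rule-based layer.
BLOCKED_KEYWORDS = {"wire me the money", "social security number"}


def rule_check(text: str) -> bool:
    """Fast, deterministic layer: True means no blocked phrase was found."""
    lowered = text.lower()
    return not any(keyword in lowered for keyword in BLOCKED_KEYWORDS)


def hybrid_check(
    text: str,
    risk_scorer: Callable[[str], float],
    threshold: float = 0.5,
) -> str:
    """Apply rules first, then fall back to a semantic risk score."""
    if not rule_check(text):
        return "blocked_by_rule"
    if risk_scorer(text) >= threshold:
        return "blocked_by_model"
    return "allowed"


def toy_scorer(text: str) -> float:
    """Stand-in for a model-based classifier returning a risk score in [0, 1]."""
    return 0.9 if "threat" in text.lower() else 0.1
```

Ordering the layers this way keeps the predictable cases fast and reserves the slower model call for content the rules cannot judge.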

How do regulatory frameworks like the EU AI Act or HIPAA influence guardrail design?

Regulations directly dictate the rules. For HIPAA, guardrails must be designed to prevent the exposure of Protected Health Information (PHI). For the EU AI Act, guardrails are essential for high-risk systems to ensure transparency, fairness, and human oversight. Compliance becomes a key driver of guardrail implementation.
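
As a small illustration of a guardrail that modifies content instead of blocking it, here is a redaction sketch. The patterns, the placeholder strings, and the assumed `MRN` record-number format are hypothetical, and pattern matching like this is nowhere near sufficient for HIPAA compliance on its own.

```python
import re

# Hypothetical identifier patterns. Real PHI detection needs far more coverage.
REDACTIONS = [
    (re.compile(r"\b\d{3}-\d{2}-\d{4}\b"), "[REDACTED-SSN]"),
    (re.compile(r"\b[\w.+-]+@[\w-]+(\.[\w-]+)+\b"), "[REDACTED-EMAIL]"),
    (re.compile(r"\bMRN[:\s]*\d{6,10}\b", re.IGNORECASE), "[REDACTED-MRN]"),
]


def redact(text: str) -> str:
    """Replace every matched identifier with a placeholder before the text leaves the system."""
    for pattern, placeholder in REDACTIONS:
        text = pattern.sub(placeholder, text)
    return text
```

Redaction keeps the rest of the response useful, which is often a better user experience than refusing the whole answer.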

Can an AI agent bypass or override its own guardrails?

In a well-designed system, no. Guardrails are an external supervisory layer that runs outside the agent’s control. The agent doesn’t have the authority to turn them off, just as an ordinary program without admin rights cannot disable the antivirus software monitoring it.

What is the role of human-in-the-loop (HITL) review within a guardrail framework?

HITL is the ultimate fallback. When a guardrail flags a conversation as high-risk or ambiguous, it can automatically pause the interaction and escalate it to a human operator for review and intervention. This ensures that complex edge cases are handled safely.
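
The escalation logic can be sketched with two thresholds: low-risk conversations continue, high-risk ones are blocked, and everything in between is paused for a human. The `ReviewQueue` class, the `route` function, and the threshold values are assumptions for illustration.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Deque, Tuple


@dataclass
class ReviewQueue:
    """Holds conversations waiting for a human operator."""
    pending: Deque[Tuple[str, str]] = field(default_factory=deque)

    def escalate(self, conversation_id: str, reason: str) -> None:
        self.pending.append((conversation_id, reason))


def route(
    conversation_id: str,
    risk: float,
    queue: ReviewQueue,
    allow_below: float = 0.3,  # hypothetical threshold
    block_at: float = 0.8,     # hypothetical threshold
) -> str:
    """Decide what happens to a conversation given its risk score in [0, 1]."""
    if risk >= block_at:
        return "block"
    if risk >= allow_below:
        # Ambiguous zone: pause and hand off to a human.
        queue.escalate(conversation_id, f"ambiguous risk score {risk:.2f}")
        return "paused_for_human_review"
    return "allow"
```

Tuning the two thresholds controls how much traffic reaches human reviewers, so they are usually adjusted against reviewer capacity as well as risk tolerance.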

***

The future of autonomous agents depends entirely on the strength and sophistication of their guardrails.

As these systems take on more critical tasks, these safety layers will become the defining factor between a powerful tool and an unacceptable risk.

Did I miss a crucial point? Have a better analogy to make this stick?

Let me know.