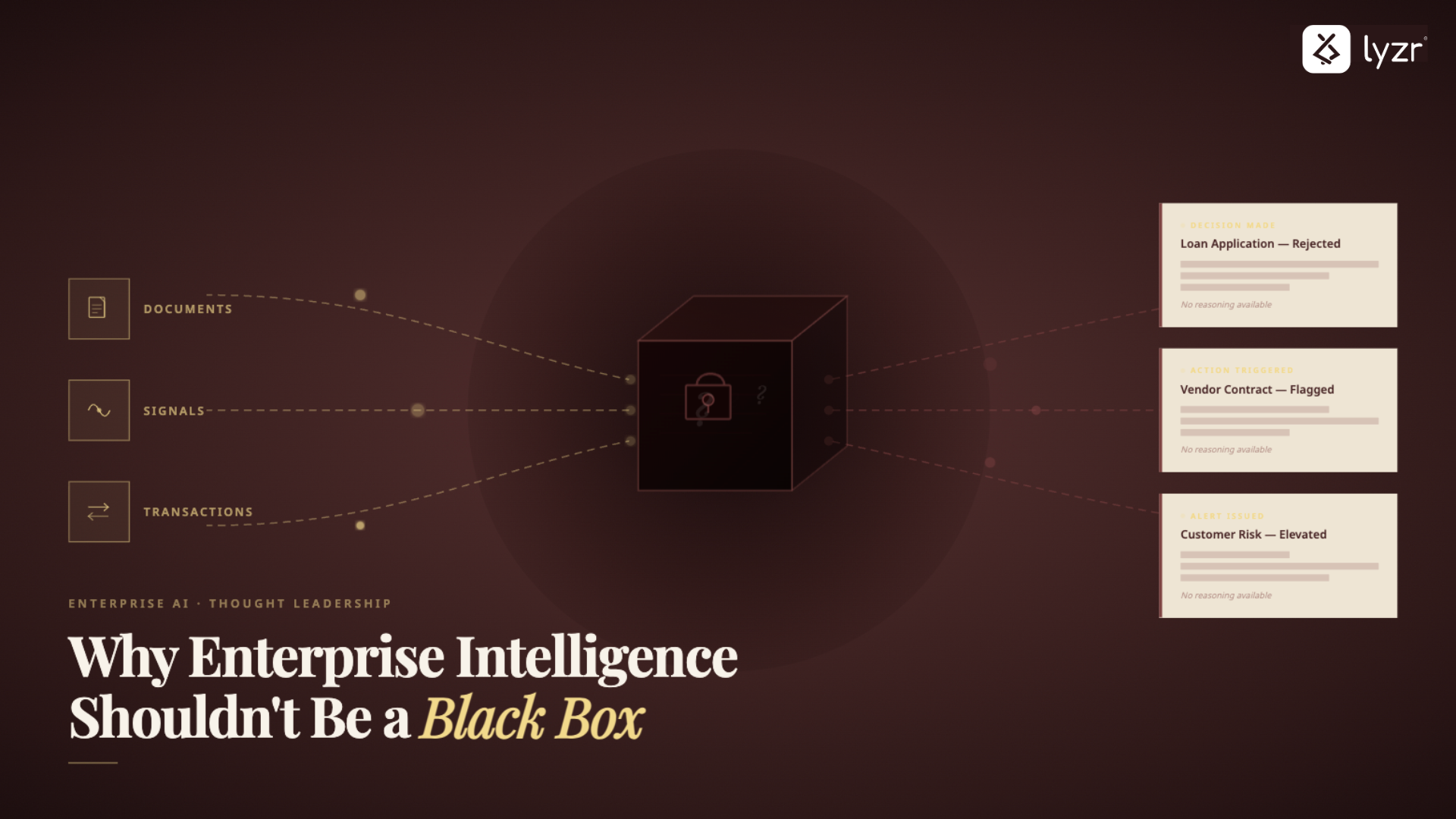

Here’s something that should bother anyone paying attention to enterprise intelligence: the industry has collectively accepted that these platforms should be mysterious.

Organizations buy platforms that promise to make sense of their data, generate insights, and improve decision-making. And somewhere in the contract, implicit or explicit, there’s an understanding that they won’t really know how it works. The logic stays hidden. The algorithms are proprietary. The decision-making process? That’s the vendor’s secret sauce.

For years, that was acceptable. Intelligence platforms were observation tools. They showed patterns. Surfaced insights. Highlighted anomalies. But humans decided what to do about it. A person was always in the loop.

But that’s not how enterprise intelligence works anymore.

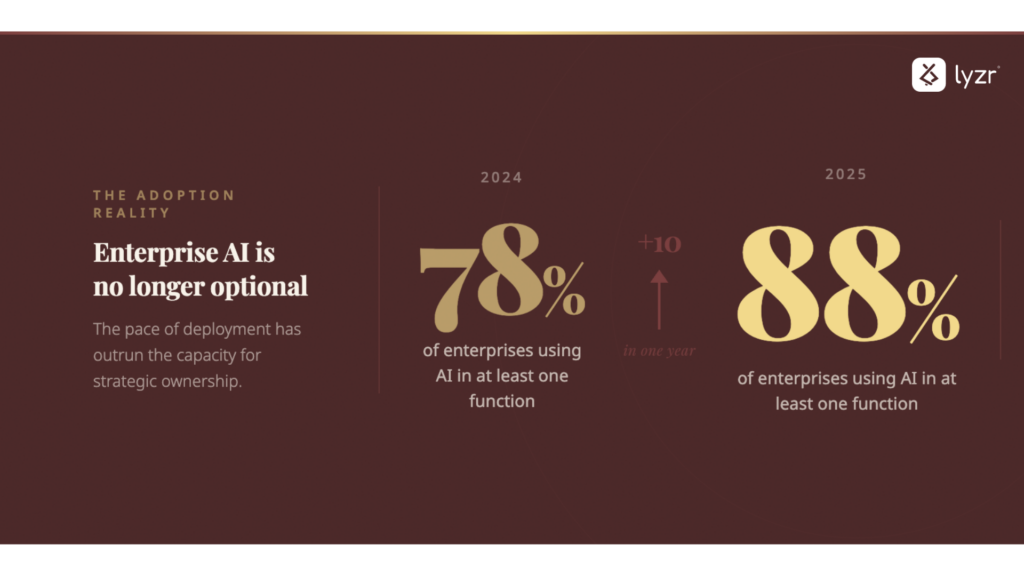

The numbers make that clear. According to McKinsey’s 2025 survey, 88% of organizations now use AI in at least one business function, up from 78% just a year earlier.

Today’s intelligence systems don’t just observe. They act. They route approvals, flag transactions, trigger investigations, allocate resources. The system sees a pattern and responds to it, automatically, without asking permission.

And that changes what organizations should tolerate when it comes to opacity.

The Black Box Model Was Built for Observation, Not Operation

Enterprise intelligence didn’t always operate this way.

A platform would analyze supply chain data or monitor financial transactions. It would process millions of data points, apply its algorithms, and output a dashboard. It might highlight anomalies. Surface unexpected trends. Rank suppliers using a proprietary scoring system.

But the intelligence stayed on the screen.

A human reviewed that dashboard. A person evaluated the anomalies. Someone looked at the supplier ranking and decided whether to act. The platform recommended. The human executed.

In that world, limited visibility into internal logic was frustrating but manageable. It was inconvenient not being able to explain precisely why a supplier was flagged or how a risk score was calculated. But there was always a human checkpoint. Context, judgment, and accountability remained intact.

Palantir built an empire on this model. Massive data ingestion. Sophisticated analysis. Synthesized intelligence that feels almost magical. The internal mechanics are deliberately obscured. Part of the mystique. Part of the appeal.

For observational intelligence, that model worked.

But what happens when the intelligence doesn’t just observe? What happens when it starts making decisions on its own?

The Hidden Cost of Operational Opacity

When intelligence platforms start taking action instead of offering advice, opacity stops being a minor annoyance and becomes a serious risk.

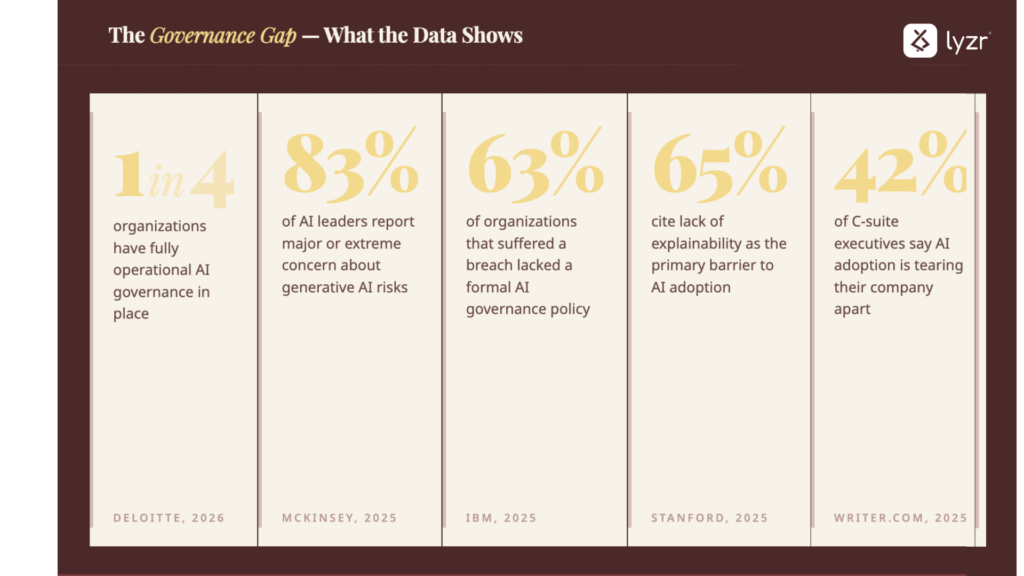

The data is stark: only one in four organizations have fully operational AI governance, despite widespread awareness of regulations. Even more telling? 83% of AI leaders report major or extreme concern about generative AI, an eightfold increase in just two years.

The issue is simple:

Organizations cannot defend decisions they cannot explain

If a platform flags a transaction as suspicious and automatically freezes an account, the action may be efficient. But when a regulator, customer, or audit committee asks why the account was frozen, “the platform flagged it” is not a sufficient answer.

Why did the platform flag it?

What signals were used?

How were they weighted?

Was the threshold appropriate?

In an observational model, this wouldn’t matter. A human would have reviewed the flag and made the freeze decision. But in an operational model, the platform did. And the organization holds the liability.

This isn’t theoretical. According to IBM’s Cost of a Data Breach Report, 63% of organizations experiencing a breach didn’t have a formal AI governance policy in place. Stanford’s AI Index Report finds that over 65% of organizations cite “lack of explainability” as the primary barrier to AI adoption.

Invisible failures compound before anyone notices

The most concerning aspect of black box operational systems is how quietly they fail.

A procurement engine subtly favoring certain suppliers.

A risk model consistently underweighting certain signals.

A workflow system optimizing for speed at the expense of compliance.

These failures don’t trigger alarms. They become normalized, embedding biases and inefficiencies into your operations without anyone realizing it’s happening.

In observational intelligence, a human might spot these patterns and question them. “Wait, why do we always seem to go with this supplier?” or “Why does every request from this department get routed differently?”

In operational intelligence, those questions never get asked. The system just runs. Optimization continues and the organization gradually aligns itself to logic it cannot inspect. It outsources judgement. It has ceded control over how the business actually runs.

That’s not intelligence. That’s dependence.

According to a 2025 survey by Writer.com, 42% of C-suite executives say AI adoption is “tearing their company apart.” McKinsey found that only 28% of organizations have CEOs directly responsible for AI governance oversight.

What Transparent Intelligence Actually Looks Like

The path forward isn’t to eliminate AI-driven intelligence. It’s to eliminate the black box model itself.

Transparent intelligence means making complexity governable.

Transparency in enterprise intelligence doesn’t require dumbing down models or abandoning sophisticated analysis. It requires designing systems where decision logic can be inspected, validated, and modified by the organizations that depend on them.

If a system flags a supplier, the organization should see exactly what triggered that decision. Not a vague risk score, but the actual inputs and thresholds crossed. And if the logic no longer fits the business context, it should be changeable without waiting for a vendor release cycle.

This means:

- Exposing reasoning paths: Organizations should be able to trace how input data flows through the system and influences outputs

- Documenting decision criteria: Every operational action should be traceable to explicit rules, models, or thresholds that can be reviewed and adjusted

- Providing override mechanisms: Operators must be able to intervene, redirect, or halt automated actions when logic doesn’t align with business context
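To make those three properties concrete, here is a minimal Python sketch of a supplier check whose decision carries its own explanation. Everything in it is an assumption made for illustration: the `Rule` and `Decision` types, the signal names `late_rate` and `defect_rate`, and the threshold values are hypothetical and do not describe any specific platform’s API.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass(frozen=True)
class Rule:
    """One explicit, reviewable decision criterion (hypothetical)."""
    name: str
    signal: str        # key to look up in the input record
    threshold: float   # the rule fires when the signal exceeds this value


@dataclass
class Decision:
    """An action plus the evidence needed to explain it later."""
    action: str
    # Each entry is (rule name, observed value, threshold crossed).
    triggered: list = field(default_factory=list)
    overridden_by: Optional[str] = None


def evaluate_supplier(inputs: dict, rules: list) -> Decision:
    """Apply every rule and record exactly which ones fired and why."""
    triggered = []
    for rule in rules:
        value = inputs.get(rule.signal)
        # A missing signal never fires a rule in this sketch.
        if value is not None and value > rule.threshold:
            triggered.append((rule.name, value, rule.threshold))
    action = "flag" if triggered else "approve"
    return Decision(action=action, triggered=triggered)


def override(decision: Decision, operator: str, new_action: str) -> Decision:
    """Let an operator change the action while keeping the original evidence."""
    return Decision(
        action=new_action,
        triggered=decision.triggered,
        overridden_by=operator,
    )


# Illustrative thresholds; in practice these would be owned and tuned
# by the organization rather than hidden inside a vendor model.
RULES = [
    Rule("late_delivery_rate", "late_rate", 0.15),
    Rule("defect_rate", "defect_rate", 0.05),
]

decision = evaluate_supplier({"late_rate": 0.22, "defect_rate": 0.01}, RULES)
print(decision.action, decision.triggered)

final = override(decision, operator="ops-lead", new_action="approve")
print(final.action, final.overridden_by)
```

The point of the design is that the answer to “why was this supplier flagged?” is stored with the decision itself, and the thresholds live in plain data that the organization can review and change.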

None of this requires sacrificing AI capabilities. It requires rejecting the premise that intelligence must be mystical to be powerful.

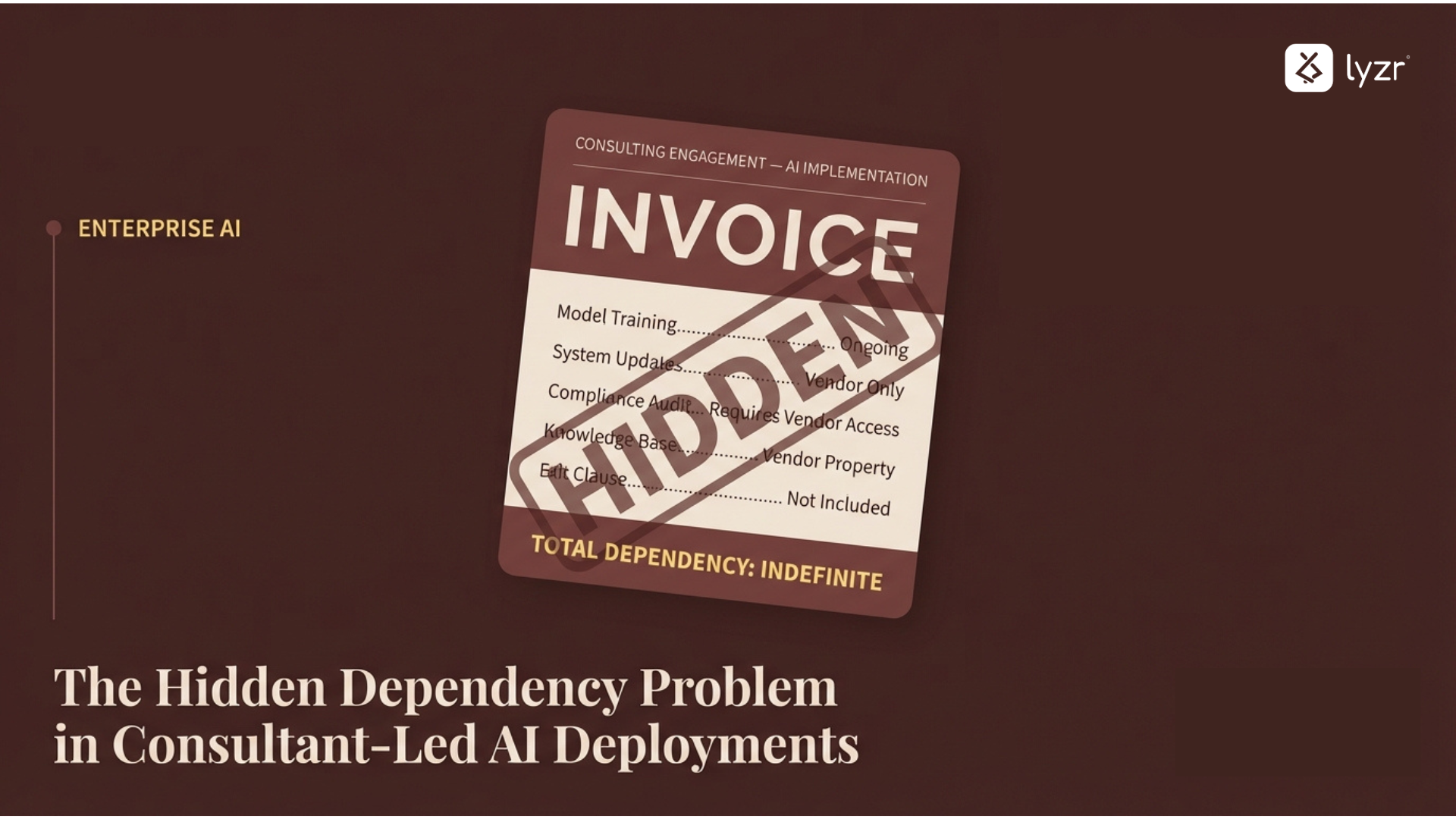

Transparent intelligence eliminates vendor opacity and enables ownership

The most direct path to transparency is ownership of the intelligence infrastructure.

When organizations control data pipelines, orchestration logic, and execution layers, they do not need vendor mediation to understand how decisions are made. They can inspect, modify, and govern systems according to their own standards.

This does not require building everything from scratch. It requires choosing platforms designed for inspectability rather than lock-in.

Platforms like Lyzr are architected to make intelligence visible by default. Organizations can see what data systems access, trace how workflows are constructed, and modify execution logic without filing a ticket. Intelligence remains sophisticated and outcomes remain powerful.

The difference is control.

Transparent intelligence is model-agnostic

When an intelligence platform is inseparable from a single LLM provider or a single cloud environment, transparency alone isn’t enough. The organization can see how decisions are made but cannot change the conditions under which they’re made.

The same principle extends to deployment. Intelligence that lives exclusively in a vendor’s cloud means you can see the outputs but can’t control where the data goes.

LyzrGPT is built across both dimensions.

Enterprises can switch between models such as GPT, Claude, or Gemini, and choose where they run: on-prem or within private VPC environments, with intelligence connected directly to production agents for full data sovereignty.
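As a rough illustration of what model-agnostic can mean in practice, the Python sketch below keeps workflow logic behind a small interface so the backend can be swapped without touching the workflow. This is a generic pattern, not LyzrGPT’s actual API: `ModelBackend`, `EchoBackend`, and `Workflow` are hypothetical names, and the stand-in backend makes no network calls.

```python
from typing import Protocol


class ModelBackend(Protocol):
    """The only surface the workflow depends on (hypothetical interface)."""
    name: str

    def complete(self, prompt: str) -> str: ...


class EchoBackend:
    """Stand-in for a real provider client; it only echoes the prompt."""

    def __init__(self, name: str) -> None:
        self.name = name

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"


class Workflow:
    """Business logic that never references a specific provider."""

    def __init__(self, backend: ModelBackend) -> None:
        self.backend = backend

    def summarize(self, text: str) -> str:
        return self.backend.complete(f"Summarize: {text}")


wf = Workflow(EchoBackend("model-a"))
first = wf.summarize("Q3 supplier report")

# Swapping the model is a one-line change; the workflow code is untouched.
wf.backend = EchoBackend("model-b")
second = wf.summarize("Q3 supplier report")

print(first)
print(second)
```

A real backend would wrap a provider SDK or a self-hosted endpoint behind the same `complete` method, which is also where a deployment choice such as on-prem versus private VPC would be made.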

Transparent systems get better over time

Black box platforms create a learning ceiling. Organizations cannot improve what they cannot inspect. When intelligence logic remains vendor-controlled and opaque, enterprises are limited to requesting features, reporting bugs, and waiting for updates.

Transparent intelligence flips this dynamic.

When reasoning paths are visible, organizations can identify edge cases, refine decision criteria, and adapt intelligence to evolving business needs. The platform becomes a tool that improves with organizational knowledge rather than vendor schedules.

This is the difference between a tool that gets deployed and a system that gets better.

The Future of Enterprise Intelligence Is Transparent by Default

As intelligence systems move from observation to operation, the distinction between intelligence that is observed and intelligence that is owned becomes critical.

The regulatory and market signals are already clear.

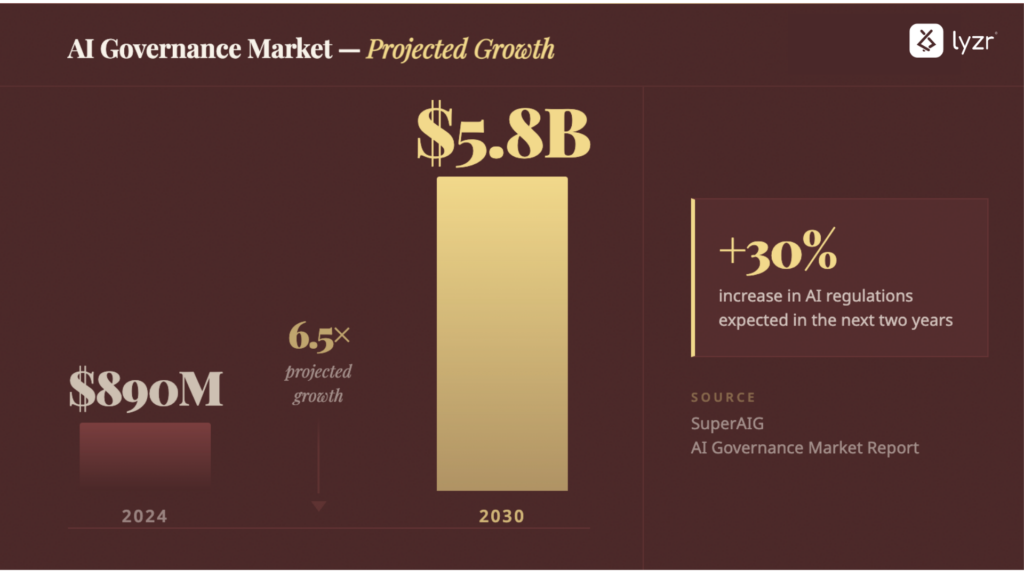

According to SuperAIG, AI regulations are expected to increase by 30% in the next two years, and the AI governance market is projected to grow from $890 million to $5.8 billion by 2030, highlighting just how urgent this has become. Yet despite the surge in adoption, Deloitte’s 2026 report found that only 34% of organizations are truly reimagining their business with AI.

The gap between deployment and ownership is wide, and it’s closing fast.

Organizations that continue relying on black box platforms will find themselves defending decisions they cannot explain, correcting failures they cannot diagnose, and depending on vendors for visibility into their own operations.

Organizations that demand transparency, on the other hand, will gain something more valuable than insight: they’ll gain control. Control over how intelligence operates. Control over how decisions get made. Control over their own operational logic.

The choice here isn’t between having intelligent systems and having transparent systems. It’s between intelligence you observe and intelligence you own.

Enterprise intelligence should not be a black box. Not because black boxes are technically inferior. But because operational intelligence demands accountability, adaptability, and alignment with enterprise judgment.

That’s not just good governance. That’s basic operational sanity.
