65% of enterprise customer support conversations fall into a small set of repeatable requests.

Order status, returns, delivery issues, and general support questions make up most inbound volume.

On the surface, these conversations look straightforward. In practice, they are not.

Some requests can be resolved with a direct answer. Others require order validation, access to backend systems, or escalation into a ticketing workflow. The complexity increases when support teams operate across multiple channels, including chat and voice.

Customers expect fast resolution, minimal back-and-forth, and no repetition, regardless of how they reach out. Each interaction must maintain context, ask only what is necessary, and move toward resolution without friction.

This shifts the problem from how to respond to how to decide.

Every interaction needs to be correctly identified, routed, and handled based on intent and operational requirements, while delivering a consistent experience across both chat and voice.

Project Background

MSP Corp is a full-service IT and digital transformation partner, delivering managed services, cybersecurity, cloud solutions, AI implementation, enterprise architecture, and other professional IT services for complex environments.

One part of this portfolio is helping enterprise brands design and operate customer support systems across chat, video, and voice channels.

As part of a broader modernization initiative, MSP Corp was engaged by a retail client to design and implement an AI-driven customer support architecture spanning chat and voice channels.

The objective was not to replace existing systems, but to introduce an approach that could better handle repeatable requests, adapt across channels, and remain controllable as interaction volumes increased.

The Existing Challenge

A large share of inbound traffic to the customer support centre consisted of familiar requests: order tracking, returns, delivery updates, and general support questions.

While these requests appeared similar, their execution paths varied significantly based on validation requirements, system access, and the context in which they arrived.

This became especially visible when these requests were handled across multiple customer support channels.

Some interactions required staged verification, while others needed fast decision-making or immediate escalation. Applying workflows uniformly, without accounting for these differences, produced inconsistent outcomes, unnecessary repetition, and added friction for both customers and support teams.

What Were the Limitations of the Existing Workflow?

Before introducing an orchestration layer, support logic relied on a single conversational interface responsible for handling interactions end to end.

The same component was tasked with understanding intent, validating requests, and triggering downstream actions. This tight coupling made decision-making rigid and difficult to evolve as requirements changed.

As support expanded across chat and voice, limitations in this approach became more apparent. A single, uniform flow struggled to accommodate differences in validation depth, pacing, and escalation timing.

This often led to inconsistent outcomes, unnecessary repetition, and fragile escalation logic. In some cases, tickets were created before sufficient validation was completed, and even small workflow changes required manual oversight to prevent unintended behavior.

What became clear was the need for a more deliberate structure, one that separated deciding what to do from executing the action.

The system needed to:

✓ Identify intent before any execution took place

✓ Route requests to specialized handling paths instead of a single flow

✓ Apply channel-aware behavior without duplicating logic

These requirements directly shaped an orchestration-led approach, where control, consistency, and channel awareness were built into the system by design rather than managed reactively.

Solution Approach & Ownership

To address these challenges, MSP Corp introduced an orchestration-led conversational architecture as part of its client support delivery.

MSP Corp designed and implemented the solution end to end, defining agent responsibilities, routing logic, and execution flows to ensure consistent behavior across chat and voice channels.

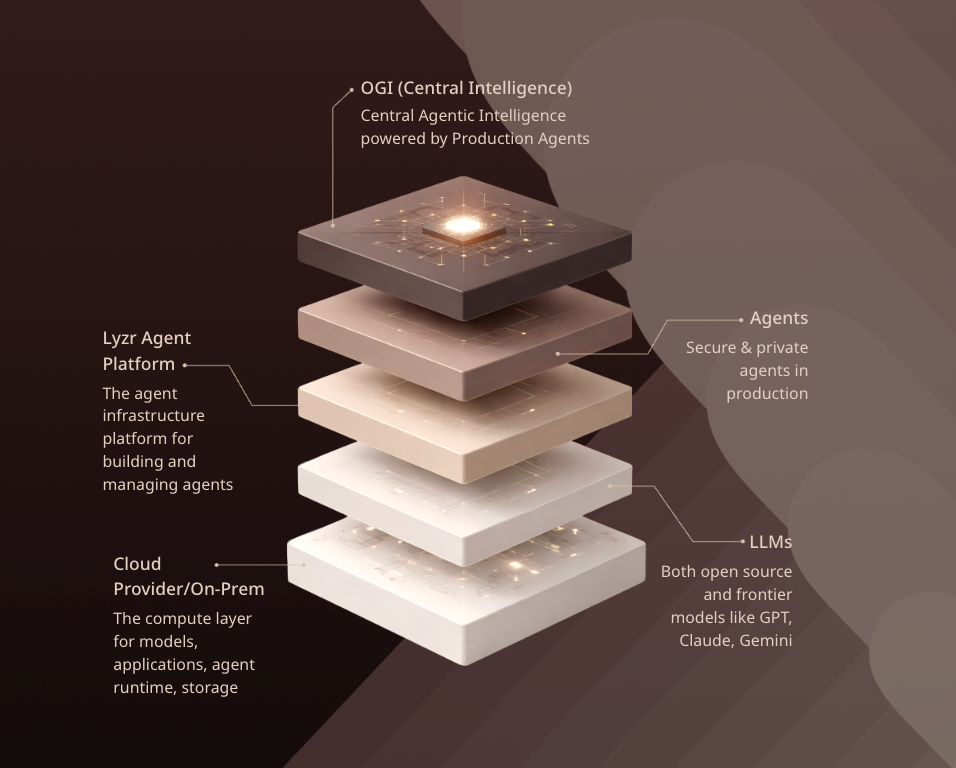

The system was implemented using Lyzr’s agent orchestration framework, which enabled a clear separation between:

- Intent detection

- Request execution

- Response handling

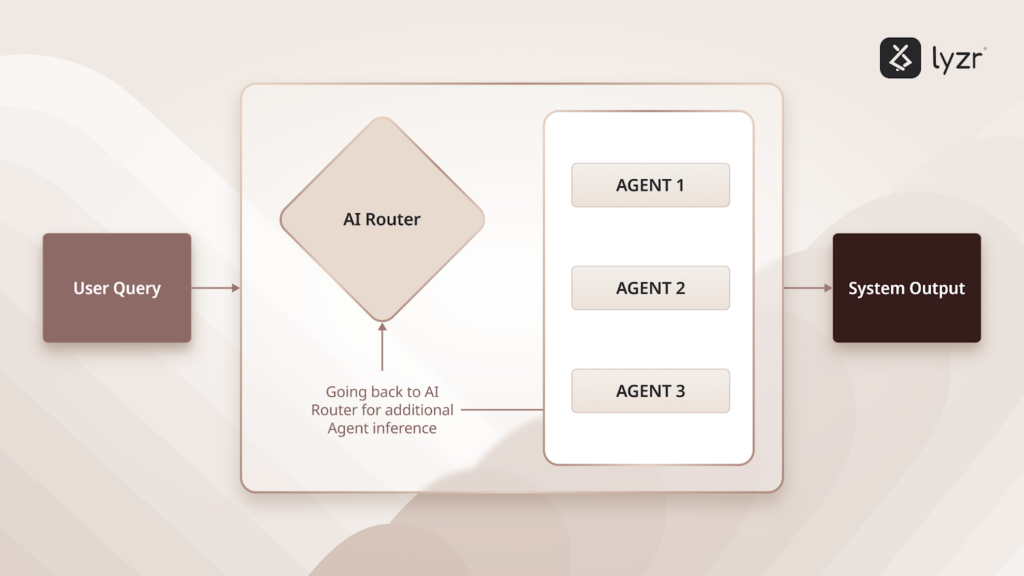

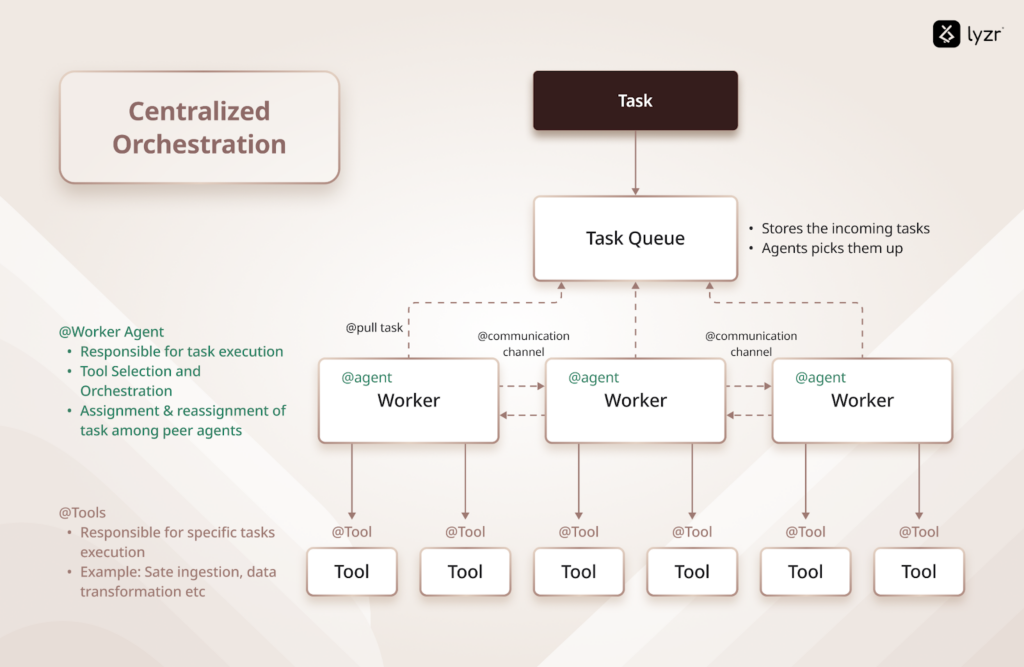

At the core of the solution is a manager–sub-agent model:

- A central Manager Agent is responsible for intent detection, request routing, and response normalization

- Specialized sub-agents handle verification, escalation, FAQ-style queries, and standardized macro responses

Why MSP Corp Chose Lyzr

MSP Corp evaluated orchestration platforms based on their ability to support a structured, governable AI architecture without disrupting existing systems or introducing opaque decision logic.

A key requirement was maintaining explicit control over how intents are classified, how actions are triggered, and how responsibilities are distributed across agents, particularly in environments where support workflows span both chat and voice channels.

Lyzr was selected because it enabled:

- Explicit separation between intent detection and execution

- Scoped responsibilities across multiple agents

- Channel-aware behavior without duplicating logic

- Integration with existing ticketing and order systems

This aligned with MSP Corp’s broader approach to designing AI systems that are predictable, auditable, and adaptable over time, so that automation can scale without sacrificing oversight, reliability, or operational intent.

How Lyzr Was Applied in the Solution

Once Lyzr was selected as the orchestration layer, MSP Corp applied it to design a support architecture that separates decision-making from execution.

Rather than introducing a single conversational agent, the system was structured around explicit control and delegation. Lyzr was used to orchestrate how requests are classified, routed, and executed across specialized agents, while preserving flexibility across chat and voice channels.

This approach allowed MSP Corp to:

- Centralize intent detection and routing

- Isolate system access and business logic

- Apply consistent decision logic across channels

- Adapt execution behavior based on chat and voice constraints

This orchestration-first design directly shaped the system architecture.

Architecture Overview

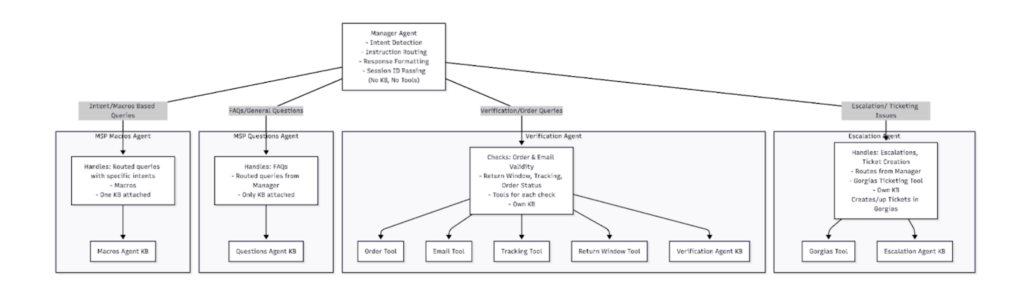

The AI support system implemented by MSP Corp is built on a manager–sub-agent orchestration model, designed to separate decision-making from execution.

Central Orchestration Layer

A single Manager Agent acts as the control layer for every interaction. It is responsible for:

- Detecting user intent

- Routing requests to the appropriate sub-agent

- Passing instructions and identifiers where applicable

- Normalizing responses before returning them to the user

The Manager Agent does not maintain its own knowledge base or system access. This ensures that all business logic and integrations remain contained within specialized execution agents.
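The four responsibilities listed above can be illustrated with a minimal sketch. This is not Lyzr's API or MSP Corp's implementation: the keyword rules, the six-digit order number pattern, and every name (`ManagerAgent`, `detect_intent`, `extract_order_id`, `Reply`) are assumptions made for illustration. The point is only that the manager classifies, delegates, and normalizes, while holding no knowledge base or system access of its own.

```python
import re
from dataclasses import dataclass
from typing import Callable, Dict, Optional


@dataclass(frozen=True)
class Reply:
    intent: str
    text: str


# A sub-agent receives the user text plus an optional identifier and returns raw text.
SubAgent = Callable[[str, Optional[str]], str]

# Hypothetical keyword rules; a real deployment would use a proper classifier.
INTENT_KEYWORDS = {
    "escalation": ("complaint", "human", "manager"),
    "verification": ("order", "tracking", "delivery"),
    "macros": ("return policy", "opening hours"),
}


def detect_intent(text: str) -> str:
    """Return the first intent whose keywords appear, else the FAQ fallback."""
    lowered = text.lower()
    for intent, keywords in INTENT_KEYWORDS.items():
        if any(keyword in lowered for keyword in keywords):
            return intent
    return "questions"


def extract_order_id(text: str) -> Optional[str]:
    """Assumed format: an order number is a run of six or more digits."""
    match = re.search(r"\b\d{6,}\b", text)
    return match.group(0) if match else None


class ManagerAgent:
    """Control layer only: it holds references to sub-agents, not to tools or KBs."""

    def __init__(self, sub_agents: Dict[str, SubAgent]) -> None:
        self._sub_agents = sub_agents

    def handle(self, text: str) -> Reply:
        intent = detect_intent(text)                   # 1. detect intent
        order_id = extract_order_id(text)              # 2. collect identifiers
        raw = self._sub_agents[intent](text, order_id)  # 3. route and delegate
        return Reply(intent=intent, text=" ".join(raw.split()))  # 4. normalize
```

Because sub-agents are passed in as plain callables, the routing rules can be tested with stubs, without touching any order or ticketing system.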

Specialized Execution Agents

Each sub-agent is designed to handle a specific category of requests, with scoped knowledge and controlled system access.

| Sub-Agent | Responsibility | Integrations |
| --- | --- | --- |
| Escalation Agent | Ticket creation and escalation | Ticketing system, Escalation KB |
| Verification Agent | Order validation and status checks | Order tools, Verification KB |
| Questions Agent | General and FAQ-style queries | Questions KB |
| Macros Agent | Standardized, intent-specific responses | Macros KB |

This separation enables predictable behavior, controlled downstream actions, and easier governance as the system evolves.
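One way to picture the scoping in the table is as an explicit allow-list per agent. The sketch below is an assumption about how such scoping could be expressed, not a description of the actual system: the integration identifiers (`ticketing_system`, `order_tools`, and so on) and the helper names are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet


@dataclass(frozen=True)
class AgentScope:
    responsibility: str
    integrations: FrozenSet[str]


# Mirrors the table: each sub-agent sees only its own tools and knowledge base.
AGENT_SCOPES: Dict[str, AgentScope] = {
    "escalation": AgentScope(
        "Ticket creation and escalation",
        frozenset({"ticketing_system", "escalation_kb"}),
    ),
    "verification": AgentScope(
        "Order validation and status checks",
        frozenset({"order_tools", "verification_kb"}),
    ),
    "questions": AgentScope(
        "General and FAQ-style queries",
        frozenset({"questions_kb"}),
    ),
    "macros": AgentScope(
        "Standardized, intent-specific responses",
        frozenset({"macros_kb"}),
    ),
}


def can_access(agent: str, integration: str) -> bool:
    """True only if the integration is inside the agent's declared scope."""
    scope = AGENT_SCOPES.get(agent)
    return scope is not None and integration in scope.integrations


def require_access(agent: str, integration: str) -> None:
    """Raise before any downstream call that falls outside the agent's scope."""
    if not can_access(agent, integration):
        raise PermissionError(f"{agent!r} may not use {integration!r}")
```

The manager is deliberately absent from `AGENT_SCOPES`, so any attempt to call a tool on its behalf is rejected.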

Chatbot Module (Session-Aware Support)

The chatbot implementation uses the manager–sub-agent architecture in a session-aware mode, allowing context to be preserved across multiple turns within a conversation.

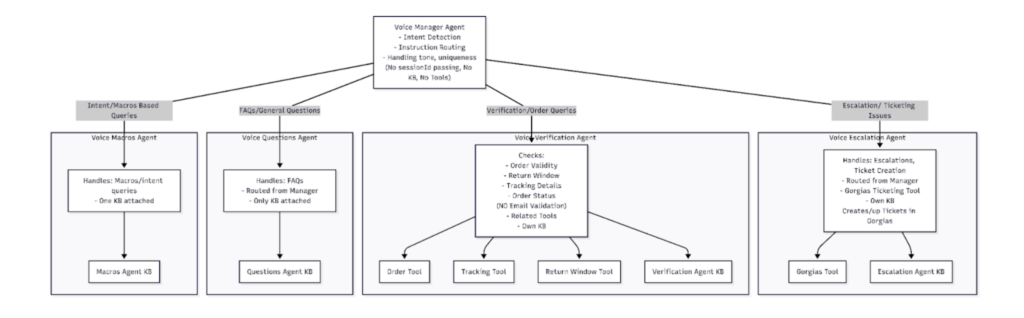
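Session awareness can be sketched as a per-conversation record that later turns read from. The example below is illustrative only: the in-memory store, the `ChatSessions` class, the reply strings, and the six-digit order number pattern are all assumptions, and a production system would delegate the actual lookup to a sub-agent.

```python
import re
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class Session:
    history: List[str] = field(default_factory=list)
    order_id: Optional[str] = None


class ChatSessions:
    """Keeps one Session per conversation so details are asked for only once."""

    def __init__(self) -> None:
        self._sessions: Dict[str, Session] = {}

    def handle_turn(self, session_id: str, text: str) -> str:
        session = self._sessions.setdefault(session_id, Session())
        session.history.append(text)

        # Assumed format: an order number is a run of six or more digits.
        match = re.search(r"\b\d{6,}\b", text)
        if match:
            session.order_id = match.group(0)

        if session.order_id is None:
            return "Could you share your order number?"
        # The stored identifier is reused on every later turn in this session.
        return f"Checking order {session.order_id}."
```

Once the customer has given an order number, a follow-up such as "Any update?" is answered against the stored identifier instead of prompting again.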

Voice Agent Module (Stateless Support)

The voice implementation uses the same manager–sub-agent architecture, adapted for a stateless interaction model.

Unlike chat, voice interactions do not preserve conversation history. Each user request is treated as a standalone input, prioritizing speed, clarity, and minimal user effort.
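By contrast, a stateless handler can be written as a pure function of the current utterance. This is a sketch under assumptions (the function name, the reply wording, and the six-digit order number pattern are hypothetical), meant only to show that nothing carries over between calls.

```python
import re


def handle_voice_request(utterance: str) -> str:
    """Treat each utterance as standalone: no history is read or written."""
    # Assumed format: an order number is a run of six or more digits.
    match = re.search(r"\b\d{6,}\b", utterance)
    if match is None:
        # Everything needed must arrive in a single request.
        return "Please say your order number along with your request."
    return f"Checking order {match.group(0)}."
```

Calling the function twice shows the trade-off: a second request without an order number is prompted again, since the first call left no state behind.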

Outcomes & Results

The implemented solution significantly improved how customer support requests were classified, routed, and executed across both chat and voice channels. Informational queries, verification requests, and escalation cases were clearly separated, ensuring that each interaction followed the appropriate handling path.

Ticket creation became more controlled, triggered only when intent and required conditions were met. Chat interactions benefited from context-aware, multi-step handling, while voice interactions resolved faster through simplified, low-friction flows.

Despite these channel-specific adaptations, the same core decision logic was applied consistently across both environments.
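A shared decision function with channel-specific wording is one way to get this combination of consistent logic and adapted behavior. The sketch below assumes, for illustration, that a ticket requires both an escalation intent and a verified order; the action names, messages, and the `Context` type are hypothetical rather than taken from the deployed system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Context:
    intent: str           # "escalation", "verification", "questions", or "macros"
    order_verified: bool


def decide(ctx: Context) -> str:
    """Channel-independent decision: chat and voice share these rules."""
    needs_order = ctx.intent in ("escalation", "verification")
    if needs_order and not ctx.order_verified:
        return "verify_order"   # never create a ticket before validation
    if ctx.intent == "escalation":
        return "create_ticket"
    if ctx.intent == "verification":
        return "report_status"
    return "answer"


# Only the wording differs per channel; the decision above is not duplicated.
MESSAGES = {
    "chat": {
        "verify_order": "Before I continue, could you confirm your order number?",
        "create_ticket": "I have opened a ticket and will keep this chat updated.",
        "report_status": "Here is the latest status for your order.",
        "answer": "Here is what I found.",
    },
    "voice": {
        "verify_order": "Please say your order number.",
        "create_ticket": "A ticket has been created.",
        "report_status": "Here is your order status.",
        "answer": "Here is the answer.",
    },
}


def respond(ctx: Context, channel: str) -> str:
    return MESSAGES[channel][decide(ctx)]
```

For example, `decide(Context("escalation", False))` returns `"verify_order"` on either channel, so an unverified escalation cannot reach ticket creation whether it arrives by chat or by voice.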

From a business perspective, this led to reduced manual intervention for repeatable support requests and far more predictable escalation behavior.

Resolution speed improved without sacrificing accuracy, and the resulting support architecture proved capable of scaling with increased volume while remaining governable and easy to manage.

Wrapping Up

By adopting a manager–sub-agent architecture, MSP Corp implemented a more controlled and scalable approach to conversational support across chat and voice channels for its client environments.

The solution, designed and implemented by MSP Corp and enabled through Lyzr’s agent orchestration framework, separates intent detection from execution. This allows each interaction to be handled with the appropriate level of verification, escalation, or automation based on both intent and channel constraints.

As a result, MSP Corp now operates a customer support architecture that is consistent, governable, and adaptable, without relying on a single monolithic conversational agent, making it suitable for deployment across multiple client engagements.
