20 Questions Every Enterprise Should Ask Before It’s Too Late

What if your “simple AI rollout” quietly locked your company into a 5‑year decision you never really evaluated?

Most teams evaluate ChatGPT Enterprise like a SaaS tool.

They look at features, UI, and a year‑one price.

They do not realize they are actually choosing an AI operating system for the next 3–5 years.

Once:

- Your employees are trained on it

- Your workflows depend on it

- Your data lives inside it

…switching becomes expensive, political, and risky.

This is not about attacking ChatGPT Enterprise.

It is about making sure you do not confuse “good enough for a pilot” with “safe to bet the business on.”

So instead of a spec sheet, think of this as a “reality checklist” woven into a narrative:

Twenty questions that quietly decide whether your AI strategy will scale or snap.

Before you read further, you can feel the difference yourself by trying a multi‑model, multi‑vendor setup at chat.lyzr.app, where you can switch between GPT, Claude, and Gemini in a single conversation.

Chapter 1: Where Does Your AI Actually Live?

Let’s start with the question every regulator, CISO, and board member eventually asks:

Where does our data go at 2:37 a.m. when no one is watching?

In regulated industries like BFSI, healthcare, and government, “we don’t train on your data” sounds nice. But it is not the question.

The real question is: does the data ever leave your walls at all?

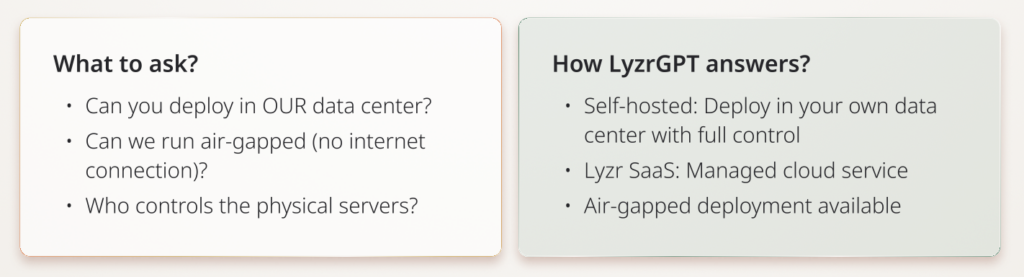

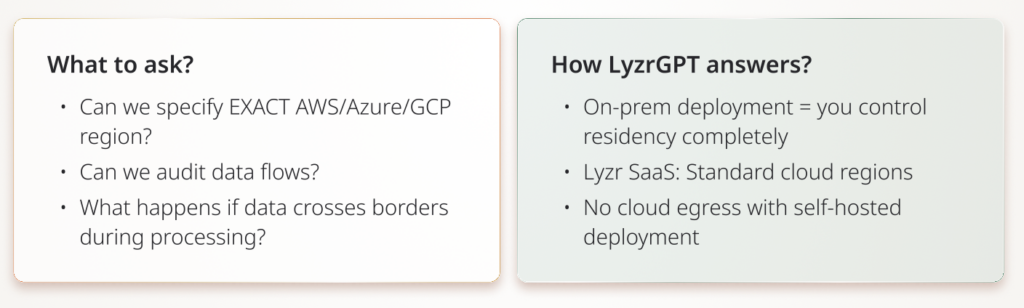

Q1: Can we deploy this entirely on‑premises or in our own VPC?

Imagine a bank that has spent a decade building a fortress‑grade infrastructure.

Then, overnight, every sensitive conversation suddenly starts flowing through a third‑party cloud. No matter how many times a vendor says “SOC 2,” the regulator’s eyebrow still goes up.

What ChatGPT Enterprise offers:

Cloud-based deployment. Data is encrypted and SOC 2 compliant, but it lives on OpenAI’s infrastructure (or on Azure OpenAI Service if you use the API separately).

You are not renting a black box. You are extending your own architecture.

Q2: Can we guarantee data governance?

You close a flagship client in Germany.

Six months later, a regulator asks, “Prove this data never left the EU.”

If all you have is a slide and marketing copy, you are exposed.

What ChatGPT Enterprise offers:

Supports regional data residency options (7 specific regions), but ultimately cloud-based.

Residency becomes a design choice, not a slide in a sales deck.

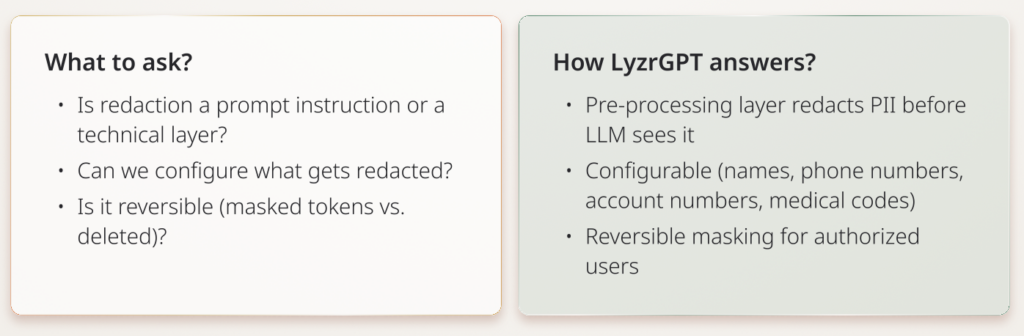

Q3: Do you have PII redaction at the infrastructure level?

Most AI systems rely on prompts like “don’t include PII.” That’s not enough. You need automatic redaction before data touches the model. You need a hard barrier.

What ChatGPT Enterprise offers:

Standard content moderation. No infrastructure-level PII redaction mentioned in public documentation.

Safety becomes architecture, not vibes.
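Here is a minimal sketch of what “a hard barrier” can mean in practice. It is a gateway function that redacts PII before any prompt reaches a model. The patterns, the placeholder labels, and the `call_model` callable are illustrative assumptions. Real deployments need much broader detection (names, addresses, national IDs), usually with an NER model rather than regex alone.

```python
import re
from typing import Callable

# Illustrative patterns only (an assumption, not a complete PII policy).
# Order matters: each pattern runs on the output of the previous one.
PII_PATTERNS: dict[str, re.Pattern] = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "CARD": re.compile(r"\b(?:\d[ -]?){12,15}\d\b"),  # 13 to 16 digits, optional separators
}


def redact(text: str) -> str:
    """Replace every PII match with a typed placeholder such as [EMAIL]."""
    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


def send_to_model(prompt: str, call_model: Callable[[str], str]) -> str:
    """Single entry point to any model, so callers cannot skip redaction."""
    return call_model(redact(prompt))


print(redact("Email jane.doe@example.com, SSN 123-45-6789, card 4111 1111 1111 1111."))
# Email [EMAIL], SSN [SSN], card [CARD].
```

The design choice that matters is the single `send_to_model` entry point. Redaction belongs to the infrastructure, not to each prompt author.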

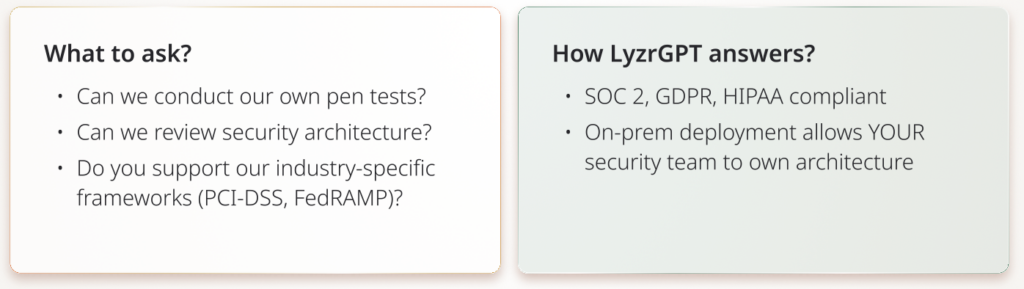

Q4: What compliance certifications do you have, and can we audit?

For a board or regulator, SOC 2 is table stakes. The real question is: can we audit your security ourselves? Will you sign our BAA (HIPAA)? Our DPA (GDPR)?

What ChatGPT Enterprise offers:

- SOC 2 Type 2

- GDPR DPA available

- HIPAA BAA available (Enterprise, Team, Edu, API tiers)

LyzrGPT goes further with private VPC deployment offering full logs and transparency for auditing, plus a Responsible AI Framework that ensures data compliance through infrastructure-level controls.

Compliance shifts from “trust our paperwork” to “own your stack.”

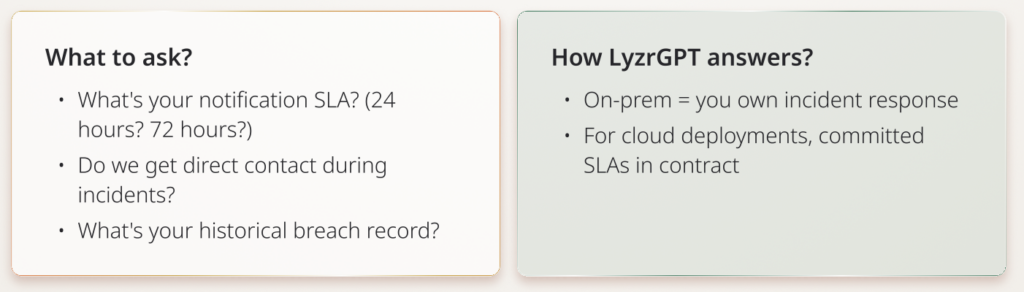

Q5: What’s your incident response and breach notification process?

When (not if) there is a security incident, how fast will you notify us, and through which channel will we find out?

You should never first hear about your AI vendor’s breach on X.

What ChatGPT Enterprise offers:

Standard enterprise SLAs. Specific notification SLAs typically negotiated per contract.

You are not waiting on a PR cycle. You are executing a plan.

Chapter 2: The Cost Story No One Puts On The Slide

At first glance, AI pricing feels simple. Then adoption spikes, and suddenly Finance is asking, “Why did our AI bill triple last quarter?”

This chapter is about the quiet financial compounding that turns a clever pilot into a budget fight.

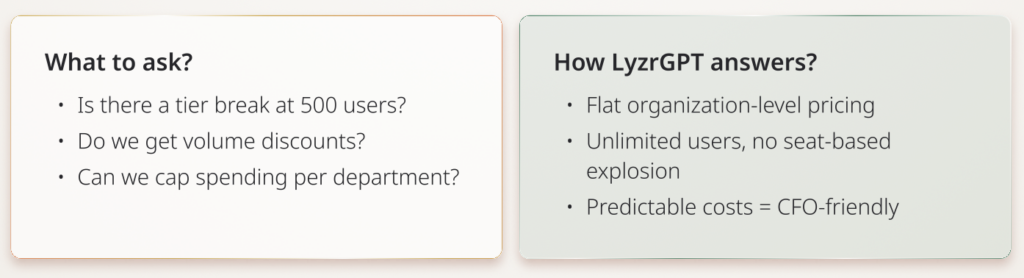

Q6: What’s the true cost when we scale from 150 to 500 to 1,000 users?

At 150 users, $60/user/month is $108K/year.

At 500 users, it jumps to $360K/year.

At 1,000 users, it reaches $720K/year, and you are negotiating with the CFO, not just IT.

AI should be a force multiplier, not a recurring budget crisis.
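The arithmetic above is easy to rerun with your own numbers. This is a minimal sketch. It assumes the estimated $60/user/month price and no volume discount, and both are assumptions, since negotiated prices differ.

```python
def annual_seat_cost(users: int, price_per_user_month: float = 60.0) -> float:
    """Seat-based cost for one year: users x monthly price x 12 months."""
    return users * price_per_user_month * 12


# $60/user/month is an estimate, not a published price.
for users in (150, 500, 1_000):
    print(f"{users:,} users: ${annual_seat_cost(users):,.0f}/year")
# 150 users: $108,000/year
# 500 users: $360,000/year
# 1,000 users: $720,000/year
```

The same function gives the minimum commitment discussed in Q9. `annual_seat_cost(150)` is $108,000 for a 150-seat, 12-month contract.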

What ChatGPT Enterprise offers:

Seat-based pricing. Estimated ~$60/user/month for Enterprise (not publicly listed). Requires sales negotiation.

Your AI story scales with your people, not against your budget.

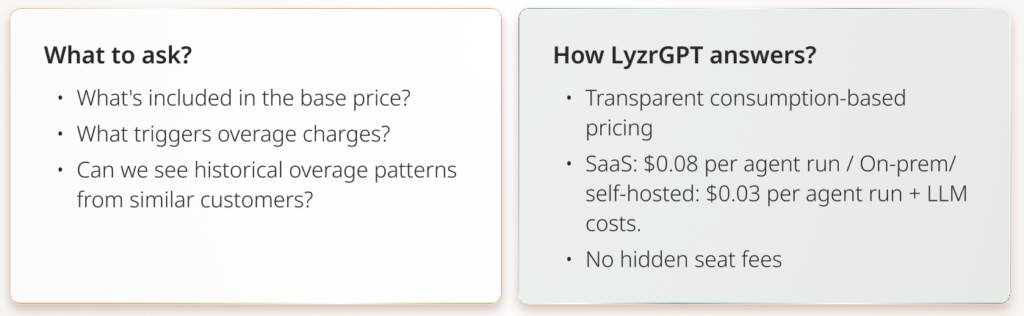

Q7: Are there overage fees or hidden costs beyond the subscription?

Seat fees are obvious. But what about API usage? Model upgrades? Storage? Integration fees? These show up as “miscellaneous” on invoices and as headaches in budget reviews.

What ChatGPT Enterprise offers:

Includes API credits. Additional API usage beyond credits may incur costs.

Your finance team should never need a decoder ring for the AI bill.
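One way to keep the bill decodable is to model every line item explicitly. The sketch below uses assumed inputs. The usage units, the included allowance, the overage rate, and the other fees are hypothetical placeholders, not OpenAI’s actual terms.

```python
from dataclasses import dataclass


@dataclass
class CostInputs:
    # All values are hypothetical placeholders; substitute your contract's terms.
    seats: int
    seat_price_month: float
    usage_units_month: float      # e.g. API calls or tokens, in the contract's unit
    included_units_month: float   # allowance covered by the subscription
    overage_price_per_unit: float
    other_fees_year: float = 0.0  # storage, integrations, support


def annual_breakdown(c: CostInputs) -> dict[str, float]:
    """Return each cost line separately so Finance can see where the money goes."""
    seats = c.seats * c.seat_price_month * 12
    overage_units = max(0.0, c.usage_units_month - c.included_units_month)
    overage = overage_units * c.overage_price_per_unit * 12
    return {
        "seats": seats,
        "overage": overage,
        "other": c.other_fees_year,
        "total": seats + overage + c.other_fees_year,
    }


print(annual_breakdown(CostInputs(500, 60.0, 14_000, 10_000, 0.5, 15_000.0)))
# {'seats': 360000.0, 'overage': 24000.0, 'other': 15000.0, 'total': 399000.0}
```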

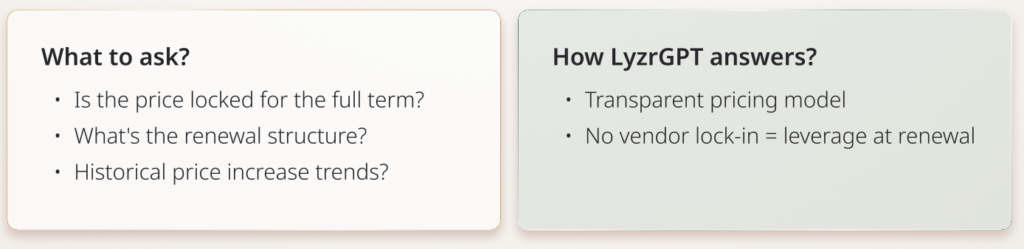

Q8: Is pricing locked for the contract term? What about renewals?

Many SaaS contracts quietly bake in price escalators at renewal. If you’re locked into auto-renewal with price escalation clauses, you have zero leverage.

What ChatGPT Enterprise offers:

Pricing negotiated per contract. Annual contracts are typical. The details vary and are often asymmetric in favor of the vendor.

When you can leave, you negotiate from strength.
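Escalators compound, so a small annual percentage matters more than it looks. This sketch applies a hypothetical 7% renewal escalator to the 500-seat figure from Q6.

```python
def yearly_costs(year_one: float, escalator: float, years: int) -> list[float]:
    """Cost for each contract year when the price rises by `escalator` at every renewal."""
    return [year_one * (1 + escalator) ** year for year in range(years)]


costs = yearly_costs(360_000, 0.07, 3)  # 7% is an assumed escalator, not a quoted term
print([f"${c:,.0f}" for c in costs])  # ['$360,000', '$385,200', '$412,164']
print(f"3-year total: ${sum(costs):,.0f} vs ${360_000 * 3:,} flat")
# 3-year total: $1,157,364 vs $1,080,000 flat
```

Over three years, the assumed escalator adds $77,364 compared with a flat price. That gap is the leverage an exit option protects.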

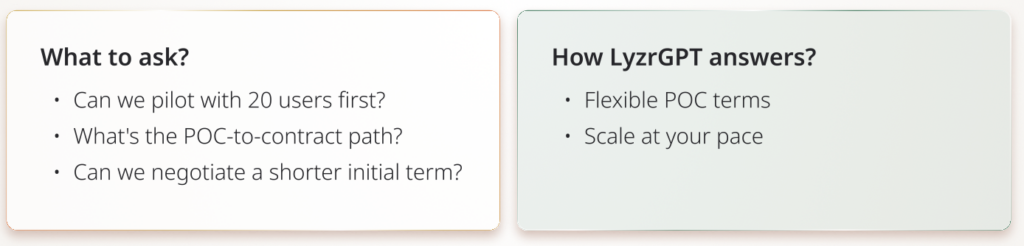

Q9: Can we start with a POC before committing to an annual contract?

A lot of AI journeys begin with, “Let’s just try it with a small team.” But if the minimum is 150 users × $60/month = $9K/month with a 12‑month lock, that is no longer a pilot. It is a $108K bet.

What ChatGPT Enterprise offers:

150-seat minimum, 12-month commitment required (based on research).

You should be testing hypotheses, not signing away optionality.

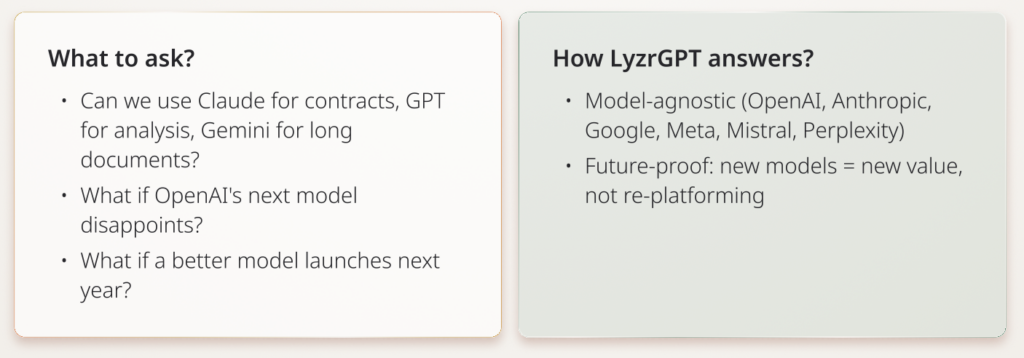

Chapter 3: Models, Lock‑In, And The AI Future You Cannot Yet See

This is where most teams underestimate the speed of AI evolution. Models are not static products. They are moving targets that change every quarter.

Locking into a single vendor is like signing a 5‑year deal with one smartphone maker before the iPhone appears.

Q10: Can we use AI models besides OpenAI’s?

Different models are already better at different things:

- Claude often shines in legal and long‑form reasoning

- Gemini offers multi‑million token context windows

- Llama is open‑weight and cost‑efficient to self‑host

If you tie your fate to one vendor, you are betting their roadmap will always be enough.

What ChatGPT Enterprise offers:

OpenAI models only (GPT-4, GPT-4o, GPT-5).

Your strategy becomes: “best model for each job,” not “best we can do with what we chose two years ago.”
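Architecturally, “best model for each job” is a routing layer. This is a minimal sketch. The task types, the model names, and the provider callables are hypothetical stand-ins for whatever SDK clients you actually use.

```python
from typing import Callable

Provider = Callable[[str], str]  # takes a prompt, returns the model's reply


class ModelRouter:
    """Send each task type to the model configured for it, with a default fallback."""

    def __init__(self, providers: dict[str, Provider], routes: dict[str, str], default: str):
        # Fail fast if a route points at a provider that was never configured.
        missing = {default, *routes.values()} - set(providers)
        if missing:
            raise ValueError(f"routes reference unknown providers: {sorted(missing)}")
        self.providers = providers
        self.routes = routes
        self.default = default

    def run(self, task_type: str, prompt: str) -> str:
        model = self.routes.get(task_type, self.default)
        return self.providers[model](prompt)


# Hypothetical stand-ins; in practice each would wrap a vendor SDK call.
providers = {name: (lambda p, n=name: f"[{n}] {p}") for name in ("gpt", "claude", "gemini")}
router = ModelRouter(
    providers,
    routes={"legal_review": "claude", "long_document": "gemini"},
    default="gpt",
)
print(router.run("legal_review", "Summarize clause 7"))  # [claude] Summarize clause 7
print(router.run("brainstorm", "Name ideas"))            # [gpt] Name ideas
```

Swapping a model then becomes a one-line change to `routes`, not a migration.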

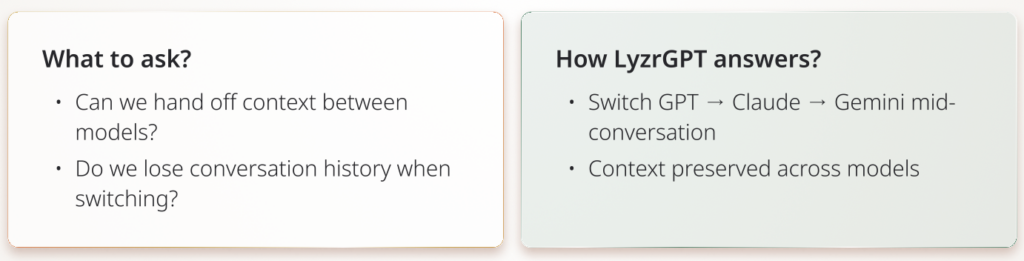

Q11: Can we switch models mid‑conversation without losing context?

In real work, a single thread might move from:

- Brainstorming with GPT‑4

- Deep structured writing with Claude

- Long document analysis with Gemini

If switching models means starting a new chat, you are losing time and context.

What ChatGPT Enterprise offers:

Locked to OpenAI models per conversation.

It feels like one intelligent workspace, not a set of disconnected rooms.
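Keeping context across models mostly means owning the conversation history yourself instead of leaving it inside one vendor’s session. This sketch assumes each provider is wrapped in a hypothetical adapter that accepts a list of `{"role", "content"}` messages.

```python
from dataclasses import dataclass, field
from typing import Callable

Message = dict[str, str]
ChatProvider = Callable[[list[Message]], str]  # hypothetical adapter around a vendor SDK


@dataclass
class Conversation:
    """Vendor-neutral history: whichever model is chosen sees the full thread so far."""

    messages: list[Message] = field(default_factory=list)

    def ask(self, model: str, prompt: str, providers: dict[str, ChatProvider]) -> str:
        self.messages.append({"role": "user", "content": prompt})
        # Pass only role/content so each adapter receives a clean, portable payload.
        history = [{"role": m["role"], "content": m["content"]} for m in self.messages]
        reply = providers[model](history)
        # Record which model answered, for auditing, in our own store.
        self.messages.append({"role": "assistant", "content": reply, "model": model})
        return reply


providers = {
    "gpt": lambda msgs: f"gpt got {len(msgs)} msgs",
    "claude": lambda msgs: f"claude got {len(msgs)} msgs",
}
chat = Conversation()
print(chat.ask("gpt", "Brainstorm launch names", providers))            # gpt got 1 msgs
print(chat.ask("claude", "Turn the best one into a brief", providers))  # claude got 3 msgs
```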

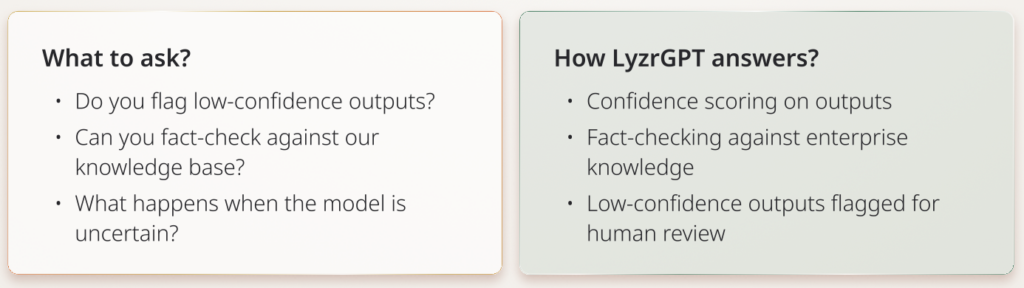

Q12: How do you handle hallucinations and inaccurate outputs?

A hallucinated poem is cute.

A hallucinated compliance report is a liability.

A fabricated contract clause is a lawsuit in slow motion.

What ChatGPT Enterprise offers:

Standard content moderation. No public documentation of confidence scoring or fact-checking layers.

You are not outsourcing judgment; you are augmenting it with guardrails.
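One simple guardrail for high-stakes outputs is to flag any quoted passage that cannot be found in the source document. The sketch below is deliberately narrow. It only checks text inside double quotes, matched verbatim after whitespace and case normalization, and it complements human review rather than replacing it.

```python
import re


def _normalize(text: str) -> str:
    """Collapse whitespace and lowercase so line breaks don't cause false alarms."""
    return " ".join(text.split()).lower()


def unsupported_quotes(answer: str, source: str) -> list[str]:
    """Return quoted passages in `answer` that do not appear in `source`."""
    quotes = re.findall(r'"([^"]+)"', answer)
    src = _normalize(source)
    return [q for q in quotes if _normalize(q) not in src]


contract = "The Supplier shall deliver all goods within 30 days of the order date."
draft = 'Clause 4 says the supplier must "deliver all goods within 30 days" and "pay a 5% penalty for delays".'
print(unsupported_quotes(draft, contract))  # ['pay a 5% penalty for delays']
```

A non-empty result would route the draft to a reviewer instead of straight to the client.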

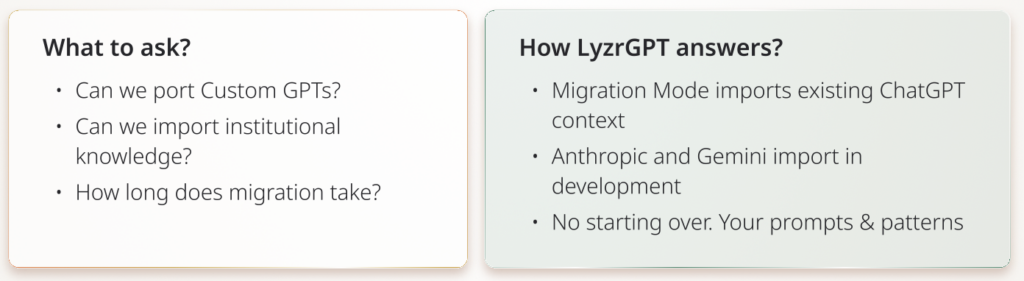

Q13: Can we import our existing ChatGPT/Copilot conversation history?

Your teams already have:

- Saved prompts in ChatGPT

- Copilot workflows inside Office

- Tribal knowledge spread across different tools

If migration means “everyone starts from zero,” adoption will be painful and slow.

What ChatGPT Enterprise offers:

Migration from personal ChatGPT accounts to Enterprise isn’t seamless; research flags this as a known gap.

You carry your intelligence forward, instead of abandoning it.

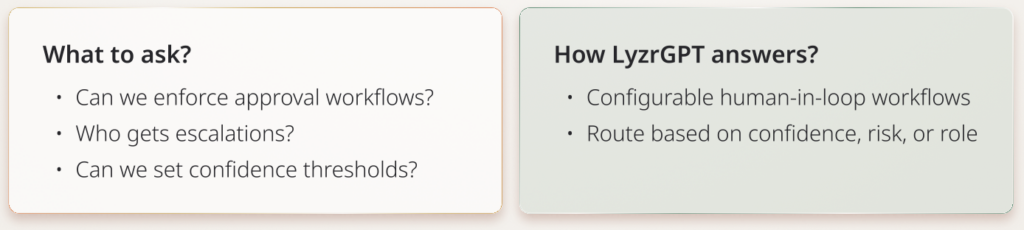

Q14: Do you support human‑in‑the‑loop for high‑stakes workflows?

Some tasks must have human checkpoints:

- Compliance approvals

- Contract sign‑offs

- Financial actions

Your AI should be able to say, “Stop here, a human must decide.”

What ChatGPT Enterprise offers:

Custom GPTs can be configured with workflows, but human-in-the-loop specifics are not detailed publicly.

Your AI behaves more like a junior analyst, not an unsupervised executor.
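“Stop here, a human must decide” can be enforced in code rather than in a prompt. Here is a minimal sketch of an approval gate. The action kinds and the `execute` callback are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Action:
    kind: str
    payload: dict = field(default_factory=dict)


class ApprovalGate:
    """Run low-risk actions immediately; park high-stakes ones until a named human approves."""

    def __init__(self, high_stakes: set[str], execute: Callable[[Action], None]):
        self.high_stakes = high_stakes
        self.execute = execute
        self.pending: list[Action] = []

    def submit(self, action: Action) -> str:
        if action.kind in self.high_stakes:
            self.pending.append(action)  # the agent stops here
            return "pending_approval"
        self.execute(action)
        return "executed"

    def approve(self, index: int, approver: str) -> None:
        action = self.pending.pop(index)
        action.payload["approved_by"] = approver  # keep the decision-maker on record
        self.execute(action)


done: list[Action] = []
gate = ApprovalGate({"payment", "contract_signoff", "compliance_approval"}, done.append)
print(gate.submit(Action("draft_summary")))                # executed
print(gate.submit(Action("payment", {"amount": 25_000})))  # pending_approval
gate.approve(0, approver="finance.lead")
print(done[-1].payload)  # {'amount': 25000, 'approved_by': 'finance.lead'}
```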

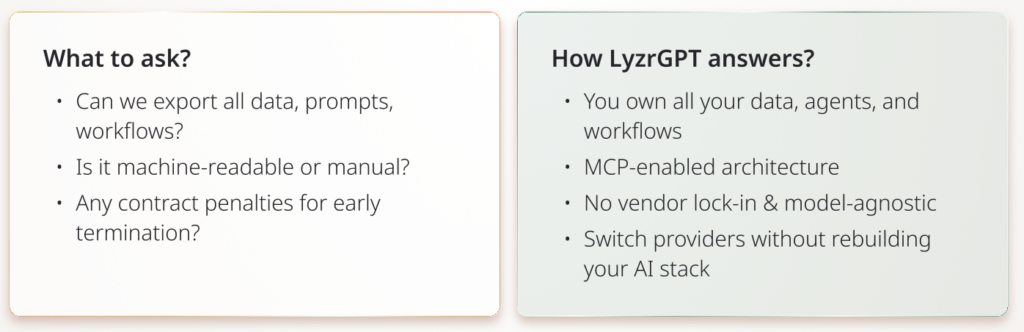

Q15: What’s our exit strategy if we need to switch vendors?

No one wants to talk about breakups at the start of a relationship.

But markets move, vendors pivot, M&A happens, regulation changes.

You need a clear exit ramp before you merge traffic.

What ChatGPT Enterprise offers:

Data export available, but process and format not detailed publicly.

You are never trapped by yesterday’s decision.
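An exit ramp starts with a format you control. This sketch exports conversations to JSON Lines, one message per line. The input schema (`id`, `messages`, and the message fields) is an assumed internal format, not any vendor’s actual export layout.

```python
import json


def to_jsonl(conversations: list[dict]) -> str:
    """Flatten conversations into JSON Lines: one self-describing record per message."""
    lines = []
    for conv in conversations:
        for turn, msg in enumerate(conv["messages"]):
            record = {"conversation_id": conv["id"], "turn": turn, **msg}
            lines.append(json.dumps(record, ensure_ascii=False))
    return ("\n".join(lines) + "\n") if lines else ""


sample = [{"id": "c1", "messages": [
    {"role": "user", "content": "Draft the Q3 memo"},
    {"role": "assistant", "content": "Here is a draft.", "model": "gpt"},
]}]
print(to_jsonl(sample), end="")
# {"conversation_id": "c1", "turn": 0, "role": "user", "content": "Draft the Q3 memo"}
# {"conversation_id": "c1", "turn": 1, "role": "assistant", "content": "Here is a draft.", "model": "gpt"}
```

Because each line is plain JSON, any tool that reads JSON can re-import the file. That portability is the point of an exit format.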

Chapter 4: Your AI Should Behave Like An Enterprise System, Not A Toy

The difference between a clever chatbot and a real AI platform is architecture.

In an enterprise, nothing important is ever a single system.

Everything is an orchestration.

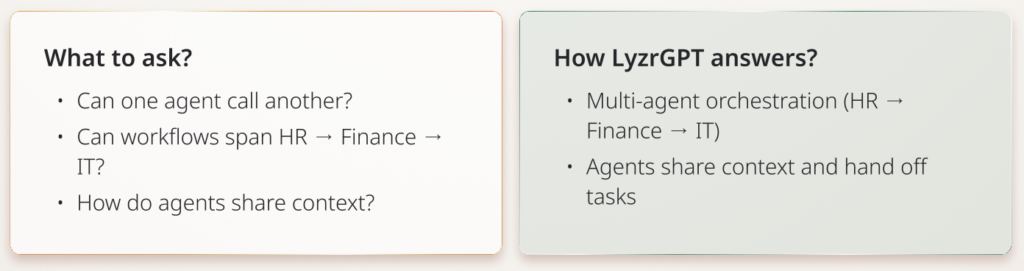

Q16: Can agents orchestrate across departments and systems?

Real workflows cross:

- HR onboarding

- Finance provisioning

- IT account creation

If each agent is a silo, you end up with islands of automation that never talk.

What ChatGPT Enterprise offers:

Custom GPTs available, but multi-agent orchestration across systems is not a highlighted feature.

Your AI operates like a cross‑functional team, not a collection of disconnected bots.
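Cross-department orchestration boils down to agents passing a shared, auditable context from one step to the next. This is a deliberately simplified sketch of the onboarding example. The three agent functions are hypothetical stand-ins for agents that would call real HR, finance, and IT systems.

```python
from typing import Callable

Context = dict
Agent = Callable[[Context], Context]  # reads the shared context, returns new fields


def hr_agent(ctx: Context) -> Context:
    return {"employee_id": f"E-{ctx['name'].lower()}"}


def finance_agent(ctx: Context) -> Context:
    return {"cost_center": f"CC-{ctx['department'].upper()}"}


def it_agent(ctx: Context) -> Context:
    # Depends on HR's output: the hand-off that siloed bots never make.
    return {"account": f"{ctx['employee_id']}@corp.example"}


def orchestrate(steps: list[tuple[str, Agent]], ctx: Context) -> Context:
    """Run agents in order, merging each result into the context and logging the step."""
    for name, agent in steps:
        ctx = {**ctx, **agent(ctx), "log": [*ctx.get("log", []), name]}
    return ctx


result = orchestrate(
    [("hr", hr_agent), ("finance", finance_agent), ("it", it_agent)],
    {"name": "Ana", "department": "sales"},
)
print(result["account"], result["cost_center"], result["log"])
# E-ana@corp.example CC-SALES ['hr', 'finance', 'it']
```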

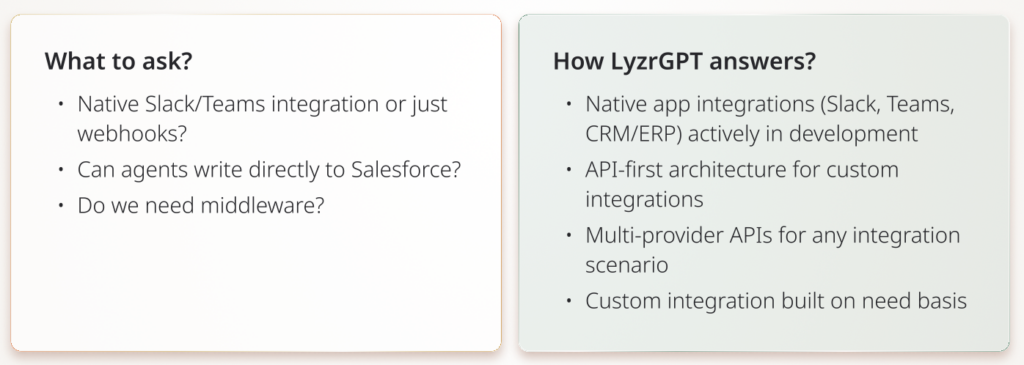

Q17: How deep are your integrations with tools we already use?

If your team has to leave Slack, Teams, Salesforce, or SAP to use AI, usage drops.

The adoption cliff is real.

What ChatGPT Enterprise offers:

Integrations exist but depth varies. Primarily API-based.

AI lives where your people already work.

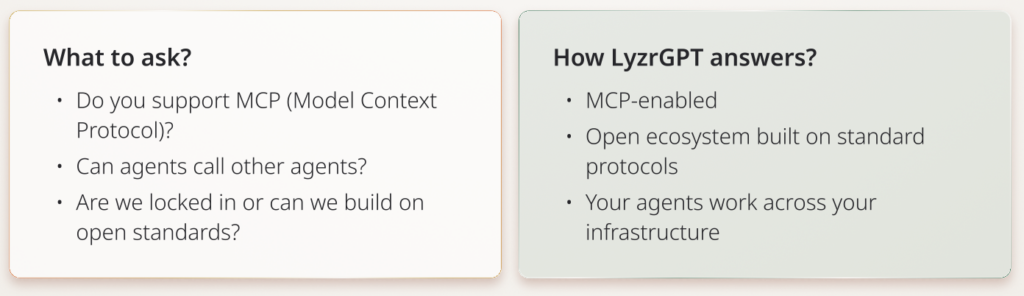

Q18: Are you compatible with other AI frameworks we might use?

Your AI landscape will not be monolithic:

- Some teams might build on LangChain

- Others experiment with CrewAI

- Internal tools might adopt emerging standards like MCP (Model Context Protocol)

If your AI platform cannot talk to these, you are building a walled garden.

What ChatGPT Enterprise offers:

OpenAI API standard. Custom GPTs use an OpenAI-specific format, so agents built there don’t travel well.

Your architecture stays flexible as the AI tooling landscape evolves.

Chapter 5: Outages, Shadow IT, And The Risks You Don’t See On Day One

Finally, we arrive at the part that only shows up once you are live.

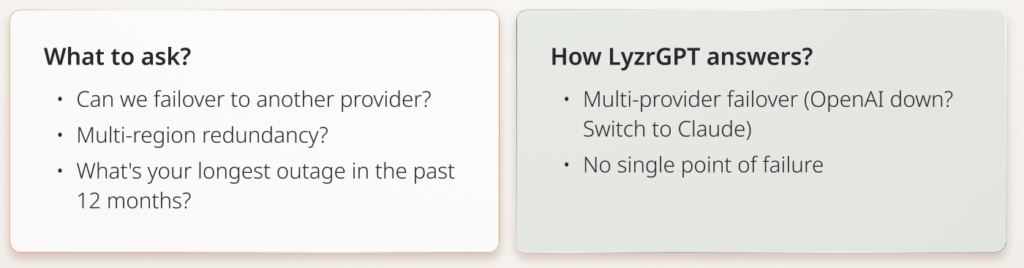

Q19: What happens if OpenAI has an outage?

In November 2024, OpenAI experienced a multi‑hour outage.

For teams fully dependent on a single AI provider, work simply stopped.

Your risk is not theoretical if your entire AI stack is tied to one endpoint.

What ChatGPT Enterprise offers:

99.9% uptime SLA typical for enterprise services.

AI becomes a resilient utility, not a fragile luxury.
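Two quick checks make outage risk concrete. First, a 99.9% SLA still permits roughly 8.76 hours of downtime a year (0.1% of 8,760 hours). Second, resilience comes from having somewhere else to send the request. Below is a sketch of both. The provider names and callables are hypothetical, and a real gateway would add timeouts, retries with backoff, and health checks.

```python
from typing import Callable

HOURS_PER_YEAR = 365 * 24  # 8,760; ignores leap years


def allowed_downtime_hours(sla: float) -> float:
    """Downtime a given uptime SLA still permits over one year."""
    return (1 - sla) * HOURS_PER_YEAR


def call_with_fallback(prompt: str, providers: list[tuple[str, Callable[[str], str]]]) -> tuple[str, str]:
    """Try providers in priority order; return (name, reply) from the first that answers."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except (ConnectionError, TimeoutError) as exc:  # treated as "provider down"
            errors.append(f"{name}: {exc}")
    raise RuntimeError("all providers failed: " + "; ".join(errors))


print(round(allowed_downtime_hours(0.999), 2))  # 8.76


def primary_down(prompt: str) -> str:
    raise ConnectionError("503 Service Unavailable")


print(call_with_fallback("Summarize open tickets", [
    ("primary", primary_down),
    ("secondary", lambda p: f"summary of: {p}"),
]))
# ('secondary', 'summary of: Summarize open tickets')
```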

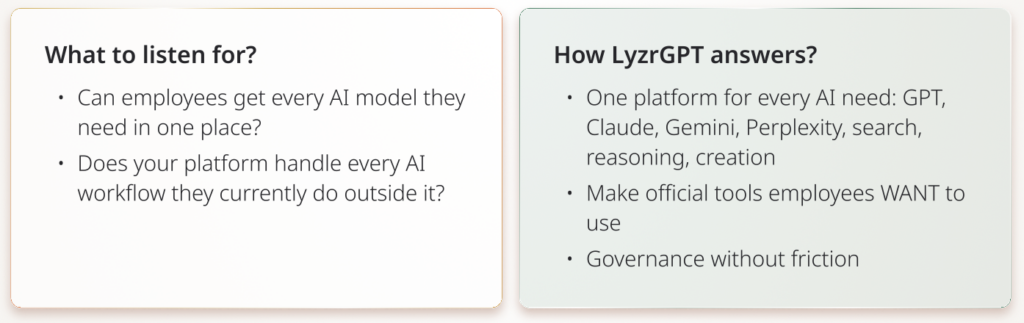

Q20: How do you prevent “shadow AI” where employees bypass official tools?

Studies show ~90% of employees already use consumer ChatGPT at work.

The solution isn’t blocking consumer tools. It’s making your official platform so complete that employees never want to leave.

What ChatGPT Enterprise offers:

Enterprise version with better features than consumer ChatGPT, but limited to OpenAI’s ecosystem.

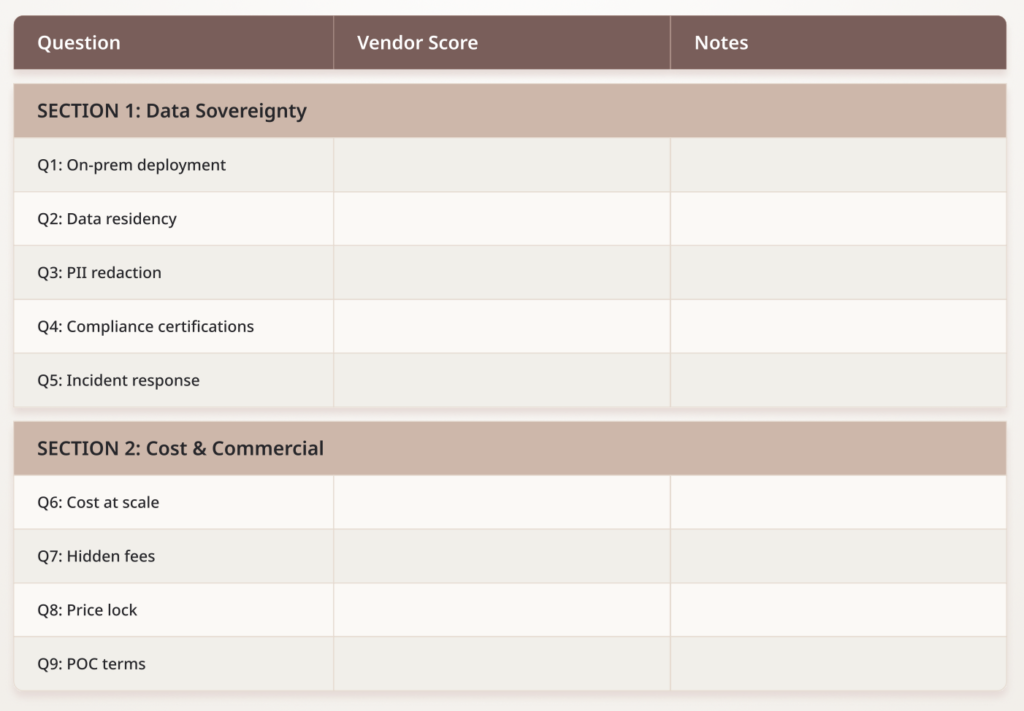

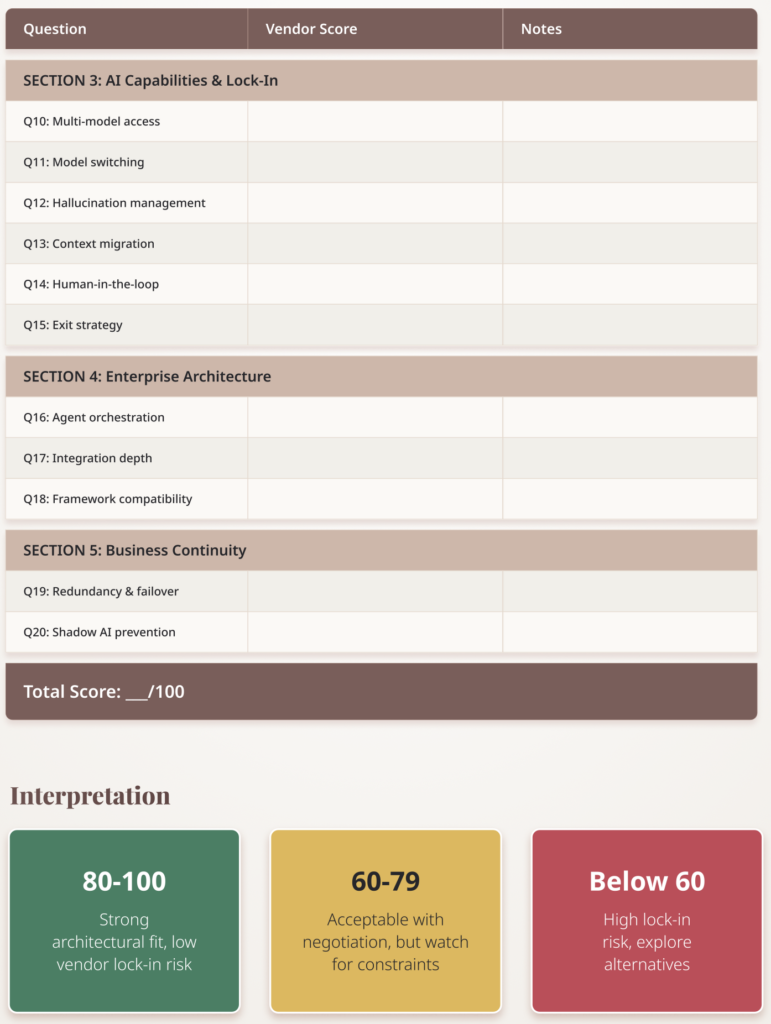

Evaluation Scorecard

Use this to rate vendor responses (1-5 scale)

How ChatGPT Enterprise & LyzrGPT Compare

Next Steps

For CIOs: Use this checklist to compare ChatGPT Enterprise, Microsoft Copilot, Google Gemini Enterprise, and LyzrGPT. Focus on questions 10, 15, 16, 18, 19 (vendor lock-in and architecture).

For CFOs: Pay closest attention to Chapter 2 (cost and commercial terms). The difference between seat-based and consumption-based pricing can be millions of dollars at scale.

For CISOs: Chapter 1 (data sovereignty) is critical. Questions 1-3 determine whether you can meet compliance requirements.

Try before you buy

Experience multi-model AI at chat.lyzr.app

Compare solutions

Book a demo to see how LyzrGPT answers these 20 questions in your specific environment.